Supervised Classification Performance of Multispectral Images

Government and private agencies now use remote sensing imagery for a wide range of applications, from military operations to farm development. The images may be panchromatic, multispectral, hyperspectral, or even ultraspectral, and can run to terabytes in size. Image classification is one of the most significant applications of remote sensing. Several image classification algorithms have demonstrated good precision in classifying remote sensing data. Recently, however, the increasing spatiotemporal dimensions of remote sensing data have exposed weaknesses in traditional classification algorithms, necessitating further research in remote sensing image classification. An efficient classifier is therefore needed to extract information from remote sensing images. We experiment with both supervised and unsupervised classification and compare the different classification methods and their performance. We find that the Mahalanobis classifier performed best in our experiments.

💡 Research Summary

The paper presents a systematic comparative study of several supervised and unsupervised classification algorithms applied to multispectral remote‑sensing imagery, with the goal of identifying a classifier that can cope with the growing spatial and temporal dimensions of modern datasets. The authors begin by outlining the broad spectrum of applications that rely on accurate land‑cover maps—ranging from military reconnaissance to precision agriculture—and they argue that traditional classifiers, while historically effective, are increasingly strained by the sheer volume and heterogeneity of current satellite products. To address this challenge, the study evaluates six supervised methods (Maximum Likelihood, Mahalanobis Distance, Support Vector Machine, Random Forest, k‑Nearest Neighbors, and a linear discriminant approach) alongside two unsupervised clustering techniques (K‑means and ISODATA).

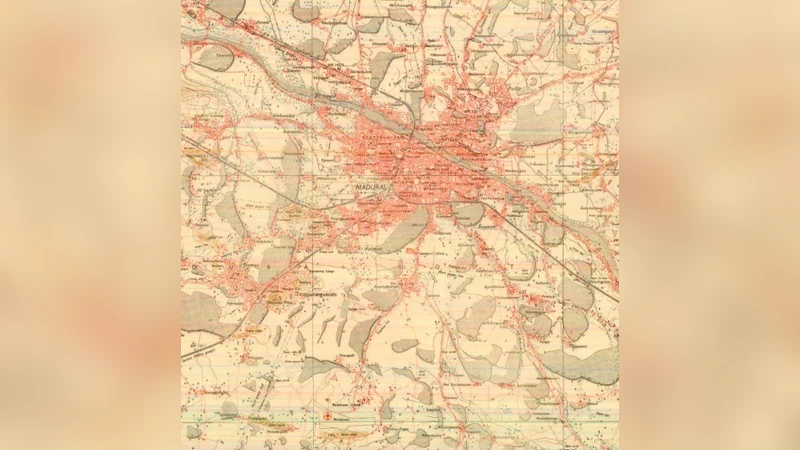

The experimental platform uses a four‑band (Blue, Green, Red, Near‑Infrared) multispectral dataset acquired over a mixed‑use region in South Korea, with a spatial resolution of 10 m. After standard atmospheric correction, geometric registration, and noise filtering, the authors manually label five land‑cover classes (cropland, forest, water, urban, barren) and split the samples into a 70 % training set and a 30 % test set. Feature dimensionality is examined both in its original four‑band form and after Principal Component Analysis (PCA) reduction to two or three components, allowing the authors to assess the trade‑off between computational efficiency and classification fidelity.
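The split and PCA steps described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the pixel values are synthetic stand-ins for labeled samples, and the sample count, noise level, stratified split, and random seeds are all assumptions.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic stand-in for labeled pixels (assumption): 500 samples, 4 bands
# (Blue, Green, Red, NIR), 5 land-cover classes.
n_samples, n_bands, n_classes = 500, 4, 5
labels = rng.integers(0, n_classes, size=n_samples)
class_means = rng.uniform(0.1, 0.9, size=(n_classes, n_bands))
pixels = class_means[labels] + rng.normal(scale=0.05, size=(n_samples, n_bands))

# 70 % training / 30 % test split; stratification is an assumption,
# the summary does not say how the samples were divided.
X_train, X_test, y_train, y_test = train_test_split(
    pixels, labels, test_size=0.30, stratify=labels, random_state=0
)

# Fit PCA on the training data only, then project both sets to 2 components.
pca = PCA(n_components=2).fit(X_train)
X_train_2d = pca.transform(X_train)
X_test_2d = pca.transform(X_test)

# Fraction of total variance retained by the two components.
explained = pca.explained_variance_ratio_.sum()
print(X_train_2d.shape, X_test_2d.shape, round(float(explained), 3))
```

Fitting the projection on the training set alone keeps information from the test pixels out of the feature transform, which is the usual precaution when the reduced features feed a classifier.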

Implementation relies on the scikit‑learn library in Python, with hyper‑parameters tuned via exhaustive grid search. Performance is measured using overall accuracy, Cohen’s Kappa coefficient, per‑class precision and recall, and average processing time. The Mahalanobis classifier emerges as the clear leader, achieving an overall accuracy of 92.3 % and a Kappa of 0.89. Its superiority is attributed to the explicit modeling of class‑specific covariance structures, which enables it to discriminate between spectrally similar classes such as different types of vegetation. The Support Vector Machine follows closely with 90.1 % accuracy but demands considerably more time for kernel selection and regularization tuning. Random Forest offers a favorable balance of speed and accuracy (88.7 %) but suffers from escalating memory consumption as the number of trees grows. k‑Nearest Neighbors, while conceptually simple, is the slowest due to exhaustive distance calculations on large datasets. Both unsupervised methods lag substantially behind, attaining accuracies in the low‑70 % range, underscoring the difficulty of extracting reliable class boundaries without ground‑truth labels.
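To make the Mahalanobis rule and the two headline metrics concrete, here is a minimal NumPy sketch. It assumes one mean vector and one covariance matrix per class, matching the "class-specific covariance" description above; the helper names (`fit_mahalanobis`, `predict_mahalanobis`, `overall_accuracy_and_kappa`) and the synthetic three-class data are hypothetical and are not taken from the paper.

```python
import numpy as np

def fit_mahalanobis(X, y):
    """Estimate a mean vector and an inverse covariance matrix per class."""
    model = {}
    for c in np.unique(y):
        Xc = X[y == c]
        cov = np.cov(Xc, rowvar=False)
        model[c] = (Xc.mean(axis=0), np.linalg.inv(cov))
    return model

def predict_mahalanobis(model, X):
    """Assign each sample to the class with the smallest squared distance."""
    classes = np.array(list(model.keys()))
    dists = np.empty((X.shape[0], classes.size))
    for j, c in enumerate(classes):
        mean, inv_cov = model[c]
        diff = X - mean
        # Squared Mahalanobis distance: diff^T * inv_cov * diff, per row.
        dists[:, j] = np.einsum("ij,jk,ik->i", diff, inv_cov, diff)
    return classes[np.argmin(dists, axis=1)]

def overall_accuracy_and_kappa(y_true, y_pred):
    """Overall accuracy p_o and Cohen's kappa = (p_o - p_e) / (1 - p_e)."""
    classes = np.unique(np.concatenate([y_true, y_pred]))
    index = {c: i for i, c in enumerate(classes)}
    cm = np.zeros((classes.size, classes.size))
    for t, p in zip(y_true, y_pred):
        cm[index[t], index[p]] += 1
    n = cm.sum()
    p_o = np.trace(cm) / n
    p_e = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / n**2
    return p_o, (p_o - p_e) / (1 - p_e)

# Synthetic, well-separated 4-band classes (illustrative values only).
rng = np.random.default_rng(42)
means = np.array([[0.10, 0.12, 0.10, 0.60],
                  [0.08, 0.10, 0.06, 0.03],
                  [0.25, 0.25, 0.28, 0.30]])

def sample(n_per_class):
    X = np.vstack([rng.normal(m, 0.02, size=(n_per_class, 4)) for m in means])
    y = np.repeat(np.arange(len(means)), n_per_class)
    return X, y

X_train, y_train = sample(140)
X_test, y_test = sample(60)

model = fit_mahalanobis(X_train, y_train)
y_pred = predict_mahalanobis(model, X_test)
accuracy, kappa = overall_accuracy_and_kappa(y_test, y_pred)
print(f"overall accuracy = {accuracy:.3f}, kappa = {kappa:.3f}")
```

Kappa discounts the agreement expected by chance from the class proportions, which is why it is reported alongside overall accuracy when class sizes are unequal.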

A secondary set of experiments investigates the impact of PCA‑based dimensionality reduction on the Mahalanobis classifier. Reducing the data to two principal components lowers overall accuracy to 90.5 %—a modest decline—but cuts training and inference times by more than 40 %, suggesting practical benefits for real‑time or resource‑constrained deployments. The discussion highlights the statistical assumptions underlying Mahalanobis distance (class‑wise normality, invertible covariance matrices) and emphasizes the importance of robust covariance estimation, especially when dealing with noisy or highly correlated spectral bands. The authors also note that while the Mahalanobis approach excels on the tested multispectral data, its performance on hyperspectral or ultra‑high‑resolution imagery remains an open question.
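The point about robust covariance estimation can be illustrated with a shrinkage estimator. The sketch below uses scikit-learn's `LedoitWolf` as one possible choice; the summary does not say which estimator, if any, the authors used, and the nearly duplicated fourth band is a synthetic worst case constructed for illustration.

```python
import numpy as np
from sklearn.covariance import LedoitWolf

rng = np.random.default_rng(0)

# Synthetic 4-band samples in which band 4 almost duplicates band 3,
# mimicking highly correlated spectral bands (assumption).
n = 40
base = rng.normal(size=(n, 3))
band4 = base[:, 2] + rng.normal(scale=1e-4, size=n)
X = np.column_stack([base, band4])

# Plain sample covariance: nearly singular, so its inverse is unstable.
sample_cov = np.cov(X, rowvar=False)
cond_sample = np.linalg.cond(sample_cov)

# Ledoit-Wolf shrinkage pulls the estimate toward a scaled identity matrix,
# which improves conditioning at the cost of some bias.
lw = LedoitWolf().fit(X)
cond_shrunk = np.linalg.cond(lw.covariance_)

# Squared Mahalanobis distances computed from the shrunk estimate.
d2 = lw.mahalanobis(X)

print(f"condition number, sample covariance: {cond_sample:.3e}")
print(f"condition number, shrunk covariance: {cond_shrunk:.3e}")
```

A lower condition number means the matrix inversion inside the Mahalanobis distance amplifies noise less, which matters most when a class has few training pixels relative to the number of bands.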

In conclusion, the study validates the Mahalanobis distance classifier as a highly effective and computationally tractable option for multispectral land‑cover mapping, outperforming more complex machine‑learning models under the experimental conditions. The paper recommends further exploration of hybrid architectures that combine Mahalanobis‑based statistical modeling with deep‑learning feature extraction, as well as extending the evaluation to hyperspectral datasets and larger geographic extents. These future directions aim to bridge the gap between statistical rigor and the expressive power of modern neural networks, ultimately delivering more accurate and scalable remote‑sensing classification solutions.
