A Survey of Naïve Bayes Machine Learning approach in Text Document Classification

Text document classification aims to associate a document with one or more predefined categories based on the likelihood suggested by a training set of labeled documents. Many machine learning algorithms play a vital role in training such systems on predefined categories. Among them, Naïve Bayes is intriguing because it is simple, easy to implement, and achieves good accuracy on large datasets despite its naïve independence assumption. Given the importance of the Naïve Bayes machine learning approach, this study examines it for text document classification together with the available statistical event models. The survey also discusses and compares various feature selection methods, along with the metrics used in text document classification.

💡 Research Summary

The paper presents a comprehensive survey of the Naïve Bayes (NB) machine-learning approach for text document classification. It begins by positioning text classification within the broader landscape of machine-learning tasks such as information retrieval, spam filtering, and text mining, noting that traditional manual categorisation is labor-intensive. It then lists six classical families of classifiers: Rocchio, K-Nearest Neighbour, regression, Bayesian (including NB and Bayesian networks), decision trees, and rule-based systems. Among these, NB stands out for its simplicity, ease of implementation, and surprisingly strong performance on large corpora despite its strong independence assumption.

The core technical discussion focuses on two generative event models that embody the NB assumption:

- Multivariate Bernoulli Model – Documents are represented as binary vectors indicating the presence or absence of each vocabulary term. The probability of a document given a class is the product of the individual word-presence probabilities, including the probability of non-occurrence for absent words. Parameters are estimated by maximum likelihood or Bayesian MAP estimation with Laplace (add-one) smoothing. Because this model ignores term frequency, it is less suitable when word counts carry discriminative information.

- Multinomial Model – Documents are treated as “bags of words” whose term counts follow a multinomial distribution. The likelihood of a document is the multinomial probability of its observed term counts, again with Laplace smoothing (α = 1). This model captures frequency information and generally outperforms the Bernoulli variant in empirical studies.
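For reference, the two likelihoods and their add-one estimates can be written as follows. The notation is ours and may differ from the paper's:

- $V$ is the vocabulary.
- For a document $d$, $b_t \in \{0,1\}$ indicates whether term $t$ occurs, and $n_t$ counts its occurrences.
- $N_c$ is the number of training documents in class $c$ (out of $N$ in total). $N_{c,t}$ is the number of those documents containing $t$, and $T_{c,t}$ is the total count of $t$ across them.

Under either model, a document is assigned to $\arg\max_c P(c)\,P(d \mid c)$, with $P(c)$ estimated as $N_c / N$.

$$
\text{Bernoulli:}\quad P(d \mid c) = \prod_{t \in V} \Big[ b_t\, \hat\theta_{t \mid c} + (1 - b_t)\,\big(1 - \hat\theta_{t \mid c}\big) \Big], \qquad \hat\theta_{t \mid c} = \frac{N_{c,t} + 1}{N_c + 2}
$$

$$
\text{Multinomial:}\quad P(d \mid c) \propto \prod_{t \in V} \hat\phi_{t \mid c}^{\,n_t}, \qquad \hat\phi_{t \mid c} = \frac{T_{c,t} + 1}{\sum_{t' \in V} T_{c,t'} + |V|}
$$

The multinomial form omits the multinomial coefficient and the document-length probability. Neither depends on the class (assuming, as is usual, that length is independent of class), so dropping them does not change the arg max.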

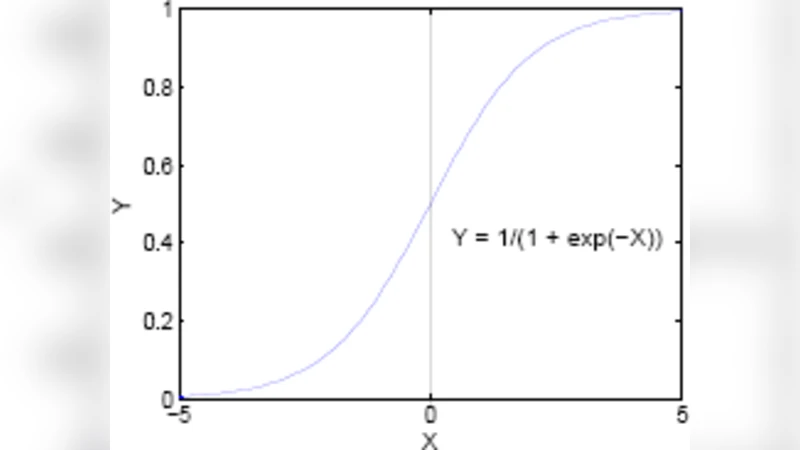
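The two event models summarized above can be sketched from scratch as follows. This is a minimal illustration, not the surveyed paper's implementation. The function names (`train_nb`, `log_score`, `predict`) and the tiny spam/ham corpus are hypothetical. Both models use add-one smoothing and score in log space to avoid underflow.

```python
import math
from collections import Counter

def train_nb(docs, labels, model="multinomial"):
    """Estimate class priors and add-one (Laplace) smoothed term probabilities.

    docs: list of token lists; labels: one class name per document.
    model: "bernoulli" (term presence/absence) or "multinomial" (term counts).
    """
    vocab = sorted({t for d in docs for t in d})
    priors, cond = {}, {}
    for c in sorted(set(labels)):
        class_docs = [d for d, y in zip(docs, labels) if y == c]
        priors[c] = len(class_docs) / len(docs)
        if model == "bernoulli":
            # Fraction of class-c documents containing t: (N_ct + 1) / (N_c + 2).
            df = Counter(t for d in class_docs for t in set(d))
            cond[c] = {t: (df[t] + 1) / (len(class_docs) + 2) for t in vocab}
        else:
            # Share of class-c tokens equal to t: (T_ct + 1) / (sum_t' T_ct' + |V|).
            tf = Counter(t for d in class_docs for t in d)
            total = sum(tf.values())
            cond[c] = {t: (tf[t] + 1) / (total + len(vocab)) for t in vocab}
    return vocab, priors, cond

def log_score(doc, c, vocab, priors, cond, model="multinomial"):
    """log P(c) + log P(d | c); the multinomial score drops class-independent factors."""
    score = math.log(priors[c])
    if model == "bernoulli":
        present = set(doc)
        for t in vocab:  # every vocabulary term contributes, present or absent
            p = cond[c][t]
            score += math.log(p if t in present else 1.0 - p)
    else:
        for t, n in Counter(doc).items():
            if t in cond[c]:  # tokens unseen in training are skipped
                score += n * math.log(cond[c][t])
    return score

def predict(doc, vocab, priors, cond, model="multinomial"):
    """Return the class with the highest log score."""
    return max(priors, key=lambda c: log_score(doc, c, vocab, priors, cond, model))

# Tiny made-up corpus, for illustration only.
docs = [["cheap", "pills", "cheap"], ["meeting", "agenda"],
        ["cheap", "offer"], ["project", "meeting", "notes"]]
labels = ["spam", "ham", "spam", "ham"]

for model in ("bernoulli", "multinomial"):
    params = train_nb(docs, labels, model)
    print(model, predict(["cheap", "meeting", "cheap"], *params, model=model))
```

The Bernoulli score walks the whole vocabulary, because the absence of a term is also evidence. The multinomial score only touches the tokens that occur in the document and weights each one by its count, which is how it captures frequency information.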

The paper also addresses extensions for continuous features (Gaussian NB) and contrasts NB with logistic regression. It notes that logistic regression provides a linear decision boundary but, being a discriminative model, lacks the generative probabilistic interpretation inherent in Bayesian approaches.
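Gaussian NB replaces the discrete term probabilities with a per-class normal density for each continuous feature. The sketch below is a minimal, hypothetical version (`fit_gaussian_nb`, `predict_gaussian_nb`) using maximum-likelihood means and variances. The `var_floor` parameter is our own addition to avoid division by zero; it is not taken from the paper.

```python
import math
from collections import defaultdict

def fit_gaussian_nb(X, y, var_floor=1e-9):
    """Per class: prior, per-feature mean and (maximum-likelihood) variance."""
    rows_by_class = defaultdict(list)
    for row, label in zip(X, y):
        rows_by_class[label].append(row)
    model = {}
    for c, rows in rows_by_class.items():
        n, d = len(rows), len(rows[0])
        means = [sum(r[j] for r in rows) / n for j in range(d)]
        # var_floor (an assumption) keeps a constant feature from giving zero variance.
        variances = [max(sum((r[j] - means[j]) ** 2 for r in rows) / n, var_floor)
                     for j in range(d)]
        model[c] = (n / len(X), means, variances)
    return model

def predict_gaussian_nb(model, x):
    """Pick argmax_c of log P(c) + sum_j log N(x_j; mean_cj, var_cj)."""
    def log_joint(c):
        prior, means, variances = model[c]
        total = math.log(prior)
        for xj, mu, var in zip(x, means, variances):
            total += -0.5 * math.log(2 * math.pi * var) - (xj - mu) ** 2 / (2 * var)
        return total
    return max(model, key=log_joint)

# Made-up two-feature data with two well-separated classes.
X = [[1.0, 2.1], [1.2, 1.9], [0.9, 2.0], [3.0, 0.5], [3.2, 0.7], [2.9, 0.4]]
y = ["a", "a", "a", "b", "b", "b"]
model = fit_gaussian_nb(X, y)
print(predict_gaussian_nb(model, [1.1, 2.0]), predict_gaussian_nb(model, [3.1, 0.6]))
```

The independence assumption again lets the class-conditional log density factor into a sum of one-dimensional terms, one per feature.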

Further, the survey explores hybridizations that aim to mitigate NB’s weaknesses:

- Boosting (Active Learning) – NB weak learners are trained iteratively on re-weighted data that emphasizes previously misclassified instances, improving robustness to noise. Experiments on 15 UCI datasets show that an entropy-based pre-discretization step helps on continuous attributes.

- Probability Estimation Trees (PET) – Decision‑tree structures are used to estimate conditional probabilities more finely. While PET can improve shallow‑depth performance, it suffers from reduced model transparency and potential over‑fitting.

- Maximum Entropy (MaxEnt) Integration – Coupling NB with a MaxEnt model for Base Noun Phrase (BaseNP) identification yields about 93% precision and recall. This illustrates that shallow parsing can substantially boost classification accuracy.
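The boosting idea in the first item above can be sketched as follows. This is a hypothetical sketch, assuming AdaBoost-style reweighting with multinomial NB as the weak learner. Document weights are folded into the term counts and kept summing to the number of documents, so the first round is ordinary NB. The helper names are ours, and the entropy-based pre-discretization from the cited experiments is not shown.

```python
import math
from collections import Counter, defaultdict

def fit_weighted_mnb(docs, labels, weights, vocab):
    """Multinomial NB in which each document's counts are scaled by its weight."""
    class_weight, tf = defaultdict(float), defaultdict(Counter)
    for d, y, w in zip(docs, labels, weights):
        class_weight[y] += w
        for t in d:
            tf[y][t] += w
    total_weight = sum(class_weight.values())
    model = {}
    for y in class_weight:
        total = sum(tf[y].values())
        probs = {t: (tf[y][t] + 1) / (total + len(vocab)) for t in vocab}
        model[y] = (math.log(class_weight[y] / total_weight), probs)
    return model

def predict_mnb(model, doc):
    def score(y):
        log_prior, probs = model[y]
        return log_prior + sum(math.log(probs[t]) for t in doc if t in probs)
    return max(model, key=score)

def adaboost_nb(docs, labels, rounds=5):
    """AdaBoost-style ensemble of weighted multinomial NB learners."""
    vocab = sorted({t for d in docs for t in d})
    n = len(docs)
    weights = [1.0] * n  # kept summing to n, so round 1 is ordinary NB
    ensemble = []
    for _ in range(rounds):
        model = fit_weighted_mnb(docs, labels, weights, vocab)
        wrong = [predict_mnb(model, d) != y for d, y in zip(docs, labels)]
        err = sum(w for w, bad in zip(weights, wrong) if bad) / n
        if err == 0:
            ensemble.append((1.0, model))  # perfect on training data: stop
            break
        if err >= 0.5:
            break  # no better than chance: stop adding learners
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, model))
        # Up-weight misclassified documents, down-weight the rest, renormalise.
        weights = [w * math.exp(alpha if bad else -alpha) for w, bad in zip(weights, wrong)]
        scale = n / sum(weights)
        weights = [w * scale for w in weights]
    return ensemble

def predict_boosted(ensemble, doc):
    """Weighted vote of the NB learners (assumes a non-empty ensemble)."""
    votes = defaultdict(float)
    for alpha, model in ensemble:
        votes[predict_mnb(model, doc)] += alpha
    return max(votes, key=votes.get)

# Tiny made-up corpus, for illustration only.
docs = [["cheap", "pills", "cheap"], ["meeting", "agenda"],
        ["cheap", "offer"], ["project", "meeting", "notes"]]
labels = ["spam", "ham", "spam", "ham"]
ensemble = adaboost_nb(docs, labels)
print([predict_boosted(ensemble, d) for d in docs])
```

Because NB accepts fractional counts, reweighting needs no resampling. Each round simply refits the counts under the new document weights.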

The authors devote a section to data characteristics that affect NB performance. They emphasize that NB works well when features are either nearly independent (as the model assumes) or functionally dependent, but that its accuracy deteriorates in intermediate cases. For the “zero-Bayes-risk” case in which one class contains a single example, a theoretical proof shows that NB is optimal for two-class nominal problems. Monte-Carlo simulations further indicate that the entropy of the class-conditional marginals predicts NB error better than the mutual information between features does.
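The two quantities compared in that analysis can be computed for a small discrete distribution as shown below. This follows our own reading, not necessarily the surveyed study's definitions:

- "Entropy of class-conditional marginals" is taken as $H(X_1 \mid C)$.
- Feature dependence is taken as the conditional mutual information $I(X_1; X_2 \mid C)$.

The distributions are made up, and the Monte-Carlo setup is not reproduced.

```python
import math

def entropy(probs):
    """Shannon entropy (bits) of a discrete distribution given as probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def marginals(joint):
    """Split P(x1, x2) into the marginals P(x1) and P(x2)."""
    p1, p2 = {}, {}
    for (a, b), p in joint.items():
        p1[a] = p1.get(a, 0.0) + p
        p2[b] = p2.get(b, 0.0) + p
    return p1, p2

def class_conditional_entropy(joint_by_class, prior):
    """H(X1 | C): entropy of feature X1's per-class marginal, averaged over classes."""
    return sum(prior[c] * entropy(marginals(j)[0].values())
               for c, j in joint_by_class.items())

def conditional_mutual_information(joint_by_class, prior):
    """I(X1; X2 | C) in bits: distance from class-conditional independence."""
    total = 0.0
    for c, joint in joint_by_class.items():
        p1, p2 = marginals(joint)
        total += prior[c] * sum(p * math.log2(p / (p1[a] * p2[b]))
                                for (a, b), p in joint.items() if p > 0)
    return total

prior = {"pos": 0.5, "neg": 0.5}
# X1 and X2 independent within each class (joint = product of marginals).
independent = {"pos": {(0, 0): 0.24, (0, 1): 0.56, (1, 0): 0.06, (1, 1): 0.14},
               "neg": {(0, 0): 0.05, (0, 1): 0.05, (1, 0): 0.45, (1, 1): 0.45}}
# X2 is an exact copy of X1 within each class (functional dependence).
copied = {"pos": {(0, 0): 0.9, (1, 1): 0.1}, "neg": {(0, 0): 0.2, (1, 1): 0.8}}

for name, dist in (("independent", independent), ("copied", copied)):
    print(name, round(class_conditional_entropy(dist, prior), 3),
          round(conditional_mutual_information(dist, prior), 3))
```

The two settings show the extremes of dependence. In the independent case, the conditional mutual information is zero. When one feature copies the other, it equals $H(X_1 \mid C)$.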

The experimental evaluation uses standard corpora (20 Newsgroups, Reuters-21578) and a suite of UCI datasets. The results show that:

- On large datasets, the multinomial NB achieves high micro‑ and macro‑F1 scores. Variants such as SRF (Smoothed Random Forest) with λ = 0.2 outperform the baseline multinomial NB, while differences between token‑based and unique‑term‑based variants are minimal.

- On small datasets, plain NB suffers from under‑fitting; combining NB with SVM, neural networks, or decision trees yields noticeable gains.

- The authors also discuss precision, recall, and F‑measure formulas, and present a comparative analysis of micro‑ versus macro‑averaged F1, noting that macro‑F1 benefits more from normalization in imbalanced category settings.
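The metrics above use the standard definitions:

- Precision $P = TP/(TP+FP)$.
- Recall $R = TP/(TP+FN)$.
- $F_1 = 2PR/(P+R)$.

Micro-averaging pools the counts over all categories before computing one $F_1$. Macro-averaging computes $F_1$ per category and takes the unweighted mean. The sketch below (the function name `micro_macro_f1` is hypothetical) assumes single-label predictions. The toy data are made up to show how the two averages diverge under class imbalance.

```python
from collections import Counter

def micro_macro_f1(y_true, y_pred):
    """Return (micro-F1, macro-F1) for single-label multi-class predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted category gains a false positive
            fn[t] += 1  # true category gains a false negative

    def f1(tp_, fp_, fn_):
        precision = tp_ / (tp_ + fp_) if tp_ + fp_ else 0.0
        recall = tp_ / (tp_ + fn_) if tp_ + fn_ else 0.0
        return 2 * precision * recall / (precision + recall) if precision + recall else 0.0

    # Micro: pool TP/FP/FN over all categories, then compute a single F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute F1 per category, then take the unweighted mean.
    macro = sum(f1(tp[c], fp[c], fn[c]) for c in labels) / len(labels)
    return micro, macro

# Imbalanced toy example: a classifier that always predicts the majority category.
y_true = ["a"] * 8 + ["b"] * 2
y_pred = ["a"] * 10
print(micro_macro_f1(y_true, y_pred))
```

In the single-label case, micro-averaged precision, recall, and $F_1$ all reduce to accuracy (0.8 here). Macro-$F_1$ drops to about 0.44 because the ignored minority category counts as much as the majority one.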

In conclusion, the survey affirms that Naïve Bayes remains a cornerstone of text classification thanks to its computational efficiency and solid baseline performance, especially when paired with appropriate feature selection, smoothing, and hybrid learning techniques. The paper suggests future research directions such as advanced smoothing for high-dimensional sparse data, integration of NB with deep learning architectures, and online learning for streaming text.
