Security issues are crucial in a number of machine learning applications, especially in scenarios dealing with human activity rather than natural phenomena (e.g., information ranking, spam detection, malware detection). In such cases, learning algorithms can be expected to face manipulated data aimed at hampering decision making. Although some previous work has addressed the handling of malicious data in the context of supervised learning, very little is known about the behavior of anomaly detection methods in such scenarios. In this contribution we analyze the performance of a particular method, online centroid anomaly detection, in the presence of adversarial noise. Our analysis addresses the following security-related issues: formalization of the learning and attack processes, derivation of an optimal attack, and analysis of its efficiency and constraints. We derive bounds on the effectiveness of a poisoning attack against centroid anomaly detection under different conditions: bounded and unbounded percentage of traffic, and bounded false positive rate. Our bounds show that whereas a poisoning attack can be effectively staged in the unconstrained case, it can be made arbitrarily difficult (with a strict upper bound on the attacker's gain) if external constraints are properly used. Our experimental evaluation, carried out on real HTTP and exploit traces, confirms the tightness of our theoretical bounds and the practicality of our protection mechanisms.

Deep Dive into Security Analysis of Online Centroid Anomaly Detection.

Machine learning methods have been instrumental in enabling numerous novel data analysis applications. Currently indispensable technologies such as object recognition, user preference analysis and spam filtering, to name only a few, all rely on accurate analysis of massive amounts of data. Unfortunately, the increasing use of machine learning methods brings about a threat of their abuse. A convincing example of this phenomenon is the emails that bypass spam protection tools. Abuse of machine learning can take various forms. A malicious party may affect the training data, for example, when it is gathered from the real operation of a system and cannot be manually verified. Another possibility is to manipulate objects observed by a deployed learning system so as to bias its decisions in favor of an attacker. Yet another way to defeat a learning system is to send a large amount of nonsense data in order to produce an unacceptable number of false alarms and hence force the system's operator to turn it off. Manipulation of a learning system may thus range from simple cheating to complete disruption of its operations.

A potential insecurity of machine learning methods stems from the fact that they are usually not designed with adversarial input in mind. Starting from the mainstream computational learning theory (Vapnik, 1998; Schölkopf and Smola, 2002), a prevalent assumption is that training and test data are generated from the same, fixed but unknown, probability distribution. This assumption obviously does not hold for adversarial scenarios. Furthermore, even the recent work on learning with differing training and test distributions (Sugiyama et al., 2007) is not necessarily appropriate for adversarial input, as in the latter case one must account for a specific worst-case difference.

The most important application field in which robustness of learning algorithms against adversarial input is crucial is computer security. Modern security infrastructures are facing an increasing professionalization of attacks motivated by monetary profit. A wide-scale deployment of insidious evasion techniques, such as encryption, obfuscation and polymorphism, is manifested in an exploding diversity of malicious software observed by security experts. Machine learning methods offer a powerful tool to counter the rapid evolution of security threats. For example, anomaly detection can identify unusual events that potentially contain novel, previously unseen exploits (Wang and Stolfo, 2004; Rieck and Laskov, 2006; Wang et al., 2006; Rieck and Laskov, 2007). Another typical application of learning methods is automatic signature generation, which drastically reduces the time needed for the production and deployment of attack signatures (Newsome et al., 2006; Li et al., 2006). Machine learning methods can also help researchers better understand the design of malicious software by using classification or clustering techniques together with special malware acquisition and monitoring tools (Bailey et al., 2007; Rieck et al., 2008).

In order for machine learning methods to be successful in security applications, and in general in any application where adversarial input may be encountered, they should be equipped with countermeasures against potential attacks. The current understanding of the security properties of learning algorithms is rather patchy. Earlier work in the PAC framework has addressed some scenarios in which training data is deliberately corrupted (Angluin and Laird, 1988; Littlestone, 1988; Kearns and Li, 1993; Auer, 1997; Bschouty et al., 1999). These results, however, are not connected to modern learning algorithms used in classification, regression and anomaly detection problems. On the other hand, several examples of effective attacks have been demonstrated in the context of specific security and spam detection applications (Lowd and Meek, 2005a; Fogla et al., 2006; Fogla and Lee, 2006; Perdisci et al., 2006; Newsome et al., 2006; Nelson et al., 2008), which has motivated recent work on the taxonomization of such attacks (Barreno et al., 2006, 2008). However, it remains largely unclear whether machine learning methods can be protected against adversarial impact.

We believe that an unequivocal answer to the problem of “security of machine learning” does not exist. The security properties cannot be established experimentally, as the notion of security deals with events that do not just happen on average but rather only potentially may happen. Hence, a theoretical analysis of machine learning algorithms for adversarial scenarios is indispensable. It is hard to imagine, however, that such analysis can offer meaningful results for any attack and any circumstances. Hence, to be a useful guide for practical applications of machine learning in adversarial environments, such analysis must address specific attacks against specific learning algorithms. This is precisely the approach followed in this contribution.
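To make the pairing of "a specific attack against a specific learning algorithm" concrete, the following is a minimal sketch of a centroid-based detector and a greedy poisoning loop. It is not the formalization used in this work: the running-mean update, the rule that only accepted points are absorbed, and all names (`OnlineCentroidDetector`, `greedy_poison_point`, `poison_until_accepted`, `radius`, `margin`) are assumptions chosen for illustration.

```python
import math


class OnlineCentroidDetector:
    """Flags a point as anomalous if it lies farther than `radius` from a running mean.

    Assumed model: the centroid is the mean of all points accepted so far.
    """

    def __init__(self, initial_points, radius):
        self.n = len(initial_points)
        dim = len(initial_points[0])
        self.centroid = [sum(p[d] for p in initial_points) / self.n for d in range(dim)]
        self.radius = radius

    def distance(self, x):
        return math.dist(self.centroid, x)

    def is_anomalous(self, x):
        return self.distance(x) > self.radius

    def update(self, x):
        """Absorb x into the running mean only if it is accepted as normal."""
        if self.is_anomalous(x):
            return False
        self.n += 1
        self.centroid = [c + (xi - c) / self.n for c, xi in zip(self.centroid, x)]
        return True


def greedy_poison_point(detector, target, margin=1e-9):
    """Hypothetical attacker move: the point on the segment from the centroid
    toward `target` that lies just inside the acceptance radius."""
    d = detector.distance(target)
    if d <= detector.radius:
        return list(target)
    scale = (detector.radius - margin) / d
    return [c + scale * (t - c) for c, t in zip(detector.centroid, target)]


def poison_until_accepted(detector, target, max_steps=100_000):
    """Inject greedy points until `target` is accepted; return the number injected."""
    steps = 0
    while detector.is_anomalous(target) and steps < max_steps:
        detector.update(greedy_poison_point(detector, target))
        steps += 1
    return steps


detector = OnlineCentroidDetector(
    [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], radius=1.0
)
target = [3.0, 0.5]
was_anomalous = detector.is_anomalous(target)
steps = poison_until_accepted(detector, target)
print(was_anomalous, steps, detector.is_anomalous(target))
```

In this sketch each accepted point shifts the centroid by less than `radius / n`, where `n` is the number of points absorbed so far, so the per-step displacement shrinks as the detector accumulates data. Limiting how many injected points the detector absorbs is one way such an attack could be constrained.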

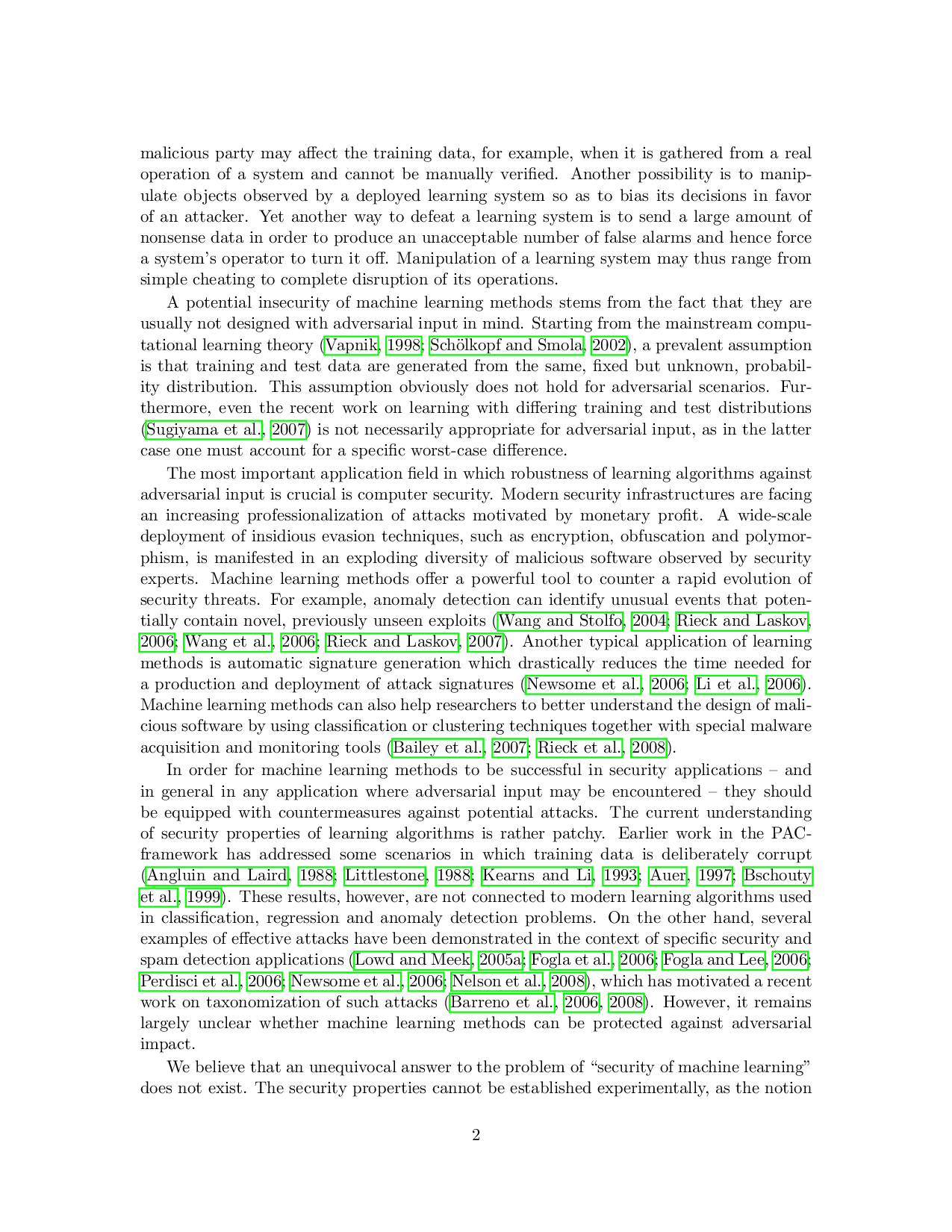

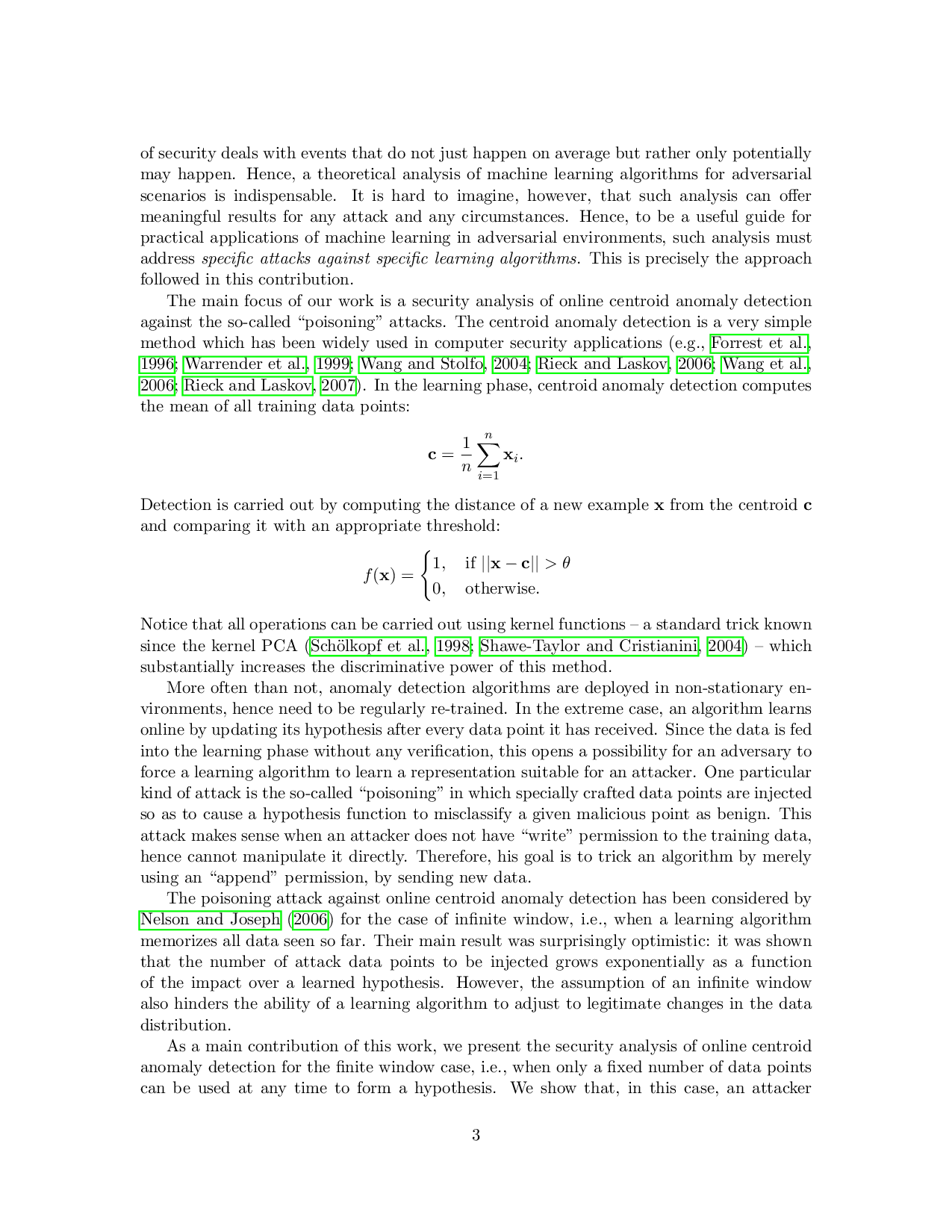

The main focus of our work is

…(Full text truncated)…
