Detecting Weak but Hierarchically-Structured Patterns in Networks

The ability to detect weak distributed activation patterns in networks is critical to several applications, such as identifying the onset of anomalous activity or incipient congestion in the Internet, or faint traces of a biochemical spread observed by a sensor network. This is a challenging problem since weak distributed patterns can be invisible in per-node statistics as well as in a global network-wide aggregate. Most prior work considers situations in which the activation/non-activation of each node is statistically independent, but this is unrealistic in many problems. In this paper, we consider structured patterns arising from statistical dependencies in the activation process. Our contributions are threefold. First, we propose a sparsifying transform that succinctly represents structured activation patterns that conform to a hierarchical dependency graph. Second, we establish that the proposed transform facilitates detection of very weak activation patterns that cannot be detected with existing methods. Third, we show that the structure of the hierarchical dependency graph governing the activation process, and hence the network transform, can be learnt from very few (logarithmic in network size) independent snapshots of network activity.

💡 Research Summary

The paper tackles the problem of detecting extremely weak, spatially distributed activation patterns in large networks—a task that is difficult because such patterns may be invisible both in per‑node statistics and in simple global aggregates. Unlike most prior work that assumes node activations are independent, the authors model the activation process as governed by a hierarchical dependency graph, specifically a tree‑structured probabilistic model in which each node’s activation probability is conditionally dependent on its parent’s state.
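To make the generative model concrete, here is a minimal sketch of drawing one activation snapshot from a tree-structured model. The parameterization is an illustrative assumption, not the paper's exact model: the root activates with probability `p_root`, and every other node activates with probability `p_on` if its parent is active and `p_off` otherwise. The function name `sample_tree_activation` and the parent-array encoding are hypothetical.

```python
import random


def sample_tree_activation(parent, p_root, p_on, p_off, rng):
    """Draw one binary activation snapshot from a tree-structured model.

    parent[i] is the parent of node i (-1 for the root). Nodes are assumed to be
    ordered so that every parent precedes its children. A node activates with
    probability p_on if its parent is active and with probability p_off otherwise.
    """
    x = [0] * len(parent)
    for i, par in enumerate(parent):
        if par < 0:
            prob = p_root
        else:
            prob = p_on if x[par] else p_off
        x[i] = 1 if rng.random() < prob else 0
    return x


# A 7-node binary tree: node 0 is the root, nodes 3..6 are the deepest level.
parent = [-1, 0, 0, 1, 1, 2, 2]
snapshot = sample_tree_activation(parent, 0.1, 0.9, 0.01, random.Random(0))
print(snapshot)
```

With `p_on` close to 1 and `p_off` close to 0, activity tends to appear in whole subtrees, which is the kind of hierarchically structured pattern the rest of the paper exploits.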

The first major contribution is a sparsifying transform that is tailored to this hierarchical structure. The transform operates level‑by‑level on the tree: at each level it computes differences between a parent node and the aggregate of its children, effectively projecting the original N‑dimensional activation vector onto a basis that concentrates the energy of any structured pattern into a small number of large coefficients. In the ideal case, only the sub‑trees that actually contain activated nodes generate non‑zero coefficients, so the transformed representation is highly sparse (O(log N) non‑zeros).
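The summary does not spell out the exact transform, so the following is a minimal sketch under an assumption: it uses an unbalanced Haar-style transform on a binary hierarchy, a common choice that may differ in detail from the paper's construction. Each internal node with child subtrees of sizes nL and nR contributes sqrt(nL·nR/(nL+nR)) · (mean of x over the left subtree minus mean over the right subtree), and one extra coefficient holds the global sum divided by sqrt(N). This scaling makes the basis orthonormal. The nested-tuple tree encoding and the helper names are hypothetical.

```python
import math


def leaves(tree):
    """Leaf (network-node) indices under a tree encoded as nested 2-tuples."""
    if isinstance(tree, int):
        return [tree]
    left, right = tree
    return leaves(left) + leaves(right)


def balanced_tree(lo, hi):
    """Hypothetical helper: balanced binary hierarchy over node indices lo..hi-1."""
    if hi - lo == 1:
        return lo
    mid = (lo + hi) // 2
    return (balanced_tree(lo, mid), balanced_tree(mid, hi))


def haar_coefficients(tree, x):
    """Unbalanced Haar-style coefficients of the activation vector x.

    Output: one scaling coefficient (sum of x over all nodes / sqrt(N)), then one
    detail coefficient per internal node in pre-order, comparing the mean of x over
    the left child subtree with the mean over the right child subtree.
    """
    all_nodes = leaves(tree)
    coeffs = [sum(x[i] for i in all_nodes) / math.sqrt(len(all_nodes))]

    def visit(node):
        if isinstance(node, int):
            return  # a single network node has no detail coefficient
        left, right = node
        l_idx, r_idx = leaves(left), leaves(right)
        nl, nr = len(l_idx), len(r_idx)
        mean_l = sum(x[i] for i in l_idx) / nl
        mean_r = sum(x[i] for i in r_idx) / nr
        # This scaling gives each basis vector unit norm, so the transform is orthonormal.
        coeffs.append(math.sqrt(nl * nr / (nl + nr)) * (mean_l - mean_r))
        visit(left)
        visit(right)

    visit(tree)
    return coeffs


# Pattern active on the subtree covering nodes 4..7 of a 16-node hierarchy.
x = [1.0 if 4 <= i < 8 else 0.0 for i in range(16)]
c = haar_coefficients(balanced_tree(0, 16), x)
print(sum(1 for v in c if abs(v) > 1e-12))  # 3 non-zero coefficients out of 16
```

For a pattern that is constant on one subtree and zero elsewhere, only the scaling coefficient and the coefficients of that subtree's ancestors can be non-zero, so a balanced tree over N nodes yields at most about log₂ N + 1 non-zeros.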

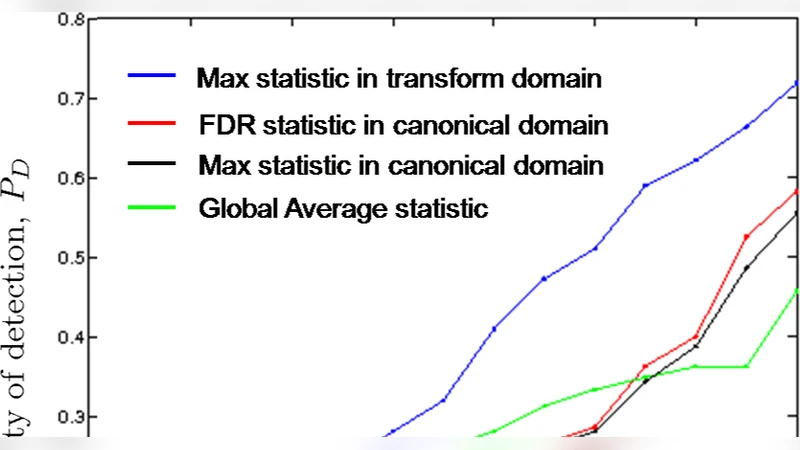

The second contribution is a detection procedure built on the transformed coefficients. The authors propose using either the ℓ₂‑norm or the maximum absolute coefficient as a test statistic. Under the null hypothesis (no activation) the transformed coefficients are pure noise with known variance, while under the alternative a few coefficients are boosted by the hidden pattern. By applying minimax hypothesis‑testing theory, they prove that the proposed test can reliably detect patterns whose per‑node activation probability scales as p = Θ(√(log N / N)), a regime in which traditional methods such as a global sum test or simple scan statistics fail. The analysis includes precise asymptotic expressions for false‑alarm and miss‑detection probabilities, showing that both decay exponentially when p exceeds the derived bound.
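A minimal sketch of the two test statistics, assuming the transformed coefficients under the null are i.i.d. Gaussian with known standard deviation `sigma` (an orthonormal transform of white Gaussian node noise has this property). The thresholds are illustrative assumptions, not the calibrated thresholds from the paper's analysis: sigma·sqrt(2 ln n) for the maximum, a standard level for the largest of n Gaussian noise terms, and a normal approximation to the chi-square distribution for the ℓ₂ energy. The function names are hypothetical.

```python
import math


def max_statistic_test(coeffs, sigma):
    """Reject H0 if the largest |coefficient| exceeds sigma * sqrt(2 ln n)."""
    n = len(coeffs)
    threshold = sigma * math.sqrt(2.0 * math.log(n))
    stat = max(abs(c) for c in coeffs)
    return stat > threshold, stat, threshold


def l2_statistic_test(coeffs, sigma, z=3.0):
    """Reject H0 if the standardized energy of the coefficients exceeds z.

    Under H0, sum(c^2) / sigma^2 is chi-square with n degrees of freedom
    (mean n, variance 2n); z is a cutoff on its normal approximation.
    """
    n = len(coeffs)
    energy = sum(c * c for c in coeffs) / sigma ** 2
    stat = (energy - n) / math.sqrt(2.0 * n)
    return stat > z, stat


# One strong coefficient among 255 small ones (n = 256).
coeffs = [0.1] * 255 + [10.0]
print(max_statistic_test(coeffs, sigma=1.0)[0])  # True
print(l2_statistic_test(coeffs, sigma=1.0)[0])   # False
```

Because the pattern's energy sits in a single coefficient, the maximum statistic flags it, while the ℓ₂ statistic, averaged over 255 near-zero terms, does not. This is why a sparsifying transform pairs naturally with a maximum-type test.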

The third contribution addresses the practical issue that the hierarchical dependency graph is rarely known a priori. The authors show that the graph can be learned from a very small number of independent network snapshots. By estimating the empirical covariance matrix of node activities and applying hierarchical clustering (or a tree‑based graphical model selection algorithm), they reconstruct the tree structure with high probability using only O(log N) samples. This logarithmic sample complexity is dramatically smaller than the O(N) samples typically required for generic high‑dimensional structure learning. The paper provides both theoretical guarantees (consistency of the tree estimator under mild incoherence conditions) and empirical validation on synthetic data and real‑world Internet traffic traces, where the learned trees match the ground‑truth hierarchy with less than 5 % average edge error.
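A minimal sketch of the learning step, assuming greedy average-linkage agglomerative clustering on the empirical covariance matrix. This is one simple instance of hierarchical clustering, not necessarily the paper's exact algorithm. The learned hierarchy is returned as nested 2-tuples of node indices; the function names are hypothetical, and the naive loops are meant for small N only.

```python
def empirical_covariance(snapshots):
    """Sample covariance (1/T normalization) of node activity across T snapshots."""
    t = len(snapshots)
    n = len(snapshots[0])
    means = [sum(s[i] for s in snapshots) / t for i in range(n)]
    cov = [[0.0] * n for _ in range(n)]
    for s in snapshots:
        d = [s[i] - means[i] for i in range(n)]
        for i in range(n):
            for j in range(n):
                cov[i][j] += d[i] * d[j] / t
    return cov


def agglomerative_tree(cov):
    """Repeatedly merge the two clusters with the largest mean cross-covariance.

    Returns a binary tree encoded as nested 2-tuples of node indices.
    """
    clusters = [(i, [i]) for i in range(len(cov))]  # (subtree, member indices)
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                ma, mb = clusters[a][1], clusters[b][1]
                score = sum(cov[i][j] for i in ma for j in mb) / (len(ma) * len(mb))
                if best is None or score > best[0]:
                    best = (score, a, b)
        _, a, b = best
        merged = ((clusters[a][0], clusters[b][0]), clusters[a][1] + clusters[b][1])
        del clusters[b]  # b > a, so deleting b first keeps index a valid
        del clusters[a]
        clusters.append(merged)
    return clusters[0][0]


# Nodes 0 and 1 switch on together, as do nodes 2 and 3.
snapshots = [[1, 1, 0, 0], [0, 0, 1, 1], [1, 1, 1, 1], [0, 0, 0, 0]]
print(agglomerative_tree(empirical_covariance(snapshots)))  # ((0, 1), (2, 3))
```

Nodes that tend to activate together have positive covariance and are merged first, so the learned tree places co-activating nodes in common subtrees.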

Beyond the core results, the authors discuss extensions. The sparsifying transform can be generalized to non‑binary trees or even to more complex directed acyclic graphs, and the detection framework can incorporate time‑varying dependencies by updating the transform coefficients online. They also note that the method is compatible with other statistical models (e.g., Gaussian or Poisson activations) by adjusting the noise model in the transformed domain.

In summary, the paper delivers a unified theory and algorithmic pipeline for (i) representing hierarchically structured weak activation patterns in a sparse basis, (ii) detecting such patterns at signal strengths far below the limits of existing techniques, and (iii) learning the underlying hierarchical dependency graph from only logarithmically many observations. These contributions open new avenues for early‑warning systems in communication networks, sensor arrays, and any large‑scale distributed system where subtle, coordinated anomalies may precede catastrophic events.
