Security Analysis of Online Centroid Anomaly Detection

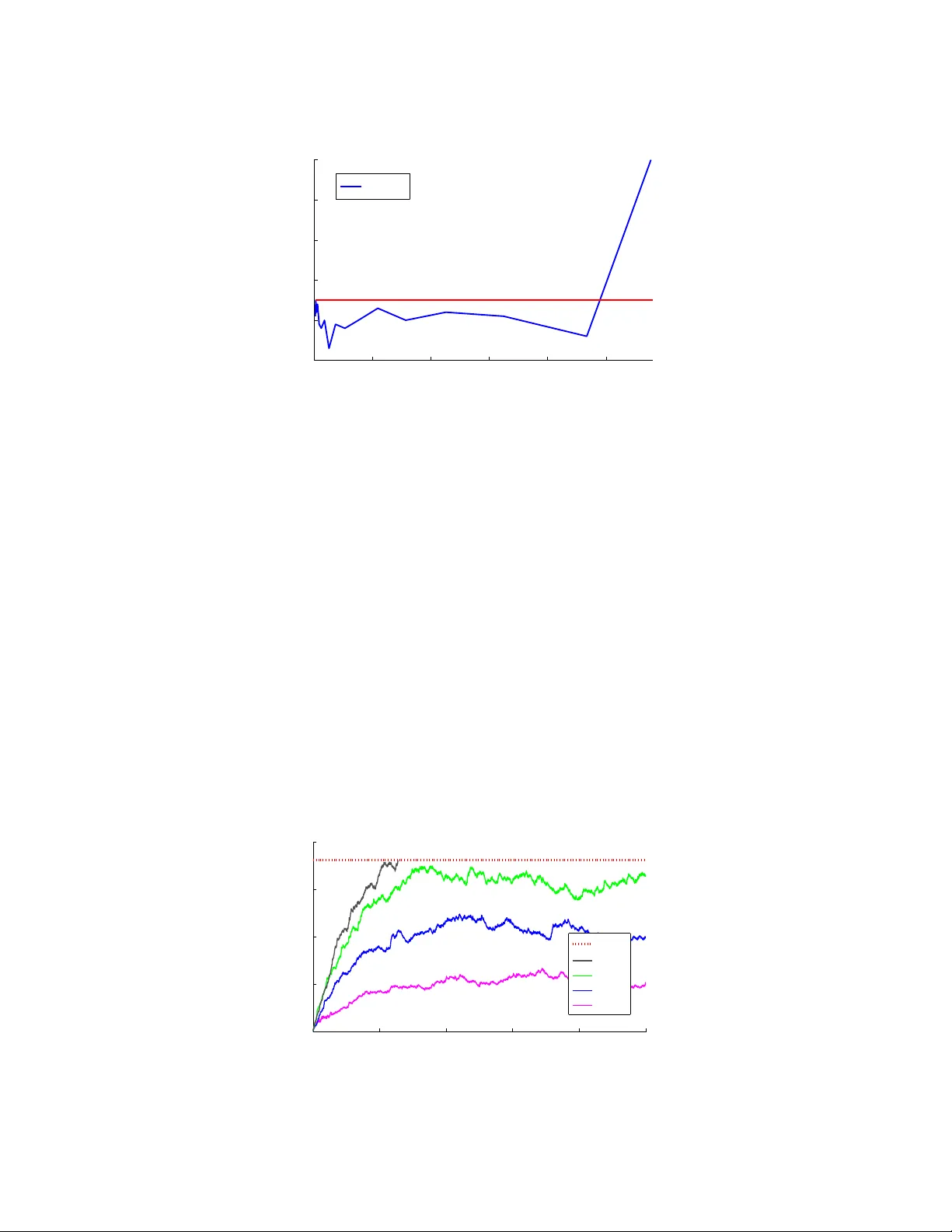

Security issues are crucial in a number of machine learning applications, especially in scenarios dealing with human activity rather than natural phenomena (e.g., information ranking, spam detection, malware detection). It is to be expected in …

Authors: L. Laskov, S. Kloft, C. Krueger