Indisputable facts when implementing spiking neuron networks

In this article, our wish is to demystify some aspects of coding with spike timing, through a simple review of well-understood technical facts regarding spike coding. The goal is to help understand to what extent computing and modelling with spiking neuron networks can be biologically plausible and computationally efficient. In this review we intentionally restrict ourselves to deterministic dynamics, and we consider that the dynamics of the network is defined by a non-stochastic mapping. This allows us to stay in a rather simple framework and to propose a review with concrete numerical values, results and formulas on (i) general time constraints, (ii) links between continuous signals and spike trains, and (iii) spiking network parameter adjustment. When implementing spiking neuron networks, for computational or biological simulation purposes, it is important to take into account the indisputable facts reviewed here. This precaution can prevent implementing mechanisms that are meaningless with regard to obvious time constraints, or introducing spikes artificially when continuous calculations would be sufficient and simpler. It is also pointed out that implementing a spiking neuron network is ultimately a simple task, unless complex neural codes are considered.

💡 Research Summary

The paper presents a concise yet thorough review of the “indisputable facts” that must be taken into account when implementing deterministic spiking neuron networks (SNNs). By deliberately restricting the analysis to non‑stochastic dynamics—i.e., a fixed mapping from inputs to outputs—the authors are able to stay within a mathematically tractable framework while still addressing the core biological constraints that shape any realistic neural model. The review is organized around three pillars: (i) general temporal constraints, (ii) the relationship between continuous signals and spike trains, and (iii) practical guidelines for parameter selection and network stability.

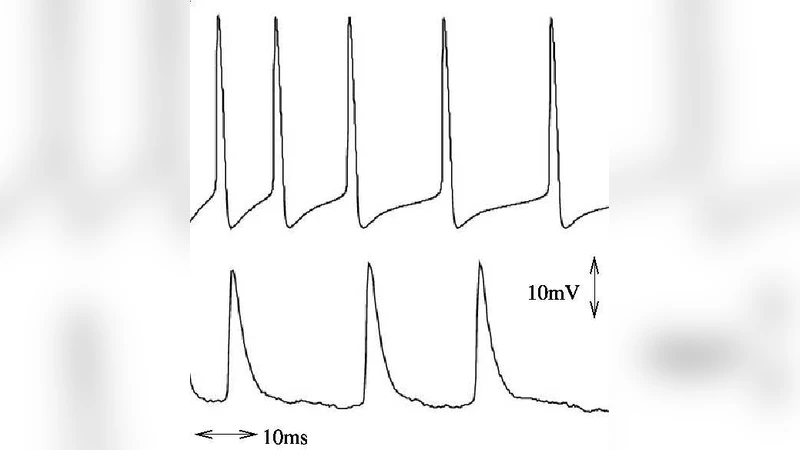

Temporal constraints are the first pillar. The authors enumerate three fundamental time scales that any spiking neuron must respect: (1) the membrane integration time required for the membrane potential to reach threshold (typically 1–5 ms), (2) synaptic transmission delays (0.1–2 ms), and (3) the refractory period after a spike (1–3 ms). From experimental measurements and classic integrate‑and‑fire models they derive a simple rule for choosing the simulation time step: the step must be no larger than the shortest of these intervals, so that delays and refractoriness are still resolved on the simulation grid. Selecting a step that is much smaller inflates computational cost without adding meaningful resolution, while a step that is too large can miss critical synchrony and cause numerical instability.
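The step‑size trade‑off can be made concrete with a small check. This is a minimal sketch, assuming that the simulation grid must resolve the shortest of the three intervals (a step longer than a synaptic delay or a refractory period cannot represent it) and that delays are rounded to whole steps with a minimum of one step. The names `TIMESCALES_MS`, `max_time_step_ms`, `quantize_delay_ms` and `check_time_step` are hypothetical; the ranges are the ones quoted in the paragraph.

```python
# Hypothetical helper names; ranges (low, high) in milliseconds are the ones quoted in the text.
TIMESCALES_MS = {
    "membrane_integration": (1.0, 5.0),
    "synaptic_delay": (0.1, 2.0),
    "refractory_period": (1.0, 3.0),
}


def max_time_step_ms(timescales=TIMESCALES_MS):
    """Largest step that still resolves the shortest interval in any of the ranges."""
    return min(low for low, _high in timescales.values())


def quantize_delay_ms(delay_ms, dt_ms):
    """Round a delay onto the simulation grid; assume at least one step to keep causality."""
    return max(1, round(delay_ms / dt_ms)) * dt_ms


def check_time_step(dt_ms, timescales=TIMESCALES_MS):
    """Return (ok, limit_ms, steps_per_simulated_second) for a candidate step."""
    limit = max_time_step_ms(timescales)
    steps_per_second = round(1000.0 / dt_ms)  # computational cost grows as 1/dt
    return dt_ms <= limit, limit, steps_per_second


if __name__ == "__main__":
    for dt in (0.05, 0.1, 0.5, 1.0):
        ok, limit, cost = check_time_step(dt)
        shortest = quantize_delay_ms(0.1, dt)
        print(f"dt={dt} ms ok={ok} limit={limit} ms steps/s={cost} 0.1 ms delay -> {shortest} ms")
```

Running it shows both sides of the trade‑off: a 1 ms step needs ten times fewer updates than a 0.1 ms step, but it stretches a 0.1 ms delay to a full millisecond.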

The second pillar deals with encoding continuous inputs into spike trains. Two canonical schemes are compared: (a) threshold‑crossing encoding, where each crossing of a predefined voltage threshold generates a spike, and (b) time‑to‑first‑spike (TTFS) encoding, where the latency to the first spike is inversely proportional to input strength. The authors provide analytical expressions linking the average firing rate to the mean input current for the threshold scheme, and a latency‑versus‑input relationship for TTFS. Both are evaluated using an information‑per‑spike metric; they argue that a coding scheme is efficient when it transmits at least 0.5 bits per spike. The analysis shows that threshold‑crossing is computationally cheaper and works well when high firing rates are acceptable, whereas TTFS excels in low‑rate regimes where precise temporal information is essential.
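For a leaky integrate‑and‑fire (LIF) neuron driven by a constant current, both schemes have closed forms. The sketch below uses the textbook LIF solution, which may differ from the paper's own expressions. Measuring potentials from rest, the membrane goes from $V_{\text{reset}}$ to $V_{\text{th}}$ in $\tau_m \ln\frac{RI - V_{\text{reset}}}{RI - V_{\text{th}}}$, which gives the firing rate once a refractory period is added, and the first‑spike latency from rest follows the same formula with $V_{\text{reset}}$ replaced by $0$. For strong inputs this latency approaches $\tau_m V_{\text{th}}/(RI)$, so it becomes inversely proportional to the input. All parameter values and function names are hypothetical.

```python
import math

# Hypothetical parameters: potentials measured relative to rest (V_rest = 0),
# drive R*I in the same units as the threshold, times in seconds.
TAU_M = 0.010     # membrane time constant
V_TH = 1.0        # firing threshold
V_RESET = 0.0     # reset potential
T_REF = 0.002     # refractory period


def time_to_threshold(v_start, drive, tau_m=TAU_M, v_th=V_TH):
    """Time for dV/dt = (drive - V)/tau_m to go from v_start to v_th (inf if never)."""
    if drive <= v_th:
        return math.inf
    return tau_m * math.log((drive - v_start) / (drive - v_th))


def rate_coding(drive, t_ref=T_REF):
    """Threshold-crossing (rate) code: steady firing rate in Hz for a constant drive."""
    isi = time_to_threshold(V_RESET, drive)
    return 0.0 if math.isinf(isi) else 1.0 / (t_ref + isi)


def ttfs_latency(drive):
    """Time-to-first-spike code: latency of the first spike when starting from rest."""
    return time_to_threshold(0.0, drive)


if __name__ == "__main__":
    for drive in (0.5, 1.5, 3.0, 10.0):
        print(f"R*I={drive}: rate={rate_coding(drive):.1f} Hz, "
              f"first-spike latency={ttfs_latency(drive) * 1e3:.3f} ms")
```

With the reset placed at rest, as here, the interspike interval equals the first‑spike latency, so both codes map a constant input through the same function; the rate has to be measured over several spikes, whereas the latency is available after the first one.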

The third pillar focuses on parameter tuning and network stability. Key parameters include synaptic weight ($w$), synaptic delay ($d$), firing threshold ($V_{\text{th}}$), and reset potential ($V_{\text{reset}}$). Using linear stability analysis and Lyapunov exponents, the authors derive a compact inequality that delineates a stable operating region: roughly $w\,(V_{\text{th}}-V_{\text{reset}}) < C$, where $C$ is a constant determined by the intrinsic membrane time constant and the desired firing regime. Crossing this boundary leads to a positive Lyapunov exponent, manifesting as runaway excitation. By providing these mathematically grounded bounds, the paper offers a systematic alternative to trial‑and‑error tuning, which is especially valuable for large‑scale simulations where exhaustive parameter sweeps are infeasible.
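The bound and the Lyapunov criterion can be illustrated separately. The sketch below is a toy under explicit assumptions, not the paper's derivation. `in_stable_region` just evaluates $w\,(V_{\text{th}}-V_{\text{reset}}) < C$ with a user‑supplied $C$. The Lyapunov part uses a hypothetical linearized rate network $x_{t+1} = \big((1-\Delta t/\tau)\,I + W\big)\,x_t$ and estimates its largest Lyapunov exponent Benettin‑style, by iterating a tangent vector and renormalizing it at every step. For a linear map this estimate converges to the logarithm of the spectral radius, so a positive value signals exponential (runaway) growth.

```python
import math


def in_stable_region(w, v_th, v_reset, c):
    """Hypothetical check of the summary's bound w * (V_th - V_reset) < C; C must be supplied."""
    return w * (v_th - v_reset) < c


def toy_jacobian(W, dt_over_tau):
    """Jacobian of the hypothetical linear rate map x -> (1 - dt/tau) x + W x."""
    n = len(W)
    return [[(1.0 - dt_over_tau if i == j else 0.0) + W[i][j] for j in range(n)]
            for i in range(n)]


def largest_lyapunov(A, n_steps=2000):
    """Benettin-style estimate: mean log growth of a repeatedly renormalized tangent vector."""
    n = len(A)
    v = [1.0 / math.sqrt(n)] * n  # normalized starting vector
    total = 0.0
    for _ in range(n_steps):
        v = [sum(a * x for a, x in zip(row, v)) for row in A]  # one step of the linear map
        norm = math.sqrt(sum(x * x for x in v))
        total += math.log(norm)
        v = [x / norm for x in v]  # renormalize to avoid overflow/underflow
    return total / n_steps


def uniform_coupling(n, w):
    """Hypothetical all-to-all excitatory coupling with total weight w onto each neuron."""
    return [[w / n] * n for _ in range(n)]


if __name__ == "__main__":
    for w in (0.05, 0.2):
        lam = largest_lyapunov(toy_jacobian(uniform_coupling(3, w), dt_over_tau=0.1))
        print(f"w={w}: largest Lyapunov exponent per step = {lam:+.4f}")
```

In this toy model the exponent is $\ln(1 - \Delta t/\tau + w)$, so it changes sign once the recurrent weight exceeds the per‑step leak $\Delta t/\tau$; that is the qualitative picture the stability bound describes.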

Beyond the three pillars, the authors argue that many practical applications do not require sophisticated neural codes. When the goal is simply to transmit an analog signal or to implement a control law, the network can be reduced to a rate‑based representation: spikes are counted over a sliding window, low‑pass filtered, and then treated as a continuous signal. This “spike‑to‑rate” conversion eliminates the need for precise spike timing while preserving the functional behavior of the original model. The paper demonstrates that such a reduction can dramatically lower implementation complexity, making SNNs viable for real‑time robotics, neuromorphic hardware with limited resources, or brain‑scale simulations.
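The spike‑to‑rate reduction fits in a few lines. This is a minimal sketch, assuming spike times in seconds, a trailing counting window and a first‑order (exponential) low‑pass filter; the function name `spikes_to_rate` and the default window and filter constants are hypothetical choices, not values from the paper.

```python
import math


def spikes_to_rate(spike_times, t_end, dt=0.001, window=0.05, tau_lp=0.02):
    """Turn spike times (s) into a smoothed rate signal (Hz) sampled every dt seconds."""
    n_steps = int(round(t_end / dt))
    counts = [0] * n_steps
    for t in spike_times:
        k = int(t // dt)  # bin index of this spike
        if 0 <= k < n_steps:
            counts[k] += 1

    win = max(1, int(round(window / dt)))   # window length in bins
    alpha = 1.0 - math.exp(-dt / tau_lp)     # exact update of a first-order low-pass
    running, filtered, rate = 0, 0.0, []
    for k in range(n_steps):
        running += counts[k]                 # add the newest bin
        if k >= win:
            running -= counts[k - win]       # drop the bin that left the window
        raw = running / (win * dt)           # spikes per second in the trailing window
        filtered += alpha * (raw - filtered)
        rate.append(filtered)
    return rate


if __name__ == "__main__":
    # A regular 100 Hz train, spikes placed mid-bin, for one second.
    train = [i * 0.01 + 0.0055 for i in range(100)]
    r = spikes_to_rate(train, t_end=1.0)
    print(f"rate at t = 1 s: {r[-1]:.2f} Hz")
```

The window length and filter constant set the balance between smoothness and lag; once the signal is in this form, downstream processing can be ordinary continuous computation.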

In conclusion, the review consolidates a set of design principles that are both biologically grounded and computationally pragmatic. By insisting on deterministic mappings, the authors sidestep the added complexity of stochastic noise while still respecting the unavoidable temporal limits of real neurons. The presented formulas, numerical ranges, and stability criteria give practitioners concrete tools to avoid “meaningless” mechanisms—such as inserting spikes where a simple continuous calculation would suffice—and to construct SNNs that are both plausible and efficient. The paper’s central message is that, unless one explicitly needs advanced temporal codes, implementing a spiking network is a straightforward engineering task, provided these indisputable facts are kept in mind.
