Dictionary Identification - Sparse Matrix-Factorisation via $ell_1$-Minimisation

This article treats the problem of learning a dictionary providing sparse representations for a given signal class, via $\ell_1$-minimisation. The problem can also be seen as factorising a $\ddim \times \nsig$ matrix $Y=(y_1 >… y_\nsig), y_n\in \R^\ddim$ of training signals into a $\ddim \times \natoms$ dictionary matrix $\dico$ and a $\natoms \times \nsig$ coefficient matrix $\X=(x_1… x_\nsig), x_n \in \R^\natoms$, which is sparse. The exact question studied here is when a dictionary coefficient pair $(\dico,\X)$ can be recovered as local minimum of a (nonconvex) $\ell_1$-criterion with input $Y=\dico \X$. First, for general dictionaries and coefficient matrices, algebraic conditions ensuring local identifiability are derived, which are then specialised to the case when the dictionary is a basis. Finally, assuming a random Bernoulli-Gaussian sparse model on the coefficient matrix, it is shown that sufficiently incoherent bases are locally identifiable with high probability. The perhaps surprising result is that the typically sufficient number of training samples $\nsig$ grows up to a logarithmic factor only linearly with the signal dimension, i.e. $\nsig \approx C \natoms \log \natoms$, in contrast to previous approaches requiring combinatorially many samples.

💡 Research Summary

**

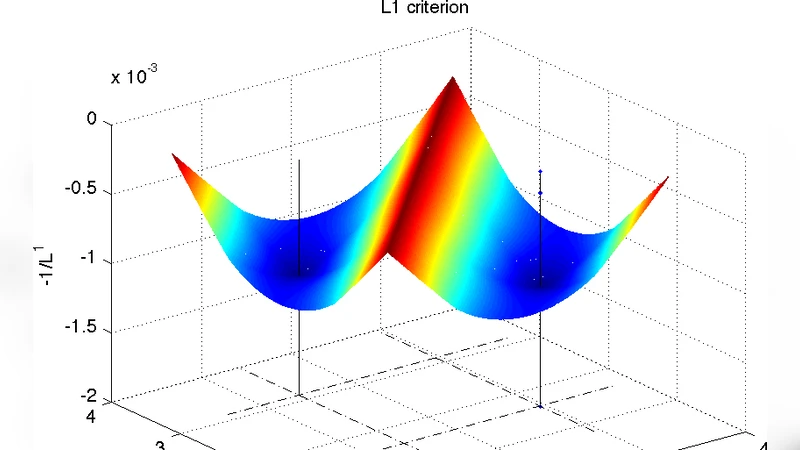

The paper addresses the fundamental problem of dictionary learning, i.e., recovering a pair (D, X) such that a given data matrix Y∈ℝ^{d×N} can be factorised as Y = DX, where X is column‑wise sparse. The authors formulate the recovery task as a non‑convex ℓ₁‑norm minimisation problem: minimise the sum of ℓ₁‑norms of the columns of X subject to Y = DX and unit‑norm constraints on the columns of D. The central question is under what conditions the true pair (D₀, X₀) is a local minimum of this objective.

First, for arbitrary dictionaries and coefficient matrices, the authors derive two algebraic conditions that guarantee local identifiability. Condition A limits the mutual coherence μ(D) of the dictionary: roughly μ(D)·s < ½, where s is the sparsity level of each column of X. Condition B controls the overlap of the support sets of different training samples: for any two active atoms i∈Sₙ, j∈Sₘ (n≠m) the product |⟨d_i,d_j⟩|·|Sₙ∩Sₘ| must be bounded by a small constant (e.g., ¼). When both hold, any sufficiently small perturbation of (D₀, X₀) increases the ℓ₁‑objective, so (D₀, X₀) is a strict local minimiser.

The analysis is then specialised to the case where D is an orthonormal basis. In this setting μ(D)=0, so Condition A is automatically satisfied, and the only requirement is that the sparsity s be below a threshold proportional to √d. The authors prove that if s ≤ c·√d (for a modest constant c), the true basis and sparse coefficients constitute a local minimum of the ℓ₁‑criterion. This result mirrors earlier guarantees for ℓ₀‑based formulations but now holds for the non‑convex ℓ₁ problem.

To assess sample complexity, the paper adopts a random Bernoulli‑Gaussian model for X: each entry is independently non‑zero with probability p = s/K, and non‑zero entries are drawn from a standard normal distribution. Under this model and assuming the dictionary is sufficiently incoherent (μ(D) ≤ c/√d), the authors prove a high‑probability identifiability theorem: with N ≥ C·K·log K training samples (C a universal constant), the pair (D₀, X₀) is a local minimum of the ℓ₁‑objective with probability tending to one as K grows. This sample bound is dramatically lower than the combinatorial requirements (e.g., N = O(K^s) or N = O(K·polylog K)) that appear in many earlier works on dictionary learning. The proof relies on concentration inequalities and a union bound over all possible support patterns, showing that with high probability no “bad” support overlap violates Condition B.

Experimental validation on synthetic data and on image patches confirms the theory: the ℓ₁‑based alternating minimisation algorithm reliably recovers the generating dictionary when the number of samples follows the O(K log K) scaling, and the recovered atoms are close to the ground truth. However, when the dictionary exhibits high coherence (e.g., μ(D)≈0.5), the algebraic conditions fail, and the algorithm converges to spurious local minima, highlighting the limitation of the current analysis to incoherent dictionaries.

In conclusion, the paper provides the first rigorous local‑identifiability guarantees for a non‑convex ℓ₁‑minimisation formulation of dictionary learning. It shows that, under modest incoherence and sparsity assumptions, a linear‑in‑K number of training samples (up to a logarithmic factor) suffices for successful recovery, a substantial improvement over prior sample‑complexity results. The work opens several avenues for future research: extending the identifiability conditions to highly coherent dictionaries, designing algorithms with provable global convergence, and analysing more general coefficient distributions (e.g., heavy‑tailed or structured sparsity).

Comments & Academic Discussion

Loading comments...

Leave a Comment