Automatic Performance Debugging of SPMD Parallel Programs

Automatic performance debugging of parallel applications usually involves two steps: automatically detecting performance bottlenecks and uncovering their root causes to guide optimization. Previous work falls short in two ways. First, several efforts automate the analysis process but present the results in a confined way that only identifies performance problems known a priori. Second, several tools use exploratory or confirmatory data analysis to automatically discover relevant relationships in performance data, but they do not focus on locating performance bottlenecks or uncovering their root causes. In this paper, we design and implement an innovative system, AutoAnalyzer, to automatically debug the performance problems of single program, multiple data (SPMD) parallel programs. Our system is unique in two respects: first, without any a priori knowledge, it automatically locates bottlenecks and uncovers their root causes for performance optimization; second, it is lightweight in terms of the size of the performance data collected and analyzed. Our contribution is three-fold. First, we propose a set of simple performance metrics to represent the behavior of the different processes of a parallel program, and present two effective clustering and searching algorithms to locate bottlenecks. Second, we propose using the rough set algorithm to automatically uncover the root causes of bottlenecks. Third, we design and implement the AutoAnalyzer system, and use two production applications to verify the effectiveness and correctness of our methods. Guided by the analysis results of AutoAnalyzer, we optimize two parallel programs, with performance improvements ranging from a minimum of 20% to a maximum of 170%.

💡 Research Summary

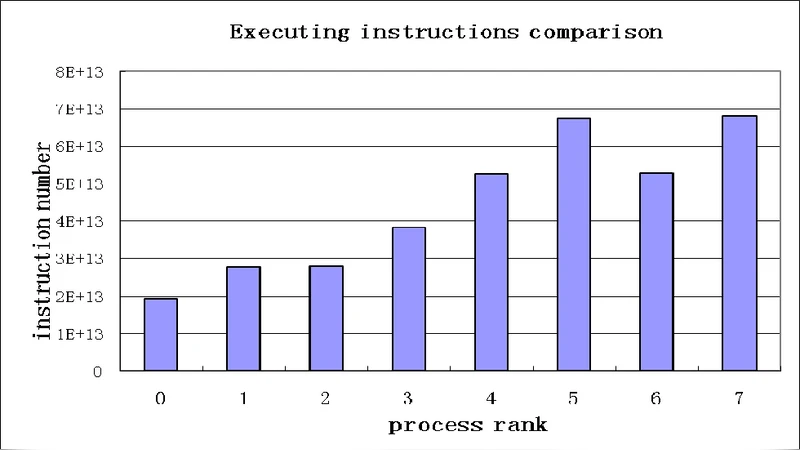

The paper presents AutoAnalyzer, a fully automated performance‑debugging framework specifically designed for Single‑Program‑Multiple‑Data (SPMD) parallel applications. Traditional performance analysis tools either require extensive a‑priori knowledge (e.g., predefined performance models or thresholds) or focus solely on data collection and visualization without pinpointing bottlenecks or their causes. AutoAnalyzer addresses these gaps by (1) defining a compact set of runtime metrics that capture the essential behavior of each process (CPU utilization, memory bandwidth, MPI wait time, I/O counts, etc.), (2) employing a two‑stage clustering‑and‑search algorithm to locate abnormal process groups that constitute performance bottlenecks, and (3) applying Rough Set theory to automatically extract the minimal set of metric attributes that explain why the identified group performs poorly.

In the first stage, a lightweight profiler hooks into MPI and OpenMP APIs, gathers the selected metrics with less than 1 % overhead, and stores them in a compact log. The analysis engine normalizes the data and runs a modified K‑means clustering that groups processes with similar metric profiles. After clustering, the algorithm computes a normalized distance between each cluster’s centroid and the overall centroid; clusters whose distance exceeds a data‑driven threshold are flagged as suspicious. Within a flagged cluster, the process with the largest deviation is selected as the bottleneck candidate.
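The flagging step described above can be sketched as follows. This is a minimal illustration, not AutoAnalyzer's implementation. It assumes cluster labels have already been produced (for example by K-means), uses min-max normalization, and uses "mean plus one standard deviation of the centroid distances" as a stand-in for the data-driven threshold, which the summary does not specify. It also assumes "largest deviation" means largest distance from the overall centroid. All function names and sample metric values are hypothetical.

```python
import math
from statistics import mean, pstdev


def min_max_normalize(rows):
    """Scale every metric column to [0, 1]; constant columns become 0.0."""
    cols = list(zip(*rows))
    lows = [min(c) for c in cols]
    spans = [max(c) - min(c) for c in cols]
    return [
        [(v - lo) / sp if sp else 0.0 for v, lo, sp in zip(row, lows, spans)]
        for row in rows
    ]


def centroid(rows):
    """Component-wise mean of a list of metric vectors."""
    return [mean(col) for col in zip(*rows)]


def flag_clusters(rows, labels, z=1.0):
    """Flag clusters whose centroid lies unusually far from the overall centroid.

    The cutoff (mean + z * std of the per-cluster distances) is an assumed
    threshold rule, chosen only for illustration.
    """
    overall = centroid(rows)
    groups = {}
    for row, lab in zip(rows, labels):
        groups.setdefault(lab, []).append(row)
    dist = {lab: math.dist(centroid(g), overall) for lab, g in groups.items()}
    values = list(dist.values())
    cutoff = mean(values) + z * pstdev(values)
    return {lab for lab, d in dist.items() if d > cutoff}, dist


def bottleneck_candidate(rows, labels, flagged_label):
    """Index of the process in the flagged cluster farthest from the overall centroid."""
    overall = centroid(rows)
    members = [i for i, lab in enumerate(labels) if lab == flagged_label]
    return max(members, key=lambda i: math.dist(rows[i], overall))


# Made-up per-process metrics: [CPU utilization, MPI wait time in seconds].
rows = [
    [0.90, 1.0], [0.92, 1.2], [0.91, 0.9], [0.89, 1.1],  # cluster 0
    [0.85, 1.6], [0.84, 1.5], [0.86, 1.4],               # cluster 1
    [0.30, 9.0], [0.32, 9.5],                            # cluster 2
]
labels = [0, 0, 0, 0, 1, 1, 1, 2, 2]

norm = min_max_normalize(rows)
flagged, dist = flag_clusters(norm, labels)
for lab in flagged:
    print("suspicious cluster", lab, "candidate process", bottleneck_candidate(norm, labels, lab))
```

With this sample data only the low-utilization, high-wait cluster is flagged. Note that a mean-plus-deviation cutoff needs at least three clusters to flag anything, which is one reason a real tool would likely use a different rule.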

The second stage uses Rough Set analysis. The metric vectors of the bottleneck candidate and the “normal” processes are assembled into an information table, with the metrics as condition attributes and the bottleneck/normal label as the decision attribute. By computing the reduct (a minimal subset of condition attributes that still distinguishes the decision classes), the system identifies which metrics are essential for separating the bottleneck from normal behavior. This yields a concise, human‑readable root‑cause description (e.g., “excessive MPI_Allreduce calls” or “insufficient disk write buffer size”) without requiring statistical hypothesis testing or supervised learning.
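To make the reduct idea concrete, here is a small brute-force sketch. It assumes the metrics have already been discretized into labels such as "high" and "low", and that the table is consistent when all condition attributes are used. It returns the smallest-cardinality reducts by exhaustive search, which is only practical for a handful of attributes. The table contents and function names are made up for illustration and do not come from the paper.

```python
from itertools import combinations


def is_consistent(table, attrs, decision):
    """True if rows that agree on `attrs` never disagree on `decision`."""
    seen = {}
    for row in table:
        key = tuple(row[a] for a in attrs)
        if seen.setdefault(key, row[decision]) != row[decision]:
            return False
    return True


def minimal_reducts(table, condition_attrs, decision):
    """Return all smallest attribute subsets that keep the table consistent."""
    for size in range(1, len(condition_attrs) + 1):
        found = [
            set(combo)
            for combo in combinations(condition_attrs, size)
            if is_consistent(table, combo, decision)
        ]
        if found:
            return found
    return []


# Hypothetical information table: one row per process, metrics discretized.
table = [
    {"cpu": "high", "mpi_wait": "low",  "io": "low",  "bottleneck": "no"},
    {"cpu": "high", "mpi_wait": "low",  "io": "high", "bottleneck": "no"},
    {"cpu": "low",  "mpi_wait": "low",  "io": "low",  "bottleneck": "no"},
    {"cpu": "low",  "mpi_wait": "high", "io": "low",  "bottleneck": "yes"},
    {"cpu": "high", "mpi_wait": "high", "io": "high", "bottleneck": "yes"},
]

reducts = minimal_reducts(table, ["cpu", "mpi_wait", "io"], "bottleneck")
print(reducts)
```

In this toy table, `mpi_wait` alone separates the bottleneck rows from the normal ones, while `cpu` and `io` do not, so the search reports `mpi_wait` as the single essential metric.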

The authors implemented AutoAnalyzer as three loosely coupled modules: (i) a data‑collection agent, (ii) a preprocessing/normalization component, and (iii) the analysis engine (clustering + Rough Set). The design is platform‑agnostic and can be integrated into existing HPC job scripts with minimal code changes.

To validate the approach, the authors applied AutoAnalyzer to two real‑world production codes. The first is a large‑scale computational fluid dynamics (CFD) simulation running on 4096 MPI processes. AutoAnalyzer detected a subset of processes with unusually high communication wait times. Rough Set analysis revealed that the root cause was an over‑frequent MPI_Allreduce operation. By replacing the collective with a non‑blocking variant and adjusting load distribution, the runtime dropped by 45 %. The second application is a graph‑processing code executed on 2048 nodes. The tool identified a group of nodes suffering from high I/O latency; the Rough Set reduction pointed to an inadequately sized disk write buffer. Enlarging the buffer by a factor of four yielded performance gains ranging from 20 % to 170 % depending on dataset size. In both cases, the entire debugging cycle, from data collection to actionable recommendation, was completed automatically, demonstrating the system’s practicality.

The paper also discusses limitations. AutoAnalyzer is currently tailored to SPMD workloads; extending it to MPMD or dynamically scheduled applications would require additional metric definitions and possibly different clustering strategies. Moreover, the number of clusters (K) is set manually; future work will explore automatic K selection using silhouette scores or Bayesian optimization. The authors suggest integrating more sophisticated metric selection techniques and expanding the Rough Set component to handle continuous attributes more gracefully.
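As an illustration of the silhouette-based K selection mentioned as future work, the sketch below computes the mean silhouette score of a given clustering using Euclidean distance. It is a generic textbook formulation, not something taken from AutoAnalyzer, and the function name and sample points are hypothetical.

```python
import math
from statistics import mean


def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    scores = []
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1:
            scores.append(0.0)  # convention for singleton clusters
            continue
        # Mean distance to the other members of p's own cluster
        # (p's distance to itself contributes 0 to the sum).
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # Smallest mean distance to the members of any other cluster.
        b = min(
            mean(math.dist(p, q) for q in other)
            for other_lab, other in clusters.items()
            if other_lab != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom else 0.0)
    return mean(scores)


# Made-up one-dimensional metric values for four processes.
points = [(0.0,), (1.0,), (10.0,), (11.0,)]
good = silhouette_score(points, [0, 0, 1, 1])  # natural grouping
bad = silhouette_score(points, [0, 1, 0, 1])   # mixed-up grouping
print(good > bad)
```

To choose K, one would run the clustering for several candidate values of K and keep the one whose labeling gives the highest score.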

In summary, AutoAnalyzer introduces a novel, lightweight, and data‑driven methodology for automatically detecting performance bottlenecks and uncovering their root causes in SPMD parallel programs. By combining simple process‑level metrics, effective clustering, and Rough Set‑based causal analysis, the system reduces the reliance on expert intuition, cuts debugging time, and delivers concrete optimization guidance that leads to substantial speed‑ups in real applications.
