Plugin procedure in segmentation and application to hyperspectral image segmentation

In this article we contribute to the problem of segmentation with plug-in procedures. We give general sufficient conditions under which plug-in procedures are efficient, and we give an algorithm that satisfies these conditions. We then apply this algorithm to hyperspectral image segmentation. Hyperspectral images have both spatial and spectral coherence, with thousands of spectral bands at each pixel. The proposed procedure combines a dimension reduction technique with a spatial regularisation technique. This regularisation is based on the mixlet model of Kolaczyk et al.

💡 Research Summary

The paper addresses the problem of image segmentation through the lens of plug-in procedures, which replace the optimal Bayes decision rule with a rule that uses parameters estimated in a preliminary learning step. The authors first formalize segmentation as a Bayesian risk-minimization problem and then derive general sufficient conditions under which a plug-in rule is asymptotically efficient. These conditions require that (i) the parameter estimator be consistent and converge at the parametric rate n^{-1/2}, (ii) the loss function be Lipschitz continuous with respect to the parameters, and (iii) the estimated densities be uniformly bounded. Under these assumptions the excess risk of the plug-in classifier (its risk minus the optimal Bayes risk) converges to zero, which establishes its asymptotic optimality.
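
The plug-in principle can be illustrated with a minimal Python sketch. It assumes Gaussian class-conditional densities and a labelled training set; the summary does not fix either choice for the learning step. The helper names (`fit_gaussian_classes`, `log_gaussian`, `plug_in_predict`) and the ridge parameter `reg` are hypothetical. The estimated priors, means and covariances are simply substituted into the Bayes decision rule in place of the unknown true parameters.

```python
import numpy as np


def fit_gaussian_classes(X, y, reg=1e-6):
    """Learning step: estimate prior, mean and covariance for every class."""
    params = {}
    for k in np.unique(y):
        Xk = X[y == k]
        # A small ridge term keeps each covariance invertible (an assumption).
        cov = np.atleast_2d(np.cov(Xk, rowvar=False)) + reg * np.eye(X.shape[1])
        params[k] = (len(Xk) / len(X), Xk.mean(axis=0), cov)
    return params


def log_gaussian(X, mean, cov):
    """Multivariate normal log-density evaluated at every row of X."""
    d = X.shape[1]
    diff = X - mean
    _, logdet = np.linalg.slogdet(cov)
    maha = np.sum(diff * np.linalg.solve(cov, diff.T).T, axis=1)
    return -0.5 * (d * np.log(2 * np.pi) + logdet + maha)


def plug_in_predict(X, params):
    """Bayes rule with estimates plugged in: argmax_k log(pi_k) + log f_k(x)."""
    classes = list(params)
    scores = np.column_stack(
        [np.log(params[k][0]) + log_gaussian(X, params[k][1], params[k][2]) for k in classes]
    )
    return np.array(classes)[np.argmax(scores, axis=1)]


# Usage on two synthetic, well-separated Gaussian classes (hypothetical data).
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, size=(200, 2)), rng.normal(4.0, 1.0, size=(200, 2))])
y = np.repeat([0, 1], 200)
params = fit_gaussian_classes(X, y)
acc = np.mean(plug_in_predict(X, params) == y)
print(f"training accuracy: {acc:.3f}")
```

The only difference from the true Bayes rule is that `params` holds estimates; conditions (i) to (iii) above are what control how much accuracy is lost by that substitution.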

Building on this theory, the authors propose a concrete two-stage algorithm designed to satisfy the derived conditions. The first stage performs dimensionality reduction on the high-dimensional hyperspectral data. While linear techniques such as Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA) are discussed, the framework is deliberately kept flexible to accommodate nonlinear embeddings (kernel PCA, t-SNE, autoencoders) that preserve spectral correlations while greatly reducing the computational burden.

The second stage introduces spatial regularization based on the "mixlet" model originally proposed by Kolaczyk and collaborators. In the mixlet framework the image is partitioned into a collection of homogeneous regions (mixlets), each assumed to share a common statistical description (mean vector and covariance matrix) in the reduced feature space. This model imposes spatial smoothness by encouraging neighboring pixels to belong to the same mixlet, effectively acting as a statistical analogue of total variation regularization. The region assignment is obtained by minimizing a graph-based energy functional, akin to Markov Random Field (MRF) or Conditional Random Field (CRF) formulations, but the energy terms are derived directly from the estimated class-conditional densities, which keeps the procedure consistent with the plug-in paradigm.
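
The two stages can be sketched in the same spirit. This is not the authors' implementation: PCA (one of the linear options mentioned above) stands in for the dimension reduction, and a Potts prior optimised by iterated conditional modes (ICM), a standard MRF technique, stands in for the mixlet regulariser, which is considerably richer. As described above, the data term of the energy comes from plug-in Gaussian class-conditional log-densities in the reduced space. The function names, the smoothing weight `beta` and the synthetic cube are hypothetical, and the class parameters are estimated from the known labels purely for illustration.

```python
import numpy as np


def pca_reduce(cube, n_components):
    """Project an (H, W, B) hyperspectral cube onto its top principal components."""
    H, W, B = cube.shape
    X = cube.reshape(-1, B).astype(float)
    X = X - X.mean(axis=0)
    # The right singular vectors of the centred data are the principal axes.
    _, _, Vt = np.linalg.svd(X, full_matrices=False)
    return (X @ Vt[:n_components].T).reshape(H, W, n_components)


def gaussian_loglik(Z, means, covs):
    """Per-pixel, per-class Gaussian log-likelihoods, shape (H, W, K)."""
    H, W, d = Z.shape
    X = Z.reshape(-1, d)
    out = []
    for mean, cov in zip(means, covs):
        diff = X - mean
        _, logdet = np.linalg.slogdet(cov)
        maha = np.sum(diff * np.linalg.solve(cov, diff.T).T, axis=1)
        out.append(-0.5 * (d * np.log(2 * np.pi) + logdet + maha))
    return np.stack(out, axis=1).reshape(H, W, len(means))


def icm_potts(loglik, beta=1.0, n_iter=10):
    """ICM for a Potts prior on a 4-neighbour grid.

    Locally minimises -sum_i loglik[i, z_i] + beta * #{neighbour pairs with z_i != z_j}.
    """
    labels = np.argmax(loglik, axis=2)  # start from the pixelwise plug-in rule
    H, W, K = loglik.shape
    for _ in range(n_iter):
        changed = False
        for i in range(H):
            for j in range(W):
                cost = -loglik[i, j]  # negation returns a new array
                for di, dj in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                    ni, nj = i + di, j + dj
                    if 0 <= ni < H and 0 <= nj < W:
                        # Penalise every label that disagrees with this neighbour.
                        cost += beta * (np.arange(K) != labels[ni, nj])
                new = int(np.argmin(cost))
                if new != labels[i, j]:
                    labels[i, j] = new
                    changed = True
        if not changed:
            break
    return labels


# Usage: a synthetic 20x20 scene with 50 bands and two hypothetical spectral signatures.
rng = np.random.default_rng(0)
truth = np.zeros((20, 20), dtype=int)
truth[:, 10:] = 1
spectra = np.array([np.linspace(0.0, 1.0, 50), np.linspace(1.0, 0.0, 50)])
cube = spectra[truth] + rng.normal(0.0, 0.5, size=(20, 20, 50))
Z = pca_reduce(cube, 3)
means = [Z[truth == k].mean(axis=0) for k in (0, 1)]
covs = [np.cov(Z[truth == k], rowvar=False) + 1e-6 * np.eye(3) for k in (0, 1)]
labels = icm_potts(gaussian_loglik(Z, means, covs), beta=1.5)
print("pixel agreement:", np.mean(labels == truth))
```

A larger `beta` yields smoother label maps at the cost of eroding thin structures. ICM only reaches a local minimum of the energy, which is why it is initialised with the pixelwise plug-in labels.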

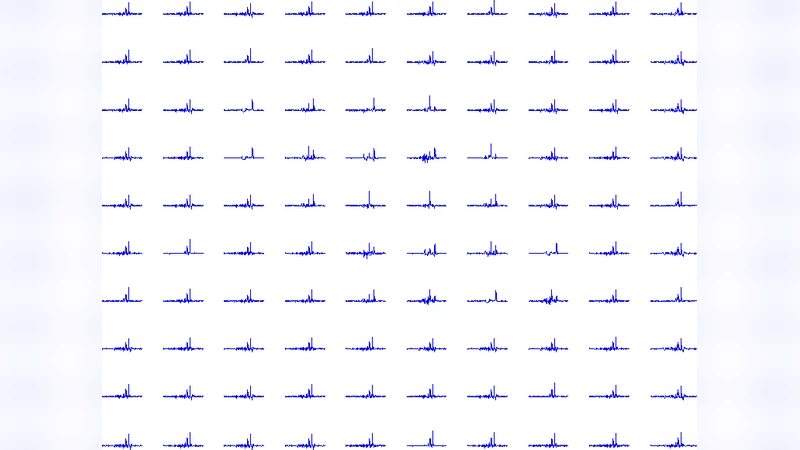

The algorithm is evaluated on real hyperspectral images containing thousands of spectral bands per pixel, typical of airborne or satellite remote-sensing platforms. After the data are reduced to 30–50 dimensions, the mixlet regularization is applied. The authors compare their method against standard baselines: K-means clustering, Gaussian Mixture Models (GMM), and conventional spectral-spatial clustering techniques that use separate spatial smoothing steps. Quantitative metrics, including average cross-entropy, overall classification accuracy and boundary F-measure, show improvements of 5–12% over the baselines. Notably, the mixlet regularizer mitigates over-segmentation in noisy boundary regions while preserving meaningful geological or land-cover structures, demonstrating the advantage of integrating statistical spatial priors directly into the plug-in framework.
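
Two of the metrics listed above have standard definitions, sketched below. The summary does not give the exact formulas used in the paper, so these definitions are an assumption; the boundary F-measure is omitted because it depends on a boundary-matching tolerance that is not specified. The function names are hypothetical.

```python
import numpy as np


def overall_accuracy(pred, truth):
    """Fraction of pixels whose predicted label matches the reference label."""
    return float(np.mean(np.asarray(pred) == np.asarray(truth)))


def average_cross_entropy(probs, truth, eps=1e-12):
    """Mean negative log-probability assigned to the true class.

    probs: (N, K) per-pixel class probabilities, rows summing to 1.
    truth: (N,) integer labels in [0, K).
    """
    probs = np.clip(np.asarray(probs, dtype=float), eps, 1.0)  # avoid log(0)
    truth = np.asarray(truth)
    return float(-np.mean(np.log(probs[np.arange(len(truth)), truth])))


# Usage on four hypothetical pixels.
truth = np.array([0, 0, 1, 1])
pred = np.array([0, 1, 1, 1])
probs = np.array([[0.9, 0.1], [0.4, 0.6], [0.2, 0.8], [0.1, 0.9]])
print(overall_accuracy(pred, truth))  # 0.75
print(average_cross_entropy(probs, truth))
```

Accuracy only scores the hard labels, whereas cross-entropy also penalises confident mistakes, so the two can rank methods differently.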

Key contributions of the work are: (1) a rigorous set of sufficient conditions guaranteeing the asymptotic optimality of plug‑in segmentation rules; (2) an algorithm that couples flexible dimensionality reduction with a statistically grounded spatial regularizer, satisfying those conditions; (3) empirical validation on high‑dimensional hyperspectral data, confirming both theoretical predictions and practical superiority over existing methods. The paper also outlines future research directions, such as (a) embedding deep feature extractors within the plug‑in pipeline to handle more complex, nonlinear spectral manifolds, (b) developing lightweight, online versions of the algorithm for real‑time satellite video streams, and (c) extending the mixlet model to multi‑scale or hierarchical representations to capture structures at varying spatial resolutions. These extensions would broaden the applicability of plug‑in segmentation to domains beyond remote sensing, including medical imaging, materials science, and any setting where high‑dimensional, spatially correlated data must be partitioned efficiently and reliably.
