Mixed membership stochastic blockmodels

Observations consisting of measurements on relationships for pairs of objects arise in many settings, such as protein interaction and gene regulatory networks, collections of author-recipient email, and social networks. Analyzing such data with probabilistic models can be delicate because the simple exchangeability assumptions underlying many boilerplate models no longer hold. In this paper, we describe a latent variable model of such data called the mixed membership stochastic blockmodel. This model extends blockmodels for relational data to ones which capture mixed membership latent relational structure, thus providing an object-specific low-dimensional representation. We develop a general variational inference algorithm for fast approximate posterior inference. We explore applications to social and protein interaction networks.

💡 Research Summary

The paper addresses the analysis of relational data, that is, measurements on pairs of objects that arise in domains such as protein‑protein interaction networks, email communications, and social graphs. Traditional stochastic blockmodels (SBMs) assign each node to a single latent group, a restriction that often fails in real‑world networks where entities simultaneously participate in multiple communities or play different roles depending on the interaction. To overcome this limitation, the authors introduce the Mixed Membership Stochastic Blockmodel (MMSB), a latent variable model that endows every object with a K‑dimensional membership vector drawn from a Dirichlet prior.

In the generative process, for each ordered pair $(i, j)$ the model first draws a role for the sender, $z_{i\rightarrow j}$, from the sender’s membership distribution $\theta_i$, and a role for the receiver, $z_{j\leftarrow i}$, from the receiver’s distribution $\theta_j$. The pair of roles $(k,l)$ selects a block‑to‑block interaction probability $\eta_{kl}$, which is the parameter of a Bernoulli (or Poisson) distribution governing whether an edge is observed. Consequently, the observed adjacency matrix is a mixture over all possible role assignments, allowing a node to contribute to several blocks simultaneously.
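The generative process described above can be sketched in a few lines of standard-library Python. This is a minimal illustration and not the authors' code: the function name `sample_mmsb`, the hyperparameter values, the Bernoulli-only likelihood, and the exclusion of self-loops are all assumptions made for the example.

```python
import random


def sample_mmsb(n_nodes, alpha, eta, seed=0):
    """Sample memberships and a directed adjacency matrix from an MMSB.

    alpha: list of K positive Dirichlet concentration parameters (assumed).
    eta:   K x K nested list of block-to-block edge probabilities (assumed).
    """
    rng = random.Random(seed)
    K = len(alpha)

    def dirichlet():
        # Normalised independent Gamma(alpha_k, 1) draws follow Dirichlet(alpha).
        g = [rng.gammavariate(a, 1.0) for a in alpha]
        total = sum(g)
        return [x / total for x in g]

    # theta[i] is node i's mixed-membership vector over the K blocks.
    theta = [dirichlet() for _ in range(n_nodes)]
    Y = [[0] * n_nodes for _ in range(n_nodes)]
    for i in range(n_nodes):
        for j in range(n_nodes):
            if i == j:
                continue  # assumption: no self-loops in this sketch
            k = rng.choices(range(K), weights=theta[i])[0]  # sender role z_{i->j}
            l = rng.choices(range(K), weights=theta[j])[0]  # receiver role z_{j<-i}
            # Bernoulli draw with the block-pair probability eta[k][l].
            Y[i][j] = 1 if rng.random() < eta[k][l] else 0
    return theta, Y


# Hypothetical settings: 3 blocks, dense within a block, sparse across blocks.
K = 3
eta = [[0.9 if k == l else 0.05 for l in range(K)] for k in range(K)]
theta, Y = sample_mmsb(n_nodes=20, alpha=[0.5] * K, eta=eta, seed=1)
```

Because a fresh pair of roles is drawn for every ordered pair, the same node can act under different blocks in different interactions, which is what distinguishes this process from a single-membership blockmodel.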

Exact Bayesian inference is intractable because the posterior couples $\theta$, $\eta$, and the role variables $Z$, so its normalizing constant has no closed form. The authors therefore develop a variational Bayes algorithm. They posit a mean‑field variational distribution that factorizes as $q(\theta)\,q(\eta)\prod_{e}q(z_e)$, where each factor retains the same exponential‑family form as its prior (Dirichlet for $\theta$, Beta for $\eta$, categorical for each role). By iteratively maximizing the evidence lower bound (ELBO) with respect to each factor in turn, a scheme known as coordinate ascent variational inference, they obtain closed‑form updates: the Dirichlet parameters $\gamma_i$ for node $i$ are updated using the expected counts of role assignments; the Beta parameters $(a_{kl},b_{kl})$ for each block pair are updated using the expected number of present and absent edges conditioned on roles; and the categorical responsibilities for each edge are computed from the current $\gamma$ and $(a,b)$. Although each edge requires $O(K^2)$ work, the algorithm scales well on sparse graphs and is orders of magnitude faster than Markov chain Monte Carlo (MCMC) alternatives.
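For concreteness, the coordinate ascent updates described above can be written out as follows. This is a sketch under stated assumptions, not a transcription of the paper's equations: it assumes a fully factorized $q$ with separate sender and receiver responsibilities $\phi_{i\rightarrow j}$ and $\phi_{j\leftarrow i}$, a Bernoulli likelihood on $y_{ij}\in\{0,1\}$, a Dirichlet hyperparameter $\alpha$, and a shared $\mathrm{Beta}(a_0,b_0)$ prior on every $\eta_{kl}$. The symbols $\phi$, $\alpha$, $a_0$, $b_0$, and $\ell_{ij}$ are introduced here for illustration; $\psi$ denotes the digamma function.

```latex
\begin{aligned}
% Expectations under the current variational factors
\mathbb{E}[\log\theta_{ik}] &= \psi(\gamma_{ik}) - \psi\Big(\sum_{k'}\gamma_{ik'}\Big), \\
\mathbb{E}[\log\eta_{kl}] &= \psi(a_{kl}) - \psi(a_{kl}+b_{kl}), \qquad
\mathbb{E}[\log(1-\eta_{kl})] = \psi(b_{kl}) - \psi(a_{kl}+b_{kl}), \\
% Expected log-likelihood of pair (i,j) when the roles are (k,l)
\ell_{ij}(k,l) &= y_{ij}\,\mathbb{E}[\log\eta_{kl}] + (1-y_{ij})\,\mathbb{E}[\log(1-\eta_{kl})], \\
% Categorical responsibilities for the sender and receiver roles
\phi_{i\rightarrow j,k} &\propto \exp\Big(\mathbb{E}[\log\theta_{ik}] + \sum_{l}\phi_{j\leftarrow i,l}\,\ell_{ij}(k,l)\Big), \\
\phi_{j\leftarrow i,l} &\propto \exp\Big(\mathbb{E}[\log\theta_{jl}] + \sum_{k}\phi_{i\rightarrow j,k}\,\ell_{ij}(k,l)\Big), \\
% Dirichlet update: prior plus expected role counts as sender and as receiver
\gamma_{ik} &= \alpha_k + \sum_{j\neq i}\phi_{i\rightarrow j,k} + \sum_{j\neq i}\phi_{i\leftarrow j,k}, \\
% Beta updates: prior plus expected present / absent edges for block pair (k,l)
a_{kl} &= a_0 + \sum_{i\neq j}\phi_{i\rightarrow j,k}\,\phi_{j\leftarrow i,l}\,y_{ij}, \\
b_{kl} &= b_0 + \sum_{i\neq j}\phi_{i\rightarrow j,k}\,\phi_{j\leftarrow i,l}\,(1-y_{ij}).
\end{aligned}
```

Under these assumptions, the inner sums over $l$ (or $k$) are evaluated for each of the $K$ entries of a responsibility vector, which is where the $O(K^2)$ cost per pair comes from.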

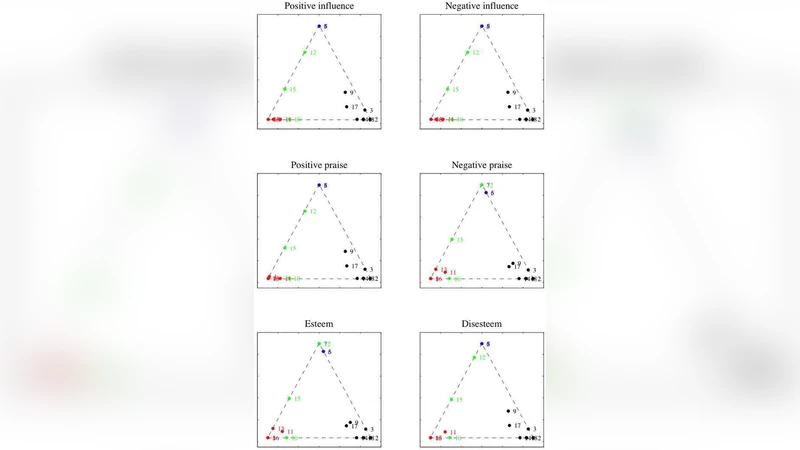

The authors evaluate MMSB on three benchmark datasets. In Zachary’s Karate Club network, MMSB recovers the known split into two factions while assigning mixed memberships to the bridge nodes, achieving higher log‑likelihood and clustering accuracy than standard SBMs. In a political blog network, the model captures the fluid boundary between liberal and conservative blogs, correctly identifying blogs that link heavily to both sides. In the Saccharomyces cerevisiae protein‑protein interaction network, MMSB discovers proteins with multi‑functional roles, reflecting biological reality where a protein participates in several pathways. Across all experiments, MMSB outperforms deterministic blockmodels and a mixed‑membership latent Dirichlet allocation baseline in predictive tasks, and its variational inference converges in a fraction of the time required by Gibbs sampling.

The paper concludes that MMSB provides a principled, flexible representation for networks with overlapping community structure, and that variational inference makes the approach practical for large‑scale applications. Future directions suggested include extending the model to handle weighted or temporal edges, incorporating side information such as node attributes or text, and exploring non‑parametric priors to infer the number of latent groups automatically.
