Bayesian Inference

This chapter provides an overview of Bayesian inference, mostly emphasising that it is a universal method for summarising uncertainty and making estimates and predictions using probability statements conditional on observed data and an assumed model (Gelman 2008). The Bayesian perspective is thus applicable to all aspects of statistical inference, while being open to the incorporation of information from earlier experiments and from expert opinions. We provide here the basic elements of Bayesian analysis for standard models, referring to Marin and Robert (2007) and to Robert (2007) for book-length entries. In the following, we refrain from embarking upon philosophical discussions about the nature of knowledge (see, e.g., Robert 2007, Chapter 10), opting instead for a mathematically sound presentation of an eminently practical statistical methodology. We indeed believe that the most convincing arguments for adopting a Bayesian version of data analysis lie in the versatility of this tool and in the large range of existing applications, rather than in those polemical arguments.

💡 Research Summary

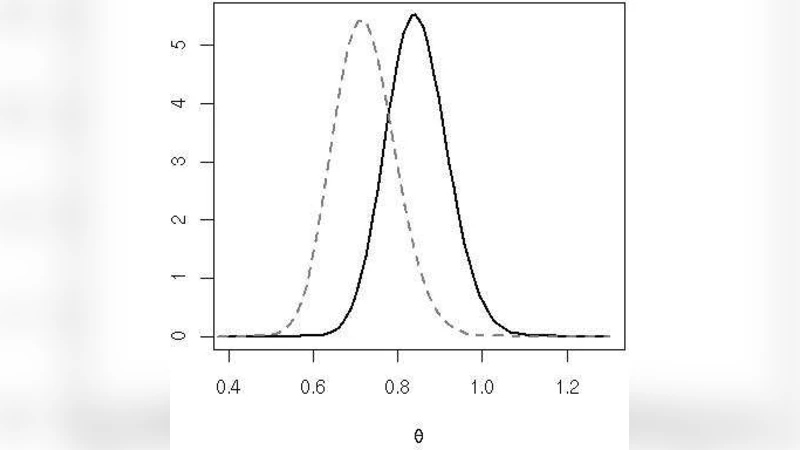

This chapter presents Bayesian inference as a universal statistical framework that quantifies uncertainty through probability statements conditioned on observed data and an assumed model. The exposition deliberately avoids philosophical debates about the nature of knowledge, focusing instead on a mathematically rigorous and practically oriented presentation. The core of Bayesian inference is Bayes’ theorem, which combines three elements: a prior distribution that encodes existing knowledge or expert opinion, a likelihood function that describes how data are generated under a given set of parameters, and a posterior distribution that updates the prior in light of the data. The posterior serves as a complete summary of uncertainty, allowing point estimates, credible intervals, and predictive distributions to be derived directly.
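As a reminder of how the three elements fit together, Bayes' theorem can be written as follows. The notation is an assumption made here for illustration and is not taken from the chapter: $\theta$ denotes the parameter, $x$ the observed data, $\pi(\theta)$ the prior density, $f(x \mid \theta)$ the likelihood, and $m(x)$ the marginal likelihood.

$$
\pi(\theta \mid x) = \frac{f(x \mid \theta)\,\pi(\theta)}{m(x)},
\qquad
m(x) = \int f(x \mid \theta)\,\pi(\theta)\,\mathrm{d}\theta .
$$

Because $m(x)$ does not depend on $\theta$, the posterior is proportional to the likelihood times the prior, $\pi(\theta \mid x) \propto f(x \mid \theta)\,\pi(\theta)$, which is the form most computations work with.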

For standard models, such as normal, binomial, and Poisson, the use of conjugate priors yields analytically tractable posteriors, simplifying computation and interpretation. However, many real‑world problems involve hierarchical structures, non‑linear relationships, or non‑standard likelihoods where analytical solutions are unavailable. In these cases, numerical techniques become essential. The chapter outlines Markov chain Monte Carlo (MCMC) methods, including Gibbs sampling and Metropolis‑Hastings, as the workhorses for approximating complex posteriors. It also discusses convergence diagnostics (e.g., the Gelman‑Rubin statistic) and strategies for improving sampling efficiency.
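The contrast between a conjugate update and an MCMC approximation can be sketched on a single toy problem. The example below is a minimal illustration, not the chapter's own code: it assumes a binomial likelihood with a Beta prior, and the function names, the data (7 successes in 20 trials), the Beta(2, 2) prior, and the tuning values (`step`, `burn_in`, `seed`) are all hypothetical choices. The sampler is a random-walk Metropolis algorithm, a special case of Metropolis-Hastings with a symmetric Gaussian proposal.

```python
import math
import random


def beta_binomial_posterior(a, b, successes, trials):
    """Conjugate update: Beta(a, b) prior plus binomial data gives a Beta posterior."""
    return a + successes, b + trials - successes


def log_posterior(theta, a, b, successes, trials):
    """Unnormalised log posterior of theta for the same Beta-binomial model."""
    if not 0.0 < theta < 1.0:
        return -math.inf  # zero posterior density outside (0, 1)
    return ((a + successes - 1) * math.log(theta)
            + (b + trials - successes - 1) * math.log(1.0 - theta))


def random_walk_metropolis(log_target, n_samples, start=0.5, step=0.2,
                           burn_in=1000, seed=0):
    """Draw samples from exp(log_target) using a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    theta = start
    current = log_target(theta)
    if current == -math.inf:
        raise ValueError("start must lie inside the support of the target")
    samples = []
    for i in range(n_samples + burn_in):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_target(proposal)
        # Accept with probability min(1, target(proposal) / target(theta)).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        if i >= burn_in:
            samples.append(theta)
    return samples


# Hypothetical data: 7 successes in 20 trials, Beta(2, 2) prior.
a_post, b_post = beta_binomial_posterior(2, 2, 7, 20)
exact_mean = a_post / (a_post + b_post)

draws = random_walk_metropolis(
    lambda t: log_posterior(t, 2, 2, 7, 20), n_samples=20000)
mcmc_mean = sum(draws) / len(draws)

print(f"exact posterior mean: {exact_mean:.4f}")
print(f"MCMC posterior mean:  {mcmc_mean:.4f}")
```

In this conjugate case the sampler is unnecessary, which is the point of the comparison: the closed-form answer gives a check on the MCMC output before the same sampler is applied to a posterior with no analytical form.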

Beyond estimation, Bayesian inference excels at model comparison and prediction. The marginal likelihood (or evidence) enables the calculation of Bayes factors, providing a principled way to compare competing models. Posterior predictive distributions generate probabilistic forecasts for new observations, and the framework naturally accommodates uncertainty quantification for these predictions. Sensitivity analysis of the prior is emphasized, allowing practitioners to assess how robust their conclusions are to different prior specifications.
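A Bayes factor and a posterior predictive probability can both be computed in closed form for a simple binomial example. The sketch below rests on assumptions made here for illustration, not taken from the chapter: model M1 places a Beta(a, b) prior on the success probability, model M0 fixes it at `theta0`, and the function names and data (7 successes in 20 trials) are hypothetical.

```python
import math


def log_beta(a, b):
    """Logarithm of the Beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def log_marginal_beta_binomial(successes, trials, a, b):
    """Log marginal likelihood of binomial data under a Beta(a, b) prior (M1)."""
    return (math.log(math.comb(trials, successes))
            + log_beta(a + successes, b + trials - successes)
            - log_beta(a, b))


def log_marginal_point_null(successes, trials, theta0):
    """Log likelihood of binomial data when theta is fixed at theta0 (M0)."""
    return (math.log(math.comb(trials, successes))
            + successes * math.log(theta0)
            + (trials - successes) * math.log(1.0 - theta0))


def bayes_factor_10(successes, trials, a=1.0, b=1.0, theta0=0.5):
    """Bayes factor of M1 (Beta prior) against M0 (theta = theta0)."""
    return math.exp(log_marginal_beta_binomial(successes, trials, a, b)
                    - log_marginal_point_null(successes, trials, theta0))


def predictive_success_probability(successes, trials, a=1.0, b=1.0):
    """Posterior predictive probability that the next trial is a success under M1."""
    return (a + successes) / (a + b + trials)


# Hypothetical data: 7 successes in 20 trials, uniform Beta(1, 1) prior under M1.
bf10 = bayes_factor_10(7, 20)
p_next = predictive_success_probability(7, 20)
print(f"Bayes factor B10: {bf10:.4f}")
print(f"P(next trial succeeds | data): {p_next:.4f}")
```

A value of B10 below 1 favours the point null and a value above 1 favours the Beta-prior model. Rerunning `bayes_factor_10` with different `a` and `b` is a simple form of the prior sensitivity analysis mentioned above, since the marginal likelihood depends directly on the prior.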

The chapter illustrates the versatility of Bayesian methods through diverse applications. In clinical trials, prior information from earlier studies can reduce required sample sizes while preserving rigorous inference. In economics, hierarchical panel models allow simultaneous estimation of country‑specific and time‑specific effects, with credible intervals reflecting all sources of uncertainty. In machine learning, Bayesian neural networks place distributions over weights, mitigating over‑fitting, and Bayesian optimization leverages posterior uncertainty to efficiently explore hyper‑parameter spaces.

Finally, the authors acknowledge limitations such as the subjective nature of prior choice, computational demands for high‑dimensional problems, and the need for careful model checking. Emerging solutions—variational inference, probabilistic programming languages like Stan and PyMC, and scalable MCMC algorithms—are highlighted as promising avenues for future research. The overarching message is that the most compelling argument for adopting Bayesian analysis lies in its flexibility, the breadth of existing applications, and its ability to coherently integrate data with prior knowledge, rather than in philosophical polemics.
