Diffusion Monte Carlo: Exponential scaling of computational cost for large systems

The computational cost of a Monte Carlo algorithm can only be meaningfully discussed when taking into account the magnitude of the resulting statistical error. Aiming for a fixed error per particle, we study the scaling behavior of the diffusion Monte Carlo method for large quantum systems. We identify the correlation within the population of walkers as the dominant scaling factor for large systems. While this factor is negligible for the small and medium-sized systems that are typically studied, it ultimately shows exponential scaling. The scaling factor can be estimated straightforwardly for each specific system, and we find that it typically becomes relevant only for systems containing more than several hundred atoms.

💡 Research Summary

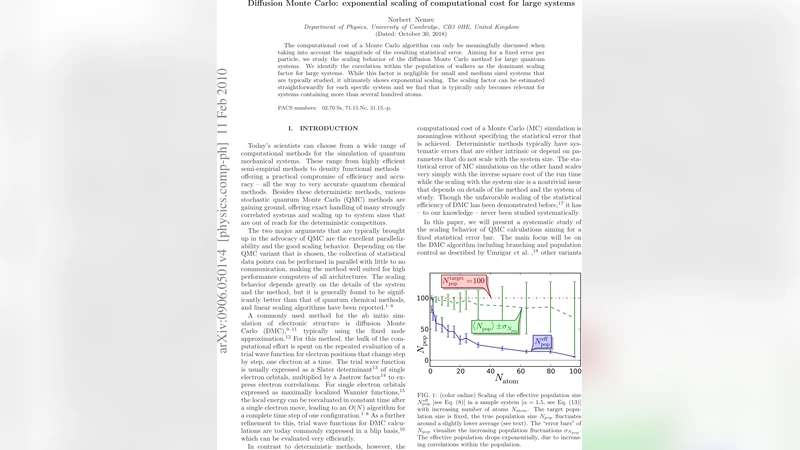

The paper investigates how the computational cost of Diffusion Monte Carlo (DMC) scales when one aims for a fixed statistical error per particle, a question that becomes crucial for large quantum systems. The authors begin by emphasizing that any discussion of Monte Carlo cost must be tied to the magnitude of the resulting statistical error; otherwise, raw operation counts are meaningless. In DMC, a population of walkers samples the ground‑state wavefunction, and the naïve cost metric is the product of the number of walkers (N_w) and the total simulation time (t). However, the authors point out that walkers are not statistically independent: the branching (replication and death) steps introduce correlations that degrade the efficiency of the estimator.

To quantify this effect, they introduce a correlation time τ_corr, which measures how many Monte Carlo steps are required for the walker population to decorrelate. The total cost then becomes proportional to N_w · t · (1 + τ_corr). For small and medium‑sized systems τ_corr is essentially zero, so the cost reduces to the naïve N_w · t estimate. As the system size N (measured in atoms or electrons) grows, the electronic interactions become more complex, the variance of the local energy increases, and the branching events become more frequent. Consequently τ_corr grows rapidly, and the authors demonstrate, both analytically and with numerical experiments, that τ_corr follows an approximate exponential law τ_corr ∝ exp(α N), where α depends on the electronic density and interaction strength of the specific material.
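The cost model in this paragraph can be explored numerically. The snippet below is a minimal sketch, not the authors' code: it takes the expression N_w · t · (1 + τ_corr) at face value, writes the exponential law as τ_corr = c · exp(α N), and computes the system size at which τ_corr reaches 1. The prefactor `c`, the function names, and the numerical values of `alpha` and `c` are hypothetical and chosen only to show the shape of the curve; they are not taken from the paper.

```python
import math


def tau_corr(n_atoms, alpha, c):
    """Assumed exponential law: tau_corr = c * exp(alpha * N)."""
    return c * math.exp(alpha * n_atoms)


def relative_cost(n_atoms, alpha, c, n_walkers=1.0, sim_time=1.0):
    """Cost model from the text: N_w * t * (1 + tau_corr)."""
    return n_walkers * sim_time * (1.0 + tau_corr(n_atoms, alpha, c))


def crossover_size(alpha, c):
    """System size at which tau_corr = 1, i.e. where the correlation
    term equals the naive N_w * t cost. Requires alpha > 0 and c > 0."""
    return -math.log(c) / alpha


if __name__ == "__main__":
    alpha, c = 0.02, 1e-3  # hypothetical values, not from the paper
    for n in (50, 200, 400, 800):
        print(n, relative_cost(n, alpha, c))
    print("crossover near N =", crossover_size(alpha, c))
```

Below the crossover size the factor (1 + τ_corr) stays close to 1 and the cost is set by N_w · t alone; above it the exponential term takes over, which is the behavior the paragraph describes.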

The authors validate this scaling on a variety of realistic systems: bulk silicon, water clusters, and metallic nanoparticles. For systems containing up to a few hundred atoms, the correlation contribution remains negligible. Once the number of atoms exceeds roughly 300–400, τ_corr rises sharply, and the overall computational effort exhibits exponential growth. Importantly, simply increasing the number of walkers does not mitigate the problem; it actually amplifies the branching frequency and can worsen τ_corr, contradicting the common intuition that more walkers always improve statistical efficiency.

Recognizing the practical implications, the paper proposes several strategies to control the correlation‑driven cost explosion. First, walker‑re‑sampling or population control schemes can be designed to reduce inter‑walker dependence. Second, multi‑level Monte Carlo approaches can decompose a large system into smaller, weakly coupled subsystems, each treated with an independent DMC simulation whose results are later combined. Third, careful parallel algorithm design that minimizes communication between walker groups can help preserve independence in high‑performance computing environments. Finally, improving the quality of the trial wavefunction reduces the variance of the local energy and therefore the branching rate, indirectly curbing τ_corr.

In conclusion, the study confirms that DMC remains a highly efficient method for quantum‑chemical calculations on small to medium systems, but for large systems (several hundred atoms or more) the population‑correlation factor becomes the dominant scaling term, leading to exponential cost growth. The authors provide a straightforward way to estimate τ_corr for any given system, allowing practitioners to predict when DMC will become impractical and to decide whether to adopt alternative algorithms or the mitigation techniques outlined above. This work thus clarifies the limits of DMC scalability and offers a roadmap for extending its applicability to ever larger quantum systems.
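The authors' own estimator for τ_corr is not reproduced in this summary. As a generic stand-in, the sketch below computes an integrated autocorrelation time from a recorded series of values, which is a standard way to measure how strongly successive Monte Carlo samples are correlated. It is an assumption that such a series (for example, a per-step energy trace) is available; the function names and the synthetic AR(1) test data are hypothetical and serve only to exercise the estimator.

```python
import random


def integrated_autocorr_time(series, max_lag=None):
    """Sum the normalized autocorrelation over positive lags,
    stopping at the first non-positive value (a simple cutoff)."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    var = sum(d * d for d in dev) / n
    if var == 0.0:
        return 0.0  # constant series: nothing to correlate
    if max_lag is None:
        max_lag = n // 10
    tau = 0.0
    for lag in range(1, max_lag + 1):
        rho = sum(dev[i] * dev[i + lag] for i in range(n - lag)) / (n * var)
        if rho <= 0.0:
            break
        tau += rho
    return tau


def ar1_series(n, phi, seed=0):
    """Synthetic AR(1) data standing in for a DMC energy trace."""
    rng = random.Random(seed)
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


if __name__ == "__main__":
    print("correlated:  ", integrated_autocorr_time(ar1_series(2000, 0.9)))
    print("uncorrelated:", integrated_autocorr_time(ar1_series(2000, 0.0)))
```

How the resulting value enters the error inflation factor depends on the convention used for the correlation time, so it should be matched to the paper's definition before being inserted into the cost expression.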
