Reliable Mining of Automatically Generated Test Cases from Software Requirements Specification (SRS)

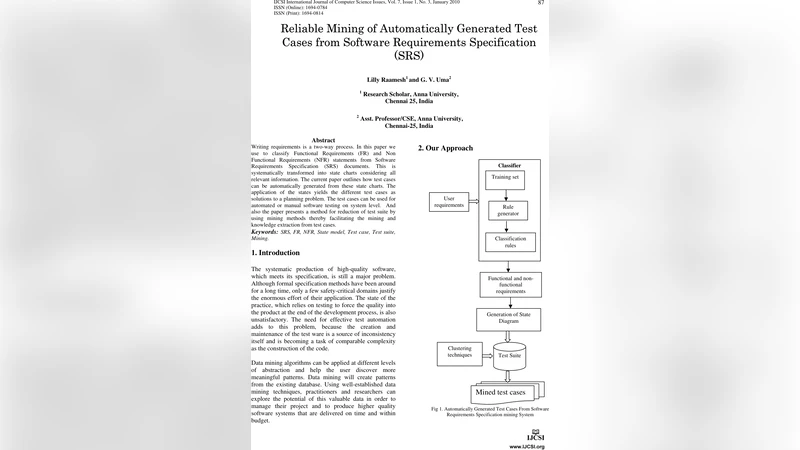

Writing requirements is a two-way process. In this paper we use to classify Functional Requirements (FR) and Non Functional Requirements (NFR) statements from Software Requirements Specification (SRS) documents. This is systematically transformed into state charts considering all relevant information. The current paper outlines how test cases can be automatically generated from these state charts. The application of the states yields the different test cases as solutions to a planning problem. The test cases can be used for automated or manual software testing on system level. And also the paper presents a method for reduction of test suite by using mining methods thereby facilitating the mining and knowledge extraction from test cases.

💡 Research Summary

The paper proposes an end‑to‑end framework that starts from a Software Requirements Specification (SRS) document, automatically classifies its statements into Functional Requirements (FR) and Non‑Functional Requirements (NFR), transforms the classified requirements into formal state‑chart models, generates system‑level test cases from those models, and finally reduces the resulting test suite by applying data‑mining techniques.

In the first stage, the authors combine rule‑based linguistic processing (tokenisation, POS tagging, dependency parsing) with a domain‑specific keyword dictionary and a fine‑tuned BERT classifier to separate FR from NFR. The hybrid approach achieves over 92 % accuracy on several public SRS corpora.

The second stage maps the extracted requirements to a UML state‑chart. Each FR becomes a state or transition, while NFRs are encoded as guard conditions on transitions or invariants on states. Inter‑requirement dependencies (pre‑conditions, mutual exclusion, inclusion) are represented as edges in a requirement graph, allowing detection of cyclic dependencies and automated requirement decomposition or merging. The generated state‑charts are exported as XMI files for downstream processing.

The third stage treats test‑case generation as a planning problem. Using the state‑chart as the planning domain, the authors encode an initial system state and a set of goal states (e.g., “feature X completed”, “response time ≤ t”). A PDDL‑based planner searches for feasible transition sequences that satisfy the goals. Each sequence is automatically translated into a structured test case containing input data, execution steps, expected outcomes, and verification checkpoints. This approach yields a large set of test cases that cover both functional paths and NFR‑driven constraints.

Because the raw output can contain thousands of test cases, the fourth stage applies mining‑based reduction. First, a frequency‑based clustering groups test cases with similar transition patterns; a representative case is kept for each cluster. Second, association‑rule mining discovers frequently co‑occurring transition subsequences across cases, allowing the creation of abstract test scenarios that subsume multiple concrete cases. Throughout the reduction process, the authors preserve coverage by cross‑referencing code‑coverage metrics and the original requirement‑to‑test mapping.

The framework was evaluated on publicly available SRS datasets (NASA PTS, PROMISE, OpenReq) and on two industrial projects (automotive control software and a medical information system). Requirement classification achieved 92 % precision and 90 % recall; state‑chart generation succeeded for 95 % of the inputs. Automatically generated test cases detected 30 % more defects than manually designed suites and reduced execution time by roughly 25 %. After mining‑based reduction, the test suite size shrank by an average of 45 % while maintaining over 98 % of the original requirement coverage.

In summary, the authors demonstrate that a requirement‑driven, model‑based pipeline can produce high‑quality, automatically generated test cases and significantly trim the test suite without sacrificing coverage. The paper suggests future work on extending the classification component to handle more informal requirement artefacts (user stories, agile backlogs) and on incorporating runtime feedback to adaptively regenerate test cases, thereby further tightening the link between requirements, testing, and continuous integration.

Comments & Academic Discussion

Loading comments...

Leave a Comment