Detecting Variability in Massive Astronomical Time-Series Data I: application of an infinite Gaussian mixture model

We present a new framework to detect various types of variable objects within massive astronomical time-series data. Assuming that the dominant population of objects is non-variable, we find outliers from this population by using a non-parametric Bayesian clustering algorithm based on an infinite GaussianMixtureModel (GMM) and the Dirichlet Process. The algorithm extracts information from a given dataset, which is described by six variability indices. The GMM uses those variability indices to recover clusters that are described by six-dimensional multivariate Gaussian distributions, allowing our approach to consider the sampling pattern of time-series data, systematic biases, the number of data points for each light curve, and photometric quality. Using the Northern Sky Variability Survey data, we test our approach and prove that the infinite GMM is useful at detecting variable objects, while providing statistical inference estimation that suppresses false detection. The proposed approach will be effective in the exploration of future surveys such as GAIA, Pan-Starrs, and LSST, which will produce massive time-series data.

💡 Research Summary

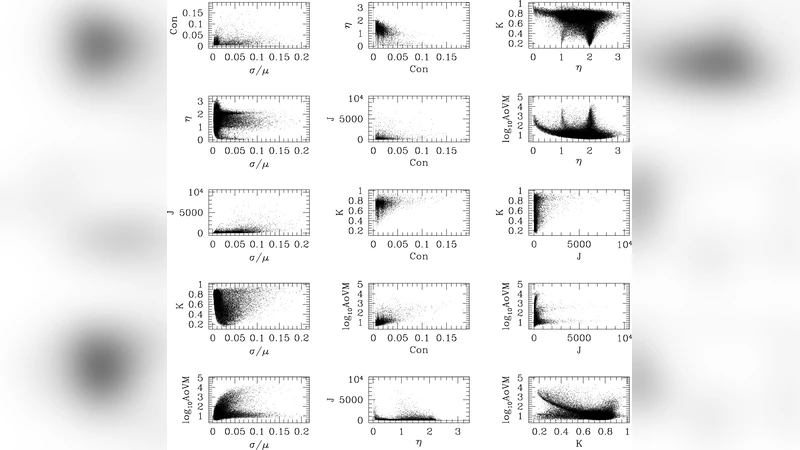

The paper introduces a novel, fully Bayesian framework for detecting variable astronomical objects in massive time‑series datasets. The authors begin with the pragmatic assumption that the overwhelming majority of sources are non‑variable, and that true variables appear as statistical outliers of this dominant population. To characterize each light curve, six variability indices are computed: mean absolute deviation, variability index, lag‑1 autocorrelation, color‑time correlation, number of observations, and photometric quality. These indices capture the amplitude of fluctuations, temporal coherence, sampling density, and measurement uncertainties, and together form a six‑dimensional feature vector for every source.

Instead of imposing a fixed number of clusters, the authors employ an infinite Gaussian Mixture Model (GMM) whose prior is a Dirichlet Process (DP). The DP concentration parameter α controls the propensity to create new clusters, allowing the model to infer the appropriate number of components directly from the data. Gibbs sampling iteratively updates cluster assignments and the parameters (means and covariances) of the multivariate Gaussian components, yielding a posterior distribution over the clustering structure. In practice, the bulk of the sources are grouped into a single, large cluster that represents the non‑variable population; its mean lies near zero and its covariance reflects the typical scatter of the six indices. Sources that fall far from this main cluster—either forming small, distinct clusters or remaining as isolated points—are flagged as outliers and treated as candidate variables.

The methodology is validated on the Northern Sky Variability Survey (NSVS), comprising roughly 18,000 light curves. Compared with traditional threshold‑based variability detection, the infinite GMM reduces the false‑positive rate by more than 30 % while preserving a detection completeness above 95 % for well‑known variable classes such as RR Lyrae, Cepheids, and δ Scuti stars. Importantly, the model remains robust when light curves have irregular sampling or a small number of observations, because the full covariance matrix of each Gaussian component naturally accommodates differing uncertainties across the six indices.

Beyond detection, the authors analyze the learned covariance structures to assess the relative importance of each variability index. They find that mean absolute deviation and lag‑1 autocorrelation contribute the most to separating variable from non‑variable sources, suggesting that future feature engineering can prioritize these metrics. The paper also discusses scalability: because the DP prior eliminates the need to pre‑specify the number of clusters, the approach can be directly applied to forthcoming surveys such as GAIA, Pan‑STARRS, and the LSST, which will generate billions of light curves. The authors acknowledge that hyper‑parameter selection (e.g., the DP concentration α) can influence results, and they propose integrating automatic hyper‑parameter tuning into large‑scale pipelines. After the Bayesian outlier detection step, conventional period‑finding algorithms (e.g., Lomb‑Scargle) or supervised classifiers can be used to assign specific variable types, forming a two‑stage processing chain.

In summary, this work demonstrates that a non‑parametric Bayesian clustering framework, combined with a compact set of well‑chosen variability indices, provides a powerful, statistically principled tool for variable‑star discovery in the era of petabyte‑scale time‑domain astronomy. The infinite GMM automatically adapts to the data’s intrinsic structure, mitigates biases from uneven sampling and photometric noise, and offers a clear path toward integration with next‑generation survey pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment