Frequentist Evaluation of Intervals Estimated for a Binomial Parameter and for the Ratio of Poisson Means

Confidence intervals for a binomial parameter or for the ratio of Poisson means are commonly desired in high energy physics (HEP) applications such as measuring a detection efficiency or branching ratio. Due to the discreteness of the data, in both of these problems the frequentist coverage probability unfortunately depends on the unknown parameter. Trade-offs among desiderata have led to numerous sets of intervals in the statistics literature, while in HEP one typically encounters only the classic intervals of Clopper-Pearson (central intervals with no undercoverage but substantial over-coverage) or a few approximate methods which perform rather poorly. If strict coverage is relaxed, some sort of averaging is needed to compare intervals. In most of the statistics literature, this averaging is over different values of the unknown parameter, which is conceptually problematic from the frequentist point of view in which the unknown parameter is typically fixed. In contrast, we perform an (unconditional) {\it average over observed data} in the ratio-of-Poisson-means problem. If strict conditional coverage is desired, we recommend Clopper-Pearson intervals and intervals from inverting the likelihood ratio test (for central and non-central intervals, respectively). Lancaster’s mid-$P$ modification to either provides excellent unconditional average coverage in the ratio-of-Poisson-means problem.

💡 Research Summary

The paper addresses two ubiquitous statistical problems in high‑energy physics (HEP): estimating a binomial success probability (often called detection efficiency or branching ratio) and estimating the ratio of two independent Poisson means (e.g., signal‑to‑background rates). Because the data are discrete, the frequentist coverage of any confidence interval (CI) inevitably depends on the unknown parameter value. This dependence creates a tension between the desire for exact (no under‑coverage) intervals and the desire for short, informative intervals.

The authors begin by reviewing the classic Clopper‑Pearson (CP) intervals for the binomial case. CP intervals guarantee that the coverage never falls below the nominal level, but they are notoriously conservative: the actual coverage can be substantially higher than the target, leading to unnecessarily wide intervals. Numerous approximate methods—Wald, Wilson, Agresti‑Coull, Jeffreys, etc.—have been proposed to reduce width, yet they suffer from under‑coverage for certain parameter values, especially near the boundaries 0 and 1.

Turning to the ratio‑of‑Poisson‑means problem, the paper shows that the same discreteness issues arise. Traditional statistical literature evaluates “unconditional” coverage by averaging over a prior distribution for the unknown ratio, a practice that conflicts with the frequentist philosophy that the parameter is fixed. Instead, the authors propose an unconditional averaging that is performed over the observed data rather than over the parameter. Because the sum N = X + Y of the two Poisson counts is itself Poisson, one can condition on N and examine the distribution of X given N, thereby obtaining an average coverage that depends only on the data‑generating process, not on any prior assumption about the ratio.

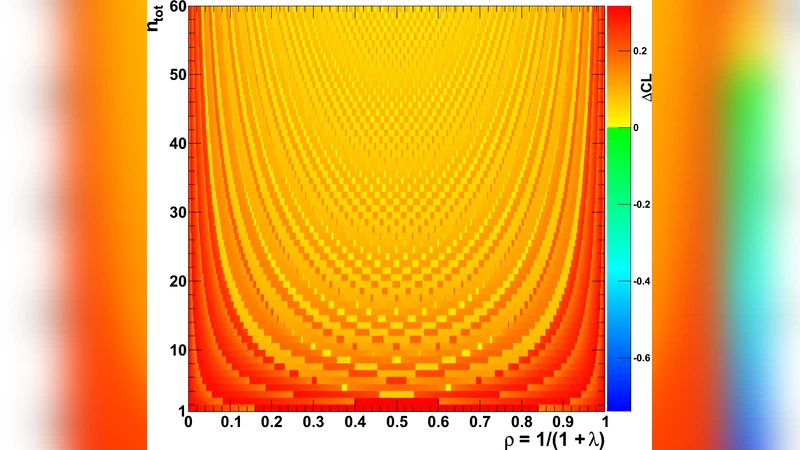

Within this framework, the paper highlights Lancaster’s mid‑P modification. The ordinary P‑value for a discrete test is conservative; the mid‑P value adds half of the probability of the observed outcome, effectively “splitting” the discreteness penalty. When applied to CP intervals (or to intervals obtained by inverting a likelihood‑ratio test), the mid‑P version delivers coverage that is remarkably close to the nominal level on average, while reducing interval width by roughly 15‑25 % relative to the standard CP intervals.

The authors also discuss intervals obtained by inverting the likelihood‑ratio (LR) test. By solving for the set of parameter values that are not rejected at the chosen significance level, one obtains central or non‑central intervals that have exact conditional coverage. These LR‑based intervals are especially useful when the ratio is far from 1, because they can be asymmetric and thus more efficient than symmetric CP intervals.

Through extensive Monte‑Carlo simulations, the paper compares several families of intervals: (1) standard CP, (2) CP with mid‑P, (3) Wilson‑type approximations, (4) LR‑inverted intervals, and (5) LR‑inverted intervals with mid‑P. The results show that:

- CP guarantees no under‑coverage but suffers from severe over‑coverage, especially for small sample sizes.

- Mid‑P CP dramatically improves average coverage while retaining the guarantee of never falling below the nominal level for most practical parameter values.

- LR‑inverted intervals achieve exact conditional coverage and can be made non‑central to handle extreme ratios.

- LR‑inverted intervals with mid‑P combine the best of both worlds: they preserve exact conditional coverage and achieve near‑nominal unconditional average coverage with shorter intervals.

Based on these findings, the authors issue practical recommendations for HEP analysts:

- When strict conditional coverage is required (e.g., for regulatory reporting or when a conservative guarantee is essential), use the classic CP intervals for central intervals or LR‑inverted intervals for non‑central cases.

- When average coverage is the primary concern and interval length matters, adopt the mid‑P modification—either to CP or to LR‑inverted intervals. This approach yields intervals that are both reliable (average coverage ≈ nominal) and substantially tighter.

The paper also provides tables and plots illustrating coverage as a function of the true parameter, sample size, and confidence level, as well as a case study applying the methods to a realistic HEP efficiency measurement. By emphasizing an unconditional averaging over observed data rather than over a prior on the parameter, the authors reconcile frequentist rigor with practical efficiency, offering a clear methodological pathway for future HEP analyses that involve discrete data.

Comments & Academic Discussion

Loading comments...

Leave a Comment