Statistical methods for cosmological parameter selection and estimation

The estimation of cosmological parameters from precision observables is an important industry with crucial ramifications for particle physics. This article discusses the statistical methods presently used in cosmological data analysis, highlighting the main assumptions and uncertainties. The topics covered are parameter estimation, model selection, multi-model inference, and experimental design, all primarily from a Bayesian perspective.

💡 Research Summary

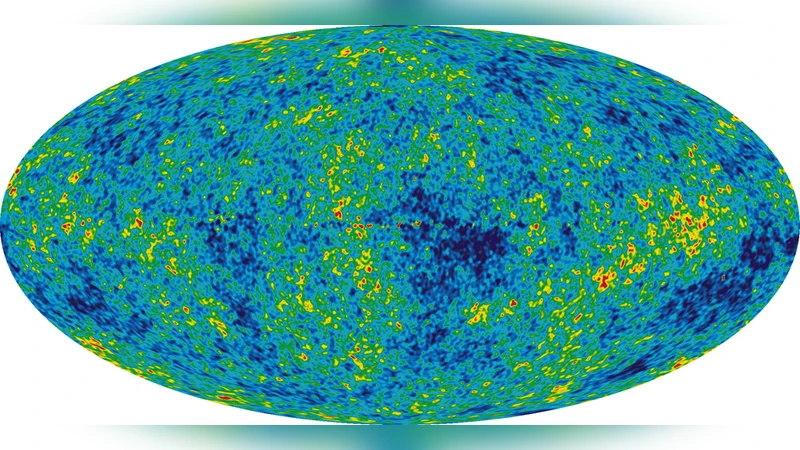

The paper provides a comprehensive review of the statistical techniques currently employed in cosmological data analysis, with a particular emphasis on Bayesian methodology. It begins by motivating the importance of precise cosmological parameter estimation for both particle physics and fundamental theory, noting that modern data sets, such as cosmic microwave background (CMB) measurements, large‑scale structure surveys, and Type‑Ia supernova distance measurements, have reached a level of precision that demands rigorous statistical treatment.

In the parameter‑estimation section, the authors lay out the full Bayesian framework: a likelihood function that encodes the probability of the data given a set of model parameters, and a prior distribution that incorporates external theoretical or experimental knowledge. They discuss how the posterior distribution, obtained via Bayes’ theorem, fully captures uncertainties and correlations among parameters. Because analytical solutions are rarely possible in the high‑dimensional spaces typical of cosmology, the paper devotes considerable space to Markov Chain Monte Carlo (MCMC) methods. Classic Metropolis‑Hastings and Gibbs sampling are described alongside more advanced algorithms such as Hamiltonian Monte Carlo (HMC) and the No‑U‑Turn Sampler (NUTS), which exploit gradient information to achieve higher efficiency. Convergence diagnostics—including Gelman‑Rubin statistics, effective sample size calculations, and trace‑plot inspection—are presented as essential tools for validating the sampling process.
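
As a minimal sketch of the sampling and convergence-checking ideas summarized above, the following toy example runs a random-walk Metropolis sampler on a one-parameter Gaussian problem and computes the Gelman-Rubin statistic across several chains. It is not taken from the paper: the synthetic data, the flat prior, the step size, the burn-in length, and all function names are assumptions chosen for illustration.

```python
import math
import random


def log_posterior(theta, data, sigma=1.0, prior_half_width=10.0):
    """Unnormalised log posterior for the mean of Gaussian data.

    Likelihood: independent Gaussians with known scatter sigma.
    Prior: flat on |theta| <= prior_half_width (an illustrative choice).
    """
    if abs(theta) > prior_half_width:
        return -math.inf
    return -0.5 * sum((d - theta) ** 2 for d in data) / sigma ** 2


def metropolis(data, n_steps, step_size, start, rng):
    """Random-walk Metropolis sampler with a symmetric Gaussian proposal."""
    chain = [start]
    current_lp = log_posterior(start, data)
    for _ in range(n_steps):
        proposal = chain[-1] + rng.gauss(0.0, step_size)
        proposal_lp = log_posterior(proposal, data)
        # Accept with probability min(1, posterior ratio); 1 - random() lies in (0, 1].
        if math.log(1.0 - rng.random()) < proposal_lp - current_lp:
            chain.append(proposal)
            current_lp = proposal_lp
        else:
            chain.append(chain[-1])
    return chain


def gelman_rubin(chains):
    """Gelman-Rubin R-hat for equal-length chains; values near 1 suggest convergence."""
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand_mean = sum(means) / m
    between = n / (m - 1) * sum((mu - grand_mean) ** 2 for mu in means)
    within = sum(
        sum((x - mu) ** 2 for x in c) / (n - 1) for c, mu in zip(chains, means)
    ) / m
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)


rng = random.Random(1)
data = [rng.gauss(1.5, 1.0) for _ in range(50)]  # synthetic data, true mean 1.5

burn_in = 1000  # samples discarded from the start of each chain
chains = [
    metropolis(data, n_steps=5000, step_size=0.3, start=s, rng=rng)[burn_in:]
    for s in (-3.0, 0.0, 3.0, 6.0)  # deliberately dispersed starting points
]
samples = [x for c in chains for x in c]
posterior_mean = sum(samples) / len(samples)
r_hat = gelman_rubin(chains)
print(f"posterior mean = {posterior_mean:.3f}, R-hat = {r_hat:.3f}")
```

With a flat prior the posterior mean should sit close to the sample mean of the data, which gives a simple sanity check. Gradient-based samplers such as HMC or NUTS would replace the random-walk proposal but leave the convergence check unchanged.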

The model‑selection section focuses on the Bayesian evidence (the marginal likelihood) as the central quantity for comparing competing cosmological models. The authors explain that the evidence is the likelihood averaged over the prior, so a model whose extra parameters spread prior probability over regions the data disfavor is penalized automatically. They review numerical techniques for evidence calculation, notably nested sampling, thermodynamic integration, and importance sampling, and illustrate how Bayes factors, the evidence ratios of pairs of models, can be interpreted using Jeffreys’ scale (weak, moderate, strong, decisive). While acknowledging the continued use of information criteria such as AIC and BIC in the community, the paper argues that Bayesian evidence offers a more principled treatment of model complexity and prior information.
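
The complexity penalty carried by the evidence can be illustrated with a one-dimensional toy problem, sketched here under stated assumptions rather than drawn from the paper. Model M0 fixes a parameter at zero, while M1 lets it vary under a flat prior of adjustable width. The evidence of M1 is computed by brute-force grid integration, which is only feasible in very low dimension; the synthetic data and all function names are hypothetical.

```python
import math


def log_likelihood(theta, data, sigma=1.0):
    """Gaussian log likelihood of the data for mean theta and known scatter sigma."""
    norm = len(data) * math.log(sigma * math.sqrt(2.0 * math.pi))
    return -0.5 * sum((d - theta) ** 2 for d in data) / sigma ** 2 - norm


def log_evidence_flat_prior(data, half_width, n_grid=4001):
    """ln Z = ln of the integral of L(theta) * prior(theta), prior flat on [-w, w].

    Uses the trapezoid rule on a grid; adequate in one dimension only.
    """
    h = 2.0 * half_width / (n_grid - 1)
    log_l = [log_likelihood(-half_width + i * h, data) for i in range(n_grid)]
    peak = max(log_l)  # factor out the peak to avoid underflow
    scaled = [math.exp(v - peak) for v in log_l]
    integral = h * (sum(scaled) - 0.5 * (scaled[0] + scaled[-1]))
    return peak + math.log(integral) - math.log(2.0 * half_width)


# Synthetic, deterministic data with sample mean 0.1 (illustrative only).
data = [0.1 + 0.2 * (i - 9.5) for i in range(20)]

log_z0 = log_likelihood(0.0, data)  # M0 has no free parameter: Z0 = L(theta = 0)
ln_bayes_factor = {}
for half_width in (1.0, 10.0, 50.0):
    log_z1 = log_evidence_flat_prior(data, half_width)
    ln_bayes_factor[half_width] = log_z0 - log_z1  # ln B01 > 0 favours M0
    print(f"prior half-width {half_width:5.1f}: ln B01 = {ln_bayes_factor[half_width]:.3f}")
```

Because the data are consistent with zero, widening the prior on the extra parameter raises ln B01 by roughly the logarithm of the width ratio. This is the prior-volume penalty in action, and it also shows why the Bayes factor is sensitive to the prior range chosen for the extended model.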

In the multi‑model inference section, the paper moves beyond selecting a single “best” model. It introduces Bayesian model averaging, where posterior model probabilities weight the parameter posteriors from each candidate model, thereby propagating model‑selection uncertainty into final parameter estimates. The authors discuss practical implementations, including the computation of model‑averaged predictions and the assessment of predictive variance across the model set.
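
The weighting step in Bayesian model averaging can be sketched in a few lines. The example below assumes each model's posterior for a shared parameter is summarized by a mean and a variance, and combines them using posterior model probabilities derived from log evidences. The numerical values are invented for illustration (a two-model comparison in which one model fixes the parameter at -1), and the function names are hypothetical.

```python
import math


def posterior_model_probabilities(log_evidences, prior_probs=None):
    """P(M_k | d) proportional to Z_k * P(M_k), computed stably from ln Z_k."""
    k = len(log_evidences)
    if prior_probs is None:
        prior_probs = [1.0 / k] * k  # equal prior model probabilities, an assumption
    log_w = [lz + math.log(p) for lz, p in zip(log_evidences, prior_probs)]
    peak = max(log_w)
    weights = [math.exp(v - peak) for v in log_w]
    total = sum(weights)
    return [w / total for w in weights]


def model_averaged_moments(probs, means, variances):
    """Mean and variance of the mixture sum_k P(M_k | d) p(theta | d, M_k)."""
    mean = sum(p * m for p, m in zip(probs, means))
    # Law of total variance: within-model spread plus between-model spread.
    variance = sum(
        p * (v + (m - mean) ** 2) for p, m, v in zip(probs, means, variances)
    )
    return mean, variance


# Invented inputs: model 0 fixes the parameter at -1 (zero variance),
# model 1 lets it vary and finds -0.95 +/- 0.08.
log_evidences = [-100.0, -101.5]
means = [-1.0, -0.95]
variances = [0.0, 0.08 ** 2]

probs = posterior_model_probabilities(log_evidences)
bma_mean, bma_variance = model_averaged_moments(probs, means, variances)
print(f"model probabilities = {[round(p, 3) for p in probs]}")
print(f"averaged mean = {bma_mean:.4f}, averaged std = {math.sqrt(bma_variance):.4f}")
```

The between-model term in the variance is what carries model-selection uncertainty into the final estimate. When one model dominates the evidence, the average reduces to that model's posterior.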

The experimental‑design portion treats the problem of optimizing future surveys to maximize their scientific return. The Fisher information matrix is presented as a tool for forecasting parameter uncertainties given a proposed survey configuration. The authors then describe Bayesian optimal design, where the expected information gain (or equivalently, the expected reduction in posterior entropy) is maximized with respect to design variables such as sky coverage, redshift range, and instrumental noise. They illustrate how simulation‑based approaches can be used to explore the high‑dimensional design space and identify configurations that provide the most stringent constraints on key cosmological parameters.
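
A Fisher forecast of the kind described above can be sketched for a generic two-parameter model with independent Gaussian errors, where F_ij is the sum over data points of (dmu/dtheta_i)(dmu/dtheta_j) / sigma^2. The straight-line model, the two candidate designs, and the noise level below are placeholders rather than anything from the paper; a real survey forecast would substitute its own observable and covariance.

```python
import math


def fisher_matrix(model, theta, xs, sigma, eps=1e-5):
    """Fisher matrix for independent Gaussian errors of size sigma at points xs.

    Derivatives of the model with respect to each parameter are taken by
    central finite differences around the fiducial parameters theta.
    """
    k = len(theta)
    grads = []
    for x in xs:
        row = []
        for i in range(k):
            up, down = list(theta), list(theta)
            up[i] += eps
            down[i] -= eps
            row.append((model(x, up) - model(x, down)) / (2.0 * eps))
        grads.append(row)
    return [
        [sum(g[i] * g[j] for g in grads) / sigma ** 2 for j in range(k)]
        for i in range(k)
    ]


def marginalised_errors_2x2(fisher):
    """Forecast 1-sigma errors sqrt((F^-1)_ii) for a two-parameter Fisher matrix."""
    det = fisher[0][0] * fisher[1][1] - fisher[0][1] * fisher[1][0]
    return math.sqrt(fisher[1][1] / det), math.sqrt(fisher[0][0] / det)


def line(x, theta):
    """Placeholder observable: intercept theta[0] plus slope theta[1] times x."""
    return theta[0] + theta[1] * x


fiducial = [1.0, 2.0]
sigma = 0.1
narrow_design = [0.4 + 0.02 * i for i in range(11)]  # 11 points on [0.4, 0.6]
wide_design = [0.1 * i for i in range(11)]           # 11 points on [0.0, 1.0]

err_narrow = marginalised_errors_2x2(fisher_matrix(line, fiducial, narrow_design, sigma))
err_wide = marginalised_errors_2x2(fisher_matrix(line, fiducial, wide_design, sigma))
print(f"narrow design: sigma_intercept = {err_narrow[0]:.4f}, sigma_slope = {err_narrow[1]:.4f}")
print(f"wide design:   sigma_intercept = {err_wide[0]:.4f}, sigma_slope = {err_wide[1]:.4f}")
```

With the same number of points and the same noise, the wider design constrains the slope more tightly because it has a longer lever arm. A Bayesian optimal-design calculation would go further by averaging an information-gain measure over the range of plausible true parameters instead of relying on a single fiducial point.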

Finally, the paper outlines several challenges that remain for Bayesian cosmology. Prior choice can be subjective, and sensitivity analyses are recommended to assess robustness. High‑dimensional integrations remain computationally demanding, motivating the development of parallelized algorithms, GPU acceleration, and variational Bayesian approximations. Systematic errors—instrumental calibration, astrophysical foregrounds, and data correlation structures—must be modeled hierarchically, often using Gaussian‑process priors or nuisance‑parameter marginalization. The authors suggest future directions such as machine‑learning‑driven prior construction, automatic differentiation for gradient‑based samplers, and greater standardization of data products across international collaborations.

In conclusion, the authors reaffirm that Bayesian statistics provide a coherent, transparent, and quantitatively rigorous framework for both parameter estimation and model selection in modern cosmology. By explicitly accounting for uncertainties at every stage—from prior specification through data likelihood to experimental design—Bayesian methods are poised to remain central to the next generation of precision cosmology.
