Data Preservation and Long Term Analysis in High Energy Physics

High-energy physics data is a long-term investment and holds the potential for physics results beyond the lifetime of a collaboration. Many existing experiments are concluding their physics programs and looking at ways to preserve their data heritage. Preserving high-energy physics data and data analysis structures is a challenge, and past experience has shown that it can be difficult if adequate planning and resources are not provided. A study group has been formed to provide guidelines for such data preservation efforts in the future. Key areas to be investigated were identified at a workshop at DESY in January 2009, to be followed by a workshop at SLAC in May 2009. More information can be found at http://dphep.org.

💡 Research Summary

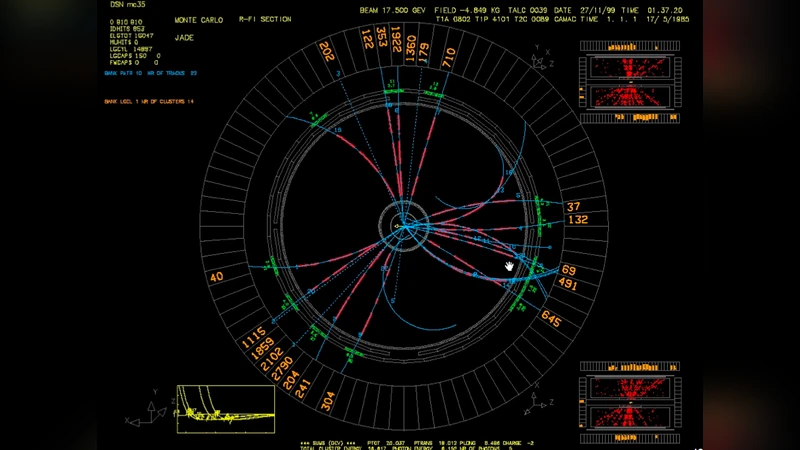

High‑energy physics (HEP) experiments represent multi‑decade investments in large‑scale accelerators, complex detectors, and massive data‑taking infrastructures. When an experiment ends its physics program, the raw and processed data, together with the software and documentation that enable their analysis, remain a valuable scientific asset. This paper examines the challenges of preserving such assets for long‑term use and reports on a study group formed to provide guidelines for preservation efforts. The key areas to be investigated were identified at a workshop at DESY (January 2009), with a follow‑up workshop at SLAC (May 2009).

The authors first motivate data preservation by citing historical examples where re‑analysis of legacy data with newer theoretical models or advanced statistical techniques yielded significant physics results. They argue that data should be treated as a reusable research object rather than a static archive.

Next, the scope of preservation is defined. It includes raw detector output, reconstructed event collections, derived analysis datasets, the full software stack (source code, libraries, compiler options), the execution environment, and comprehensive metadata describing detector conditions, calibration constants, and analysis workflows. Proper documentation links these components and ensures that future users can understand the provenance and meaning of the data.
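The scope described above can be pictured as a single manifest that ties one dataset to everything needed to reanalyse it. The following is a minimal sketch only: the class name `PreservationRecord`, its fields, and the example values are hypothetical illustrations and do not come from the paper or from any DPHEP schema.

```python
from dataclasses import dataclass, field, asdict
from typing import List
import json


@dataclass
class PreservationRecord:
    """Hypothetical manifest linking a dataset to its analysis context."""
    dataset_name: str
    data_tier: str                      # "raw", "reconstructed", or "derived"
    software_release: str               # tag in a version-controlled repository
    compiler_options: List[str] = field(default_factory=list)
    environment_image: str = ""         # identifier of the captured runtime environment
    calibration_tag: str = ""           # which calibration constants were applied
    documentation: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Return the names of fields left empty, i.e. gaps in the provenance chain."""
        return [name for name, value in asdict(self).items() if not value]


# Example values are invented for illustration.
record = PreservationRecord(
    dataset_name="example-run-0001",
    data_tier="reconstructed",
    software_release="v1.2.3",
    calibration_tag="calib-final",
)

print(json.dumps(asdict(record), indent=2))
print("Missing:", record.missing_fields())
```

Serialising the record to plain JSON keeps the manifest readable without the original software, and `missing_fields` gives a quick check of which parts of the provenance chain have not yet been captured.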

Technical challenges are then addressed. Long‑term readability of data formats demands either the adoption of open, self‑describing standards or a robust conversion pipeline to migrate experiment‑specific or framework‑specific formats (e.g., ROOT files) to future‑proof alternatives. Problems caused by software dependencies can be mitigated through virtualization and container technologies (Docker, Singularity), which capture the exact runtime environment. Storage of petabyte‑scale datasets requires hierarchical storage management (HSM) combined with cloud‑based tiering to balance cost and accessibility. Data integrity is maintained by regular checksum verification and by keeping multiple geographically dispersed replicas.
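The integrity check mentioned above (regular checksum verification across replicas) can be sketched as follows. This is an illustration under stated assumptions, not a method described in the paper: the function names `sha256_of` and `verify_replicas` are hypothetical, SHA‑256 is an arbitrary choice of hash, and replicas are modelled as local file paths rather than remote storage sites.

```python
import hashlib
import os
import tempfile


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_replicas(expected_digest, replica_paths):
    """Map each replica path to True if it exists and matches the expected digest."""
    return {
        path: os.path.isfile(path) and sha256_of(path) == expected_digest
        for path in replica_paths
    }


if __name__ == "__main__":
    # Demonstration with two temporary "replicas", one of them corrupted.
    with tempfile.TemporaryDirectory() as tmp:
        good = os.path.join(tmp, "replica_a.dat")
        bad = os.path.join(tmp, "replica_b.dat")
        with open(good, "wb") as handle:
            handle.write(b"event data")
        with open(bad, "wb") as handle:
            handle.write(b"event dat\x00")  # simulated bit rot

        reference = hashlib.sha256(b"event data").hexdigest()
        for path, ok in verify_replicas(reference, [good, bad]).items():
            print(os.path.basename(path), "OK" if ok else "CORRUPT")
```

Reading in fixed-size chunks keeps memory use constant for large files. In practice the reference digest would be recorded at ingest time and stored separately from the data it protects.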

From an organizational perspective, the paper recommends establishing dedicated data‑preservation teams within each experiment, securing sustainable funding beyond the active data‑taking phase, and defining clear responsibilities for custodianship. International coordination through the DPHEP initiative is emphasized to develop common standards, share best practices, and avoid duplicated effort. Training programs are advocated to transfer knowledge to new generations of analysts, and to embed preservation considerations early in the experiment design lifecycle.

The workshop outcomes are summarized: participants produced a draft policy framework covering preservation planning, governance, metadata catalog design, access control, and a roadmap for standardization. Consensus emerged that early integration of preservation activities—such as systematic metadata capture, version‑controlled software repositories, and reproducible analysis pipelines—greatly reduces later effort and risk of knowledge loss.

In conclusion, preserving HEP data and its analysis ecosystem is a multifaceted challenge that spans technical, managerial, and financial domains. By adopting community‑driven guidelines, leveraging modern IT solutions (open formats, containers, cloud storage), and fostering international collaboration, the field can ensure that valuable experimental data remain accessible and scientifically productive for decades to come, enabling future discoveries that build upon the legacy of past experiments.
