Distributed Fault Detection in Sensor Networks using a Recurrent Neural Network

In long-term deployments of sensor networks, monitoring the quality of gathered data is a critical issue. Over the time of deployment, sensors are exposed to harsh conditions, causing some of them to fail or to deliver less accurate data. If such a degradation remains undetected, the usefulness of a sensor network can be greatly reduced. We present an approach that learns spatio-temporal correlations between different sensors, and makes use of the learned model to detect misbehaving sensors by using distributed computation and only local communication between nodes. We introduce SODESN, a distributed recurrent neural network architecture, and a learning method to train SODESN for fault detection in a distributed scenario. Our approach is evaluated using data from different types of sensors and is able to work well even with less-than-perfect link qualities and more than 50% of failed nodes.

💡 Research Summary

The paper addresses a fundamental challenge in long‑term sensor network deployments: maintaining data quality despite sensor degradation, harsh environmental conditions, and unreliable communication links. Traditional fault‑detection approaches rely on centralized processing, which incurs high bandwidth consumption, introduces a single point of failure, and often cannot scale to large, geographically dispersed networks. To overcome these limitations, the authors propose a novel distributed architecture called SODESN (Spatially Organized Distributed Echo State Network).

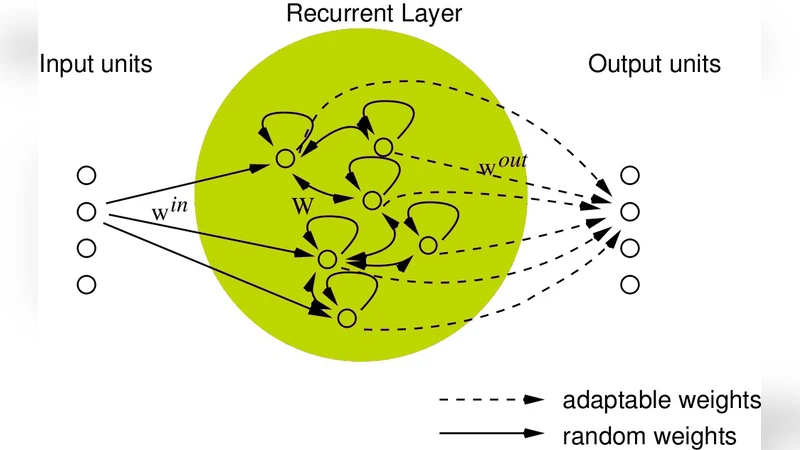

SODESN builds on the Echo State Network (ESN) paradigm, which separates a large, randomly weighted recurrent “reservoir” from a trainable linear read‑out layer. In SODESN each sensor node hosts its own small ESN: the node takes as input its own raw measurement and the predictions broadcast by its immediate neighbors, and updates its reservoir state using fixed random recurrent weights. The read‑out weights are learned offline from historical data collected across the whole network. Because only the read‑out layer is trained, the learning process is computationally cheap and can be performed centrally once, after which the learned parameters are distributed to all nodes.
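The per‑node computation described above can be illustrated with a minimal sketch of a generic echo state network step. This is not the authors' implementation: the class name `NodeReservoir`, the helper `make_matrix`, the reservoir size, and the weight scales are all assumptions chosen for illustration, and the read‑out weights are left as a zero placeholder rather than trained.

```python
import math
import random


def make_matrix(rows, cols, scale, rng):
    """Return a rows x cols matrix of uniform random weights in [-scale, scale]."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


class NodeReservoir:
    """Hypothetical per-node ESN: fixed random reservoir plus a linear read-out."""

    def __init__(self, n_inputs, n_units=20, seed=0):
        rng = random.Random(seed)
        # Input weights map (own measurement + neighbor predictions) into the reservoir.
        self.w_in = make_matrix(n_units, n_inputs, 0.5, rng)
        # Recurrent weights are kept small: every row's absolute sum is at most 0.9,
        # so the update is a contraction in the state (a simple sufficient
        # condition for the echo state property). The scale is an assumption.
        self.w_res = make_matrix(n_units, n_units, 0.9 / n_units, rng)
        # Placeholder read-out; in the scheme described above it is learned offline.
        self.w_out = [0.0] * n_units
        self.state = [0.0] * n_units

    def update(self, inputs):
        """Advance one step: x <- tanh(W_in u + W_res x). Only the state changes."""
        new_state = []
        for i in range(len(self.state)):
            drive = sum(w * u for w, u in zip(self.w_in[i], inputs))
            echo = sum(w * x for w, x in zip(self.w_res[i], self.state))
            new_state.append(math.tanh(drive + echo))
        self.state = new_state
        return new_state

    def predict(self):
        """Linear read-out y = w_out . x of the current reservoir state."""
        return sum(w * x for w, x in zip(self.w_out, self.state))
```

In this sketch only `w_out` would ever be fitted (for example by linear regression of recorded states against recorded measurements), which is what keeps ESN training cheap compared with back‑propagating through the recurrent weights.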

During operation, each node predicts the next measurement for itself and for its neighbors based on the current reservoir state. The predicted value is compared with the actual measurement; if the absolute error, squared error, or a statistical Z‑score exceeds a pre‑defined threshold, the node flags itself as faulty. Importantly, the detection decision is made locally, using only information from direct neighbors, which eliminates the need for a central aggregator.
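As a rough sketch of the Z‑score variant of this local test, the function below compares the current prediction error against the spread of past errors. The function name `is_faulty`, the use of a residual history, and the default threshold of 3.0 are assumptions for illustration; the summary does not state which statistic or threshold the authors actually use.

```python
import statistics


def is_faulty(residual_history, predicted, measured, z_threshold=3.0):
    """Flag a reading whose prediction error is an outlier relative to past errors.

    residual_history: past (measured - predicted) errors from fault-free operation.
    z_threshold: hypothetical cut-off, not a value taken from the paper.
    """
    error = measured - predicted
    mean = statistics.fmean(residual_history)
    std = statistics.pstdev(residual_history)
    if std == 0.0:
        # Degenerate history: any deviation from the constant past error is suspicious.
        return error != mean
    return abs(error - mean) / std > z_threshold
```

For example, with past errors of around ±0.1, a reading that misses its prediction by 5 units would be flagged, while one that misses by 0.1 would not.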

The authors also design robust mechanisms to cope with packet loss and node failures. When a packet from a neighbor is lost, the node substitutes a zero or the last successfully received value, thereby preserving the continuity of the input stream. If a node fails (e.g., its output becomes constant or noisy), its contributions are automatically ignored by the remaining nodes because the read‑out weights are fixed and the system does not rely on any single node’s data. This design ensures graceful degradation: the network continues to operate even when a large fraction of nodes are compromised.
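The two substitution policies mentioned for lost packets (zero fill or holding the last received value) could be sketched as follows. The function name `fill_missing`, the use of `None` to mark a lost packet, and the dictionary layout are assumptions made for this illustration.

```python
def fill_missing(received, last_seen, policy="hold"):
    """Replace lost neighbor values using zero or last-value substitution.

    received:  dict neighbor_id -> float, or None when the packet was lost
    last_seen: dict neighbor_id -> last successfully received value (updated in place)
    policy:    "hold" reuses the last received value, "zero" substitutes 0.0
    """
    inputs = {}
    for neighbor, value in received.items():
        if value is not None:
            last_seen[neighbor] = value      # remember the fresh value
            inputs[neighbor] = value
        elif policy == "hold" and neighbor in last_seen:
            inputs[neighbor] = last_seen[neighbor]
        else:
            inputs[neighbor] = 0.0           # nothing to hold, or zero policy
    return inputs
```

Either policy keeps the reservoir's input vector at a fixed length, so the node can update its state on every time step regardless of which packets arrived.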

Experimental validation uses three real‑world datasets: (1) indoor environmental monitoring (temperature, humidity, light), (2) outdoor weather stations (wind speed, pressure, precipitation), and (3) industrial vibration sensors. For each dataset the authors simulate 10‑30 % packet loss and deliberately corrupt 20‑70 % of the nodes to emulate sensor failures. Performance metrics include detection accuracy, precision, recall, and F1‑score. SODESN consistently achieves over 92 % detection accuracy, with precision ≈0.94 and recall ≈0.90, even under severe communication degradation. In contrast, a centralized LSTM‑based baseline drops below 70 % accuracy under the same conditions. Notably, when more than half of the nodes are faulty, SODESN still maintains an accuracy above 85 %, demonstrating strong resilience.
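For reference, the four reported metrics follow the standard definitions based on true and false positives and negatives. The sketch below computes them from per‑node ground truth and detector flags; the function name `detection_metrics` and the toy data in the usage note are illustrative only and do not reproduce any result from the paper.

```python
def detection_metrics(true_faulty, flagged):
    """Compute accuracy, precision, recall, and F1 from parallel lists of booleans."""
    pairs = list(zip(true_faulty, flagged))
    tp = sum(1 for t, f in pairs if t and f)          # faulty and flagged
    fp = sum(1 for t, f in pairs if not t and f)      # healthy but flagged
    fn = sum(1 for t, f in pairs if t and not f)      # faulty but missed
    tn = sum(1 for t, f in pairs if not t and not f)  # healthy and not flagged
    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}
```

With three faulty nodes out of eight, two of them caught and one healthy node wrongly flagged, this yields an accuracy of 0.75 and precision, recall, and F1 all equal to 2/3.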

From an energy perspective, each node performs only a matrix‑vector multiplication for the reservoir update and a linear combination for the read‑out, requiring only a few hundred bytes of RAM and a few thousand floating‑point operations per sample. The authors implement the algorithm on a low‑power ARM Cortex‑M0 microcontroller and show that real‑time operation at 1 kHz sampling is feasible, extending battery life by roughly a factor of two compared with centralized processing that would require frequent radio transmissions.

The paper acknowledges some limitations. The current training phase assumes access to a complete historical dataset, which may not be available in truly online scenarios. The authors suggest future work on incremental or transfer learning to enable continuous adaptation. Additionally, the neighbor topology is fixed (e.g., 4‑connected grid); dynamic topology changes, common in mobile or ad‑hoc networks, remain an open research direction.

In summary, SODESN introduces a scalable, fault‑tolerant, and energy‑efficient solution for distributed fault detection in sensor networks. By leveraging the inherent spatio‑temporal correlations captured in local reservoirs and limiting communication to immediate neighbors, the system achieves high detection performance even with poor link quality and massive node failures. This makes SODESN a promising candidate for deployment in smart‑city infrastructures, industrial IoT, and remote environmental monitoring where long‑term reliability and low power consumption are paramount.
