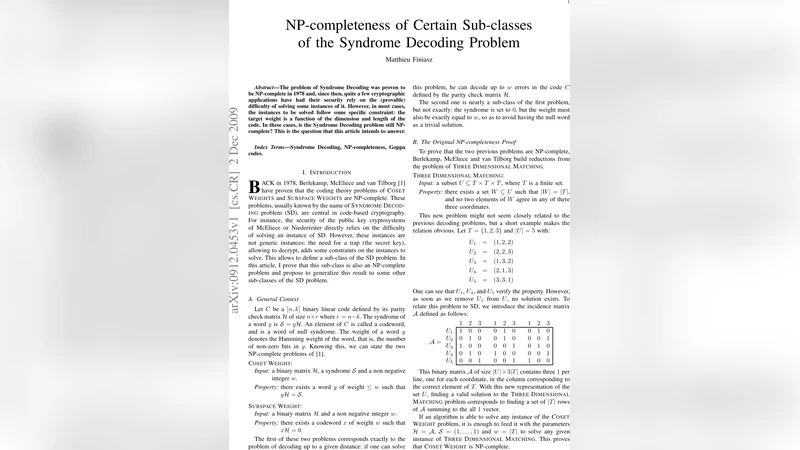

NP-completeness of Certain Sub-classes of the Syndrome Decoding Problem

The problem of Syndrome Decoding was proven to be NP-complete in 1978 and, since then, quite a few cryptographic applications have had their security rely on the (provable) difficulty of solving some instances of it. However, in most cases, the instances to be solved follow some specific constraint: the target weight is a function of the dimension and length of the code. In these cases, is the Syndrome Decoding problem still NP-complete? This is the question that this article intends to answer.

💡 Research Summary

The paper investigates whether the well‑known NP‑completeness of the Syndrome Decoding (SD) problem persists when the problem is restricted to instances that arise in practical code‑based cryptosystems, where the target Hamming weight is not an arbitrary integer but a function of the code length and dimension. The author first revisits the classic 1978 proof by Berlekamp, McEliece, and van Tilborg, which establishes NP‑completeness for two formulations: COSET‑WEIGHT (given a parity‑check matrix H, a syndrome S and an integer w, does there exist a vector y of weight ≤ w such that yH = S?) and SUBSPACE‑WEIGHT (given H and w, does there exist a non‑zero codeword of exact weight w?). Both formulations are central to decoding up to a given distance.

To show that these problems remain hard under additional constraints, the author uses a reduction from the well‑studied NP‑complete problem Three‑Dimensional Matching (TDM). An instance of TDM consists of a set U ⊆ T×T×T and asks whether one can select |T| triples that are pairwise disjoint in each coordinate. By constructing the incidence matrix A of U (a binary matrix with three 1’s per row, each placed in the column corresponding to the element of T appearing in that coordinate), the author shows that solving COSET‑WEIGHT on input H = A, syndrome S = (1,…,1) and w = |T| is equivalent to solving the original TDM instance. This reproduces the original NP‑completeness proof in a compact, intuitive way.

The paper then focuses on the specific constraints imposed by Goppa codes, which underlie the McEliece and Niederreiter cryptosystems. A binary Goppa code of length n = 2^m and dimension k = n – mt (t is the error‑correction capability) has a parity‑check matrix of size n × r with r = mt. Decoding such a code amounts to solving a COSET‑WEIGHT instance where the weight bound w equals t = n – k·log₂ n. The author defines a new problem, Goppa‑Parameterized Syndrome Decoding (GP‑SD), which asks exactly this question. By padding the incidence matrix A with zeros to match the dimensions of a Goppa parity‑check matrix, and by choosing a syndrome consisting of |T| ones followed by zeros, the reduction from TDM to GP‑SD becomes polynomial as long as |U| ≥ 8 (smaller instances can be solved by brute force). Consequently, GP‑SD is NP‑complete.

A second variant, Goppa‑Parameterized Subspace Weight (GP‑SW), asks for a codeword of the minimum distance of a Goppa code, i.e., a vector of weight 2t+1 whose syndrome is zero. The classic reduction from TDM to SUBSPACE‑WEIGHT uses a matrix B that is too large to keep the reduction polynomial under the Goppa constraints. The author therefore designs more compact matrices C₁ and C₂ (depending on the parity of |T|) that add only a single row and column to A, thereby forcing the required weight to be |T|+1. This construction yields a polynomial‑time reduction from TDM to SUBSPACE‑WEIGHT under the Goppa parameters, and by combining it with the previous padding technique the author shows that GP‑SW is also NP‑complete.

In the third part, the author abstracts the method into a generic framework for “parameterized” SD problems. Two new decision problems are introduced: Parameterized Syndrome Decoding (PSD) and Parameterized Subspace Weight (PSW), each defined by a function f(r,w) that determines the number of rows n of the parity‑check matrix as a polynomial function of the syndrome length r and the weight bound w. The paper states sufficient conditions (existence of auxiliary functions g, g′ and polynomials P, Q, together with a threshold λ) under which the reduction from TDM to PSD or PSW is polynomial, thus guaranteeing NP‑completeness. The Goppa case satisfies these conditions with concrete choices of g and λ.

The conclusion emphasizes that the newly defined Goppa‑specific variants of SD are provably NP‑complete, and that the compact reduction for SUBSPACE‑WEIGHT presented here can be adapted to other code families, provided their structural constraints meet the outlined criteria. This work therefore strengthens the theoretical foundations of code‑based cryptography: the hardness assumptions underlying schemes such as McEliece and Niederreiter remain valid even when the decoding problem is restricted to the exact parameters of the underlying code. The paper invites future researchers to apply the generic reduction framework to other families (e.g., LDPC, Reed‑Solomon) and to verify whether similar NP‑completeness results hold.

Comments & Academic Discussion

Loading comments...

Leave a Comment