Techniques for the generation of 3D Finite Element Meshes of human organs

This chapter introduces and discusses techniques for generating 3D finite element meshes of human organs, with a specific focus on computer‑assisted surgery.

💡 Research Summary

This chapter provides a comprehensive overview of the state‑of‑the‑art techniques for generating three‑dimensional finite‑element (FE) meshes of human organs, with a particular emphasis on applications in computer‑assisted surgery (CAS). The authors organize the workflow into a sequence of well‑defined stages: image acquisition, preprocessing, segmentation, surface reconstruction, volume meshing, mesh quality improvement, and clinical integration.

In the acquisition stage, high‑resolution computed tomography (CT) and magnetic resonance imaging (MRI) are identified as the primary data sources. The chapter discusses modality‑specific challenges: CT excels at depicting bone and other high‑contrast structures, whereas MRI provides superior soft‑tissue contrast but suffers from a lower signal‑to‑noise ratio and intensity non‑uniformities. Preprocessing techniques such as anisotropic diffusion filtering, histogram equalization, and metal‑artifact reduction are recommended to suppress noise and artifacts and to homogenize intensity distributions before segmentation.
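The edge‑preserving behaviour of anisotropic diffusion can be illustrated with a minimal sketch of the classic Perona‑Malik scheme. This is not taken from the chapter: the function name `perona_malik`, the parameters `kappa` and `step`, and the toy 2D image are assumptions chosen for illustration. A real pipeline would work on 3D volumes with an optimized imaging library.

```python
import math


def perona_malik(image, iterations=10, kappa=20.0, step=0.2):
    """Perona-Malik anisotropic diffusion on a 2D list-of-lists image.

    kappa sets the gradient magnitude treated as an edge (assumed value);
    step must stay <= 0.25 for stability with four neighbours.
    """
    rows, cols = len(image), len(image[0])
    current = [[float(v) for v in row] for row in image]
    for _ in range(iterations):
        updated = [row[:] for row in current]
        for r in range(rows):
            for c in range(cols):
                flux = 0.0
                for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < rows and 0 <= cc < cols:
                        grad = current[rr][cc] - current[r][c]
                        # Edge-stopping function: conductance drops towards
                        # zero across strong intensity jumps.
                        flux += math.exp(-((grad / kappa) ** 2)) * grad
                updated[r][c] = current[r][c] + step * flux
        current = updated
    return current


# Toy image: dark region (0) next to a bright region (100), plus one noisy pixel.
image = [[0.0] * 3 + [100.0] * 3 for _ in range(6)]
image[2][1] = 5.0
smoothed = perona_malik(image)
print("noisy pixel after filtering:", round(smoothed[2][1], 3))
print("bright side of the edge:", round(smoothed[2][3], 3))
```

In this toy case the small intensity fluctuation is diffused away, while the large jump between the two regions is left essentially untouched. This is the property that makes the filter attractive before segmentation.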

Segmentation is presented as the most critical bottleneck. Traditional thresholding and region‑growing methods are described, highlighting their dependence on user‑defined seed points and susceptibility to partial volume effects. The authors then shift focus to deep‑learning approaches, especially 3‑D U‑Net and V‑Net architectures, which have achieved Dice similarity coefficients exceeding 0.90 on publicly available organ‑segmentation benchmarks. Transfer learning and data‑augmentation strategies are emphasized as practical ways to overcome limited annotated datasets in clinical environments.
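To make the seed dependence of region growing and the meaning of the Dice similarity coefficient concrete, here is a small sketch. The helper names `region_grow` and `dice_coefficient`, the intensity tolerance, and the 3×4 toy image are illustrative assumptions, not material from the chapter.

```python
from collections import deque


def region_grow(image, seed, tolerance):
    """Grow a 4-connected region of pixels within `tolerance` of the seed value."""
    rows, cols = len(image), len(image[0])
    seed_value = image[seed[0]][seed[1]]
    mask = [[0] * cols for _ in range(rows)]
    mask[seed[0]][seed[1]] = 1
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            rr, cc = r + dr, c + dc
            if (0 <= rr < rows and 0 <= cc < cols and not mask[rr][cc]
                    and abs(image[rr][cc] - seed_value) <= tolerance):
                mask[rr][cc] = 1
                queue.append((rr, cc))
    return mask


def dice_coefficient(mask_a, mask_b):
    """Dice = 2 |A ∩ B| / (|A| + |B|) for two binary masks of equal shape."""
    flat_a = [v for row in mask_a for v in row]
    flat_b = [v for row in mask_b for v in row]
    overlap = sum(1 for a, b in zip(flat_a, flat_b) if a and b)
    total = sum(1 for a in flat_a if a) + sum(1 for b in flat_b if b)
    return 1.0 if total == 0 else 2.0 * overlap / total


# Toy slice: the organ occupies the three left columns. The value 50 mimics a
# partial-volume pixel that a fixed tolerance fails to capture.
image = [
    [10, 12, 11, 90],
    [11, 50, 12, 95],
    [10, 11, 13, 92],
]
truth = [[1, 1, 1, 0] for _ in range(3)]

dice_from_good_seed = dice_coefficient(region_grow(image, (0, 0), 5), truth)
dice_from_bad_seed = dice_coefficient(region_grow(image, (0, 3), 5), truth)
print(dice_from_good_seed, dice_from_bad_seed)
```

A seed placed inside the organ recovers most of it but misses the partial‑volume pixel, whereas a seed placed in the background yields no overlap at all.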

Following segmentation, surface reconstruction converts voxel‑wise labels into polygonal representations. The chapter compares three principal algorithms: Marching Cubes, Dual Contouring, and Level‑Set based methods. Marching Cubes offers speed and simplicity but often yields overly dense, jagged meshes. Dual Contouring preserves sharp features and volume fidelity, making it suitable for hard tissues such as bone. Level‑Set techniques handle complex topologies naturally but incur higher computational cost, limiting their use in real‑time pipelines. Post‑reconstruction smoothing (Laplacian, Taubin) and decimation are recommended to reduce noise while preserving anatomical fidelity.
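The difference between the two smoothing schemes named above can be sketched on a simple closed contour. Plain Laplacian smoothing moves each vertex towards the centroid of its neighbours and therefore shrinks the shape. Taubin smoothing alternates a positive step (`lam`) with a slightly larger negative step (`mu`) to counteract that shrinkage. The function names, the parameter values 0.5 and -0.53, and the noisy 2D ring used as test data are illustrative assumptions. The same update applies unchanged to 3D surface vertices given a neighbour list.

```python
import math


def laplacian_step(vertices, neighbors, factor):
    """Move every vertex by `factor` times its offset to the neighbour centroid."""
    moved = []
    for vertex, nbrs in zip(vertices, neighbors):
        dim = len(vertex)
        centroid = [sum(vertices[n][k] for n in nbrs) / len(nbrs) for k in range(dim)]
        moved.append(tuple(vertex[k] + factor * (centroid[k] - vertex[k])
                           for k in range(dim)))
    return moved


def taubin_smooth(vertices, neighbors, iterations=10, lam=0.5, mu=-0.53):
    """Taubin smoothing: a shrinking step (lam > 0), then an inflating step (mu < 0)."""
    for _ in range(iterations):
        vertices = laplacian_step(vertices, neighbors, lam)
        vertices = laplacian_step(vertices, neighbors, mu)
    return vertices


# Unit circle sampled at 32 points with alternating +/-0.1 radial noise.
n = 32
noisy = []
for j in range(n):
    angle = 2.0 * math.pi * j / n
    radius = 1.0 + (0.1 if j % 2 == 0 else -0.1)
    noisy.append((radius * math.cos(angle), radius * math.sin(angle)))
ring = [[(j - 1) % n, (j + 1) % n] for j in range(n)]

taubin = taubin_smooth(noisy, ring, iterations=10)
laplace = noisy
for _ in range(20):  # same number of passes as 10 Taubin iterations
    laplace = laplacian_step(laplace, ring, 0.5)

taubin_radii = [math.hypot(x, y) for x, y in taubin]
laplace_radii = [math.hypot(x, y) for x, y in laplace]
print("Taubin radius range:", min(taubin_radii), max(taubin_radii))
print("Laplacian radius range:", min(laplace_radii), max(laplace_radii))
```

Both variants remove the high‑frequency noise, but only the Taubin result stays close to the original radius. The plain Laplacian result is visibly smaller, which in an anatomical model would translate into a loss of organ volume.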

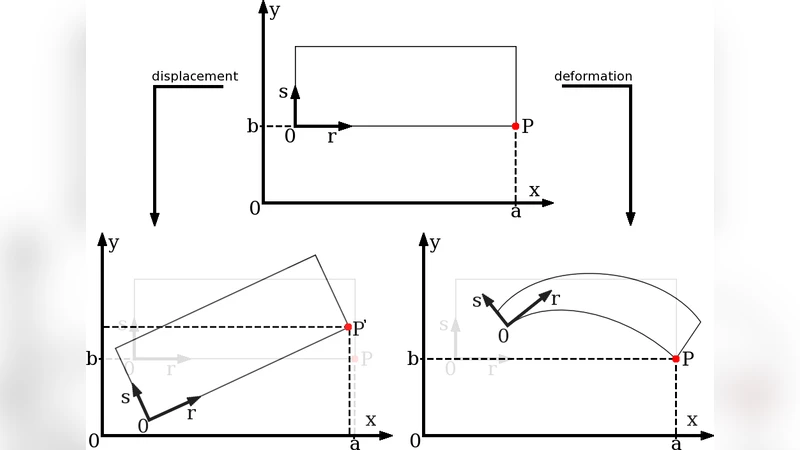

The transition from surface to volume mesh is explored through three dominant strategies: Delaunay tetrahedralization, Advancing Front, and Octree‑based adaptive meshing. Delaunay methods tend to produce well‑shaped elements, although in 3D they can still generate near‑degenerate sliver tetrahedra with poor dihedral angles, so a refinement or sliver‑removal pass is usually needed; they also require additional constraints to embed internal structures such as vasculature. Advancing Front excels at preserving boundary conformity but can become memory‑intensive for large datasets. Octree‑based approaches enable spatially adaptive resolution, allocating fine elements to regions of surgical interest (e.g., tumor margins) while coarsening elsewhere, thereby balancing accuracy and computational load.
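The idea of spatially adaptive resolution can be sketched with a small octree that subdivides only the cells touching a region of interest. This shows the refinement step alone, under stated assumptions. The names `refine_octree` and `intersects_sphere` are hypothetical, the region of interest is modelled as a sphere, and converting the resulting cells into conforming tetrahedra (which a real mesher must do) is left out.

```python
def refine_octree(origin, size, depth, max_depth, needs_refinement):
    """Return leaf cells (origin, size), refined where `needs_refinement` is true."""
    if depth == max_depth or not needs_refinement(origin, size):
        return [(origin, size)]
    half = size / 2.0
    leaves = []
    for dx in (0, 1):
        for dy in (0, 1):
            for dz in (0, 1):
                child = (origin[0] + dx * half,
                         origin[1] + dy * half,
                         origin[2] + dz * half)
                leaves.extend(refine_octree(child, half, depth + 1,
                                            max_depth, needs_refinement))
    return leaves


def intersects_sphere(center, radius):
    """Build a predicate that is true when a cubic cell overlaps the sphere."""
    def predicate(origin, size):
        dist_sq = 0.0
        for k in range(3):
            # Closest point of the axis-aligned cell to the sphere centre.
            nearest = min(max(center[k], origin[k]), origin[k] + size)
            dist_sq += (center[k] - nearest) ** 2
        return dist_sq <= radius ** 2
    return predicate


# 8x8x8 domain with a small region of interest (e.g. a tumor margin) near a corner.
leaves = refine_octree((0.0, 0.0, 0.0), 8.0, 0, 3,
                       intersects_sphere((1.0, 1.0, 1.0), 0.5))
sizes = sorted({size for _, size in leaves})
print(len(leaves), "leaf cells with edge lengths", sizes)
```

A uniform grid at the finest edge length would need 512 cells for this domain. The adaptive tree covers the same volume with far fewer cells, concentrating the small ones around the region of interest.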

Mesh quality assessment is treated as a mandatory validation step. Quantitative metrics—including element distortion, aspect ratio, Jacobian determinant, and condition number—are defined, and automated pipelines for detecting and repairing low‑quality elements are described. The authors discuss smoothing techniques (Laplacian, Taubin) and optimization‑based improvement (Centroidal Voronoi Tessellation, spring‑relaxation) that systematically enhance element shape without compromising geometric fidelity. The relationship between mesh resolution and biomechanical property assignment (elastic modulus, Poisson’s ratio) is examined, emphasizing that overly coarse meshes can produce significant errors in predicted organ deformation, while excessively fine meshes dramatically increase solve time.
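As a rough illustration of how such metrics are evaluated for a single linear tetrahedron, the sketch below computes the Jacobian determinant (six times the signed volume), an edge‑length aspect ratio, and a normalized shape measure that equals 1 for a regular tetrahedron and approaches 0 for degenerate ones (often referred to as a mean‑ratio measure). The function names and the pass/fail interpretation are assumptions. The chapter's exact metric definitions may differ.

```python
import itertools
import math


def jacobian_determinant(p0, p1, p2, p3):
    """Determinant of the edge matrix [p1-p0, p2-p0, p3-p0] (= 6 x signed volume)."""
    a = [p1[k] - p0[k] for k in range(3)]
    b = [p2[k] - p0[k] for k in range(3)]
    c = [p3[k] - p0[k] for k in range(3)]
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))


def edge_lengths(points):
    """Lengths of the six edges of a tetrahedron."""
    return [math.dist(p, q) for p, q in itertools.combinations(points, 2)]


def edge_aspect_ratio(points):
    """Longest edge divided by shortest edge (1 for a regular tetrahedron)."""
    lengths = edge_lengths(points)
    return max(lengths) / min(lengths)


def shape_quality(points):
    """12 (3V)^(2/3) / sum of squared edge lengths, in (0, 1] for valid elements."""
    volume = abs(jacobian_determinant(*points)) / 6.0
    squared = sum(length ** 2 for length in edge_lengths(points))
    return 12.0 * (3.0 * volume) ** (2.0 / 3.0) / squared


regular = [(1, 1, 1), (1, -1, -1), (-1, 1, -1), (-1, -1, 1)]
corner = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
sliver = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0.01)]
inverted = [corner[1], corner[0], corner[2], corner[3]]  # two vertices swapped

for name, tet in (("regular", regular), ("corner", corner), ("sliver", sliver)):
    print(name, round(shape_quality(tet), 4), round(edge_aspect_ratio(tet), 4))
print("inverted element detected:", jacobian_determinant(*inverted) < 0)
```

Note that the sliver has a modest edge aspect ratio yet an almost vanishing shape quality, which is why a single metric is rarely sufficient. A negative Jacobian determinant flags an inverted element that would invalidate the FE solution.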

Clinical integration is illustrated with case studies involving patient‑specific liver resection planning, cardiac valve repair simulation, and neurosurgical navigation. In each scenario, the generated FE mesh serves as the computational backbone for pre‑operative planning, intra‑operative deformation tracking, and navigation guidance. Real‑time registration techniques—Iterative Closest Point (ICP), feature‑based matching, and biomechanical model updating—are described, together with GPU‑accelerated solvers that achieve sub‑second update rates suitable for intra‑operative use. The chapter also outlines workflow considerations such as data transfer, software interoperability (e.g., DICOM to mesh pipelines), and regulatory aspects of deploying patient‑specific models in the operating room.
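The core loop of Iterative Closest Point registration (match each point to its nearest neighbour, solve for the best rigid transform, apply, repeat) can be sketched as follows. To stay dependency‑free the sketch works in 2D, where the optimal rotation has a closed form. 3D implementations typically solve the same least‑squares problem with an SVD. All function names and the toy landmark set are illustrative assumptions, and nothing here reflects the real‑time or GPU‑accelerated implementations described in the chapter.

```python
import math


def apply_rigid(points, theta, translation):
    """Rotate points by theta (radians) about the origin, then translate."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + translation[0], s * x + c * y + translation[1])
            for x, y in points]


def best_rigid_2d(source, target):
    """Least-squares rotation and translation mapping paired source -> target."""
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay, bx, by = px - sx, py - sy, qx - tx, qy - ty
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, (tx - (c * sx - s * sy), ty - (s * sx + c * sy))


def icp_2d(source, target, iterations=20):
    """Align `source` to `target` by alternating matching and rigid fitting."""
    current = list(source)
    for _ in range(iterations):
        matched = [min(target, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in current]
        theta, translation = best_rigid_2d(current, matched)
        current = apply_rigid(current, theta, translation)
    return current


# Asymmetric landmark set, and a copy displaced by a small rigid motion.
target = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (0.0, 1.0), (0.0, 2.0)]
source = apply_rigid(target, math.radians(10.0), (0.2, -0.1))
aligned = icp_2d(source, target)
residual = max(math.dist(p, q) for p, q in zip(aligned, target))
print("largest residual after ICP:", residual)
```

ICP only converges to the correct pose when the initial misalignment is small enough for the nearest‑neighbour matches to be mostly right, as in this example. That is one reason it is commonly combined with feature‑based initialization.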

Finally, the authors identify current limitations and future research directions. Key challenges include (1) achieving fully automated, high‑accuracy segmentation for organs with ambiguous boundaries, (2) managing the computational burden of high‑resolution meshes during real‑time simulation, and (3) ensuring robust model updating under noisy intra‑operative imaging. Prospective solutions involve hybrid frameworks that fuse deep‑learning segmentation with physics‑based refinement, adaptive mesh regeneration driven by deformation gradients, and cloud‑GPU infrastructures that provide scalable compute resources on demand. The chapter concludes with a roadmap that aligns methodological advances with clinical translation, aiming to make patient‑specific FE modeling a routine component of modern surgical practice.