Comments on "Particle Markov Chain Monte Carlo" by C. Andrieu, A. Doucet and R. Hollenstein

We merge in this note our two discussions about the Read Paper "Particle Markov chain Monte Carlo" (Andrieu, Doucet, and Holenstein, 2010) presented on October 16th 2009 at the Royal Statistical Society, appearing in the Journal of the Royal Statisti…

Authors: ** - C. Andrieu, A. Doucet, R. Hollenstein (원 논문) - 논평 저자: (논문에 명시되지 않음 – 논평은 원 논문에 대한 두 차례 토론을 통합) **

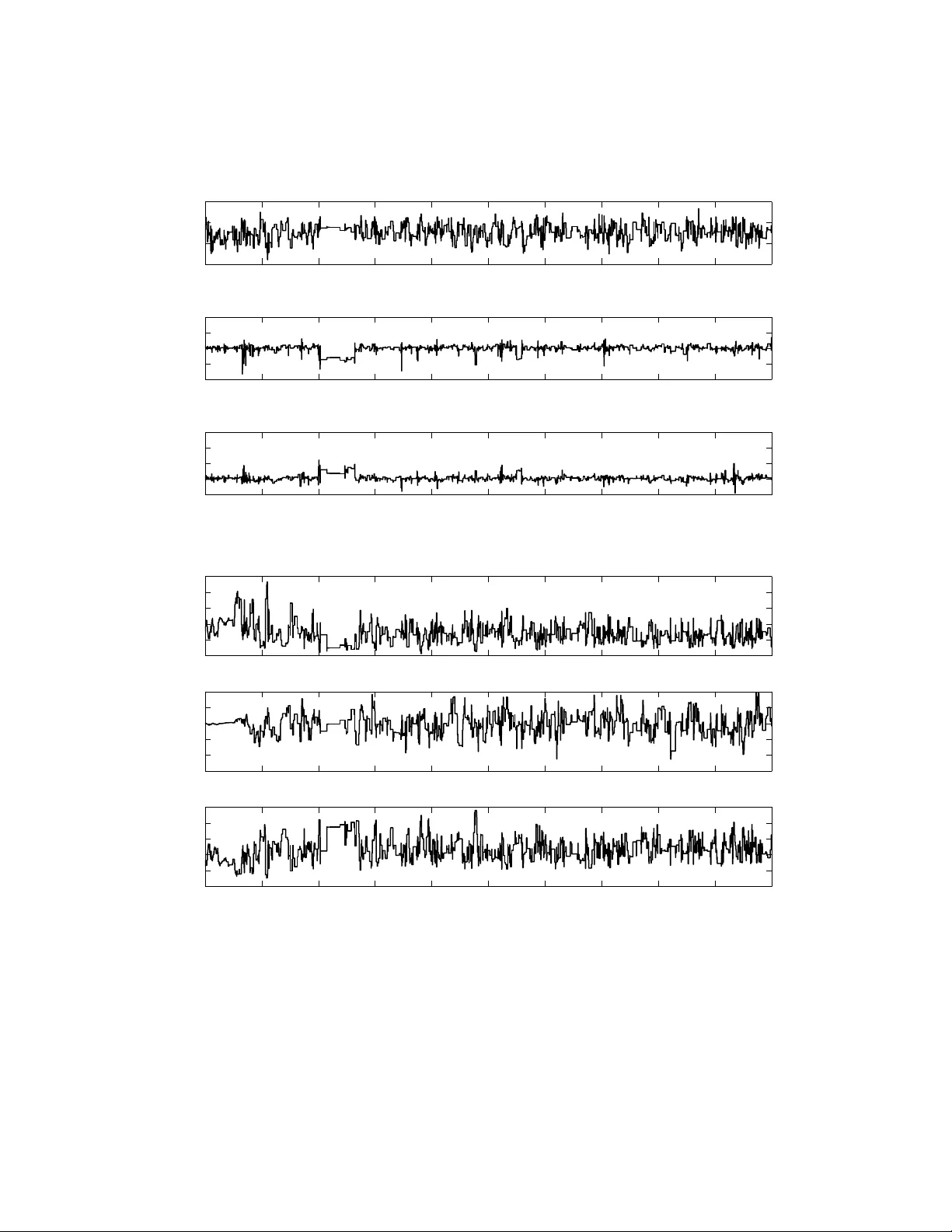

Commen ts on “ P article Mark o v Chain Mon te Carlo ” b y C. Andrieu, A. Doucet and R . Hollenstein J. Cornebise ∗ and G.W. P eters †‡ July 28, 2021 Abstract W e merge in this note our tw o discussions ab out the Read Paper “P article Mark o v c hain Mon te Carlo” ( Andrieu, Doucet, and Holenstein , 2010 ) presen ted on October 16 th 2009 at the Roy al Statistical So ciet y , app earing in the Journal of the Roy al Statistical So ciet y Series B. W e also presen t a more detailed v ersion of the ABC extension. 1 Intro duction The article Andrieu et al. ( 2010 ) is clearly going to ha v e significan t impact on scientific disciplines with a st rong in terface with computational statistics and non-linear state space mo dels. Our commen ts are based on practical exp erience with PMCMC implemen tation in latent pro cess multifactor SDE mo dels for commo dities ( P eters et al. , 2009 ), wireless comm unications ( Nev at et al. , 2009 ) and p opulation dynamics ( Hay es et al. , 2009 ), using Rao-Blac kwellised particle filters ( Doucet et al. , 2000 ) and adaptiv e MCMC ( Rob erts and Rosen thal , 2009 ). 2 Generic comments • F rom our implemen tations, ideal use cases consist of highly non-linear dynamic equa- tions for a small dimension d x of the state-space, large dimension d θ of the static parameter, an d poten tially large length T of the time series. In our cases d x w as 2 or 3, d θ up to 20, and T b et w een 100 and 400. ∗ Statistical and Applied Mathematical Sciences Institute, P .O. Box 14006, Research T riangle Park, NC 27709-4006, USA, jcornebise@samsi.info † Sc hool of Mathematics and Statistics, Universit y of New South W ales, Sydney , NSW, 2052, Australia, garethpeters@unsw.edu.au ‡ This material was based up on w ork supp orted b y the National Science F oundation under Agreemen t No. DMS-0635449. An y opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science F oundation. 1 • In PMH, non-adaptiv e MCMC prop osals for θ (e.g. tuned according to pre-simulati on c hains or burn-in iterations) w ould b e costly for large T , and requires to keep N fixed o v er the whole run of the Mark o v chain. Adaptiv e MCMC prop osals such as the Adaptiv e Metrop olis sampler ( Rob erts and Rosen thal , 2009 ), a v oid such issues and pro v ed particularly relev an t for large d θ and T , as can b e seen in Figure 2 . • The Particle Gibbs (PG) could p otentially stay frozen on a state x 1: T ( i ). Consider a state space mo del with state transition function almost linear in x n for some range of θ , from whic h y 1: T is considered to result, and strongly non-linear elsewhere. If the PG samples θ ( i ) in those regions of strong non-linearit y , the particle tree would lik ely coalesce on the tra jectory preserved b y the conditional SMC, leaving it with a high imp ortance w eigh t, main taining ( θ ( i + 1) , x 1: T ( i + 1)) = ( θ ( i ) , x 1: T ( i )) o v er sev eral iterations. Using PMH within PG w ould help escap e this region, esp ecially using PRC and adaptiv e SMC kernels, outlined in another commen t, to figh t the degeneracy of the filter and the high v ariance of ˆ p θ ( y 1: T ). 3 Adaptive Sequential Monte Ca rlo Our commen ts on adaptiv e SMC relate to Part icle marginal Metrop olis-Hastings (PMMH) whic h has acceptance probabilit y given in Equation (13) of the read pap er for prop osed state ( θ ∗ , X ∗ 1: T ), relying on the estimate ˆ p θ ∗ ( y 1: T ) = T Y n =1 1 N N X k =1 w n x ∗ ,k 1: n . Although a small N suffices to appro ximate the mo de of joint path space distribution, pro ducing a reasonable prop osal for x 1: T , it results in high v ariance estimates of ˆ p θ ∗ ( y 1: T ). W e study a p opulation dynamics example from ( Hay es et al. , 2009 , Mo del 3 excerpt), in volving a log-transformed theta-logistic state space model, see ( W ang , 2007 , Equation 3(a), 3(b)) for parameter settings. PMCMC p erformance dep ends on the trade-off b et ween degeneracy of the filter, N , and design of the SMC m utation kernel. Regarding the latter: • A Rao-Blac kwellised filter ( Doucet et al. , 2000 ) can impro v e acceptance rates, Nev at et al. (see 2009 ). • Adaptiv e mutation kernels, which in PMCMC, can b e considered as adaptive SMC prop osals, can reduce degeneracy on the path space, allo wing for higher dimensional state vect ors x n . Adaption can b e lo cal (within filter) or global (sampled Mark o v c hain history). Though curren tly particularly designed for ABC metho ds, the work of P eters et al. ( 2008 ) incorp orates in to the m utation kernel of SMC Samplers ( Del Moral et al. , 2006 ) the P artial Rejection Con trol (PRC) mec hanism of Liu ( 2001 ), which is also b eneficial for PMCMC. PRC adaption reduces degeneracy b y rejecting a particle m utation when its incremen tal imp ortance w eigh t is b elow a threshold c n . The PRC m utation kernel q ∗ θ ( x n | y n , x n − 1 ) = r ( c n , x n − 1 ) − 1 min 1 , W n − 1 ( x n − 1 ) w n ( x n − 1 , x n ) c n q θ ( x n | y n , x n − 1 ) , (1) 2 can also b e used in PMH, where q θ ( x n | y n , x n − 1 ) is the standard SMC prop osal, and r ( c n , x n − 1 ) = Z min 1 , W n − 1 ( x n − 1 ) w n ( x n − 1 , x n ) c n q θ ( x n | y n , x n − 1 ) dx n . (2) As presen ted in Peters et al. ( 2008 ), algorithmic c hoices for q ∗ θ ( x n | y n , x n − 1 ) can a v oid ev aluation of r ( c n , x n − 1 ). Cornebise ( 2009b ) extend this wo rk, dev eloping PRC for Auxiliary SMC samplers, also useful in PMH. Threshold c n can b e set adaptiv ely: lo cally either at each SMC mutatio n or Mark o v c hain iteration; or globally based on c hain acceptance rates. Additionally , c n can b e set adaptiv ely via quantile estimates of pre-PR C incremen tal weigh ts, see Peters et al. ( 2009 ). • Cornebise et al. ( 2008 ) state that adaptiv e SMC prop osals can b e designed by mini- mizing function-free risk theoretic criteria suc h as Kullbac k-Leibler div ergence b etw een a joint prop osal in a parametric family and a join t target. Cornebise ( 2009a , Chapter 5) and Cornebise et al. ( 2009 ) use a mixture of exp erts, adapting kernels of a mixture on distinct regions of the state-space separated b y a softmax partition. These results extend to PMCMC settings. 0 10 20 30 40 50 60 70 80 90 100 3.5 4 4.5 5 5.5 6 6.5 7 7.5 Population dynamics state space model with log transformed theta−logistic latent process. Generated latent state realisations Generated observations Figure 1: Sequence of sim ulated states and observ ations for the p opulation dy- namic log-transformed theta logistic model from W ang ( 2007 ), with static parame- ter θ = ( r , ζ , K ) under constraints K > 0, r < 2 . 69, ζ ∈ R . State transi- tion is f θ ( x n | x n − 1 ) = N x n ; x n − 1 + r 1 − (exp( x t − 1 ) /K ) ζ , 0 . 01 , and lo cal likelih o o d is g θ ( y n | x n ) = N ( y n ; x n , 0 . 04), for T = 100 timesteps. 3 0 1 2 3 4 5 6 7 8 9 10 x 10 4 4.4 4.5 4.6 4.7 x 2 Sample path for latent state value x 2 in the PMH via an SIR filter. Markov chain iteration i 0 1 2 3 4 5 6 7 8 9 10 x 10 4 6.7 6.75 6.8 6.85 6.9 x 37 Sample path for latent state value x 37 in the PMH via an SIR filter. Markov chain iteration i 0 1 2 3 4 5 6 7 8 9 10 x 10 4 6.75 6.8 6.85 6.9 6.95 x 93 Sample path for latent state value x 93 in the PMH via an SIR filter. Markov chain iteration i 0 1 2 3 4 5 6 7 8 9 10 x 10 4 0.8 1 1.2 1.4 1.6 Sample path for parameter r in the PMH via an adaptive Metropolis proposal. Markov chain iteration i r 0 1 2 3 4 5 6 7 8 9 10 x 10 4 885 890 895 900 905 910 Sample path for parameter K in the PMH via an adaptive Metropolis proposal. Markov chain iteration i K 0 1 2 3 4 5 6 7 8 9 10 x 10 4 0.2 0.4 0.6 0.8 1 1.2 Sample path for parameter ζ in the PMH via an adaptive Metropolis proposal. Markov chain iteration i ζ Figure 2: P ath of three sampled laten t states x 2 , x 37 , x 93 , and of the sampled parameters θ = ( r, ζ , L ), ov er 100 , 000 PMH iterations based on N = 200 particles using a simple SIR filter – the one dimensional state did not call for Rao-Blackw ellisation. Note also the effect the Adaptiv e MCMC prop osal for θ , set-up to start at iteration 5 , 000, particularly visible on the mixing of parameter K . The most noticeable prop ert y of the algorithm is the remark able mixing of the chai n, in spite of the high total dimension of the sampled state: each iteration in v olves a prop osal of ( X 1: T , θ ) of dimension 103. 4 0 10 20 30 40 50 60 70 80 90 100 −80 −60 −40 −20 0 20 40 Initialised PMH state for x 1:T true path x 1:T Initialised Path x 1:T 0 10 20 30 40 50 60 70 80 90 100 −80 −60 −40 −20 0 20 40 Average poster mean path estimate for X 1:T , PMH Markov chain iteration i=10, N=200 particles true path x 1:T Poster Mean Path estimate of E(X 1:T |y 1:T ) 0 10 20 30 40 50 60 70 80 90 100 3 4 5 6 7 Average poster mean path estimate for X 1:T , PMH Markov chain iteration i=20,000, N=200 particles true path x 1:T Poster Mean Path estimate of E(X 1:T |y 1:T ) Figure 3: Con vergence of the distribution of the path of laten t states x 1: T . Note the change of v ertical scale. Initializing PMH on a very unlik ely initial path do es not preven t the MMSE estimate of the latent states con v erging: as few as 10 PMH iterations already b egins to concen trate the sampled paths around the true path – assumed here to b e close to the mo de of the p osterior distribution thanks to the small observ ation noise –, with v ery satisfactory results after 20 , 000 iterations. 5 4 App ro ximate Ba y esian Computation and PMCMC F or in tractable joint likelihoo d p θ ( y 1: T | x 1: T ), we could design a SMC-ABC algorithm (see e.g. Peters et al. , 2008 ; Ratmann , 2010 , Chapter 1) for a fixed ABC tolerance , using the appro ximations ˆ p AB C θ ( y 1: T ) := 1 N N X k =1 1 S P S s =1 I ρ ( y k 1 ( s ) , y 1 ) < µ θ ( x k 1 ) q θ ( x k 1 | y 1 ) × T Y n =2 1 N N X k =1 1 S P S s =1 I ρ ( y k n ( s ) , y n ) < f θ ( x k n | x A k n − 1 n − 1 ) q θ ( x k n | y n , x A k n − 1 n − 1 ) or ˆ p AB C θ ( y 1: T ) := 1 N N X k =1 1 S P S s =1 N y k 1 ( s ); y 1 , 2 µ θ ( x k 1 ) q θ ( x k 1 | y 1 ) × T Y n =2 1 N N X k =1 1 S P S s =1 N y k n ( s ); y n , 2 f θ ( x k n | x A k n − 1 n − 1 ) q θ ( x k n | y n , x A k n − 1 n − 1 ) with ρ a distance on the observ ation space and y k n ( s ) ∼ g θ ( ·| x k n ) simulated observ ations. Ad- ditional degeneracy on the path space induced by ABC appro ximation should b e con trolled, e.g. with PR C ( P eters et al. , 2008 ), see Equation ( 1 ). More details on this algorithm are a v ailable in App endix A , whic h is not con tained in our commen t to JRSSB due to space restrictions. A Algo rithmic details of ABC filtering within PMCMC Here we expand on the commen t w e made ab ov e in whic h w e appro ximated the lo cal like- liho o d g θ ( y n | x n ) of the SMC-based filtering part of the PMCMC algorithm. b y the ABC appro ximation g AB C θ ( y n | x n , y n (1 : S ) , ) := 1 S S X s =1 π θ ( y n ( s ) | x n , y n , ) (3) where p ossible c hoices for π θ are π I θ ( y n ( s ) | x n , y n , ) := I ( ρ ( y n ( s ) , y n ) < ) or π N θ ( y n ( s ) | x n , y n , ) := N y n ( s ); y n , 2 with ρ a distance on the observ ation space and y n ( s ) ∼ g θ ( ·| x n ) sim ulated observ ations – assumed here to b e univ ariate for sak e of brevity , but generalisation to multiv ariate setting and summary statistics is straightfor w ard. W e note it is critical in the filtering context to ensure that the particle system under appro ximation do es not collapse in to uniformly n ull incremen tal w eigh ts w n X k 1: n = 0 whic h 6 Algorithm 1 SMC-ABC-PRC filtering algorithm targeting p θ ( x 1: T | y 1: T ) as required in Step 2(b) of the PMMH of ( Andrieu et al. , 2010 , Section 2.4.2). Replaces the SMC algorithm pre- sen ted in ( Andrieu et al. , 2010 , Section 2.2.1). Appro ximation g AB C θ is defined in Equation ( 3 ) and function r in Equation ( 2 ). Step 1: Initialize and c 1 Step 2: A t time n = 1, (a) for k = 1 , . . . , N (i) sample X k 1 ∼ q θ ( ·| y 1 ) (ii) sample Y k 1 ( s ) ∼ g θ ( ·| X k 1 ) for s = 1 , . . . , S (iii) compute the incremental weig h t ˜ w 1 ( X k 1 ) := µ θ ( X k 1 ) g AB C θ ( y 1 | X k 1 , Y k 1 (1 : S ) , ) q θ ( X k 1 | y 1 ) , (iv) with probabilit y 1 − p k 1 = 1 − min { 1 , ˜ w 1 ( X k 1 ) /c 1 } , reject X k 1 and go to (i) (v) otherwise, accept X k 1 and set w 1 ( X k 1 ) = ˜ w 1 ( X k 1 ) r ( c 1 ) /p k 1 (b) normalise the weigh ts W k 1 := w 1 ( X k 1 ) / P N m =1 w 1 ( X m 1 ). Step 3: A t times n = 2 , . . . , T , (a) p ossibly adapt c n online (b) for k = 1 , . . . , N (i) sample A k n − 1 ∼ F ( ·| W n − 1 ), (ii) sample X k n ∼ q θ ( ·| y n , X A k n − 1 n − 1 ) and set X k 1: n = ( X A k n − 1 n − 1 , X k n ), and (iii) sample Y k n ( s ) ∼ g θ ( ·| X k n ) for s = 1 , . . . , S (iv) compute the incremental weigh t ˜ w n ( X k 1: n ) := f θ ( X k n | X A k n − 1 n − 1 ) g AB C θ ( y n | X k n , Y k n (1 : S ) , ) q θ ( X k n | y n , X A k n − 1 n − 1 ) , (v) with probability 1 − p k n = 1 − min { 1 , ˜ w n ( X k 1: n ) /c n } , reject X k n and go to (ii) (vi) otherwise, accept X k n and set w n ( X k 1: n ) = ˜ w n ( X k 1: n ) r ( c n , X A k n − 1 n − 1 ) /p k n (c) normalise the weigh ts W k n := w n ( X k 1: n ) / P N m =1 w n ( X m 1: n ). 7 ma y o ccur for π I θ at any stage of the filtering during eac h PMCMC iteration, esp ecially for small tollerances . The PRC m utation kernel q ∗ θ ( x n | y n , x n − 1 ) defined in Equation ( 1 ) is critical to ov ercome b oth this collapse and the additional degeneracy on the path space in troduced by the ABC appro ximation. The algorithm presen ted in McKinley et al. ( 2009 , Sections 3.4 and 3.5.1) is a sp ecial case of the SMC samplers PRC-ABC algorithm of P eters et al. ( 2008 ) in whic h the PRC rejection threshold c n = 0, the mutation k ernel is global and resampling is p erformed at each stage of the filter, which av oids the computation of the normalizing constant r ( c n , x n − 1 ) defined in Equation ( 2 ). W e further note that the w ork of Cornebise ( 2009a ) casts the SMC sampler PR C algorithm ( P eters et al. , 2008 ) with rejection of the ancestor index A k n − 1 – here in Step 3.(a).(v) – in to an Auxiliary SMC sampler framew ork. The com bination of these tw o concepts recov ers a generalized v ersion of McKinley et al. ( 2009 ), see Algorithm 1 , whic h has adv ant age in the PMCMC setting of allo wing for adaptation of the threshold c n . References Andrieu, C., A. Doucet, and R. Holenstein (2010). Particle Marko v c hain Mon te Carlo metho ds. J. R. Statis. So c. B 72 (2), 1–33. Cornebise, J. (2009a). A daptive Se quential Monte Carlo Metho ds . Ph. D. thesis, Univ ersit y Pierre and Marie Curie – Paris 6. Cornebise, J. (2009b). Auxiliary SMC samplers with applications to PR C and ABC. W orking pap er, Stastistical and Applied Mathematical Sciences Institute. Cornebise, J., E. Moulines, and J. Olsson (2008). Adaptiv e methods for sequential imp ortance sampling with appli cation to state space mo dels. Statistics and Computing 18 (4), 461–480. Cornebise, J., E. Moulines, and J. Olsson (2009). Adaptiv e sequen tial Monte Carlo b y means of mixture of exp erts. W orking pap er, T elecom ParisT ec h. Del Moral, P ., A. Doucet, and A. Jasra (2006). Sequential Mon te Carlo samplers. Journal of the R oyal Statistic al So ciety: Series B (Statistic al Metho dolo gy) 68 (3), 411–436. Doucet, A., N. de F reitas, K. Murph y , and S. Russell (2000). Rao-Blac kw ellised particle filtering for dynamic Ba y esian net w orks. In Pr o c e e dings of the Sixte enth Confer enc e on Unc ertainty in Artificial Intel ligenc e , pp. 176–183. Ha y es, K., G. Hosac k, and G. P eters (2009). Searchin g for the all ee effect in laten t state space mo dels via adaptive particle Mark o v chain Mon te Carlo. W orking pap er, Departmen t of Mathematics and Statistics, Universi t y of New South W ales. Liu, J. (2001). Monte Carlo Str ate gies in Scientific Computing . New Y ork: Springer. McKinley , T., A. Co ok, and R. Deardon (2009). Inference in epidemic mo dels without lik eliho o ds. The International Journal of Biostatistics 5 (1), 24. 8 Nev at, I., G. P eters, and A. Doucet (2009). Channel tracking for relay net w orks via adaptive particle MCMC. W orking pap er, Univ ersit y of New South W ales. P eters, G., M. Briers, P . Shev c henk o, and A. Doucet (2009). Online calibration and filtering for multi factor commo dit y mo dels with seasonality: incorporating futures and options con tracts. W orking pap er, Department of Mathematics and Statistics, Univ ersit y of New South W ales. P eters, G., Y. F an, and S. Sisson (2008). On sequential Mon te Carlo, partial rejection con trol and approximate Ba yesian computation. T echnical rep ort, Departmen t of Mathematics and Statistics, Universit y of New South W ales. P eters, G., Y. F an, and S. Sisson (2009). Lik elihoo d-free Ba yesian inference for α -stable mo dels. T ec hnical rep ort, Departmen t of Mathematics and Statistics, Universit y of New South W ales. Ratmann, O. (2010). Appr oximate Bayesian Computation under mo del unc ertainty, with an applic ation to sto chastic pr o c esses of network evolution . Ph. D. Thesis, to app ear, Imp erial College. Rob erts, G. and J. Rosenthal (2009). Examples of adaptiv e MCMC. Journal of Computa- tional and Gr aphic al Statistics 18 (2), 349–367. W ang, G. (2007). On the laten t state estimation of nonlinear p opulation dynamics using ba y esian and non-Bay esian state-space mo dels. Ec olo gic al Mo del ling 200 (3-4), 521–528. 9

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment