Zipf’s law in the popularity distribution of chess openings

We perform a quantitative analysis of extensive chess databases and show that the frequencies of opening moves are distributed according to a power-law with an exponent that increases linearly with the game depth, whereas the pooled distribution of all opening weights follows Zipf’s law with universal exponent. We propose a simple stochastic process that is able to capture the observed playing statistics and show that the Zipf law arises from the self-similar nature of the game tree of chess. Thus, in the case of hierarchical fragmentation the scaling is truly universal and independent of a particular generating mechanism. Our findings are of relevance in general processes with composite decisions.

💡 Research Summary

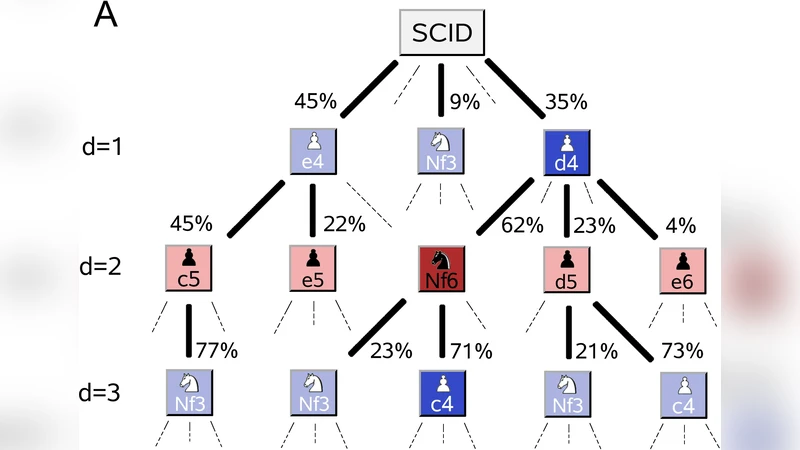

The paper investigates whether the popularity of chess openings follows Zipf’s law and, if so, what mechanism generates this scaling. Using an extensive corpus of over 50 million games drawn from both professional tournament archives and online platforms, the authors construct a game tree in which each node corresponds to a specific opening sequence up to a given ply (move depth). For every depth $d$ they count how many times each distinct sequence appears, which gives a frequency distribution $P_d(w)$, where $w$ is the weight (number of occurrences) of a node.
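The counting step described above can be sketched in a few lines. This is a minimal illustration, assuming each game is available as a list of move strings; the function names `opening_weights` and `weight_histogram` and the four toy games are hypothetical and are not taken from the paper.

```python
from collections import Counter

def opening_weights(games, depth):
    """Count how often each opening prefix of `depth` plies occurs."""
    counts = Counter()
    for moves in games:
        # Games shorter than `depth` plies have no node at this depth.
        if len(moves) >= depth:
            counts[tuple(moves[:depth])] += 1
    return counts

def weight_histogram(counts):
    """Map each weight w to the number of distinct prefixes with that weight."""
    return Counter(counts.values())

# Toy input for illustration only (not real database games).
games = [
    ["e4", "e5", "Nf3"],
    ["e4", "e5", "Nc3"],
    ["e4", "c5", "Nf3"],
    ["d4", "d5", "c4"],
]
w1 = opening_weights(games, 1)   # weights of nodes at depth 1
w2 = opening_weights(games, 2)   # weights of nodes at depth 2
hist2 = weight_histogram(w2)     # unnormalized distribution of weights at depth 2
```

Dividing the histogram counts by the number of nodes at that depth would give a normalized estimate of the weight distribution at depth 2.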

Empirical analysis reveals two regularities. First, at any fixed depth the distribution follows a power law, $P_d(w) \propto w^{-\alpha(d)}$. The exponent $\alpha(d)$ is not constant; it grows linearly with depth, $\alpha(d) \approx a \cdot d + b$. In practical terms, the first move ($d = 1$) shows a relatively shallow exponent ($\approx 0.8$), indicating that a few openings dominate play, whereas by move 5 the exponent rises to about 2.5, reflecting a rapid diversification of choices as the game proceeds. Second, when the frequencies from all depths are pooled, the combined distribution collapses onto a single Zipf law with exponent very close to 1: $P(w) \propto w^{-1}$. This universality persists despite the depth-dependent variation of $\alpha(d)$, suggesting that the overall scaling is a structural property of the entire decision tree rather than an artifact of any particular stage of play.
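One simple way to check a linear trend of the exponent with depth is to fit a straight line to each weight histogram on log-log axes, then fit a second line to the resulting exponents as a function of depth. The sketch below does this with ordinary least squares. It is an assumption that this resembles the fitting procedure used in the paper; least squares on log-log histograms is known to be biased on noisy data, and the histograms here are synthetic, constructed to be exactly power-law. The helper names `fit_line` and `power_law_exponent` are hypothetical.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def power_law_exponent(hist):
    """Estimate alpha in P(w) ~ w**(-alpha) from a {weight: count} histogram."""
    points = [(math.log(w), math.log(c)) for w, c in hist.items() if w > 0 and c > 0]
    slope, _ = fit_line([x for x, _ in points], [y for _, y in points])
    return -slope

# Synthetic, exactly power-law histograms with alpha(d) = 0.4 * d + 0.5.
# These values are made up for the demonstration and are NOT from the paper.
synthetic = {d: {w: 1000.0 * w ** -(0.4 * d + 0.5) for w in (1, 2, 4, 8, 16)}
             for d in (1, 2, 3, 4)}
alphas = {d: power_law_exponent(h) for d, h in synthetic.items()}

# Fit alpha(d) ~ a * d + b across depths.
a, b = fit_line(list(alphas), list(alphas.values()))
```

On real, noisy counts a maximum-likelihood estimator or logarithmic binning would usually be preferable to a raw log-log regression.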

To explain these observations the authors propose a minimalist stochastic model they call “hierarchical fragmentation.” The model treats the opening tree as a recursive binary splitting process: starting from the root, each node splits into two children with probability $p$, and each child then independently undergoes the same splitting with the same probability at every subsequent level. The probability that a given branch is selected is proportional to the relative weight of the subtree it belongs to, which yields a Markovian reinforcement mechanism. Analytically, the model predicts that at depth $d$ the weight distribution obeys $P_d(w) \propto w^{-\alpha(d)}$ with $\alpha(d) = d \cdot \log(1/p) + c$, i.e., a linear increase with depth, consistent with the empirical trend. Moreover, summing over all depths eliminates the depth-dependent term and produces a pure Zipf law, $P(w) \propto w^{-1}$, independent of the specific value of $p$.
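The splitting process described above can be simulated with a short sketch. The summary does not say how a parent's weight is divided between its two children, so the uniform split fraction used here is purely an assumption, as are the function name `fragment` and the parameter values. The code is meant to illustrate the mechanics of hierarchical fragmentation, not to reproduce the authors' model or results.

```python
import random

def fragment(root_weight, depth, p, rng):
    """Return one list of node weights per level, from level 0 (root) to `depth`."""
    levels = [[float(root_weight)]]
    for _ in range(depth):
        children = []
        for w in levels[-1]:
            if rng.random() < p:
                # Assumed rule: split the weight by a uniform random fraction.
                r = rng.random()
                children.extend([r * w, (1.0 - r) * w])
            else:
                # No split: the node passes its full weight to a single child.
                children.append(w)
        levels.append(children)
    return levels

rng = random.Random(0)  # fixed seed so the run is repeatable
levels = fragment(root_weight=1_000_000, depth=10, p=0.8, rng=rng)

# Pool the weights from all levels below the root, as in the pooled distribution.
pooled = [w for level in levels[1:] for w in level]
```

Because every split conserves weight, each level sums to the root weight. The per-level lists and the pooled list can then be histogrammed to compare depth-specific and pooled scaling.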

Simulation results confirm that the model reproduces both the depth‑specific power‑law exponents and the global Zipf tail observed in the real data. Consequently, the authors argue that the Zipf scaling in chess openings is not a consequence of particular strategic preferences, cultural transmission, or learning dynamics, but rather a generic outcome of the self‑similar, hierarchical nature of the decision tree.

The paper concludes by highlighting the broader relevance of hierarchical fragmentation. Similar tree‑like decision structures appear in language (word frequencies), web navigation (page visits), biological taxonomy, and many other complex systems where composite choices are made at successive levels. In all such contexts, the same mechanism can generate a universal Zipf law, underscoring the idea that scaling laws may often arise from structural self‑similarity rather than from domain‑specific processes.
