The Dark Energy Survey Data Management System

The Dark Energy Survey collaboration will study cosmic acceleration with a 5000 deg² grizY survey in the southern sky over 525 nights from 2011-2016. The DES data management (DESDM) system will be used to process and archive these data and the resulting science-ready data products. The DESDM system consists of an integrated archive, a processing framework, an ensemble of astronomy codes and a data access framework. We are developing the DESDM system for operation in the high performance computing (HPC) environments at NCSA and Fermilab. Operating the DESDM system in an HPC environment offers both speed and flexibility. We will employ it for our regular nightly processing needs, and for more compute-intensive tasks such as large scale image coaddition campaigns, extraction of weak lensing shear from the full survey dataset, and massive seasonal reprocessing of the DES data. Data products will be available to the Collaboration and later to the public through a virtual-observatory compatible web portal. Our approach leverages investments in publicly available HPC systems, greatly reducing hardware and maintenance costs to the project, which must deploy and maintain only the storage, database platforms and orchestration and web portal nodes that are specific to DESDM. In Fall 2007, we tested the current DESDM system on both simulated and real survey data. We used TeraGrid to process 10 simulated DES nights (3 TB of raw data), ingesting and calibrating approximately 250 million objects into the DES Archive database. We also used DESDM to process and calibrate over 50 nights of survey data acquired with the Mosaic2 camera. Comparison to truth tables in the case of the simulated data and internal cross-checks in the case of the real data indicate that astrometric and photometric data quality is excellent.

💡 Research Summary

The Dark Energy Survey (DES) is a five‑year imaging program that will map 5,000 square degrees of the southern sky in the grizY bands, collecting data over 525 nights between 2011 and 2016. To turn the raw images into science‑ready products and to make those products available to the collaboration—and eventually to the public—the project has built the DES Data Management system (DESDM). DESDM is a fully integrated suite that combines a central archive, a processing framework, a collection of astronomy codes, and a data‑access layer. The system is designed to run on high‑performance computing (HPC) resources at the National Center for Supercomputing Applications (NCSA) and at Fermilab, leveraging existing large‑scale clusters rather than purchasing dedicated hardware for the bulk of the computation.

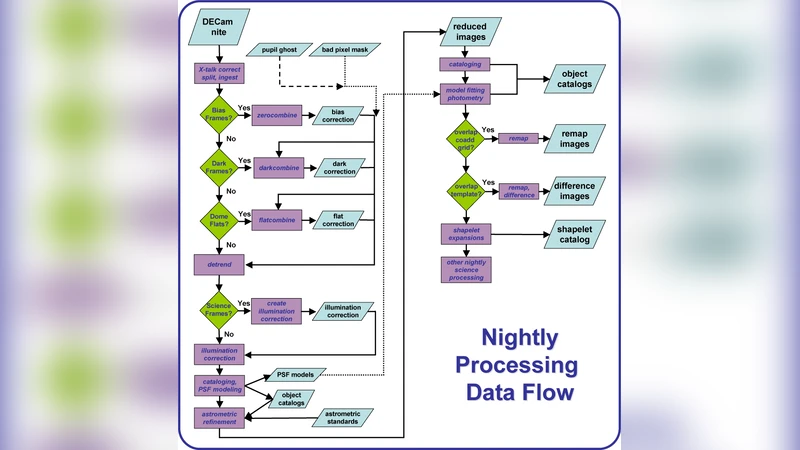

The architecture rests on four pillars. First, the Integrated Archive stores raw exposures, calibration files, metadata, and derived catalogs in a combination of a relational database (PostgreSQL) and a high‑throughput parallel file system. All objects are versioned and checksum‑verified to guarantee data integrity. Second, the Processing Framework orchestrates the workflow using open‑source tools such as Pegasus and Condor. It automatically resolves task dependencies, schedules jobs on thousands of compute cores, and supports multiple processing modes: nightly reduction, large‑scale coaddition campaigns, weak‑lensing shear extraction, and full‑survey re‑processing. Third, the Astronomy Code Ensemble wraps widely used community software (SExtractor, SCAMP, SWarp, PSFEx) with DES‑specific parameter sets and additional algorithms, for example tile‑based coaddition to limit memory usage and a multi‑epoch PSF fitting routine for weak‑lensing analyses. Fourth, the Data Access Framework provides a Virtual Observatory (VO)‑compliant web portal. Users can query catalogs via TAP, retrieve image cutouts through SIAP (the VO Simple Image Access Protocol), and download data using standard protocols, with authentication layers that differentiate collaboration members from public users.
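The dependency resolution attributed to the Processing Framework can be illustrated with a small, generic sketch. It is not DESDM code and does not use Pegasus or Condor: the stage names and their dependencies below are hypothetical, and the standard-library `graphlib` module (Python 3.9+) stands in for a real workflow engine. The idea shown is only that stages are grouped into batches whose members have no unmet dependencies and could therefore run in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline stages (not taken from DESDM). Each stage maps to
# the list of stages that must finish before it can start.
STAGES = {
    "detrend": [],
    "astrometry": ["detrend"],
    "catalog": ["astrometry"],
    "photometry": ["catalog"],
    "coadd": ["astrometry", "photometry"],
    "ingest": ["catalog", "photometry"],
}


def schedule_batches(dependencies):
    """Return a list of batches; stages within a batch are independent."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    batches = []
    while sorter.is_active():
        # Every stage whose prerequisites are all marked done.
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    for number, batch in enumerate(schedule_batches(STAGES), start=1):
        print(f"batch {number}: {', '.join(batch)}")
```

In this toy graph the final batch contains both `coadd` and `ingest`, since neither depends on the other; a real scheduler would dispatch such a batch across many compute nodes rather than print it.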

Operating in an HPC environment brings two major advantages. Speed is achieved through massive parallelism and high‑bandwidth interconnects (InfiniBand), allowing the system to ingest and calibrate roughly 200 GB of raw data per day and to process the full 500 TB survey in a few annual cycles. Flexibility stems from the same pipeline being reusable for routine nightly processing as well as for compute‑intensive campaigns such as full‑survey coadds or seasonal re‑reductions. Because DESDM relies on shared HPC clusters, the project’s hardware footprint is limited to storage arrays, database servers, and the orchestration/portal nodes that are unique to DES. This dramatically reduces capital expenditure, power, cooling, and staff overhead.
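For a sense of scale, the 2007 test figures quoted in the abstract (10 simulated nights, 3 TB of raw data) can be extrapolated to the 525-night survey. The sketch below is back-of-the-envelope arithmetic only. It assumes every survey night matches a simulated night in size and uses decimal units (1 TB = 1000 GB); it counts raw data alone, so it is not directly comparable to totals that include derived products.

```python
# Figures from the abstract's description of the Fall 2007 test.
TEST_NIGHTS = 10
TEST_RAW_TB = 3.0
SURVEY_NIGHTS = 525

GB_PER_TB = 1000  # decimal units, an assumption of this sketch


def raw_gb_per_night(raw_tb=TEST_RAW_TB, nights=TEST_NIGHTS):
    """Average raw data volume per night, in GB."""
    return raw_tb * GB_PER_TB / nights


def projected_raw_tb(per_night_gb, nights=SURVEY_NIGHTS):
    """Raw volume in TB if every night matched the given per-night size."""
    return per_night_gb * nights / GB_PER_TB


if __name__ == "__main__":
    per_night = raw_gb_per_night()
    print(f"raw data per simulated night: {per_night:.0f} GB")
    print(f"projected raw survey volume: {projected_raw_tb(per_night):.1f} TB")
```

Under these assumptions the simulated nights average 300 GB of raw data each, and 525 such nights would amount to roughly 157.5 TB of raw images.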

The paper reports on a 2007 proof‑of‑concept test. Using the TeraGrid, the team processed ten simulated DES nights (≈3 TB of raw data), ingesting about 250 million objects into the archive. Comparison with the simulation truth tables showed astrometric residuals below 0.1 arcsec and photometric errors under 0.02 mag, confirming that the pipeline meets the stringent scientific requirements. In a parallel experiment, over 50 nights of real data from the Mosaic2 camera were reduced with the same system. Internal cross‑checks (e.g., duplicate observations of overlapping fields) demonstrated consistent astrometry and photometry, indicating that the software behaves robustly on actual survey data.
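A comparison against truth tables of this kind reduces to computing per-object residuals between measured and true values. The following is a minimal sketch of that calculation, not the procedure used by the authors: the function names and the sample coordinates are illustrative, objects are assumed to be already matched to their truth entries, and the small-angle approximation used here ignores RA wrap-around at 0/360 degrees.

```python
import math

ARCSEC_PER_DEG = 3600.0


def astrometric_residual_arcsec(ra_deg, dec_deg, ra_true_deg, dec_true_deg):
    """Small-angle separation (arcsec) between a measured and a true position.

    The RA offset is scaled by cos(dec) so that it is a true angular
    distance on the sky rather than a coordinate difference.
    """
    d_ra = (ra_deg - ra_true_deg) * math.cos(math.radians(dec_true_deg))
    d_dec = dec_deg - dec_true_deg
    return math.hypot(d_ra, d_dec) * ARCSEC_PER_DEG


def rms(values):
    """Root-mean-square of a non-empty sequence of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))


if __name__ == "__main__":
    # Illustrative matched pairs: (ra, dec, ra_true, dec_true) in degrees.
    matched = [
        (30.00001, -45.00002, 30.0, -45.0),
        (30.50002, -45.10000, 30.5, -45.1),
        (31.00000, -44.99999, 31.0, -45.0),
    ]
    residuals = [astrometric_residual_arcsec(*row) for row in matched]
    print(f"astrometric RMS: {rms(residuals):.3f} arcsec")
```

The same `rms` helper applies to photometric residuals, taken as the difference between measured and true magnitudes for each matched object.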

These results validate DESDM’s design choices: a modular, open‑source‑based stack that can scale from nightly reductions to full‑survey re‑processing; a workflow engine that efficiently maps scientific tasks onto HPC resources; and a data‑access layer that will eventually expose the catalog and image products to the broader astronomical community via VO standards. The authors outline future work, including automated yearly re‑processing, GPU‑accelerated weak‑lensing shear extraction, expanded VO services, and user‑interface enhancements based on collaboration feedback.

In summary, the DES Data Management system demonstrates that a modern, HPC‑leveraged data pipeline can meet the demanding volume, speed, and quality requirements of a next‑generation cosmology survey while keeping costs low. The successful 2007 tests on both simulated and real data provide confidence that DESDM will deliver high‑precision astrometric and photometric catalogs for the full five‑year DES dataset, enabling the collaboration to pursue its core scientific goals—probing dark energy through galaxy clustering, weak lensing, supernovae, and galaxy clusters—and ultimately to share these valuable resources with the global scientific community.
