A robust and reliable method for detecting signals of interest in multiexponential decays

The concept of rejecting the null hypothesis for definitively detecting a signal was extended to relaxation spectrum space for multiexponential reconstruction. The novel test was applied to the problem of detecting the myelin signal, which is believed to have a time constant below 40 ms, in T2 decays from MRI scans of the human brain. It was demonstrated that the test allowed the detection of a signal in a relaxation spectrum using only the information in the data, thus avoiding any potentially unreliable prior information. The test was implemented both explicitly and implicitly for simulated T2 measurements. For the explicit implementation, the null hypothesis was that a relaxation spectrum existed that had no signal below 40 ms and that was consistent with the T2 decay. The confidence level by which the null hypothesis could be rejected gave the confidence level that there was signal below the 40 ms time constant. The explicit implementation assessed the test’s performance with and without prior information, where the prior information was the nonnegative relaxation spectrum assumption. The test was also implemented implicitly with a previously published data-conserving multiexponential reconstruction algorithm that used left-invertible matrices. The implicit and explicit implementations demonstrated similar characteristics in detecting the myelin signal in both the simulated and experimental T2 decays, providing additional evidence to support the close link between the two tests. [Full abstract in paper]

💡 Research Summary

The paper introduces a statistically rigorous method for detecting signals of interest in multiexponential decay data, specifically targeting the myelin water signal in T₂ MRI of the human brain. Traditional multiexponential reconstruction techniques (e.g., NNLS, regularized least‑squares) rely heavily on prior assumptions such as non‑negativity, smoothness, or a chosen regularization parameter, which can bias the resulting relaxation spectrum and lead to ambiguous conclusions about the presence of a short‑T₂ component.

The authors extend the concept of null‑hypothesis testing to the relaxation‑spectrum domain. The null hypothesis (H₀) is defined as “there exists a relaxation spectrum consistent with the measured T₂ decay that contains no signal with a time constant below 40 ms.” By fitting the data with a linear least‑squares model, they compute the chi‑square (χ²) goodness‑of‑fit for two cases: (1) the unrestricted spectrum (which may contain short‑T₂ components) and (2) a constrained spectrum in which all amplitudes for T₂ < 40 ms are forced to zero. The difference Δχ², together with the degrees of freedom and an estimate of the noise variance, yields a p‑value that quantifies how strongly H₀ can be rejected. A rejection at a chosen confidence level (e.g., 95 %) directly translates into a statistically justified claim that a sub‑40 ms component is present.
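The decision step described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: it assumes that Δχ² follows a chi-square distribution with an integer number of degrees of freedom `dof` under H₀ (the textbook result for nested linear fits with known Gaussian noise variance), and the function names `chi2_sf` and `reject_null` are hypothetical.

```python
import math


def chi2_sf(x, dof):
    """Survival function P(X >= x) for a chi-square variable with integer dof."""
    if dof < 1 or int(dof) != dof:
        raise ValueError("dof must be a positive integer")
    if x <= 0.0:
        return 1.0
    z = 0.5 * x
    # Start from Q(1/2, z) = erfc(sqrt(z)) for odd dof, Q(1, z) = exp(-z) for even dof,
    # where Q is the regularized upper incomplete gamma function.
    if dof % 2:
        a, q = 0.5, math.erfc(math.sqrt(z))
    else:
        a, q = 1.0, math.exp(-z)
    # Recurrence: Q(a + 1, z) = Q(a, z) + z**a * exp(-z) / Gamma(a + 1).
    while a < 0.5 * dof:
        q += math.exp(a * math.log(z) - z - math.lgamma(a + 1.0))
        a += 1.0
    return min(q, 1.0)


def reject_null(delta_chi2, dof, confidence=0.95):
    """Return (p_value, rejected) for H0: no signal below the cutoff.

    Assumption: delta_chi2 is chi-square distributed with `dof` degrees of
    freedom when H0 is true; how `dof` should be chosen is not stated in the text.
    """
    p_value = chi2_sf(delta_chi2, dof)
    return p_value, p_value < 1.0 - confidence


p, rejected = reject_null(delta_chi2=10.0, dof=1)
print(f"p = {p:.4f}, reject H0 at 95 %: {rejected}")
```

The survival function is written with the standard library only so the sketch has no dependencies; `scipy.stats.chi2.sf` would be the usual choice in practice.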

Two implementations are presented.

- Explicit implementation – The authors solve the linear least‑squares problem both with and without the sub‑40 ms constraint. They evaluate the method with and without the non‑negativity prior to assess the impact of this common assumption. The explicit approach makes the hypothesis test transparent: the χ² values are computed directly from the residuals of the two fits, and the statistical decision is based solely on the data.

- Implicit implementation – They employ a previously published “data‑conserving” multiexponential reconstruction that uses left‑invertible encoding matrices. This algorithm generates a family of spectra that all reproduce the measured decay exactly (within noise). Within this solution space, the authors define a subspace where amplitudes for T₂ < 40 ms are zero and compute the distance (χ²) between the unconstrained and constrained subspaces. Because the encoding matrix is left‑invertible, this distance is identical to the Δχ² obtained in the explicit method, demonstrating the equivalence of the two approaches.
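The explicit variant, without the non‑negativity prior, can be sketched as two least‑squares fits on a T₂ grid. This is an illustration under stated assumptions, not the paper's implementation: the echo spacing (10 ms), the grid, the component amplitudes, the 80 ms long component, and the SVD truncation tolerance `rtol` are all hypothetical choices, and the function names are made up for this example. Adding the non‑negativity prior would require a non‑negative least‑squares solver instead of the plain projection used here.

```python
import numpy as np

CUTOFF_MS = 40.0  # myelin-water cutoff quoted in the text


def decay_matrix(echo_times_ms, t2_grid_ms):
    """Matrix whose columns are exp(-t / T2) sampled at the echo times."""
    return np.exp(-np.outer(echo_times_ms, 1.0 / t2_grid_ms))


def chi2_best_fit(A, y, sigma, rtol=1e-8):
    """Chi-square of the best signed (no non-negativity) least-squares fit.

    The fit is computed as a projection onto the leading left singular
    vectors, which avoids forming the ill-conditioned amplitudes explicitly.
    """
    U, s, _ = np.linalg.svd(A, full_matrices=False)
    rank = int(np.sum(s > rtol * s[0]))
    U_r = U[:, :rank]
    resid = y - U_r @ (U_r.T @ y)
    return float(resid @ resid) / sigma**2, rank


def explicit_test(echo_times_ms, t2_grid_ms, y, sigma):
    """Fit with and without the sub-cutoff columns and compare chi-squares."""
    A = decay_matrix(echo_times_ms, t2_grid_ms)
    long_only = t2_grid_ms >= CUTOFF_MS  # H0: amplitudes below the cutoff are zero
    chi2_free, rank_free = chi2_best_fit(A, y, sigma)
    chi2_null, rank_null = chi2_best_fit(A[:, long_only], y, sigma)
    return {
        "chi2_free": chi2_free,
        "chi2_null": chi2_null,
        "delta_chi2": chi2_null - chi2_free,
        "rank_free": rank_free,
        "rank_null": rank_null,
    }


# Illustrative data: 32 echoes, assumed 10 ms spacing, assumed 15 % short-T2 fraction.
echo_times = 10.0 * np.arange(1, 33)
t2_grid = np.concatenate([
    np.geomspace(10.0, 40.0, 10, endpoint=False),  # short-T2 grid points
    np.geomspace(40.0, 2000.0, 30),                # long-T2 grid points
])
A_demo = decay_matrix(echo_times, t2_grid)
x_true = np.zeros(t2_grid.size)
x_true[np.argmin(np.abs(t2_grid - 20.0))] = 0.15  # myelin-like component near 20 ms
x_true[np.argmin(np.abs(t2_grid - 80.0))] = 0.85  # long component near 80 ms
sigma = 0.01                                       # noise level for SNR = 100
rng = np.random.default_rng(0)
y = A_demo @ x_true + rng.normal(0.0, sigma, echo_times.size)

print(explicit_test(echo_times, t2_grid, y, sigma))
```

The returned `delta_chi2` is the quantity that would be converted to a p‑value; one plausible choice for its degrees of freedom is `rank_free - rank_null`, but that is an assumption rather than something the text specifies.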

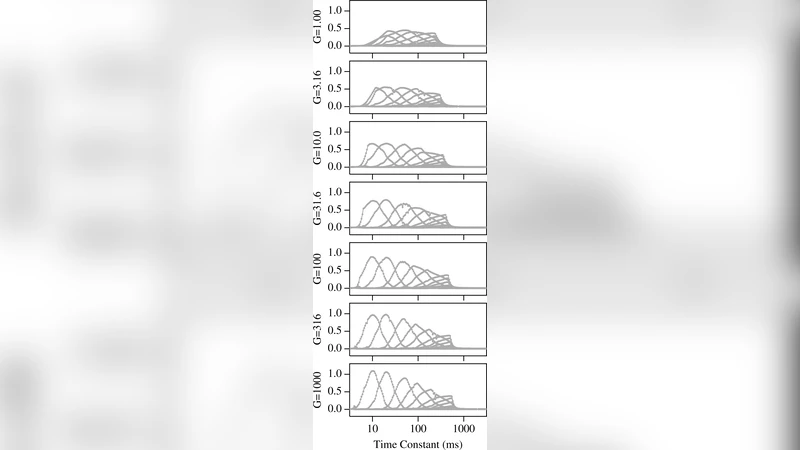

The methodology is validated on simulated T₂ decays. Two synthetic models are used: (a) a two‑component model containing a short‑T₂ myelin‑like component (≈20 ms) and a long‑T₂ intra‑/extra‑cellular water component, and (b) a single‑component model without any short‑T₂ signal. Noise is added to achieve signal‑to‑noise ratios (SNR) ranging from 50 to 200. Results show that for SNR ≥ 100 the null hypothesis can be rejected with >95 % confidence, correctly identifying the presence of the myelin component. Importantly, the test retains high power even when the non‑negativity constraint is omitted, indicating that the detection does not depend on prior information.
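A simulation of this kind can be set up as follows. This is only a sketch: the summary gives the short component (≈20 ms) and the SNR range, while the amplitudes, the 80 ms long component, the 10 ms echo spacing, the Gaussian noise model, and the definition of SNR as total amplitude at t = 0 divided by the noise standard deviation are assumptions made here for illustration.

```python
import numpy as np

# (amplitude, T2 in ms) pairs; values other than the ~20 ms component are assumed.
MODEL_A = [(0.15, 20.0), (0.85, 80.0)]  # two components, myelin-like signal present
MODEL_B = [(1.0, 80.0)]                 # single component, no short-T2 signal


def simulate_decay(echo_times_ms, components, snr, rng):
    """Return (noisy_decay, sigma) for a sum of exponentials plus Gaussian noise.

    `components` is a sequence of (amplitude, T2_ms) pairs. SNR is taken as
    the total amplitude at t = 0 divided by the noise standard deviation.
    """
    t = np.asarray(echo_times_ms, dtype=float)
    clean = sum(a * np.exp(-t / t2) for a, t2 in components)
    sigma = sum(a for a, _ in components) / snr
    noisy = clean + rng.normal(0.0, sigma, t.size)
    return noisy, sigma


echo_times = 10.0 * np.arange(1, 33)  # 32 echoes, assumed 10 ms spacing
rng = np.random.default_rng(42)
for snr in (50.0, 100.0, 200.0):
    decay, sigma = simulate_decay(echo_times, MODEL_A, snr, rng)
    print(f"SNR {snr:.0f}: sigma = {sigma:.4f}, first echo = {decay[0]:.4f}")
```

Magnitude MRI data are strictly Rician rather than Gaussian; the Gaussian model used here is a common simplification at the SNR levels discussed.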

Experimental data are acquired from human brain T₂ multi‑echo sequences at 3 T, using 32 echoes and an average of 256 repetitions to achieve low noise. Both the explicit and implicit implementations produce nearly identical χ² differences, and both reject the null hypothesis at the 95 % level, confirming the presence of a sub‑40 ms component consistent with myelin water. Comparisons with conventional NNLS reconstructions show that the new test provides a more conservative yet statistically defensible statement about myelin detection, avoiding over‑interpretation that can arise from regularization artefacts.

The authors discuss limitations. At low SNR (< 80), the Δχ² produced by a short‑T₂ component becomes comparable to its noise‑induced fluctuations, reducing the test’s power and potentially leading to false negatives. Very weak short‑T₂ signals may also be missed if they do not produce a statistically significant χ² increase. Consequently, reliable application in clinical settings requires sufficient averaging or advanced acquisition schemes to maintain SNR ≈ 100 or higher, as well as accurate noise estimation.
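As a rough guide to how much averaging this implies, assume the noise is independent and identically distributed across repetitions (a standard assumption, not a statement taken from the paper). Averaging N repetitions then improves the SNR by a factor of √N:

$$
\mathrm{SNR}_N = \sqrt{N}\,\mathrm{SNR}_1
\quad\Longrightarrow\quad
N \ge \left(\frac{\mathrm{SNR}_{\text{target}}}{\mathrm{SNR}_1}\right)^{2}.
$$

For example, 256 averages give a 16‑fold SNR gain over a single acquisition, and a hypothetical single‑acquisition SNR of 25 would need at least 16 averages to reach SNR = 100.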

In summary, the paper delivers a robust, data‑driven hypothesis‑testing framework for multiexponential decay analysis. By formulating the detection problem as a null‑hypothesis test in relaxation‑spectrum space, it eliminates dependence on subjective priors and provides a clear confidence metric for the existence of short‑T₂ signals such as myelin water. This approach represents a significant methodological advance for quantitative MRI, offering a principled path toward more reliable microstructural biomarkers.
