A parallel gravitational N-body kernel

We describe source code level parallelization for the {\tt kira} direct gravitational $N$-body integrator, the workhorse of the {\tt starlab} production environment for simulating dense stellar systems. The parallelization strategy, called ``j-parallelization'', involves the partition of the computational domain by distributing all particles in the system among the available processors. Partial forces on the particles to be advanced are calculated in parallel by their parent processors, and are then summed in a final global operation. Once total forces are obtained, the computing elements proceed to the computation of their particle trajectories. We report the results of timing measurements on four different parallel computers, and compare them with theoretical predictions. The computers either employ a high-speed interconnect or a NUMA architecture to minimize the communication overhead, or are distributed in a grid. The code scales well in the domain tested, which ranges from 1024 to 65536 stars on 1 to 128 processors, providing satisfactory speedup. Running the production environment on a grid becomes inefficient for more than 60 processors distributed across three sites.

💡 Research Summary

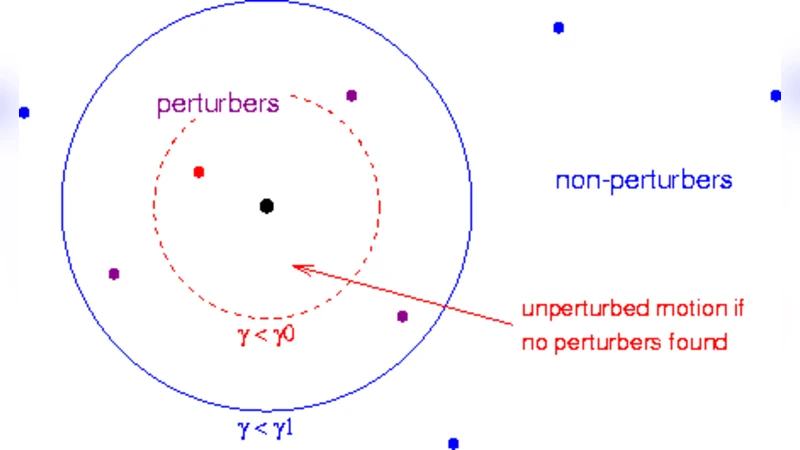

The paper presents a source‑level parallelization of the kira direct gravitational N‑body integrator, the core of the starlab environment for simulating dense stellar systems. The authors introduce a strategy called “j‑parallelization” that distributes all particles among the available processors rather than dividing the spatial domain. Each processor holds a subset of particles (the “j‑particles”) and computes the partial gravitational forces that this local subset exerts on the particles to be advanced. These partial forces are then summed across all processors in a single global reduction operation (implemented with MPI_Allreduce), so that every processor obtains the total forces. Once the total forces are available, the standard fourth‑order Hermite integration steps of kira are applied to advance the particle trajectories.
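The force decomposition described above can be illustrated with a minimal serial sketch. It is not taken from kira: the function names, the round‑robin distribution, and the units (G = 1) are assumptions made for illustration, and the "processors" are simulated by a plain loop whose results are summed in place of the global reduction.

```python
import math
import random

def partial_accel(i_pos, j_block):
    """Acceleration on each i-particle due to ONE processor's local j-particles (G = 1)."""
    out = []
    for xi in i_pos:
        ax = ay = az = 0.0
        for mj, xj in j_block:
            dx, dy, dz = xj[0] - xi[0], xj[1] - xi[1], xj[2] - xi[2]
            r2 = dx * dx + dy * dy + dz * dz
            if r2 == 0.0:
                continue  # skip the particle's interaction with itself
            inv_r3 = r2 ** -1.5
            ax += mj * dx * inv_r3
            ay += mj * dy * inv_r3
            az += mj * dz * inv_r3
        out.append((ax, ay, az))
    return out

def j_parallel_accel(particles, i_pos, n_proc):
    """Split the j-particles over n_proc simulated processors and sum the partial forces."""
    # Hypothetical round-robin distribution of (mass, position) pairs.
    blocks = [particles[p::n_proc] for p in range(n_proc)]
    # In a real run each block would be handled concurrently by its own processor.
    partials = [partial_accel(i_pos, block) for block in blocks]
    # Stand-in for the global sum reduction over all processors.
    return [
        tuple(sum(part[i][k] for part in partials) for k in range(3))
        for i in range(len(i_pos))
    ]

# Usage: 64 equal-mass particles, forces requested on the first 8 of them.
random.seed(1)
particles = [(1.0 / 64, (random.random(), random.random(), random.random()))
             for _ in range(64)]
i_pos = [x for _, x in particles[:8]]
serial = j_parallel_accel(particles, i_pos, 1)
parallel = j_parallel_accel(particles, i_pos, 4)
```

Up to floating‑point rounding from the different summation order, `serial` and `parallel` agree, which is the property that lets the partial forces be combined with a plain sum.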

Key implementation details include: (1) packing particle attributes (mass, position, velocity, etc.) into compact structures to minimize communication volume; (2) using non‑blocking MPI calls to overlap communication with force computation, thereby reducing idle time; (3) exploiting NUMA‑aware memory placement on shared‑memory nodes to keep local data accesses fast; and (4) combining OpenMP threading within each node with MPI across nodes to form a hybrid parallel model. The authors also discuss load‑balancing considerations, noting that an even distribution of particles generally yields good balance because the force calculation cost is roughly proportional to the number of particles each processor owns.
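Item (1) above, packing particle attributes into compact structures, can be sketched as follows. The record layout (mass, three position components, three velocity components as little‑endian 64‑bit floats) is a hypothetical choice for illustration; the summary does not specify which fields kira actually sends or in what order.

```python
import struct

# Hypothetical wire layout: mass, x, y, z, vx, vy, vz as little-endian float64.
PARTICLE = struct.Struct("<7d")

def pack_particles(particles):
    """Pack (mass, pos, vel) records into one contiguous byte buffer."""
    buf = bytearray(PARTICLE.size * len(particles))
    for k, (mass, pos, vel) in enumerate(particles):
        PARTICLE.pack_into(buf, k * PARTICLE.size, mass, *pos, *vel)
    return bytes(buf)

def unpack_particles(buf):
    """Inverse of pack_particles: rebuild the list of (mass, pos, vel) tuples."""
    return [(rec[0], rec[1:4], rec[4:7]) for rec in PARTICLE.iter_unpack(buf)]

# Usage: two particles travel as a single 112-byte message.
sample = [(0.5, (0.0, 1.0, 2.0), (0.1, 0.2, 0.3)),
          (0.5, (3.0, 4.0, 5.0), (0.4, 0.5, 0.6))]
message = pack_particles(sample)
restored = unpack_particles(message)
```

Sending one contiguous buffer rather than one message per attribute keeps the number of messages independent of the number of fields, which matters most when latency dominates.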

Performance was evaluated on four distinct parallel platforms: (a) a high‑speed interconnect cluster (Myrinet/InfiniBand), (b) a large‑memory NUMA server, (c) a low‑cost Ethernet‑based grid spanning three geographic sites, and (d) a mixed‑architecture hybrid system. Simulations were run with particle counts ranging from 1 024 to 65 536, and the number of processors was varied from 1 to 128. On the high‑speed interconnect and NUMA systems, the code achieved near‑linear scaling up to 64–128 processors, maintaining parallel efficiencies above 80 %. In contrast, the Ethernet grid showed satisfactory speedup only up to about 60 processors; beyond that, communication latency and limited bandwidth caused the efficiency to drop below 30 %. These results confirm that the algorithm’s scalability is strongly tied to the underlying communication fabric.

The authors develop a theoretical performance model where the computational work scales as O(N²/P) and the global reduction cost scales as O(N·log P). Measured runtimes match the model within a few percent, validating the assumptions about computation‑to‑communication ratios. The model also predicts that, for future runs on several hundred or thousand processors, further reductions in communication overhead (e.g., through hierarchical reductions or topology‑aware routing) will be essential.
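The scaling terms quoted above can be turned into a small numerical sketch of speedup and parallel efficiency. The coefficients `t_force` and `t_comm` are hypothetical placeholders, not values fitted to the paper's measurements, and the base‑2 logarithm is an assumption about the shape of the reduction.

```python
import math

def model_time(n, p, t_force=1.0, t_comm=1.0):
    """Model runtime: O(N^2 / P) force work plus O(N log P) global reduction."""
    compute = t_force * n * n / p
    reduction = t_comm * n * math.log2(p)  # zero for a single processor
    return compute + reduction

def speedup(n, p, **coeffs):
    """Ratio of single-processor model time to P-processor model time."""
    return model_time(n, 1, **coeffs) / model_time(n, p, **coeffs)

def efficiency(n, p, **coeffs):
    """Speedup divided by processor count; 1.0 means ideal scaling."""
    return speedup(n, p, **coeffs) / p

# Usage: compare the smallest and largest particle counts at 128 processors.
eff_small = efficiency(1024, 128)
eff_large = efficiency(65536, 128)
```

With equal coefficients the efficiency reduces to 1 / (1 + P log2(P) / N), so in this model it falls as P grows at fixed N and rises as N grows at fixed P. A slower network corresponds to a larger `t_comm`, which lowers the efficiency at every processor count.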

In conclusion, the j‑parallelization approach successfully transforms the sequential kira integrator into a scalable parallel application suitable for production runs on modern clusters. It delivers substantial speedups for simulations with up to 65 536 particles on up to 128 processors, provided the interconnect is sufficiently fast. The paper highlights the limitations of grid‑based deployments for large processor counts and suggests future work such as integrating GPU accelerators, implementing adaptive particle partitioning, and developing smarter communication scheduling for wide‑area networks.
