Sparse and Dense Encoding in Layered Associative Network of Spiking Neurons

A synfire chain is a simple neural network model that can propagate stable synchronous spikes, called a pulse packet, and it has been widely studied. However, how multiple synfire chains coexist in one network remains to be elucidated. We have studied the activity of a layered associative network of leaky integrate-and-fire neurons in whose connections memory patterns are embedded by Hebbian learning, and we analyzed this activity by the Fokker-Planck method. In our previous report, when half of the neurons belong to each memory pattern (memory pattern rate $F=0.5$), the temporal profile of the network activity splits into temporally clustered groups called sublattices under certain input conditions. In this study, we show that when the network is sparsely connected ($F<0.5$), synchronous firing of the memory pattern is promoted. In contrast, a densely connected network ($F>0.5$) inhibits synchronous firing. Sparseness and denseness also affect the basin of attraction and the storage capacity of the embedded memory patterns: sparsely (densely) connected networks enlarge (shrink) the basin of attraction and increase (decrease) the storage capacity.

💡 Research Summary

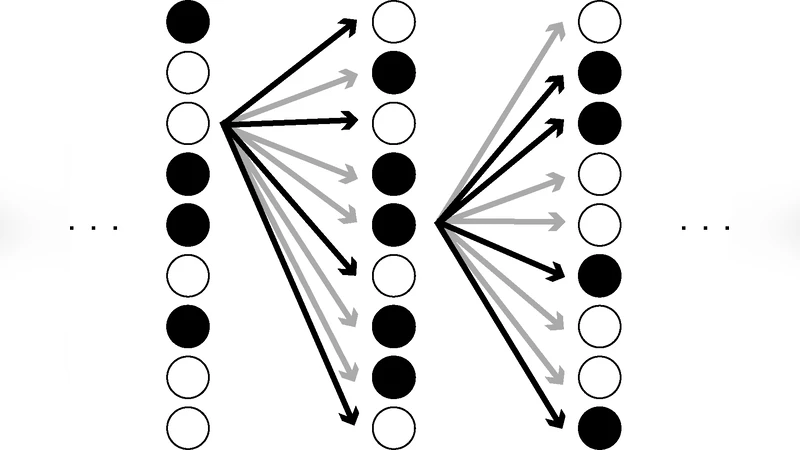

This paper investigates how the sparsity or density of memory encoding influences synchronous spiking activity, basin of attraction, and storage capacity in a layered associative network of leaky integrate‑and‑fire (LIF) neurons. The authors construct a feed‑forward network composed of several layers, each containing a large population of LIF units. Memory patterns are embedded using a Hebbian learning rule, and the proportion of neurons belonging to a given pattern is denoted by the pattern rate F. When F = 0.5, previous work reported the emergence of “sublattices,” i.e., temporally clustered groups of neurons that fire together under certain input conditions.
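As an illustration of how patterns with rate F might be embedded between adjacent layers, the following is a minimal sketch. It assumes a covariance-type Hebbian rule, which is a common choice for biased patterns but is not confirmed by this summary as the authors' exact rule. The function names, the normalization by N·F·(1−F), and the all-to-all connectivity between adjacent layers are all assumptions made for the example.

```python
import numpy as np

def make_patterns(n_layers, n_neurons, n_patterns, F, rng):
    """Draw binary patterns xi[l, mu, i] in {0, 1} with P(xi = 1) = F (assumed i.i.d.)."""
    return (rng.random((n_layers, n_patterns, n_neurons)) < F).astype(float)

def hebbian_weights(xi, F):
    """Covariance-rule weights from layer l to layer l+1 (an assumed form).

    J[l, i, j] = sum_mu (xi[l+1, mu, i] - F) * (xi[l, mu, j] - F) / (N * F * (1 - F))
    """
    n_layers, n_patterns, n_neurons = xi.shape
    centered = xi - F
    norm = n_neurons * F * (1.0 - F)
    # Sum over the pattern index m for every pair of adjacent layers.
    return np.einsum("lmi,lmj->lij", centered[1:], centered[:-1]) / norm

rng = np.random.default_rng(0)
F = 0.2
xi = make_patterns(n_layers=3, n_neurons=200, n_patterns=2, F=F, rng=rng)
J = hebbian_weights(xi, F)  # shape: (n_layers - 1, n_neurons, n_neurons)
```

With this rule, lowering F reduces the number of neurons active in each pattern, while the 1/(F(1−F)) normalization raises the weight contributed by each co-active pair.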

To explore the effect of varying F, the authors apply the Fokker‑Planck formalism to derive the probability density of membrane potentials and the resulting firing rates for each subpopulation. This analytical approach allows precise quantification of pulse‑packet shape, propagation speed, and temporal dispersion across layers.
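For orientation, a generic Fokker‑Planck equation for a population of LIF neurons under the diffusion approximation is shown below. It is a textbook form given as a sketch, not the paper's exact equations. The symbols are assumptions introduced here: $v$ is the membrane potential, $\tau$ the membrane time constant, $\mu(t)$ and $\sigma(t)$ the mean and standard deviation of the synaptic input, $\theta$ the firing threshold, and $\nu(t)$ the population firing rate.

$$
\tau \frac{\partial P(v,t)}{\partial t} = \frac{\partial}{\partial v}\Big[\big(v-\mu(t)\big)P(v,t)\Big] + \frac{\sigma^2(t)}{2}\frac{\partial^2 P(v,t)}{\partial v^2}, \qquad P(\theta,t)=0, \qquad \nu(t) = -\frac{\sigma^2(t)}{2\tau}\left.\frac{\partial P(v,t)}{\partial v}\right|_{v=\theta}
$$

The probability flux that crosses $\theta$ is reinjected at the reset potential. In a layered network, $\mu(t)$ and $\sigma(t)$ for each subpopulation would presumably be determined by the firing rates of the preceding layer and the embedded weights.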

Key findings are as follows:

- Sparse encoding (F < 0.5) – Fewer neurons participate in each pattern, which makes the effective synaptic weight per participating neuron larger. Consequently, neurons belonging to the same pattern reach threshold almost simultaneously, producing a narrow, high‑amplitude pulse packet. The network exhibits strong synchrony, and the sublattice structure becomes more pronounced. Sparse encoding also enlarges the basin of attraction: even when the initial input is moderately corrupted, the dynamics reliably converge to the stored pattern. Moreover, interference between patterns is reduced, leading to a higher storage capacity.

- Dense encoding (F > 0.5) – Many neurons belong to each pattern, diluting individual synaptic contributions. The membrane potential rises more gradually, resulting in broader, lower‑amplitude pulse packets and weaker synchrony. The sublattice phenomenon is suppressed, and activity spreads more uniformly across the population. The basin of attraction shrinks because small deviations in the input are amplified, making the network more sensitive to noise. Inter‑pattern interference increases, thereby decreasing the number of patterns that can be stored without error.
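The contrast between "narrow, high‑amplitude" and "broad, lower‑amplitude" pulse packets can be made concrete by summarizing a packet with two numbers: the fraction of neurons that fired and the temporal dispersion of their spike times. The sketch below is illustrative only. The helper name `pulse_packet_stats`, the assumption of at most one spike per neuron per packet, and the example spike times are hypothetical and not taken from the paper.

```python
import numpy as np

def pulse_packet_stats(spike_times_ms, n_neurons):
    """Return (fraction of neurons that fired, mean spike time, temporal dispersion).

    Assumes each neuron contributes at most one spike to the packet.
    Dispersion is the population standard deviation of the spike times.
    """
    t = np.asarray(spike_times_ms, dtype=float)
    if t.size == 0:
        return 0.0, float("nan"), float("nan")
    return t.size / n_neurons, float(t.mean()), float(t.std())

# Hypothetical packets in a subpopulation of 4 neurons.
tight = pulse_packet_stats([10.0, 10.2, 9.8, 10.0], n_neurons=4)  # all fire, closely timed
broad = pulse_packet_stats([8.0, 12.0], n_neurons=4)              # half fire, widely spread
```

Tracking these two quantities layer by layer shows whether a packet sharpens into synchronous firing or disperses as it propagates.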

The authors compare these results with classic Hopfield‑type associative memory models, noting that the benefits of sparse coding for capacity are reproduced in a biologically plausible spiking framework. The study demonstrates that by tuning the pattern rate F, one can deliberately shape the dynamical regime of a spiking associative network, promoting either tightly synchronized, robust transmission (sparse) or broader, less synchronized activity (dense).

Implications extend to neuroscience: cortical circuits may dynamically adjust sparsity depending on task demands, thereby balancing speed of transmission against robustness. The paper also suggests design principles for neuromorphic hardware, where controlling the proportion of active synapses could optimize memory performance and energy consumption.

In summary, the work provides a comprehensive analytical and numerical analysis of how sparse versus dense encoding modulates synchronous firing, attractor stability, and memory capacity in layered spiking networks, offering both theoretical insight and practical guidance for future neural‑computing systems.
