Delineating cosmic expansion history with recent supernova data: A Bayesian model-independent approach

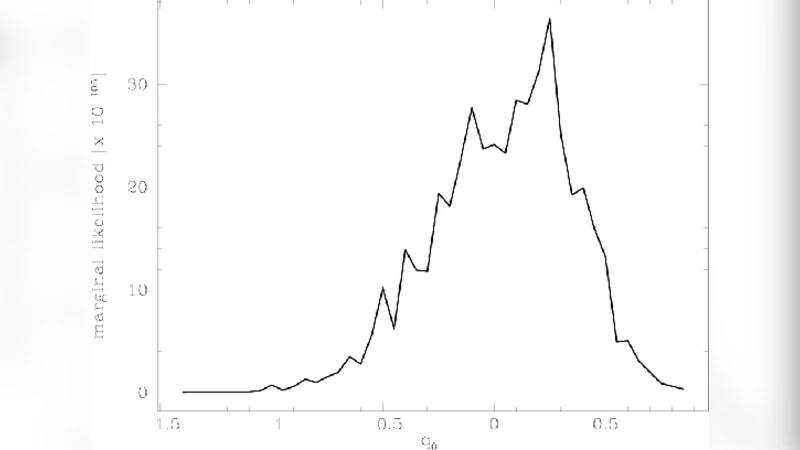

Marginal likelihoods for the cosmic expansion rates are evaluated using the recent “Constitution” data of 397 supernovae, thereby updating the results of some previous works. Even when beginning with a very strong prior probability that favors an accelerated expansion, we end up with a marginal likelihood for the deceleration parameter $q_0$ peaked around zero in the spatially flat case. This agrees with some other analyses of the Constitution data. It is also found that, compared with the previous analysis, the new data constrain the cosmic expansion rates significantly more tightly. Here again we adopt the model-independent approach in which the scale factor is expanded into a Taylor series in time about the present epoch; for practical purposes, the series is truncated to polynomials of various orders in different trials. Though one cannot regard the polynomials thus obtained as models, in this paper we evaluate the total likelihoods (Bayesian evidences) for them to find the order of the polynomial having the largest likelihood. Analysis using the Constitution data shows that the largest likelihood occurs for the fourth-order polynomial and is of value $\approx 0.77 \times 10^{-102}$. It is argued that this value, which we call the likelihood for the model-independent approach, may be used to calibrate the performance of realistic models.

💡 Research Summary

The paper presents a Bayesian, model‑independent reconstruction of the cosmic expansion history using the latest “Constitution” compilation of 397 Type Ia supernovae (SNe Ia). Instead of assuming a specific cosmological model (such as ΛCDM), the authors expand the scale factor a(t) in a Taylor series around the present cosmic time t₀, writing

a(t)=1+H₀Δt−(q₀H₀²/2)Δt²+(j₀H₀³/6)Δt³+(s₀H₀⁴/24)Δt⁴+… ,

where Δt = t−t₀, the scale factor is normalised so that a(t₀)=1, H₀ is the current Hubble parameter, q₀ the deceleration parameter, j₀ the jerk, s₀ the snap, and so on. For practical analysis the series is truncated at various orders (second, third, fourth, etc.), each truncation defining a distinct polynomial “model”. Although these polynomials are not physical models per se, the authors treat each as a hypothesis and compute its Bayesian evidence (the marginal likelihood) by integrating the product of a likelihood function (derived from the supernova distance‑modulus residuals) and prior probability distributions over the parameter space.
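To make the link between a truncated polynomial and the supernova observable concrete, here is a minimal sketch (not the authors' code). It assumes a spatially flat universe, the normalisation a(t₀)=1 and the dimensionless time x = H₀Δt. The emission time for redshift z follows from 1+z = 1/a(t_e), and the luminosity distance is d_L = (1+z)·c∫dt/a taken from t_e to t₀. All function names are hypothetical. The backward scan plus bisection and Simpson's rule are simple choices made here so the sketch stays self-contained. Setting s0 = 0 (and also j0 = 0) reproduces the third- (second-) order truncation.

```python
import math

C_KM_S = 299792.458  # speed of light in km/s


def scale_factor(x, q0, j0=0.0, s0=0.0):
    """Truncated Taylor series for a, with x = H0*(t - t0) and a(t0) = 1."""
    return 1.0 + x - q0 * x**2 / 2.0 + j0 * x**3 / 6.0 + s0 * x**4 / 24.0


def emission_time(z, q0, j0=0.0, s0=0.0, step=1e-3, x_min=-5.0):
    """Most recent x_e < 0 with a(x_e) = 1/(1+z), for z > 0."""
    target = 1.0 / (1.0 + z)
    hi, lo = 0.0, -step
    # Scan backwards in time until a(x) first drops to the target value.
    while scale_factor(lo, q0, j0, s0) > target:
        hi, lo = lo, lo - step
        if lo < x_min:
            raise ValueError("truncated a(t) does not reach this redshift")
    # Bisection keeps a(hi) > target >= a(lo).
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if scale_factor(mid, q0, j0, s0) > target:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


def luminosity_distance(z, q0, j0=0.0, s0=0.0, n=200):
    """Flat-space d_L in units of c/H0: (1+z) * integral of dx/a(x) from x_e to 0."""
    x_e = emission_time(z, q0, j0, s0)
    h = -x_e / n  # Simpson's rule; n must be even
    total = 0.0
    for i in range(n + 1):
        weight = 1.0 if i in (0, n) else (4.0 if i % 2 else 2.0)
        total += weight / scale_factor(x_e + i * h, q0, j0, s0)
    return (1.0 + z) * total * h / 3.0


def distance_modulus(z, H0, q0, j0=0.0, s0=0.0):
    """mu = 5 log10(d_L / Mpc) + 25, with H0 in km/s/Mpc."""
    d_l_mpc = luminosity_distance(z, q0, j0, s0) * C_KM_S / H0
    return 5.0 * math.log10(d_l_mpc) + 25.0


# A third-order polynomial (s0 = 0) at z = 0.5; parameter values are illustrative only.
print(distance_modulus(0.5, H0=65.0, q0=-0.5, j0=1.0, s0=0.0))
```

A truncated polynomial need not reach every redshift. With q0 = −1 and j0 = s0 = 0, a(x) = 1 + x + x²/2 never drops below 1/2, so the sketch raises an error for any z > 1. This is one concrete instance of the convergence question that arises for time-based series.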

The priors are taken from earlier work: Gaussian distributions centred on previously estimated values with realistic covariances. Notably, the prior for q₀ is deliberately chosen to strongly favour acceleration (negative q₀). The likelihood is constructed from the χ² statistic of the supernova data, assuming Gaussian measurement errors and including the full covariance matrix supplied with the Constitution sample.
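The likelihood construction described above can be sketched as follows. The sketch assumes that the observed distance moduli, the model predictions and the covariance matrix are available as NumPy arrays, and the function name is hypothetical. The Gaussian normalisation term is kept because an absolute evidence such as 0.77×10⁻¹⁰² depends on it, whereas a plain χ² comparison does not. This summary does not say exactly how the paper normalises its likelihood.

```python
import numpy as np


def gaussian_log_likelihood(mu_obs, mu_model, cov):
    """Return (ln L, chi2) for correlated Gaussian errors with covariance `cov`.

    ln L = -chi2/2 - ln det(2*pi*C)/2, with chi2 = r^T C^{-1} r and r = mu_obs - mu_model.
    """
    r = np.asarray(mu_obs, dtype=float) - np.asarray(mu_model, dtype=float)
    cov = np.asarray(cov, dtype=float)
    chi2 = float(r @ np.linalg.solve(cov, r))  # solves C y = r instead of inverting C
    sign, logdet = np.linalg.slogdet(2.0 * np.pi * cov)
    if sign <= 0:
        raise ValueError("covariance matrix is not positive definite")
    return -0.5 * chi2 - 0.5 * logdet, chi2


# Diagonal case: three points with sigma_mu = 0.2 mag (illustrative numbers).
ln_l, chi2 = gaussian_log_likelihood([35.0, 40.0, 42.0], [35.1, 39.8, 42.0], np.diag([0.04] * 3))
```

With a diagonal covariance this reduces to the familiar χ² = Σ(Δμ/σ)² plus a constant normalisation term.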

Key findings:

- Evidence ranking – The fourth‑order polynomial yields the highest Bayesian evidence, Z≈0.77×10⁻¹⁰². The third‑order and second‑order polynomials have lower evidences (≈0.55×10⁻¹⁰² and ≈0.30×10⁻¹⁰² respectively). Higher‑order (fifth and above) truncations yield smaller Z. Their extra coefficients improve the fit too little to offset the penalty the evidence imposes on additional parameters; in other words, they over‑fit. Thus, the data support up to four free coefficients (H₀, q₀, j₀, s₀) but not more.

- Deceleration parameter – Despite the strong acceleration‑biased prior, the marginal likelihood for q₀ peaks near zero and is nearly symmetric about q₀=0. This suggests that the Constitution data do not compel a strongly accelerating universe, in line with other recent analyses that find the evidence for acceleration to be weaker than previously thought.

- Constraining power of the new data – Compared with earlier analyses based on the “Gold” supernova set, the Constitution compilation dramatically narrows the posterior distributions of the expansion coefficients, especially q₀ and j₀, demonstrating the improved constraining power of the larger, more homogeneous sample.
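Both the evidence and the marginal likelihood discussed in the bullets above are integrals of likelihood × prior. The marginal likelihood for q₀ integrates out the other coefficients, and the evidence Z integrates out every parameter. What follows is a brute-force grid sketch for a hypothetical two-parameter (q₀, j₀) case. The function name, the uniform grids and the toy Gaussian likelihood are all assumptions for illustration; the paper's own numerical scheme is not described here. The sketch works in logarithms and subtracts the peak before exponentiating, which keeps the arithmetic safe when Z is as small as 10⁻¹⁰².

```python
import numpy as np


def marginal_and_evidence(log_like, q, j, log_prior_q, log_prior_j):
    """Grid integration on uniform 1-D grids q (for q0) and j (for j0).

    Returns ln L(q0) = ln of integral of L(q0, j0) * prior(j0) dj0 on the q grid,
    and ln Z = ln of integral of L(q0) * prior(q0) dq0.
    """
    Q, J = np.meshgrid(q, j, indexing="ij")
    log_w = log_like(Q, J) + log_prior_j(J)
    shift = log_w.max()  # factor out the peak to avoid underflow
    dq, dj = q[1] - q[0], j[1] - j[0]
    marg = np.exp(log_w - shift).sum(axis=1) * dj  # Riemann sum over j0
    z_scaled = np.sum(marg * np.exp(log_prior_q(q))) * dq  # then over q0
    return np.log(marg) + shift, np.log(z_scaled) + shift


# Toy separable Gaussian likelihood centred on q0 = 0, j0 = 1, with uniform priors.
q = np.linspace(-1.0, 1.0, 401)
j = np.linspace(-3.0, 5.0, 801)
ln_marg, ln_z = marginal_and_evidence(
    lambda qq, jj: -0.5 * (qq / 0.1) ** 2 - 0.5 * ((jj - 1.0) / 0.5) ** 2,
    q,
    j,
    lambda qq: np.full_like(qq, np.log(1 / 2.0)),  # uniform prior on [-1, 1]
    lambda jj: np.full_like(jj, np.log(1 / 8.0)),  # uniform prior on [-3, 5]
)
```

A grid's cost grows exponentially with the number of coefficients. For fourth- and higher-order polynomials, a Monte Carlo or nested-sampling integrator would typically replace it.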

The authors argue that the maximal evidence obtained for the fourth‑order polynomial can serve as a benchmark for evaluating concrete cosmological models. Any physical model whose Bayesian evidence, computed from the same supernova data, exceeds ≈0.77×10⁻¹⁰² would be considered superior to this model‑independent reconstruction in explaining those data.
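Comparisons against this benchmark are naturally expressed as Bayes factors, B = Z_a/Z_b, or in log form as ln B = ln Z_a − ln Z_b. The arithmetic below uses only the evidences quoted in this summary, and the helper name is hypothetical. Working in log space is a sensible habit here, because evidences for larger data sets can easily fall below the smallest positive double (about 10⁻³⁰⁸).

```python
import math

# Evidences quoted in the summary, keyed by polynomial order.
EVIDENCE = {2: 0.30e-102, 3: 0.55e-102, 4: 0.77e-102}


def ln_bayes_factor(ln_z_a, ln_z_b):
    """ln B_ab = ln Z_a - ln Z_b; positive values favour hypothesis a."""
    return ln_z_a - ln_z_b


for order in (2, 3):
    ln_b = ln_bayes_factor(math.log(EVIDENCE[4]), math.log(EVIDENCE[order]))
    print(f"order 4 vs order {order}: ln B = {ln_b:.2f}")
# prints:
# order 4 vs order 2: ln B = 0.94
# order 4 vs order 3: ln B = 0.34
```

Both values are below 1. On the commonly used Jeffreys-type scale, |ln B| < 1 counts as inconclusive, so by that yardstick these numbers favour the fourth-order polynomial only mildly. A realistic model with evidence Z beats the benchmark when ln Z − ln(0.77×10⁻¹⁰²) > 0.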

Methodologically, the paper discusses several subtleties: the convergence of the time‑based Taylor series (especially at higher redshifts), the choice of Δt as the expansion variable versus redshift‑based expansions, and the impact of systematic uncertainties in supernova standardisation. The authors suggest that future work should incorporate additional distance probes (e.g., baryon acoustic oscillations, cosmic chronometers, gravitational‑wave standard sirens) to further test the robustness of the model‑independent approach.
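For comparison with redshift-based expansions, one can substitute the time series for a(t) into 1+z = 1/a(t_e) and d_L = (1+z)·c∫dt/a, then invert order by order in z. For the spatially flat case this gives the familiar low-redshift cosmographic series. The result is standard; it is derived here rather than taken from the paper:

$$
d_L(z) = \frac{c\,z}{H_0}\left[1 + \frac{1}{2}\left(1-q_0\right)z - \frac{1}{6}\left(1 - q_0 - 3q_0^2 + j_0\right)z^2 + \mathcal{O}\!\left(z^3\right)\right]
$$

The same coefficients appear in both forms. What differs is the variable in which the truncation is made, and that choice matters most at the high-redshift end of the sample.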

In summary, the study demonstrates that a Bayesian, series‑expansion method can extract the expansion history directly from supernova observations without committing to a specific dark‑energy model. The fourth‑order expansion provides the best balance between flexibility and parsimony, and its evidence value offers a quantitative yardstick for assessing the performance of more conventional cosmological models.
