Gravitational Microlensing: A parallel, large-data implementation

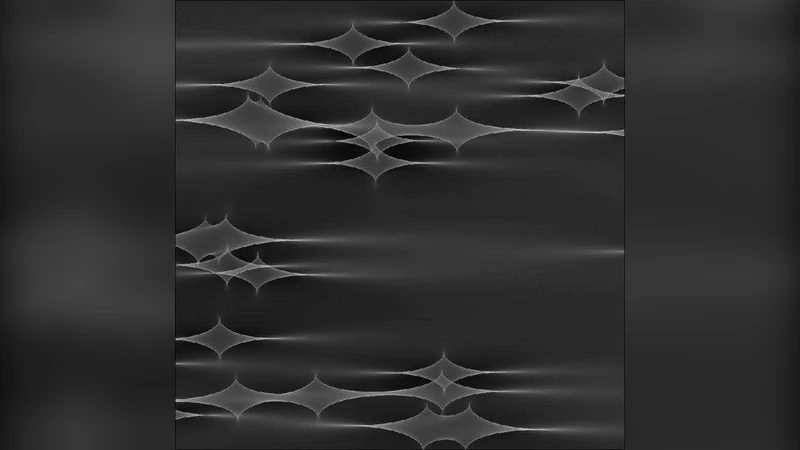

Gravitational lensing allows us to probe the structure of matter on a broad range of astronomical scales. As light from a distant source traverses an intervening galaxy, compact objects such as planets, stars, and black holes act as individual lenses. The magnification from such microlensing produces rapid brightness fluctuations that reveal not only the properties of the lensing masses but also the surface brightness distribution of the source. However, while the deflections due to individual stars combine linearly, the resulting magnifications are highly non-linear, leading to significant computational challenges that currently limit the range of problems that can be tackled. This paper presents a novel implementation of a numerical approach to gravitational microlensing that increases the scale of tractable problems by more than two orders of magnitude, opening up a new regime of astrophysically interesting problems.
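The contrast between linear deflections and non-linear magnifications can be made concrete with the standard point-mass lens equation. This is the textbook form rather than notation taken from the paper: positions are assumed to be in units of the Einstein radius of a unit-mass star, and smooth matter and external shear are omitted for brevity.

$$
\mathbf{y} = \mathbf{x} - \sum_{i=1}^{N} m_i \, \frac{\mathbf{x} - \mathbf{x}_i}{|\mathbf{x} - \mathbf{x}_i|^{2}},
\qquad
\mu(\mathbf{x}) = \left| \det \frac{\partial \mathbf{y}}{\partial \mathbf{x}} \right|^{-1}
$$

Here $\mathbf{x}$ is a position in the lens plane, $\mathbf{y}$ the corresponding position in the source plane, and $\mathbf{x}_i$, $m_i$ the position and mass of star $i$. The deflection is a plain sum over stars, but the magnification is the inverse of a Jacobian determinant, which diverges on the critical curves where the determinant vanishes; these map to caustics in the source plane.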

💡 Research Summary

The paper addresses the long‑standing computational bottleneck in gravitational microlensing simulations: the inverse ray‑shooting step, which scales poorly with both the number of lensing masses and the resolution of the source plane. Traditional implementations, confined to a single CPU core or to modest GPU memory, are limited to lens fields containing at most a few million stars and source grids of order 10⁴ × 10⁴ pixels. To overcome these limits, the authors develop a hybrid high‑performance framework that combines MPI‑based domain decomposition across many compute nodes with CUDA‑accelerated kernels on each node’s GPUs. The lens plane is partitioned into sub‑domains; each MPI rank loads the stellar catalog for its sub‑domain, plus neighboring buffer zones, into host memory and then transfers the data to the GPU. Two complementary data structures are employed: a cell list for rapid identification of nearby stars, and a quad‑tree for hierarchical approximation of the contributions of distant stars. This dual structure reduces the per‑ray interaction cost from O(N) to roughly O(log N) while keeping memory accesses highly localized.
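As a point of reference for the inverse ray‑shooting step described above, here is a minimal single‑process sketch in plain Python. It is not the authors' code: the function names (`deflect`, `shoot_rays`) and parameters are hypothetical, the units are assumed to be Einstein radii of a unit‑mass star, and the deflection uses a direct O(N) sum over stars in place of the cell list and quad‑tree, with no MPI or CUDA involved.

```python
import math


def deflect(x1, x2, stars):
    """Map a lens-plane position to the source plane by direct summation.

    `stars` is a list of (x, y, mass) tuples. This is the O(N)-per-ray
    baseline that a tree or cell-list scheme is meant to accelerate.
    """
    a1 = a2 = 0.0
    for sx, sy, mass in stars:
        dx, dy = x1 - sx, x2 - sy
        r2 = dx * dx + dy * dy
        if r2 == 0.0:
            continue  # skip a ray that lands exactly on a star
        a1 += mass * dx / r2
        a2 += mass * dy / r2
    return x1 - a1, x2 - a2


def shoot_rays(stars, lens_half, src_half, n_pix, rays_per_side):
    """Build an n_pix x n_pix magnification map by inverse ray shooting.

    Rays start on a regular grid covering [-lens_half, lens_half]^2 in the
    lens plane and are binned into pixels covering [-src_half, src_half]^2
    in the source plane.
    """
    counts = [[0] * n_pix for _ in range(n_pix)]
    step = 2.0 * lens_half / rays_per_side  # ray spacing in the lens plane
    pix = 2.0 * src_half / n_pix            # pixel size in the source plane
    for i in range(rays_per_side):
        x1 = -lens_half + (i + 0.5) * step
        for j in range(rays_per_side):
            x2 = -lens_half + (j + 0.5) * step
            y1, y2 = deflect(x1, x2, stars)
            col = math.floor((y1 + src_half) / pix)
            row = math.floor((y2 + src_half) / pix)
            if 0 <= col < n_pix and 0 <= row < n_pix:
                counts[row][col] += 1
    # Rays that would hit each pixel if there were no lenses at all.
    unlensed = (pix / step) ** 2
    return [[c / unlensed for c in row] for row in counts]


if __name__ == "__main__":
    # One unit-mass star at the origin; the shooting region must be large
    # enough to contain every image of the mapped source region.
    mu = shoot_rays([(0.0, 0.0, 1.0)], 3.0, 1.0, 4, 120)
    for row in mu:
        print(" ".join(f"{v:6.2f}" for v in row))
```

The magnification of a pixel is the ratio of collected rays to the number expected without lensing, so an empty star list yields a map of ones. The double loop over rays is the part that maps naturally onto GPU threads, and the inner loop over stars is what the hierarchical data structures are intended to shorten.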

Large‑scale data handling is achieved through parallel HDF5 I/O with on‑the‑fly LZ4 compression, which cuts disk usage by more than half and eliminates I/O contention among ranks. The authors also implement multi‑resolution output, progressively down‑sampling the magnification map to keep the memory footprint manageable at runtime. Performance benchmarks demonstrate near‑linear strong scaling up to hundreds of nodes. For a realistic astrophysical scenario (10⁸ lensing stars and a source plane sampled at 2⁴⁰ points), the total wall‑clock time is under three hours, a speed‑up of more than 150× over the best previously published single‑GPU codes. Accuracy tests against established results show agreement to better than 0.1% in statistical measures such as mean magnification, variance, and power‑spectral density.
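The summary does not say how the multi‑resolution output is produced, so the following is only one plausible sketch: block averaging, which preserves the mean magnification at every level. The names `downsample` and `build_pyramid` are hypothetical, and the map is assumed to be a square list of lists whose side is divisible by the down‑sampling factor.

```python
def downsample(mu, factor=2):
    """Average non-overlapping factor x factor blocks of a square map."""
    n = len(mu)
    if n % factor != 0:
        raise ValueError("map side must be divisible by the factor")
    m = n // factor
    out = []
    for r in range(m):
        row = []
        for c in range(m):
            total = 0.0
            for i in range(factor):
                for j in range(factor):
                    total += mu[r * factor + i][c * factor + j]
            row.append(total / (factor * factor))
        out.append(row)
    return out


def build_pyramid(mu, levels, factor=2):
    """Return [full map, 1st down-sampling, ..., levels-th down-sampling]."""
    maps = [mu]
    for _ in range(levels):
        maps.append(downsample(maps[-1], factor))
    return maps


if __name__ == "__main__":
    full = [
        [1.0, 3.0, 1.0, 1.0],
        [5.0, 7.0, 1.0, 1.0],
        [2.0, 2.0, 4.0, 4.0],
        [2.0, 2.0, 4.0, 4.0],
    ]
    for level, m in enumerate(build_pyramid(full, 2)):
        print(level, m)
```

Each level holds a quarter of the pixels of the one before it, so a full pyramid adds only about a third to the storage of the finest map. If raw ray counts are stored instead of magnifications, the blocks would be summed rather than averaged.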

Beyond raw performance, the paper discusses scientific opportunities unlocked by the new implementation. High‑resolution, large‑field magnification maps enable detailed studies of long‑term quasar microlensing variability, the impact of stellar density fluctuations within lensing galaxies, and the detection of low‑mass exoplanets through subtle microlensing signatures. The authors release the source code under an open‑source license, accompanied by extensive documentation, allowing other research groups to deploy the framework on their own clusters and to extend it to emerging accelerator architectures such as GPUs with tensor cores or TPUs. In summary, this work represents a significant step forward in computational astrophysics, expanding the feasible parameter space for microlensing investigations by more than two orders of magnitude.
