State Information in Bayesian Games

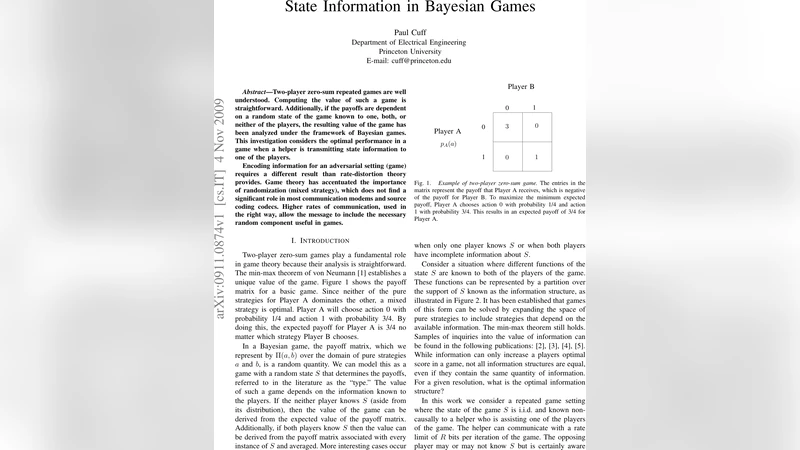

Two-player zero-sum repeated games are well understood, and computing the value of such a game is straightforward. When the payoffs depend on a random state of the game known to one, both, or neither of the players, the resulting value of the game has been analyzed under the framework of Bayesian games. This investigation considers the optimal performance in a game when a helper is transmitting state information to one of the players. Encoding information for an adversarial setting (a game) calls for different results than those rate-distortion theory provides. Game theory has accentuated the importance of randomization (mixed strategies), which does not play a significant role in most communication modems and source coding codecs. Higher rates of communication, used in the right way, allow the message to include the random component that is useful in games.

💡 Research Summary

The paper investigates a novel communication problem that arises when a helper (or “coach”) transmits information about a random state of a zero‑sum repeated game to only one of the two players. While zero‑sum repeated games and Bayesian games are well‑studied, the literature has largely focused on cases where the state is known to both players, to none, or where the state is exogenously revealed without any communication constraints. This work departs from that tradition by explicitly modeling a rate‑limited channel through which the helper can send a message to a single player. The central question is: how should the helper encode the state so that the receiving player can use it most effectively in an adversarial setting?

The authors begin by reviewing the standard formulation of zero‑sum repeated games and the Bayesian extension in which a random state \(S\) is drawn from a known distribution \(P(S)\). In a classical Bayesian game, the value of the game depends on which players observe \(S\). The novelty here is the introduction of a communication link with capacity \(R\) bits per stage that carries a message \(M\). The message must simultaneously convey (i) a compressed representation \(\hat S\) of the state, and (ii) a source of randomness \(Z\) that the receiver can use to randomize his actions (i.e., to implement a mixed strategy). This dual requirement distinguishes the problem from ordinary rate‑distortion theory, where the goal is merely to minimize expected distortion of the reconstructed source.
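The dependence of the value on who observes the state can be illustrated with a small sketch. Everything in it is an assumption made for illustration and is not taken from the paper: the two 2x2 payoff matrices, the uniform prior, the helper names (`value_2x2`, `value_row_informed`), and the restriction to a single stage rather than the repeated game. The informed-player value is approximated by a grid search over the column player's mixed strategy.

```python
def value_2x2(A):
    """Value of a 2x2 zero-sum matrix game for the row player (the maximizer)."""
    (a, b), (c, d) = A
    maximin = max(min(a, b), min(c, d))  # best pure guarantee for the row player
    minimax = min(max(a, c), max(b, d))  # best pure guarantee for the column player
    if maximin == minimax:               # saddle point: pure strategies are optimal
        return maximin
    # No saddle point: both players mix (standard closed form for 2x2 games).
    return (a * d - b * c) / (a + d - b - c)


def value_row_informed(games, prior, grid=1000):
    """Single-stage value when only the row player observes the state.

    The column player commits to playing column 0 with probability q; the row
    player best-responds separately in each state. A grid search over q
    approximates the minimum.
    """
    best = float("inf")
    for k in range(grid + 1):
        q = k / grid
        total = 0.0
        for A, p in zip(games, prior):
            total += p * max(row[0] * q + row[1] * (1 - q) for row in A)
        best = min(best, total)
    return best


# Hypothetical example: two states, each with its own payoff matrix.
A0 = [[1, 0], [0, 0]]
A1 = [[0, 0], [0, 1]]
games, prior = [A0, A1], [0.5, 0.5]

# Neither player observes S: both face the average payoff matrix.
average = [[sum(p * A[i][j] for A, p in zip(games, prior)) for j in range(2)]
           for i in range(2)]
v_neither = value_2x2(average)

# Both players observe S: average of the per-state values.
v_both = sum(p * value_2x2(A) for A, p in zip(games, prior))

# Only the row player observes S.
v_row = value_row_informed(games, prior)

print(v_row, v_neither, v_both)
```

In this toy instance the row player secures about 0.5 when it alone observes the state, 0.25 when neither player does, and 0 when both do, so the same payoff matrices give three different values depending on the information structure.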

To capture the joint role of information and randomness, the authors define an “information‑transmission complex” consisting of the pair \((I(S;M), H(Z|S,M))\). The first component measures how much of the state’s entropy is conveyed, while the second quantifies the amount of additional randomness that the message supplies beyond what is already known from the state. They prove that the game value \(V(R)\) is a monotone, concave function of this complex: higher mutual information and more residual randomness both raise the achievable payoff for the informed player (and lower it for the opponent). In the limit of unlimited rate, the helper can transmit the full state and a perfectly uniform random seed, reproducing the classic complete‑information value of the game.
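Why residual randomness matters in an adversarial setting can be seen in a minimal sketch. It does not implement the information‑transmission complex itself: as a loudly stated assumption, it uses matching pennies and the entropy of a single mixed action as a stand-in for the randomness available to the informed player, and it assumes the opponent knows the mixing probability and best-responds. The names `binary_entropy` and `security_level` are hypothetical.

```python
import math

# Matching pennies: the row player wins 1 on a match and loses 1 otherwise.
MATCHING_PENNIES = [[1, -1], [-1, 1]]


def binary_entropy(p):
    """Entropy in bits of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def security_level(A, p):
    """Worst-case expected payoff when the row player plays row 0 with
    probability p and the opponent, knowing p, picks the worst column."""
    return min(p * A[0][j] + (1 - p) * A[1][j] for j in range(len(A[0])))


# Less randomness in the action (lower entropy) gives a worse guarantee.
for p in (1.0, 0.9, 0.75, 0.5):
    print(p, binary_entropy(p), security_level(MATCHING_PENNIES, p))
```

With no randomness (p = 1) the row player is fully predictable and is held to -1; with one full bit of randomness (p = 0.5) the guarantee rises to the game's value of 0.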

When the rate is constrained, a trade‑off emerges. The paper introduces a “game‑oriented rate‑distortion function” \(R(D,\rho)\), where \(D\) is the expected distortion of the state estimate and \(\rho\) is a measure of randomness deficiency. Unlike the traditional rate‑distortion function \(R(D)\), the new function must satisfy …
