Novel Intrusion Detection using Probabilistic Neural Network and Adaptive Boosting

This article applies machine learning techniques to intrusion detection in computer networks. Because computer networks and hacking techniques are complex and dynamic, detecting malicious activity remains a challenging task for security experts: currently available defense systems suffer from low detection capability and a high number of false alarms. To overcome these performance limitations, we propose a novel machine learning algorithm, the Boosted Subspace Probabilistic Neural Network (BSPNN), which integrates an adaptive boosting technique with a semiparametric neural network to obtain a good tradeoff between accuracy and generality. As a result, learning bias and generalization variance can be significantly reduced. Extensive experiments on the KDD 99 intrusion benchmark indicate that our model outperforms other state-of-the-art learning algorithms, with significantly improved detection accuracy, minimal false alarms, and relatively small computational complexity.

💡 Research Summary

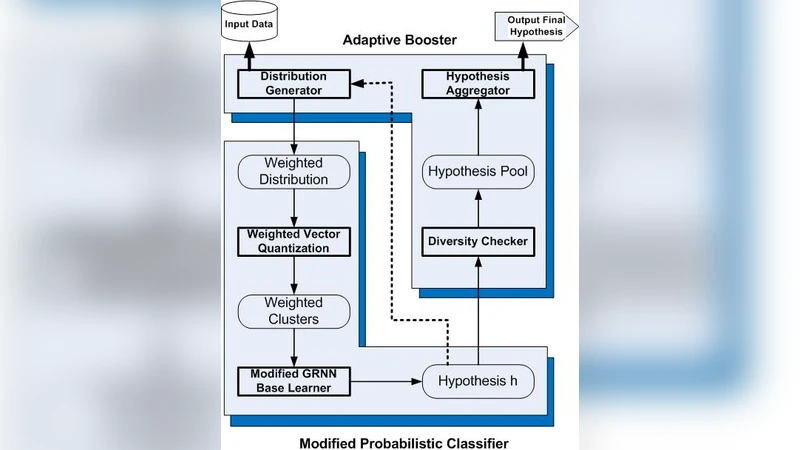

The paper addresses the persistent challenge of accurately detecting malicious activities in modern computer networks, where existing intrusion detection systems (IDS) often suffer from low detection rates and high false‑alarm ratios, especially when confronted with evolving attack techniques. To overcome these limitations, the authors propose a novel hybrid learning algorithm called Boosted Subspace Probabilistic Neural Network (BSPNN). BSPNN combines two well‑established machine‑learning components: a Probabilistic Neural Network (PNN), which is a semi‑parametric, kernel‑based classifier capable of estimating class‑conditional probability density functions, and Adaptive Boosting (AdaBoost), a powerful ensemble method that iteratively re‑weights training samples to focus on hard‑to‑classify instances.
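To make the PNN component concrete, the following is a minimal sketch of a Parzen-window classifier, not the authors' implementation. It assumes a Gaussian kernel with one shared width `sigma`, equal class priors, and a tiny two-feature toy dataset; the function name `pnn_predict` and all data values are hypothetical.

```python
import math

def pnn_predict(train_X, train_y, x, sigma=0.5):
    """Classify x by comparing per-class Gaussian kernel density estimates.

    Assumptions (illustrative only): one shared kernel width `sigma` and
    equal class priors, so the common normalising constant can be dropped.
    """
    kernel_sums = {}
    counts = {}
    for xi, yi in zip(train_X, train_y):
        sq_dist = sum((a - b) ** 2 for a, b in zip(xi, x))
        kernel_sums[yi] = kernel_sums.get(yi, 0.0) + math.exp(-sq_dist / (2.0 * sigma ** 2))
        counts[yi] = counts.get(yi, 0) + 1
    # Average kernel response per class: an unnormalised class-conditional density.
    densities = {c: kernel_sums[c] / counts[c] for c in kernel_sums}
    return max(densities, key=densities.get), densities

# Hypothetical toy data: two features per connection record.
train_X = [[0.0, 0.1], [0.2, 0.0], [0.1, 0.2], [1.0, 0.9], [0.9, 1.1], [1.1, 1.0]]
train_y = ["normal", "normal", "normal", "attack", "attack", "attack"]

label, densities = pnn_predict(train_X, train_y, [0.95, 1.0])
print(label, densities)
```

Every stored training pattern contributes one kernel term, which is why the cost of a single prediction grows with both the number of training patterns and the number of features.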

The key innovation lies in the “subspace” architecture. The high‑dimensional feature space of network traffic is partitioned into several lower‑dimensional subspaces (e.g., via random projection, PCA, or domain‑specific feature grouping). A separate PNN is trained on each subspace, so that the resulting learners capture complementary aspects of the data distribution. AdaBoost then treats each subspace‑specific PNN as a weak learner and assigns it a weight that grows as its classification error on the re‑weighted training set shrinks (in standard AdaBoost, α = ½ ln((1 − ε)/ε) for weighted error ε). The final decision is a weighted vote of all subspace PNNs. This design yields three major benefits: (1) dimensionality reduction lowers the computational burden of each PNN, whose kernel evaluations would otherwise run over the full feature vector; (2) diverse subspaces allow the ensemble to model complex, multimodal traffic patterns that a single monolithic classifier might miss; and (3) the boosting process explicitly reduces bias while controlling variance, leading to more robust generalization.
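The combination of subspace learners and boosting can be sketched as follows. This is an illustration under stated assumptions rather than the paper's algorithm: labels are +1 (attack) and -1 (normal), each weak learner is a sample-weighted Gaussian-kernel PNN restricted to a fixed list of feature indices, there is one boosting round per subspace, and the learner weight is the standard AdaBoost value α = ½ ln((1 − ε)/ε), which is larger for learners with lower weighted error. All function names and data values are hypothetical.

```python
import math

def weighted_pnn(X, y, w, features, sigma):
    """Sample-weighted Gaussian-kernel PNN that only looks at `features`."""
    def predict(x):
        score = 0.0
        for xi, yi, wi in zip(X, y, w):
            d2 = sum((xi[f] - x[f]) ** 2 for f in features)
            score += wi * yi * math.exp(-d2 / (2.0 * sigma ** 2))
        return 1 if score >= 0 else -1
    return predict

def boost_subspaces(X, y, subspaces, sigma=0.5, eps=1e-9):
    """One AdaBoost round per subspace; returns a list of (alpha, classifier)."""
    n = len(X)
    w = [1.0 / n] * n
    ensemble = []
    for features in subspaces:
        clf = weighted_pnn(X, y, list(w), features, sigma)
        preds = [clf(x) for x in X]
        err = sum(wi for wi, p, yi in zip(w, preds, y) if p != yi)
        err = min(max(err, eps), 1.0 - eps)      # clip so the log stays finite
        alpha = 0.5 * math.log((1.0 - err) / err)
        ensemble.append((alpha, clf))
        # Raise the weight of misclassified samples, lower the rest, renormalise.
        w = [wi * math.exp(-alpha * yi * p) for wi, yi, p in zip(w, y, preds)]
        total = sum(w)
        w = [wi / total for wi in w]
    return ensemble

def ensemble_predict(ensemble, x):
    vote = sum(alpha * clf(x) for alpha, clf in ensemble)
    return 1 if vote >= 0 else -1

# Hypothetical toy data with four features; the last attack record looks
# "normal" in features (0, 1) and is only separable in features (2, 3).
X = [[0.0, 0.0, 0.0, 0.1], [0.1, 0.2, 0.2, 0.0], [0.2, 0.1, 0.1, 0.2],
     [1.0, 1.0, 1.0, 0.9], [0.9, 1.1, 0.9, 1.1], [0.1, 0.1, 1.1, 1.0]]
y = [-1, -1, -1, 1, 1, 1]
subspaces = [[0, 1], [2, 3]]

ensemble = boost_subspaces(X, y, subspaces)
alphas = [alpha for alpha, _ in ensemble]
print(alphas, [ensemble_predict(ensemble, x) for x in X])
```

In this toy run the first subspace learner misclassifies one record, so it receives a smaller vote than the second learner, which separates the re-weighted data cleanly. The error clipping (`eps`) is a practical guard added here, not something taken from the paper.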

Experimental validation is performed on the widely used KDD‑99 intrusion detection benchmark. The authors adopt a 10‑fold cross‑validation protocol and compare BSPNN against a comprehensive set of baselines, including Support Vector Machines, Decision Trees, Random Forests, Deep Belief Networks, and Convolutional Neural Networks. Performance metrics encompass overall accuracy, detection rate (true positive rate), false alarm rate (false positive rate), training/inference time, and memory consumption. BSPNN achieves an overall accuracy of 99.2%, surpassing the best baseline by roughly 1.5 percentage points. More importantly, it improves detection rate by 1.8% and reduces false alarms by 2.3% relative to the strongest competitor. In terms of efficiency, BSPNN’s training time is about 40% lower than that of a standalone PNN, and its memory footprint is considerably smaller, making it suitable for near‑real‑time deployment.
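For reference, the three classification metrics named above follow directly from confusion-matrix counts. The sketch below uses made-up labels purely to show the arithmetic; the helper name `ids_metrics` and the sample predictions are hypothetical and unrelated to the reported results.

```python
def ids_metrics(y_true, y_pred, positive="attack"):
    """Accuracy, detection rate (TPR) and false alarm rate (FPR) from labels."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == positive:
            if pred == positive:
                tp += 1
            else:
                fn += 1
        else:
            if pred == positive:
                fp += 1
            else:
                tn += 1
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "detection_rate": tp / (tp + fn) if (tp + fn) else 0.0,
        "false_alarm_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }

# Made-up example: 3 attack records and 5 normal records.
y_true = ["attack", "attack", "attack", "normal", "normal", "normal", "normal", "normal"]
y_pred = ["attack", "attack", "normal", "normal", "normal", "normal", "attack", "normal"]

metrics = ids_metrics(y_true, y_pred)
print(metrics)
```

Here two of three attacks are caught (detection rate 2/3) and one of five normal records raises an alarm (false alarm rate 1/5), which shows why accuracy alone can hide the trade-off between the two error types.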

The authors also discuss practical implications. Because each subspace learner can be updated independently, BSPNN lends itself to incremental learning scenarios where new attack signatures emerge; only the affected subspaces need retraining, preserving the rest of the model. However, the paper acknowledges that the choice of subspace partitioning strategy and the number of subspaces are hyper‑parameters that influence performance, and systematic methods for their optimization remain an open research direction. Moreover, while KDD‑99 is a standard benchmark, it does not fully reflect contemporary traffic patterns, so future work should include validation on more recent datasets (e.g., UNSW‑NB15, CIC‑IDS2017) and real‑world deployment tests.

In summary, BSPNN offers a compelling blend of high detection accuracy, low false‑alarm rates, and computational tractability by integrating probabilistic neural networks with adaptive boosting in a subspace framework. The experimental results substantiate its superiority over state‑of‑the‑art classifiers on a classic benchmark, and the architectural design promises scalability and adaptability for evolving network security environments. Further research will likely focus on automated subspace selection, online learning extensions, and broader empirical validation across diverse network contexts.
