Local likelihood estimation of local parameters for nonstationary random fields

We develop a weighted local likelihood estimate for the parameters that govern the local spatial dependency of a locally stationary random field. The advantage of this local likelihood estimate is that it smoothly downweights the influence of far away observations, works for irregular sampling locations, and when designed appropriately, can trade bias and variance for reducing estimation error. This paper starts with an exposition of our technique on the problem of estimating an unknown positive function when multiplied by a stationary random field. This example gives concrete evidence of the benefits of our local likelihood as compared to naïve local likelihoods where the stationary model is assumed throughout a neighborhood. We then discuss the difficult problem of estimating a bandwidth parameter that controls the amount of influence from distant observations. Finally we present a simulation experiment for estimating the local smoothness of a local Matérn random field when observing the field at random sampling locations in $[0,1]^2$.

💡 Research Summary

This paper introduces a weighted local likelihood framework for estimating spatially varying parameters of non‑stationary random fields. Classical local likelihood methods typically treat all observations within a fixed neighbourhood as equally informative, which can lead to substantial bias when sampling locations are irregular or when the true field exhibits rapid spatial variation. The authors overcome these limitations by incorporating a distance‑based weighting function into the local likelihood, thereby smoothly down‑weighting the influence of distant observations.

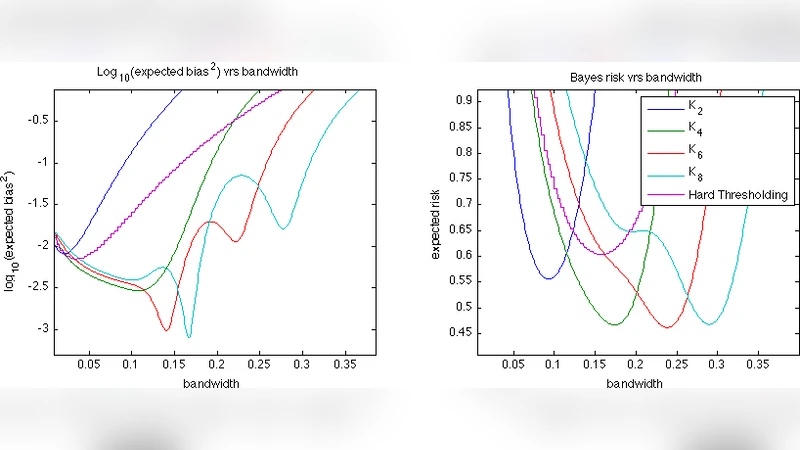

The methodology is built around a kernel weight w_i = K(‖s_i – s‖/λ), where s_i is the location of the i-th observation, s is the location at which the parameters are estimated, K is a chosen kernel (e.g., Gaussian or Epanechnikov), and λ is a bandwidth parameter controlling the spatial extent of the neighbourhood. The weighted local likelihood for a parameter vector θ at location s is defined as

L(θ; Y, s) = ∏_i
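
The kernel weights w_i = K(‖s_i – s‖/λ) described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names (`gaussian_kernel`, `epanechnikov_kernel`, `kernel_weights`), the unnormalised Gaussian form, and the example bandwidth of 0.1 are hypothetical choices, not taken from the paper. The sketch covers the weights alone; how they enter the likelihood is not reproduced here because the formula above is incomplete in this summary.

```python
import numpy as np


def gaussian_kernel(u):
    """Unnormalised Gaussian kernel, K(u) = exp(-u^2 / 2). Assumed form."""
    return np.exp(-0.5 * u**2)


def epanechnikov_kernel(u):
    """Epanechnikov kernel, K(u) = 0.75 * (1 - u^2) for |u| <= 1, else 0."""
    return np.where(np.abs(u) <= 1.0, 0.75 * (1.0 - u**2), 0.0)


def kernel_weights(sites, s, lam, kernel=gaussian_kernel):
    """Return w_i = K(||s_i - s|| / lam) for every row s_i of `sites`.

    sites : (n, d) array of sampling locations (hypothetical input layout)
    s     : (d,) target location where the parameters are estimated
    lam   : positive bandwidth
    """
    sites = np.asarray(sites, dtype=float)
    s = np.asarray(s, dtype=float)
    dist = np.linalg.norm(sites - s, axis=1)  # Euclidean distances to s
    return kernel(dist / lam)


# Random sampling locations in [0, 1]^2, as in the simulation setting.
rng = np.random.default_rng(0)
sites = rng.uniform(0.0, 1.0, size=(200, 2))
s0 = np.array([0.5, 0.5])

w_gauss = kernel_weights(sites, s0, lam=0.1)
w_epan = kernel_weights(sites, s0, lam=0.1, kernel=epanechnikov_kernel)
```

With the Gaussian kernel every observation keeps a strictly positive weight that decays with distance, whereas the compactly supported Epanechnikov kernel assigns exactly zero weight to observations farther than λ from s.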
