Distinguishing Cause and Effect via Second Order Exponential Models

We propose a method to infer causal structures containing both discrete and continuous variables. The idea is to select causal hypotheses for which the conditional density of every variable, given its causes, becomes smooth. We define a family of smo…

Authors: Dominik Janzing, Xiaohai Sun, Bernhard Schoelkopf

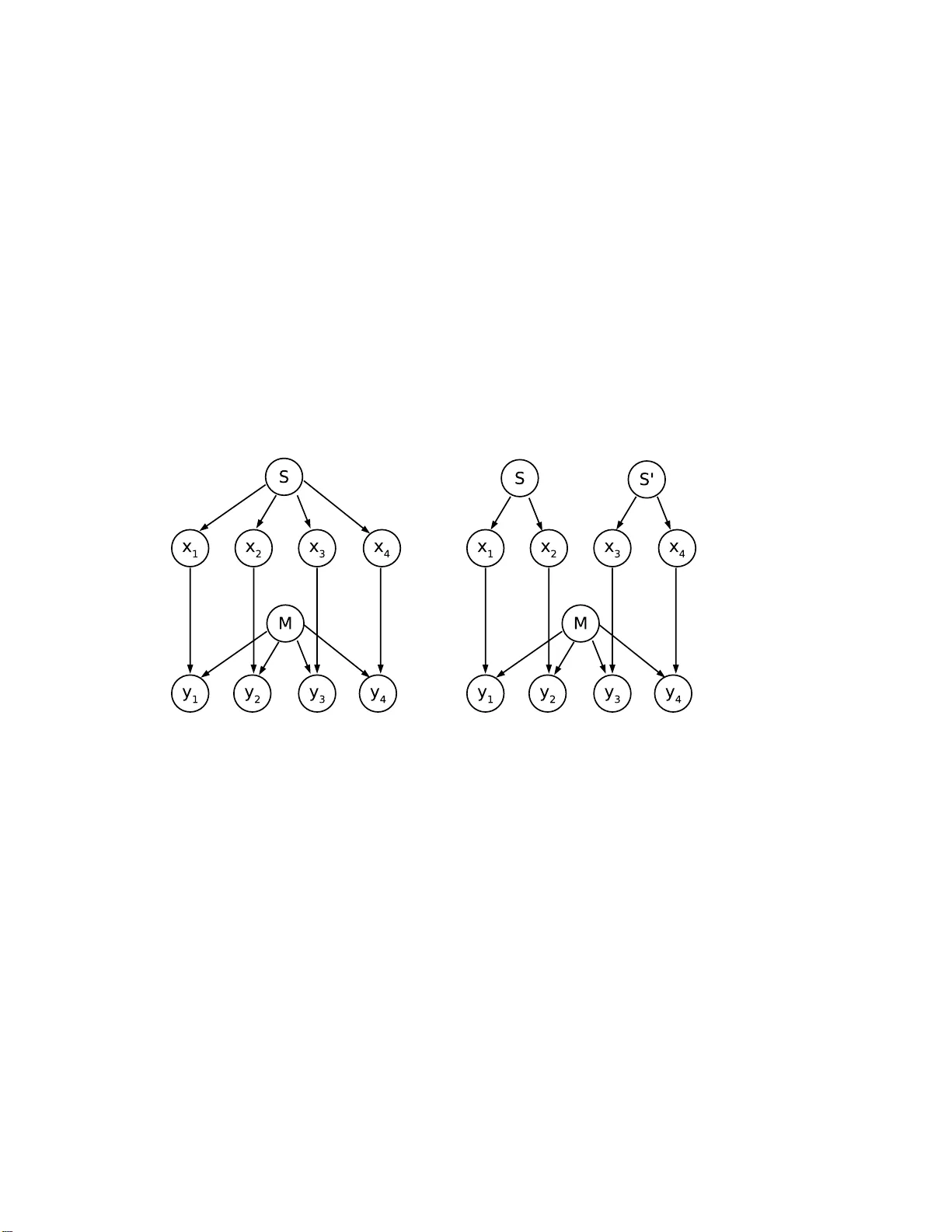

Distinguishing Cause and Effect via Second Order Exp onen tial Mo dels Dominik Janzing ∗ , Xiaohai Sun, and Bernhard Sc h¨ olk opf Max Planck Institute for Biolo gical Cyb er netics 72076 T¨ ubingen, Germany 29 Octob er 2009 Abstract W e prop ose a metho d to infer causal structures containing both dis- crete and co nt inu ous v ar iables. The idea is to select caus al hypothes es for which the conditiona l density of ev ery v ar iable, given its caus e s, be- comes s mo oth. W e define a family o f smo oth densities and conditio na l densities by second order exp onential mo dels, i.e., by maximizing con- ditional ent ropy sub ject to first and second statistical moments. If some of the v aria ble s take only v alues in pr op er subsets o f R n , these conditionals can induce different families of joint distributions e ven for Marko v -equiv alent gra phs . W e cons ide r the ca se o f one binary and one r e al-v alued v ariable where the method ca n distinguish b etw een ca use a nd effect. Using this example, we describ e that sometimes a ca usal hypo thesis must b e rejected b ecause P ( effect | cause ) and P ( cause ) share algor ithmic in- formation (which is un typical if they a re chosen indep endently). This wa y , our metho d is in the same spirit as faithfulness-based causa l in- ference b ecause it also rejects non-ge ner ic mutual a djustmen ts among D AG-parameters . 1 In tro duction Finding causal stru ctures that generated the statistical dep endences among observ ed v ariables has attracted increasing in terest in mac hin e learning. Al- though there is in p rinciple no metho d for reliably iden tifying causal struc- tures if no randomized studies are feasible, the seminal w ork of S pirtes et ∗ email: dominik.janzing@tueb in gen.mpg.de 1 al. [1] and Pea r l [2] made it clear th at un der reasonable assumptions it is p ossible to deriv e causal information fr om pu r ely observ ational data. The formal language of the conv en tional approac hes is a graph ical m o del, where the random v ariables are the no des of a directed acyclic graph (D A G) and an arr ow fr om v ariable X to Y indicates that th ere is a dir ect causal influence from X to Y . The definition of “direct causal effect” from X to Y refers to a hyp othetical interv en tion where all v ariables in the mo del except from X and Y are adjusted to fi xed v alues and one observ es whether th e distribution of Y c hanges while X is adjusted to different v alues. As clarified in detail in [2], the change of the d istribution of Y in suc h an interv en tion can b e deriv ed from the join t distribution of all r elev ant v ariables after the causal D A G is giv en. The essen tial p ostulate that connects stati stics to causalit y is the s o- called causal Marko v condition stating that every v ariable is conditionally indep end en t of its non-effects, giv en its direct causes [2]. If the join t distribu- tion of X 1 , . . . , X n has a d ensit y p ( x 1 , . . . , x n ) with resp ect to some pro d uct measure µ (wh ic h we assu me throughout the pap er), the latter factorizes [3] in to p ( x 1 , . . . , x n ) = Π n j =1 p ( x j | pa j ) , (1) where pa j is the set of all v alues of the paren ts of X j with resp ect to the true causal graph. Th e conditional dens ities p ( x j | pa j ) will b e called Markov kernels . They r epresen t the mec hanism that generate the statistical d ep en- dences. A large class of known causal inference algorithms (lik e, for instance, PC, IC , F CI, see [4, 2]) are based on the causal faithfulness principle wh ic h reads: among all graphs that render the join t d istribution Marko vian, prefer those structures that allo w only the observe d conditional d ep endences. In other words, faithfulness is b ased on the assumption th at all the observe d indep end ences are du e to the causal structure rather than b eing a result of sp ecific adjustments of parameters. On e of the main limitations of this t y p e of indep endence-based causal inference is th at there are typical ly a large n u m b er of D A Gs that indu ce the same s et of indep end ences. Rules for the selection of hyp otheses within these Markov e quivalenc e classes are therefore desirable. Before we d escrib e our metho d , w e b riefly ske tc h some metho ds from the literat ure. [5] ha v e obs erv ed that linear causal relationships b et ween non-Gaussian d istributed rand om v ariables ind uce join t measures whic h re- quire non-linear cause-effect relations for the wrong causal d irections. Their causal inference p rinciple [6] for linear non-Gaussian acyclic mo dels (short: 2 LiNGAM), is based on indep endent comp onen t analysis. 1 It selects causal h yp otheses for whic h almost linear cause-effect relations are sufficient when- ev er suc h hypotheses are p ossible for a give n d istr ibution. 2 [8] generalized this idea to the case w here every v ariable is a p ossibly (non-linear) fun ction of its direct causes up to some additiv e n oise term th at is indep endent of the causes (see also [9] and [10]). Under this assu m ption, different causal struc- tures in duce, in the generic case, d ifferen t classes of join t distributions ev en if th e causal graph s b elong to the same equiv alence class. [11, 12] generalized the mo del class to the case where ev ery fun ction is additionally s u b jected to non-linear distortion compared to the mo dels of [8]. Ho wev er, all these algo- rithms only work for r eal-v alued v ariables and th e generalizati on to discrete v ariables is not straightfo rw ard. Here we describ e a metho d (first prop osed in our conference pap er [13]) that can deal with com bin ations of discrete and con tinuous v ariables, it ev en b enefits fr om such a combinatio n . More precisely , it requ ires that at least one of the v ariables is d iscrete or attai ns only v alues in a p r op er subset of R n . W e define a p arametric family of conditionals that induce differen t families of j oin t distribu tions for different causal directions. The under lyin g idea is related to an ob s erv ation of [14] stating that the same join t distrib ution of com binations of discrete and con tinuous v ariables ma y ha ve descriptions in terms of simple Marko v k ernels for one D AG b ut require more complex ones for other D A Gs. In contrast to [14], w e d efine families of Marko v k ernels that are deriv ed fr om a u nique pr inciple, regardless of whether the v ariables are discrete or contin uous. T o describ e our idea, assu me that X is a binary v ariable and Y r eal- v alued and that we obser ve th e join t distr ib ution sho wn in Fig. 1: Let p ( y ) b e a b imo dal mixture of t wo Gauss ians suc h that b oth p ( y | x = 0) and p ( y | x = 1) are Gaussians with the same w idth bu t d ifferen t mean. Then it is natur al to assume th at X is the cause and Y the effect b ecause c hanging the v alue of X then w ould simp ly shif t the mean of Y . F or the conv erse mo del Y → X , bimo d alit y of Y remains unexplained. Moreov er, it s eems unlik ely , that conditioning on the effect X separates the t wo mo des of p ( y ) ev en though X is not causally r esp onsib le for the bimo dalit y . T o show that ther e are also join t distributions where Y → X is more natural, assume that p ( y ) is Gaussian and the supp orts of p ( y | x = 0) and p ( y | x = 1) are ( −∞ , y 0 ] an d [ y 0 , ∞ ), resp ectiv ely , as sh o wn in Fig. 2 . One 1 It w as implemented in Matlab by P . O . Hoy er, av ailable at http://w ww.cs.helsinki.fi/group/neuroinf/lingam/ . 2 Apart from this, it has b een shown that the linearit y assumption helps also for causal inference in the presence of latent v ariables (see, e.g. [7]). 3 Figure 1: Left: Joint density p ( x, y ) of a real-v alued v ariable X and a binary v ariable X s ug gesting a mo del X → Y , b ecause the influence o f X on Y then only consists o f shifting the mean of the gaus sian. The causal hypo thesis Y → X is less likely: only spe c ific choices of p ( x | y ) would separate the bimo da l Gaussia n mixture p ( y ) (right) into tw o separ ate mo des. It requires an even more sp ecific conditiona l p ( x | y ) to make the c o mp o nents gaussian. Figure 2: Joint densit y p ( x, y ) of a real-v alued ra ndom v ariable Y and a bina ry v ariable X . The mar g inal distribution p ( y ) is Gaussian. The ca usal hypothesis Y → X is plaus ible: the co nditional p ( x | y ) co rresp o nds to setting x = 1 for all y ab ove a certa in threshold. W e r eject the conv erse hypothesis X → Y b e cause p ( y | x ) and p ( x ) share alg orithmic information: giv e n p ( y | x ), only sp e cific choices of p ( x ) r epro duce the Gaussia n p ( y ), wherea s generic choices of p ( x ) would yield “o dd” densities of the type on the rig ht . 4 can easily think of a causal mec hanism w hose output x is 1 f or all inp uts y ab o ve a certai n threshold y 0 , and 0 otherwise. Assuming X → Y , we w ould requir e a m ec hanism that generates outputs y from inputs x according to p ( y | x ). Giv en t his mec hanism , there is only one d istribution p ( x ) of inputs for which p ( y ) is Gaussian. Hence, the generation of the observe d join t distribution requires m utual adjustmen ts of parameters for this causal mo del. In S ection 4 we will d escrib e m ore formal argumen ts that supp ort this w ay of reasoning. It is based on ideas in [15] and [16] to dr a w causal con- clusions not only from statistic al dep endences. Instead, also algorithmic information can indicate causal directions. 2 Causal inference using second order exp onent ial mo dels Here w e defin e a parametric family of Mark ov kernels p ( x j | pa j ) that describ e a simple w a y h o w X j is influenced b y its parents P A j . Without loss of generalit y , w e w ill only consid er complete acyclic graphs, i.e., the paren ts of X j are given by X 1 , . . . , X j − 1 (the general case is imp licitly includ ed by setting the corresp onding parameters to zero). The domains T j of X j are subsets of R d j with in teger Hausd orff dimen- sion [17] ˜ d j , i.e., w e exclude f r actal subsets. In Sections 3 and 5 we will consider, for instance, interv als in R , circles in R 2 , and countable sub sets of R . W e defin e p ( x 1 ) := exp α T 1 x 1 + x T 1 β 11 x 1 − z 1 p ( x j | x 1 , . . . , x j − 1 ) := exp α T j x j + x T j X i ≤ j β j i x i − z j ( x 1 , . . . , x j − 1 ) , (2) with v ector-v alued parameters α j and matrix-v alued p arameters β j i . The log-partitio n fu nctions z j are giv en by z ( x 1 , . . . , x j − 1 ) := log Z T j exp α T j x j + x T j X i ≤ j β j i x i dµ j ( x j ) , where the reference measure µ j is giv en b y the pro duct of th e Hausdorff measures [17] of the corresp onding dimensions ˜ d j , and only parameters are allo w ed that yield normalizable densities. The term “Hausdorff measure” only formalizes the n atural intuition of a vo lu me of sufficien tly we ll-b eha ved 5 subsets of R n : F or a circle, for instance, it is giv en by the arc length, for coun table s ubsets it is just the counting measure. F or ev ery reordering π of v ariables, the second order conditionals d efine a family of j oint distributions P π . The k ey observ ation on wh ic h our metho d relies is that P π and P π ′ need not to coincide if some of the v ariables X j ha ve domains T j that are pr op er su bsets of R n (if, f or instance, all X j can attain all v alues in R , then P π is the set of all non-degenerate n -v ariate Gaussians for all π and w e cannot giv e p reference to an y causal ordering). Our inference ru le r eads: if there are causal orders π for wh ic h the ob - serv ed d ensit y p is in P π , p refer them to ord erings ˜ π for which p 6∈ P ˜ π . T o apply this idea to finite data where p is not av ailable, we prefer the orderings π f or w hic h th e Kullback- Leibler distance b et ween the empirical distribution and p is min imized, i.e., the lik eliho o d of the d ata is maximized. In S ection 5, we discuss exp erimen ts with just t wo v ariables X, Y . W e ha ve s everal cases wh ere X is binary and Y real- v alued, and one example where X is tw o-dimensional and atta in s v alues on a circle and Y is real- v alued. T h is shows th at also causal structures con taining only con tin uous v ariables can b e dealt with b y our metho d when some of the domains are restricted. W e n o w describ e th e algorithm. Second order mo del causal inference 1. Give n an m × n matrix of observ ations x ( i ) j . 2. Let X 1 , . . . , X n b e an ordering π of the v ariables. 3. In the j th step, compute p ( x j | x 1 , . . . , x j − 1 ) by minimizing the condi- tional in verse log- likelihoo d L π j ( α, β ) := − α T j x j − x T j X i ≤ j β j i x i + z ( x 1 , . . . , x j − 1 ) , with z j ( x 1 , . . . , x j ) := − log Z T j exp( α T x j + x T j X i ≤ j β j i x i ) dµ j ( x 1 ) . T o compute the partition fun ction n um er ically , we discretize and b ound the domain to a fi nite set of p oin ts. 4. Comp u te th e corresp ond ing joint densit y p π ( x 1 , . . . , x n ) and its total log-lik elihoo d L π := n X j =1 L π j 6 5. Select the causal orderings for wh ic h L π is minimal. This can b e a unique ordering or a set of orderings b ecause not all orderings induce differen t families of join t distributions and b ecause v alues L π and L π ′ are considered equal if th eir difference is b elo w a certain thresh old. F or a pr eliminary justification of the approac h, w e recall that condition- als of this kind o ccur from maximizing the conditional Shann on en tropy S ( X j | X 1 , . . . , X j − 1 ) sub ject to P ( X 1 , . . . , X j − 1 ) and s u b ject to the giv en first and second momen ts [18], for more details s ee also [19]: E ( X j ) = c j (3) E ( X T j X i ) = d ij , (4) where E ( Z ) denotes the exp ected v alue of a v ariable Z . F or m u lti-dimens ional X j , the c j are vecto r s and the d ij are m atrices. Bilinea r constrain ts are the simplest constrain ts for whic h the ent rop y maximizatio n yields inte r actions b et ween the v ariables X j (apart fr om this, linear constrain ts w ould not yield normalizable d en sities for unb ounded domains). In this sens e, second order mo dels generate the simp lest non-trivial family of cond itional densi- ties with in a hierarch y of exp onenti al mo dels [20] that are giv en b y en tropy maximization sub j ect to higher order momen ts. [19] provi des a thermo dyn amic justification of second order mo dels. The pap er d escrib es mo d els of interac tin g p h y s ical systems, wh ere the join t d is- tribution is giv en by fi rst maximizing the en tropy of the c ause -system and then the cond itional ent r op y of the effe ct -system, given the distrib ution of the cause. Both en tropy maximizations are sub j ect to energy constrain ts. If w e assume th at the p h ysical energy is a p olynomial of second order in the relev ant observ ables (wh ic h is not unusual in physics), w e obtain exactly the second order mod els in tro du ced here. 3 Iden tifiabilit y results for sp ecial cases Here we describ e examples that sho w how the restriction of the domains to prop er sub s ets of R can mak e the mo dels iden tifiab le. A case with v ector- v alued v ariables has already b een describ ed in [13], where w e ha ve considered the causal r elation b etw een the da y in th e year and the a v erage temp erature of the d ay . The former tak es v alues on a circle in R 2 , the latter is real-v alued. Second order mo d els fr om d a y to temp erature induce seasonal oscillations of the av erage temp er atur e according to a sine fu nction, wh ic h w as closer to the tru th than th e second order mo del f rom temp erature to day in the y ear. 7 Ho we ver, in the follo win g examples we will restrict the atten tion to one- dimensional v ariables. 3.1 One binary and one real-v alued v ariable A simple case where cause and effect is iden tifiable in our mod el class is already giv en b y the motiv ating example with a bin ary v ariable X and a v ariable Y that can attain all v alues in R . Second order mo del for X → Y Using b oth equations (2), w e obtain p ( x = 1) = γ p ( y | x = j ) = 1 √ 2 π ρ e − ( y − ν j ) 2 2 ρ 2 , (5) with parameters γ , ν 0 , ν 1 , ρ . Both distributions p ( y | x = j ) for j = 0 , 1 are ob viously Gaussians w ith equal width and d ifferen t mean, i.e., p ( y ) is a mixture of t wo Gauss ians (see Fig. 1, left). Second order mo del for Y → X W e obtain p ( y ) = 1 √ 2 π σ e − ( y − ν ) 2 2 σ 2 p ( x = 1 | y ) = 1 2 1 + tanh( αy + β ) , (6) with parameters ν, σ, α, β , where w e hav e used e a 1 + e a = 1 e − a + 1 = 1 2 (1 + tanh( a/ 2)) . (7) A t ypical joint d istribution for the m o del Y → X is sho wn in Fig. 3. Since mixtures of tw o different Gaussians can n ev er yield a Gaussian as marginal distribu tion p ( y ), the only joint distrib ution that is contai ned in the mo del classes for b oth dir ections is a pro du ct distribution of a Gaussian p ( y ) and an arbitrary binary d istribution p ( x ). T his shows that the mo d els are iden tifiable except for the trivial case of indep endence. F urthermore, eqs. (5) and (6) show that our metho d is indeed consisten t with the in tuitiv e arguments w e ga v e for the examples in the in tro duction: the Gaussian mixture in Fig. 1 is a second order mo del for X → Y and the example with thresholding y (Fig. 2) can b e appro ximated b y second order mo dels for Y → X via the limit α → ∞ in eq. (6). 8 Figure 3: Joint density p ( x, y ) for binary X and real-v alue d Y induced by a seco nd order mo del Y → X . Her e w e hav e chosen a relatively steep sigmoid function for p ( x = 1 | y ), which leads to a steep decr ease at the rig ht of the left mo de and the left of the right mo de. An infinitely steep sigmoid function yields sharp thres holding as in Fig. 2. 3.2 More t han three binary v ariables W e first simplify equation (2) for the case that all v ariables X 1 , . . . , X n are binary . W riting an j := x 1 , . . . , x j − 1 for the ancestors of x j , w e obtain p ( x j = 1 | an j ) = exp( α j + β j j + P i 0. Due to the err or b ounds (18) and (17) we ha v e e := k f ( ˆ θ ) − ˆ η k ≤ k D f ( θ ) k + δ ( k λ θ ) c + 1 ( k ν η ) c asymptotically with probabilit y 1 − ǫ for any desir ed ǫ > 0. Since η is a generic v alue, the amoun t of information r equired to sp ecify it u p to an ac- curacy e gro ws asymptotically with − log 2 e (up to some negligible constan t). 19 On th e other hand , ˆ η and f ( ˆ θ ) s hare at least this amoun t of information b ecause they also coincide up to an accuracy e . Hence, I ( f ( ˆ θ ) : ˆ η ) + ≥ − log 2 e . Asymptotically , − log 2 e grows w ith c log k . Hence the m u tual in f ormation b et ween ˆ η and f ( ˆ θ ) is asymptotically larger than − cd log 2 k b its for ev ery c < 1 / 2. Hence we h a ve I ( x 1 , . . . , x k : x k +1 , . . . , x 2 k , y k +1 , . . . , y 2 k ) + ≥ I ( f ( ˆ θ ) : ˆ η ) ≥ cd log 2 k . The first inequalit y follo ws b ecause I ( a : b ) + ≥ I (˜ a : ˜ b ) whenev er K ( ˜ a | a ) + = K ( ˜ b | b ) + = 0 (cf. Th eorem I I.7 in [26]). Here K ( f ( ˆ θ ) | x 1 , . . . , x k ) + = 0 b ecause f ( ˆ θ ) is computed from th e k observ ed x -v alues b y the ab o ve esti- mation pro cedu re and the application of f . Lik ewise, ˆ η is der ived from the observ ed ( x, y )-pairs. The case for d < ˜ d is sh o wn similarly . W e estimate η and θ and sho w that th ey s hare algorithmic information b ecause θ is a simple f unction of η . ✷ No w we present our main theorem stating that second ord er mo dels b et ween one binary and one real-v alued v ariables induce join t distribu tions whose non-c ausal marginals and conditionals are algorithmically d ep end ent in the sense of Theorem 1: Theorem 2 (Justification of second order mo del inference) L et X b e a binary variable and Y r e al-value d and the density of p ( x, y ) b e given by a se c ond or der mo del fr om Y to X for some generic values of the p ar ameters ν, σ, α, β in e q. (6), left and right. Then the c ausal hyp othesis X → Y c ontr adicts the algorithm ic Markov c ondition. This is b e c ause the x -values sample d fr om p ( x ) c ontain algorithmic information ab out the ( x, y ) - p airs obtaine d after changing the “input” distribution p ( x ) (se e Fig 4, right) and ke eping p ( y | x ) . Likewise, if p ( x, y ) admits a se c ond or der mo del fr om X to Y with gene ric values γ , ν 0 , ν 1 , ρ (se e e q. (5), then Y → X must b e r eje cte d. The amount of the shar e d algorithmic information gr ows at le ast lo ga- rithmic al ly in the sample size. 20 The remainder of th is section is dev oted to the pro of of T heorem 2 and a Lemma that is requir ed for this pur p ose. T o sho w that the conditions of Theorem 1 are met, we determine th e parameter v ectors θ , η of the non- causal conditionals, s ho w that they satisfy a fu n ctional relation and that the Fisher in formation matrices are nonsingular. T o prov e the latter statemen t, w e will use the follo wing result: Lemma 1 L et p θ ( x ) for al l θ ∈ I ⊂ R d b e a differ entiable f amily of c ontin- uous p ositive definite densities on a pr ob ability sp ac e Ω ⊂ R m with r esp e ct to the r efer enc e me asur e µ . Assume ther e ar e d p oints x 1 , x 2 , . . . , x d such that the matrix A ( θ ) define d by A ( θ ) := ( ∇ p θ ( x 1 ) , . . . , ∇ p θ ( x d )) , or the matrix ˜ A ( θ ) := ( ∇ log p θ ( x 1 ) , . . . , ∇ log p θ ( x d )) is non-singular. Then the Fisher information matrix F θ is non-singular. Pro of: th e Fisher information matrix can b e rewritten as ( F θ ) ij = Z Ω 1 p θ ( x ) ∂ p θ ( x ) ∂ θ i ∂ p θ ( x ) ∂ θ j dµ ( x ) . Hence, F θ = Z Ω 1 p θ ( x ) ( ∇ p θ ( x ))( ∇ p θ ( x )) T dµ ( x ) = Z Ω p θ ( x )( ∇ log p θ ( x ))( ∇ log p θ ( x )) T dµ ( x ) . It th us is the w eighte d integ r al o ver all rank one matrices ( ∇ p θ ( x ))( ∇ p θ ( x )) T . A t the same time, it can also b e w ritten as a w eigh ted in tegral ov er all ( ∇ log p θ ( x ))( ∇ log p θ ( x )) T . Note that for an y v ector-v alued con tinuous fu nction v and strictly p ositiv e scalar function q , the image of th e matrix Z q ( x ) v ( x ) v ( x ) T dµ ( x ) is giv en by th e s p an of all v ( x ). F θ th u s is th e s p an o v er all {∇ p θ ( x ) } x and, at the same time, the s p an o v er all {∇ log p θ ( x ) } x . ✷ 21 W e are n o w able to pro ve the m ain theorem: Pro of (of T heorem 2): First consider the case wh ere p ( x, y ) has a second order mo del fr om Y to X . T o apply Th eorem 1 we ha v e to show th at G θ , η is non-singular. W e can use Lemma 1 ev en though it is not explicitly stated for c onditional d ensities b ecause we can apply the latter to the join t d ensit y p η ( x ) := p θ ( x ) p η ( y | x ) for x := ( x, y ) and fi xed θ . T hen ∇ log p η ( x, y ) = ∇ log p η ( y | x ) , and ∇ p η ( x, y ) = p θ ( x ) ∇ p η ( y | x ) , i.e., it is sufficient to c heck whether the gradients of the c onditiona l or its logarithm span a ˜ d -dimensional space. W e hav e p σ , ν,α,β ( x = 1 , y ) = 1 2 σ √ 2 π (1 + tanh( αy + β )) e − ( y − ν ) 2 2 σ 2 = e − ( y − ν ) 2 2 σ 2 σ √ 2 π (1 + e 2 αy +2 β ) , where w e hav e used eq. (7). T his yields p σ , ν,α,β ( x = 1) = 1 σ √ 2 π Z e − ( y − ν ) 2 2 σ 2 1 + e 2 αy +2 β dy . (19) In tr o ducing the parameter v ector η := ( σ, ν , α, β ) we obtain p η ( y | x = 1) = 1 p η ( x = 1) e − ( y − ν ) 2 2 σ 2 σ √ 2 π (1 + e 2 αy +2 β ) , where the inpu t d istribution p ( x ) still is formally parameterized by η and will b e written in terms of one r elev ant p arameter θ b elo w. In th e app endix w e pr o vide 4 p oints y 1 , . . . , y 4 and a v alue η = η 0 for whic h the v ectors ∇ p η ( y j | x = 1) are linearly indep endent. Hence G η,θ is n on-singular for η 0 and all θ . All entries of G θ , η are analytical fu n ctions in ev ery comp onent of η b ecause they are uniformly conv erging in tegrals o v er analytical functions. Hence, regularit y of G η,θ for one η already sho ws regularity for generic η . No w we p arameterize p ( x ) by an one-dimensional p arameter θ := p η ( x = 1) = g ( η ) , where p η ( x ) is giv en b y the integ ral in eq. (19). T his defines the family of densities p θ ( x ) via p θ ( x = 1) := θ . 22 Hence F θ is one-dimens ional. It is clearly non -sin gular for generic θ b ecause ∂ p θ ( x = 1) ∂ θ 6 = 0 . Using K ( g ) + = 0, Theorem 1 sho ws that the x v alues sampled from p θ ( x ) share algorithmic information with the ( x, y )-pairs samp led from p θ ′ ( x ) p η ( y | x ). No w consider th e case that there is a second order mo del from X to Y . Hence p θ ( y ) = 1 ρ √ 2 π (1 − γ ) e − ( y − ν 0 ) 2 2 ρ 2 + γ e − ( y − ν 1 ) 2 2 ρ 2 , with the parameter v ector θ = ( γ , ν 0 , ν 1 , ρ ). Note that w e now app ly Theo- rem 1 with exchanging the role of X and Y . T o show that F θ is n on-singular w e compute ∇ p θ ( y ) and fin d p oints y 1 , . . . , y 4 and a v alue θ su ch that the corresp ondin g gradients are linearly in d ep endent (see App endix 7). Hence F θ is nonsingular due to Lemma 1. As ab ov e, this also holds for generic θ . F or the cond itional den sit y of X give n Y , only a fu nction of θ is relev ant (as ab ov e) bu t we start by w riting it fi rst in terms of θ a n d redu ce the parameter space later to th e r elev ant p art: p γ ,ν 0 ,ν 1 ,ρ ( x = 1 | y ) = γ e − ( y − ν 1 ) 2 2 ρ 2 (1 − γ ) e − ( y − ν 0 ) 2 2 ρ 2 + γ e − ( y − ν 1 ) 2 2 ρ 2 − 1 . In tr o ducing α := 1 ρ 2 ( ν 0 − ν 1 ) (20) and β := 1 − γ γ exp ν 2 1 − ν 2 0 2 ρ 2 , (21) the conditional is of th e f orm p γ ,α,β ( x = 1 | y ) = 1 β e αy + 1 . W e defin e η := ( α, β ) and c heck that G η,θ is non-singular. F or doing so, w e compute ∇ p η ( x = 1 | y ) and fi nd v alues η 0 and y 1 , . . . , y 2 , y 3 suc h that the gradien ts are linearly indep endent (App endix). Hence G η,θ is non-singular for one η 0 and all θ and thus also for generic pairs η , θ . The function g is giv en b y g ( γ , ν 0 , ν 1 , ρ ) := ( α, β ) with α and β as in eqs. (20) and (21), wh ic h satisfies K ( g ) + = 0. This shows that the y -v alues sampled from p θ ( y ) sh are algorithmic information with the ( x, y )-pairs sampled from p θ ′ ( y ) p η ( x | y ) by Theorem 1. ✷ 23 5 Exp erimen ts W e condu cted 8 exp erimen ts with real-wo r ld data for whic h the causal s tr uc- ture is known. In all cases w e h ad pairs of v ariables where one is the cause and one the effect. Ev en though there ma y also b e hidd en common causes, prior kn owledge strongly suggests that a significant p art of the dep end en ces are d ue to an arro w from one v ariable to the other. Th e selection of datasets w as b ased on the follo w ing criteria: W e hav e c hosen sev eral examples where one v ariable is bin ary and the other one is either con tinuous or discrete with a wide r an ge, b ecause this is the case where iden tifiabilit y b ecomes most ob vious (see Sub s ection 3.1). T o demonstrate that w e ha ve id en tifiabilit y for v arious typ es of v alue sets w e hav e also included an example with a v ari- able of angular-t y p e and example with p ositiv e v ariables. T he restriction to p ositiv e v alues, h o wev er, only leads to significantl y d ifferen t distributions for differen t causal directions if there is enough prob ab ility close to the b oun d- ary . Otherw ise, the second order mo dels yield almost biv ariate Gaussians and the direction is not identifiable. Most examples of the data b ase “cause effect pairs” in the NIPS 2008 causalit y comp etition [27] are of this t yp e, except for the examples with “altitude”. Our algorithm constructs the d omains by bin n ing the observe d v alues in to interv als of equal length instead of asking for the range as additional input. If the d ifferen ces of the loglik eliho o d s are too small, our algorithm will not decide for either of the causal directions. W e ha ve set the treshold to | L → − L ← | ≤ 1 10000 L → + L ← 2 . The c h oice of this thr eshold, h o we v er, is the result of our limited num b er of exp eriments. Our theory in Section 4 only states the follo win g: if the true distribution p erfe ctly coincides with a s econd ord er mo d el in one direction but n ot the other, the latter one has to b e rejected b ecause this causal struc- ture would require u nlik ely adjustment s. F or the case where the distribu tion is on ly close to a second ord er mo del it is hard to analyze ho w close it should b e to ju stify our causal conclusion. The answer to this question is left to the future. Meteorological data Exp eriment No. 1 consider s the altitude and a ve rage temp erature of 675 lo- cations in Germany [28]. Th e statistica l dep endence b et ween b oth v ariables is very ob vious fr om scatter plots and one observ es an almost linear decrease 24 of the temp erature with increasing altitude. The fact that a s ignifi can t part of the p oints are close to altitude 0 (i.e., the m in imal v alue) is imp ortant f or iden tifiability of the causal direction b ecause the r estriction of the domain to p ositiv e v alues can only b e relev ant in this case. Exp eriment No. 2 stud ies the r elation b etw een altitude and pr ecipitation of 4748 lo cations in Germany [28]. Here b oth v ariables are p ositiv e-v alued, whic h also leads to differen t mo dels in the t wo directions. In exp erim ent No. 3, w e were giv en the d aily temp erature av erages of 9162 consecutiv e da ys b et we en 1979 and 2004 in F urt w angen, German y [29]. The seasonal cycl e lea d s to a strong statistical dep en dence b et ween the v ariable day in the year (represen ted as a p oint on the unit circle S 1 ⊂ R 2 ) and tempe rature , where the form er should b e considered as the cause since it d escrib es the p osition of the earth on its orb it around the su n. Human categorization Our exp erimen ts No. 4 and No . 5 co n sider tw o dataset s from th e same psyc h ologica l exp erim ent on human categ orization. The sub ject s are sh o wn artificially generated f aces th at in terp olate b et ween male an d female faces [30]. T he interp olation corr ep onds to switching a parameter b et ween 1 and 15 (in inte ger steps). The s ub jects are ask ed to decide whether the face is male ( answer =0) or female ( answer =1). Th e exp erimenta list has c hosen parameter v alues according to a un iform d istribution on { 1 , . . . , 15 } . No. 4 studies the relation b et w een parameter and answer . Since the exp erimenta list c hose un iform distribu tion o ver { 1 , . . . , 15 } and the dep en- dence of the probabilit y f or answer = 1 is close to a sigmoid fu nction, the empirical distribu tion is here v ery close to the second ord er mo del corre- sp ond in g to the correct causal structure parameter → a nswer . Our exp eriment No. 5 stud ies the relation b etw een the resp on s e time and the parameter v alues. Since the resp onse time is minimal for b oth extremes in the parameter v alues, we ha ve strongly non-linear interac tions that cannot b e captured b y second-order mo dels. It is therefore not su rprising that th ere is no d ecision in th is case. Census data Exp eriments No. 6 and No. 7 co n sider census data from 35.326 person s in the USA [31]. In No. 6, the r elation b et wee n age and marital status is studied. The latter take s the t wo v alues 0 f or nev er married and 1 for married, divo rced, or w ido wed. No. 7 considers the r elation b et we en gender 25 and income. Here w e assume that the gender is almost randomized b y nature and there w e th us exp ect no confoundin g to an y observ able v ariable. Constituen ts of wine Exp eriment No.8 consid ers the concen tration of proline in wine from t wo differen t cultiv ars. W e assume that the binary v ariable culti var is the cause, even though one cannot exclude that the proline lev el (if relev ant for the taste) d irectly in fluenced the d ecision of the cultiv ar to c ho ose this sort of wine. List of results The results are shown in the b elo w table. The ground truth is alw ays that v ariable 1 influences v ariable 2, i.e., we ha ve one wrong result and n o decision in t wo cases. No. v ariable 1, d omain v ariable 2, v alue set L → L ← result 1 altitude , R + temperat ure , R 3.3697 3.43 66 → 2 altitude , R + precipit ation , R + 3.5885 3.63 43 → 3 day of the year , S 1 temperat ure , R 5.7448 5.75 27 → 4 paramete r , { 1,. . . ,15 } answer , { 0 , 1 } 4.1143 3.11 50 → 5 paramete r , { 1,. . . ,15 } time , R + 3.9873 3.98 73 ? 6 age , R + marrital status , { 0 , 1 } 4.9918 4.9920 ? 7 sex , { 0 , 1 } income , R + 3.8770 3.87 58 ← 8 cultivar , { 0 , 1 } proline , R + 3.9209 3.94 96 → 6 Discussion and relations to indep endence-based causal inference In section 4 we ha ve sho w n for a sp ecial case that the mo del X → Y must b e r ejected if there is a s econd order mo del fr om Y to X b ecause it required sp ecific mutual adjustment s of p ( x ) and p ( y | x ) to admit suc h a mo d el. W e ha ve already mentioned that this is the same idea as rejecting unfaithfu l distributions. Indeed, [1 5 ] argued that the Mark o v kernels in unf aithful distributions share algo r ithmic inform ation. Hence algorithmic information theory pro vides a unifying framew ork for indep en dence-based approac h es and those that imp ose constrain ts on the shap es of conditional den sities. The follo wing example mak es this link even closer b ecause it shows that in some situations the same constrain ts on a joint distribution ma y app ear 26 Figure 5: Tw o Lay ers in the causal chain. If the co mpo nent s ar e o nly influenced by hor izontally adjacent ones from the lay er ab ove, the Ma rko v condition further simplifies the forw ard time co nditional. as indep endenc e constrain ts from one p oint of view and as constraints on th e shap e of cond itionals from an other p ersp ectiv e. Consider the causal c hain X 1 → X 2 → · · · → X n , (22) where ev ery X j is a vect or of d imension d . Structures of th is kind o ccur, for instance, if X j represent s the state of some system at time t and th e dynamics is generated by a first order Mark o v pro cess. Due to the causal Mark o v condition the join t distribution factorize s into p ( x 1 ) p ( x 2 | x 1 ) · · · p ( x n | p n − 1 ) , but no constrain ts are imp osed on the conditionals p ( x j | x j − 1 ). Assume no w we consider eac h comp onen t X ( i ) j of la y er j as a v ariable in its own righ t and th u s obtain a causal structur e b et we en ˜ n := nd v ariables. Assuming that n o comp onen t X ( i ) j is infl uenced by comp onents of the same la y er , p ( x j | x j − 1 ) m us t b e of the form p ( x j | x j − 1 ) = Π d i =1 p ( x ( i ) j | x j − 1 ) . (23) Moreo v er, if w e assume that ev ery X ( i ) j is only infl uenced by some of the v ariables in th e previous la yer, the conditional further simplifies in to p ( x j | x j − 1 ) = Π d i =1 p ( x ( i ) j | pa j i ) , where pa j i denote the v alues of P A ij , i.e., the parents of X ( i ) j (Fig. 5). Hence, 27 the fi ne-structure of the causal graph imp oses constraints on p ( x j | x j − 1 ) that are not imp osed b y the coarse-grained structur e. Assume we are giv en data from the ab o ve time series, b u t it is not known whether the true causal structure reads X n → X n − 1 → · · · → X 1 , or the one in (22). When resolving the v ectors in their comp onent s , we ha ve to reject the latte r hyp othesis b ecause th e indep end ences X ( i ) j ⊥ ⊥ X ( i ′ ) j | X j − 1 (24) w ould violate f aithfulness. F rom the coarse-grained p ersp ectiv e, w e can only reject th e causal hyp othesis b y imp osing an appr opriate s implicit y pr in ciple of conditionals. Th en w e conclude that (22 ) is more likely to b e true b ecause the Mark o v k ern els are simpler b ecause they satisfy eq. (24). Finding further useful simplicit y constraints has to b e left to the future. 7 App endix 7.1 Matrix for p ( y | x ) W e h a ve : log p σ , ν,α,β ( y | x = 1) = − log σ − log √ 2 π − ( y − ν ) 2 2 σ 2 − log e 2 αy +2 β + 1 − log p σ , ν,α,β ( x = 1) . Hence ∂ log p η ( y | x =1) ∂ ν = − y − ν σ 2 − ∂ log p η ( x =1) ∂ ν =: h 1 ( y ) ∂ log p η ( y | x =1) ∂ σ = − 1 σ + ( y − ν ) 2 σ 3 − ∂ log p η ( x =1) ∂ σ =: h 2 ( y ) ∂ log p η ( y | x =1) ∂ α = − 2 y e 2 αy + 2 β e 2 αy + 2 β +1 − ∂ log p η ( x =1) ∂ α =: h 3 ( y ) ∂ log p η ( y | x =1) ∂ β = − 2 e 2 αy + 2 β e 2 αy + 2 β +1 − ∂ log p η ( x =1) ∂ β =: h 4 ( y ) , In tu itiv ely , it is qu ite eviden t that the f u nctions h j are linearly indep end en t for generic η b ecause h 1 con tains linear terms in y , h 2 is a p olynomial of degree t wo in y , h 3 con tains y and an expression with an exp onent ial fu nc- tion in the denominator, h 3 con tains only the exp onen tial expression in the denominator. W e can thus fin d p oin ts y 1 , . . . , y 4 suc h th at the row ve ctors ( h j ( y 1 ) , h j ( y 2 ) , h j ( y 3 ) , h j ( y 4 )) are lin early indep end en t. Instead of pr o ving 28 this directly (wh ic h would in volv e deriv ativ es of the logarithms of marginals), w e u s e the follo wing indirect argument : Cho ose 5 v alues y ′ 0 , . . . , y ′ 4 and con- sider the ro ws h j ( y ′ 0 ) , h j ( y ′ 1 ) . . . , h j ( y ′ 4 ) j = 1 , . . . , 4 . (25) Consider the pro ject ion of R 5 on to the quotient space R 5 / R (1 , 1 , 1 , 1 , 1) and represent the images of the vect ors (25) by h j ( y ′ 1 ) − h j ( y ′ 0 ) , h j ( y ′ 2 ) − h j ( y ′ 0 ) , . . . , h j ( y ′ 4 ) − h j ( y ′ 0 ) j = 1 , . . . , 4 . Chec k that these 4 ve ctors are linearly indep end en t (whic h can fortunately b e done w ithout computing the deriv ativ es of log σ , ν,α,β p ( x = 1)), h ence the ro w vec tors (25) are in dep end en t, to o. W e can th u s select 4 v alues y 1 , . . . , y 4 from y ′ 0 , . . . , y ′ 4 suc h that the rows ( h j ( y 1 ) , . . . , h j ( y 4 )) are ind ep end ent. W e ha ve numerically c hec ked this for ν = σ = α = β = 1 , y ′ j = j . 7.2 Matrix for p ( y ) The coefficients of ∇ p θ ( y ) read: ∂ p θ ( y ) ∂ γ = 1 √ 2 π ρ − e − ( y − ν 0 ) 2 2 ρ 2 + e − ( y − ν 1 ) 2 2 ρ 2 ∂ p θ ( y ) ∂ ν 0 = 1 √ 2 π ρ (1 − γ )( y − ν 0 ) ρ 2 e − ( y − ν 0 ) 2 2 ρ 2 ∂ p θ ( y ) ∂ ν 1 = 1 √ 2 π ρ γ ( y − ν 1 ) ρ 2 e − ( y − ν 1 ) 2 2 ρ 2 ∂ p θ ( y ) ∂ ρ = 1 √ 2 π ρ (1 − γ ) ( y − ν 0 ) 2 ρ 3 − 1 ρ e ( y − ν 0 ) 2 2 ρ 2 + γ ( y − ν 1 ) 2 ρ 3 − 1 ρ e − ( y − ν 1 ) 2 2 ρ 2 . The v ectors are linearly indep endent for the p oin ts y j = j for j = 1 , . . . , 4 with γ = 1 / 2 , ν 0 = 0 , ν 1 = 1 , ρ = 1. 7.3 Matrix for p ( x | y ) In tr o ducing the function h with h y ( η ) := β e αy + 1 29 w e hav e p η ( x = 1 | y ) = 1 /h y ( η ) and th us ∇ p η ( x = 1 | y ) = − 1 h 2 y ( η ) ∇ h y ( η ) , with ∂ h y ( η ) ∂ α = y β e αy and ∂ h y ( η ) ∂ β = e αy . Since the functions h 1 ( y ) := y β e αy and h 2 ( y ) := e αy are linearly indep endent for generic α, β , w e can ob viously find v alues y 1 , y 2 suc h that ∇ p η ( x = 1 | y 1 ) and ∇ p η ( x = 1 | y 2 ) are lin early in d ep end ent. Ac kno wledgemen ts Thanks to Bastia n Steudel and Jonas Pe ters for sev eral commen ts on an earlier v ersion. References [1] P . Spirtes, C. Glymour, and R. Sc heines. Causation, pr e diction, and se ar ch (L e ctur e notes in statistics) . Sprin ger-V erlag, New Y ork, NY, 1993. [2] J. P earl. Causality: Mo dels, r e asoning, and inf e r e nc e . Cam br idge Uni- v ersity Press, 200 0. [3] S. Lauritzen. Gr aphic al M o dels . Clarendon Press, Oxford, New Y ork, Oxford Statistica l S cience Series edition, 1996 . [4] P . S p irtes, C. Glymour, and R. S c heines. Causation, Pr e diction, and Se ar ch . Lecture Notes in Statistics. Sprin ger, New Y ork, 1993. [5] Y. K ano and S. Shimizu. Causal inference usin g n onnormalit y . In Pr o c e e dings of the International Symp osium on Scienc e of M o deling, the 30th Anniversary of the Information Criterion , pages 261–270 , T okyo , Japan, 2003 . [6] S. Shimizu, A. Hyv¨ arinen, Y. Kano, and P . O. Ho ye r . Disco v ery of non-Gaussian linear causal mo d els us in g ICA. In Pr o c e e dings of the 21st Confer enc e on Unc ertainty in Ar tific i al Intel ligenc e , pages 526– 533, Edin b urgh, UK, 2005. 30 [7] R. Silv a, R. Scheines, C. Glymour , and P . S pirtes. Learning the struc- ture of linear la tent v ariable mo d els. Journal of Machine L e arning R e se ar ch , 7:191–2 46, 2006. [8] P . Hoy er, D. Janzing, J. Mooij, J. P eters, and B Sch¨ olko pf. Nonlinear causal disco v ery with additive noise mo dels. In D. Kolle r , D. Sc h u urmans, Y. B en gio, and L. Bottou, edi- tors, Pr o c e e dings of the c onfer enc e Neur al Information Pr o c ess- ing Systems (NIPS) 200 8 , V ancouve r , Canada, 2009. MIT Press. http://b ooks.nip s.cc/papers/files/nips21/NIPS2008_0266.pdf . [9] J. Mooij, D. Janzing, J . Pe ters, and B. Sch¨ olko p f. Regression by d ep en- dence minimization and its application to causal infer en ce. In Pr o c e e d- ings of the Internationa l Confer enc e on Machine L e arning, Montr e al , 2009. to app ear. [10] D. Janzing, J . Peters, J. Mooij, and B. Sch¨ olko p f. Ident ify in g latent confounders using add itiv e noise mo dels. In Pr o c e e dings of the Confer- enc e Unc ertainty in Artificial Intel ligenc e , Mon treal, 2009. [11] K . Zhang and A. Hyv¨ arinen. Distinguishing cause and effect u s- ing non-linear a cyclic mo dels. In T o app e ar in: P r o c e e dings of the NIPS 2008 workshop “Causality: Obje ctives and Assessment” , 2009. http://v ideolect ures.net/coa08_zhang_hyvarinen_dcfeu/ . [12] K . Z hang and A. Hyv¨ arinen. On the ident ifiabilit y of the p ost-nonlinear causal mo del. In Pr o c e e dings of the 25th Confer enc e on Unc ertainty in Artificial Intel ligenc e , Montrea l, Canada, 2009. [13] X. Sun , D. Janzing, and B. S c h¨ o lkopf. Causal inference b y choosing graphs with most p lausible Mark ov kernels. In P r o c e e dings of the 9th International Symp osium on Artificial Intel ligenc e and Mathematics , pages 1–11 , F ort Lauderdale, FL, 2006. [14] J . Comley and D. Do w e. General Ba y esian net works and asymmetric languages. in P. Gr¨ unwald, I. Myung, and M. Pitt (e ds). A dvanc es in Minimum description length: The ory and applic ations, MIT Pr e ss , 2005. [15] J . Lemeire and E. Dirkx. Causal m o dels as m in imal d escriptions of m u ltiv ariate systems. http://p arallel. vub.ac.be/ ∼ jan/ , 2006 . [16] D. Janzing and B. Sc h¨ olk opf. Caus al inf er en ce using the algorithmic Mark o v condition. http://arx iv.org/a bs/0804.3678 , 2008. 31 [17] H. F ederer. Ge ometric me asur e the ory . S p ringer V erlag, New Y ork, 1969. [18] M. P . F r iedlander and M. R. Gupta. On minimizing distortion and relativ e ent rop y . IEEE T r ansactions on Information The ory , 52(1):238– 245, 2006 . [19] D. Janzing. On causally asymmetric v ersions of Oc- cam’s Raz or and their relation to thermo dyn amics. http://a rxiv.org /abs/0708.3411v2 , 2008 . [20] S . Amari. In formation geometry on h ierarc hy of pr obabilit y distrib u- tions. IEE E T r ansactions on Information The ory , 47(5):1701– 1711, 2001. [21] X. S un and D. J anzing. Exploring the causal order of binary v ariables via exp onent ial hierarchies of Mark o v ke rnels. In Pr o c e e dings of the Eur op e an Symp osium on Artificial Neur al Networks 2007 , pages 441– 446, Bruges, Belgium, 200 7. [22] A. Kolmog orov. Three approac h es to the quan titativ e definition of information. Pr oblems Inform. T r ansmission , 1(1):1–7 , 1965. [23] R. Solomonoff. A preliminary rep ort on a general theory of indu ctiv e inference. T e chnic al r ep ort V-131 , Rep ort ZTB-138 Zator Co., 1960. [24] G. Chaitin. On the length of programs for computing fin ite binary sequences. J. Asso c. Comput. Mach. , 13:5 47–569, 1966. [25] M. Li and P . Vit´ anyi. An Intr o duction to Kolmo gor ov Complexity and its Applic ations . Spr inger, New Y ork, 1997. [26] P . Gacs, J. T romp, and P . Vit´ anyi. Algorithmic statistics. IEEE T r ans. Inf. The ory , 47(6):2443 –2463 , 2001. [27] J oris Mo oij, Dominik Janzing, and Bernh ard Sc h ¨ olk opf . Distinguish ing b et ween cause and effect (NIPS 2008 causalit y comp etition). [28] Deutscher W etterdienst. W ebsite of the German w eather service. http://w ww.dwd.d e/ , 2009. [29] B. Janzing. T emp eratur e data of F urtw angen, Germany. Ar c hive , 2005. [30] R. Armann and I. B ¨ ulthoff. Male or female? The sex of a face is only p erceiv ed categorica lly when link ed to its iden tity. in pr ep ar ation , 2009. 32 [31] D. F reedman. Census data. 1995. http://w ww.stat. berkeley.edu/ ∼ census/ . 33

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment