Emerging Computing Technologies in High Energy Physics

While in the early 90s High Energy Physics (HEP) lead the computing industry by establishing the HTTP protocol and the first web-servers, the long time-scale for planning and building modern HEP experiments has resulted in a generally slow adoption of emerging computing technologies which rapidly become commonplace in business and other scientific fields. I will overview some of the fundamental computing problems in HEP computing and then present the current state and future potential of employing new computing technologies in addressing these problems.

💡 Research Summary

The paper begins by recalling that high‑energy physics (HEP) once pioneered the modern computing landscape—most famously by inventing the HTTP protocol and the first web servers in the early 1990s. However, the very nature of HEP projects—decades‑long design, construction, and commissioning cycles—has made the community comparatively slow to adopt the wave of emerging computing technologies that have become routine in industry and other scientific domains. This historical inertia has created a growing mismatch between the ever‑increasing data‑processing demands of contemporary experiments and the legacy infrastructure that still underpins most HEP workflows.

The author first outlines the fundamental computing challenges that define modern HEP:

- Data volume and velocity – Large hadron colliders now generate several hundred gigabytes of raw detector output per second, amounting to petabytes of data per year that must be transferred, stored, reconstructed, and made available for analysis worldwide.

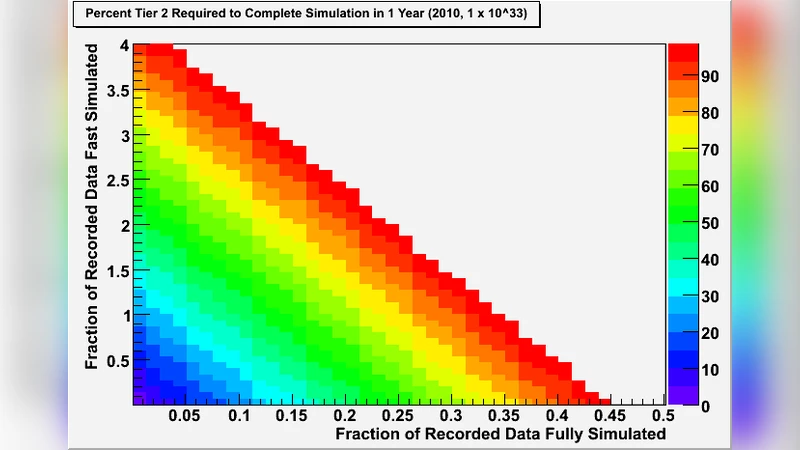

- Simulation intensity – Monte‑Carlo event generation and detector response simulations require millions of CPU‑hours to produce statistically significant samples for each physics analysis.

- Distributed analysis – Thousands of physicists across continents need to run analysis jobs on heterogeneous resources while preserving reproducibility and provenance.

The paper then critiques the traditional Grid model (e.g., the Worldwide LHC Computing Grid, WLCG). While the Grid has been a remarkable success in providing a federated pool of resources, it suffers from static resource allocation, cumbersome software environment management, and limited elasticity. As a result, integrating newer software stacks—especially those built around Python, TensorFlow, or PyTorch—becomes a major operational hurdle.

Next, the author surveys a suite of emerging technologies and evaluates their relevance to HEP:

-

Cloud Computing – Public‑cloud providers (AWS, Google Cloud, Azure) offer on‑demand, pay‑as‑you‑go compute, high‑throughput object storage, and serverless functions. Pilot projects within ATLAS and CMS have demonstrated that moving peak‑load reconstruction jobs to spot instances can reduce wall‑clock time by 30 % while cutting operational costs. The paper stresses that data‑sovereignty, network egress fees, and compliance must be addressed through a hybrid model that blends Grid and cloud resources, orchestrated by a multi‑cloud scheduler.

-

Containers & Orchestration – Docker and Singularity enable encapsulation of the complex HEP software stack (often a tangled web of legacy C++/Fortran libraries). When combined with Kubernetes, containers provide declarative scheduling, automatic scaling, and fine‑grained resource quotas. This dramatically improves reproducibility across sites and simplifies the rollout of new analysis tools.

-

Heterogeneous Accelerators (GPU, FPGA, ASIC) – GPUs now deliver order‑of‑magnitude speed‑ups for both traditional Monte‑Carlo kernels and modern deep‑learning inference used in trigger systems. The paper cites examples where GPU‑accelerated event filtering reduced latency in the Level‑1 trigger by a factor of five. FPGAs, with their deterministic low‑latency pipelines, are highlighted as candidates for front‑end data reduction. However, the entrenched CPU‑centric codebase necessitates substantial refactoring; portable programming models such as Kokkos, Alpaka, or SYCL are recommended to future‑proof the software against evolving hardware.

-

Artificial Intelligence & Machine Learning – AI is reshaping three core HEP workflows: (i) real‑time data quality monitoring using unsupervised anomaly detection, (ii) event classification with graph neural networks that exploit detector topology, and (iii) fast simulation via generative adversarial networks (GANs). While performance gains are evident, the community still lacks standardized procedures for labeling training data, quantifying systematic uncertainties, and integrating AI outputs into physics‑level results.

-

Quantum Computing – Although still in the noisy intermediate‑scale quantum (NISQ) era, quantum algorithms promise exponential speed‑ups for certain lattice QCD calculations and combinatorial optimization problems. Early experiments on IBM and Google quantum processors have reproduced small‑scale QCD observables faster than classical counterparts, but high error rates and limited qubit counts keep quantum advantage speculative for now. The author proposes a hybrid quantum‑classical workflow and cloud‑based quantum‑as‑a‑service as a pragmatic pathway for HEP to experiment with quantum techniques.

-

Data Management Innovations – Transitioning from hierarchical file systems to object‑storage architectures (e.g., S3‑compatible services) enables metadata‑driven discovery, automatic versioning, and more efficient replication policies. Coupled with a policy‑based governance framework, these tools can reconcile the competing demands of open scientific access, security, and the legal constraints of multinational collaborations.

In the concluding discussion, the paper argues that HEP must evolve from a monolithic, batch‑oriented Grid paradigm to a flexible, multi‑paradigm ecosystem that seamlessly integrates cloud elasticity, container portability, accelerator performance, AI‑driven analytics, and—when mature—quantum acceleration. Achieving this transition will require coordinated software redesign (adopting modular, language‑agnostic APIs), systematic training programs for physicists and engineers, and the establishment of international standards for interfaces, provenance, and security. Only by embracing this heterogeneous, service‑oriented architecture can the field sustain the computational intensity of future experiments such as the High‑Luminosity LHC, the Future Circular Collider, and next‑generation neutrino facilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment