Local and global approaches of affinity propagation clustering for large scale data

Recently, a new clustering algorithm called ‘affinity propagation’ (AP) was proposed, which efficiently clusters sparsely related data by passing messages between data points. In many large-scale clustering problems, however, the similarities are not sparse. This paper presents two variants of AP for grouping large-scale data with a dense similarity matrix. The local approach is partition affinity propagation (PAP) and the global approach is landmark affinity propagation (LAP). PAP first passes messages within subsets of the data and then merges the results to serve as the initial iterations of the full clustering, which effectively reduces the number of clustering iterations. LAP first passes messages between landmark data points and then clusters the non-landmark data points; it is a global approximation method that speeds up clustering of large data. Experiments are conducted on many datasets, such as random data points, manifold subspaces, images of faces and Chinese calligraphy, and the results demonstrate that the two approaches are feasible and practicable.

💡 Research Summary

Affinity Propagation (AP) is a message‑passing clustering algorithm that automatically selects exemplars by iteratively exchanging “responsibility” and “availability” messages between all pairs of data points. While AP works efficiently on sparse similarity matrices, its computational and memory requirements grow quadratically (O(N²)) with the number of objects, making it impractical for large‑scale data where the similarity matrix is dense. To address this limitation, the authors propose two complementary extensions: Partition Affinity Propagation (PAP) and Landmark Affinity Propagation (LAP).
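To make the message exchange concrete, here is a minimal plain-Python sketch of the responsibility and availability updates as they are commonly stated for AP. It is not the authors' implementation: the function name, the damping factor, the iteration count, and the toy preference value are all illustrative assumptions. The nested loops over all pairs also show where the quadratic cost comes from.

```python
def affinity_propagation(S, damping=0.5, iterations=200):
    """Return, for each point i, the index of its chosen exemplar.

    S is a dense n x n similarity matrix (list of lists, n >= 2); the diagonal
    S[k][k] holds the "preference" of point k to become an exemplar.
    """
    n = len(S)
    R = [[0.0] * n for _ in range(n)]  # responsibilities r(i, k)
    A = [[0.0] * n for _ in range(n)]  # availabilities a(i, k)
    for _ in range(iterations):
        # r(i,k) <- s(i,k) - max_{k' != k} [a(i,k') + s(i,k')]
        for i in range(n):
            scores = [A[i][k] + S[i][k] for k in range(n)]
            best = max(range(n), key=scores.__getitem__)
            runner_up = max(v for k, v in enumerate(scores) if k != best)
            for k in range(n):
                rival = runner_up if k == best else scores[best]
                R[i][k] = damping * R[i][k] + (1 - damping) * (S[i][k] - rival)
        # a(i,k) <- min(0, r(k,k) + sum_{i' not in {i,k}} max(0, r(i',k)))
        # a(k,k) <- sum_{i' != k} max(0, r(i',k))
        for k in range(n):
            support = [max(0.0, R[i][k]) for i in range(n)]
            support[k] = R[k][k]  # the self-responsibility is not clipped
            total = sum(support)
            for i in range(n):
                new = total - R[k][k] if i == k else min(0.0, total - support[i])
                A[i][k] = damping * A[i][k] + (1 - damping) * new
    # Each point picks the candidate k that maximizes a(i,k) + r(i,k).
    return [max(range(n), key=lambda k: A[i][k] + R[i][k]) for i in range(n)]


# Toy usage: two well-separated 1-D groups, negative squared distance as similarity.
xs = [0.0, 0.1, 0.2, 10.0, 10.1, 10.2]
preference = -10.0  # illustrative value; lower preferences yield fewer exemplars
S = [[preference if i == j else -(a - b) ** 2 for j, b in enumerate(xs)]
     for i, a in enumerate(xs)]
exemplars = affinity_propagation(S)
```

Both message matrices are the same size as the similarity matrix, so storage as well as per-iteration work scales with N² in this dense form.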

PAP follows a divide‑and‑conquer strategy. The dataset is first split into K roughly equal subsets. Standard AP is run independently on each subset, producing a local set of exemplars. All local exemplars are then pooled into a “mid‑level” exemplar set, on which a second round of AP is performed. Finally, the mid‑level exemplars are mapped back to the original data to obtain the final clustering. Because the heavy message‑passing occurs only within subsets, the algorithm reduces the number of global iterations dramatically. The authors show that with a modest K (e.g., K≈√N), the total number of iterations drops by 60‑80 % while the Normalized Mutual Information (NMI) loss stays below 0.02 compared with vanilla AP. Memory usage is also lowered, as each subset only needs to store its own similarity sub‑matrix.
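As a rough illustration of the memory claim, assume K equal subsets of size N/K and count only similarity-matrix entries. Ignoring the pooled second round is a simplifying assumption made here, not a statement from the paper.

```latex
\underbrace{N^{2}}_{\text{vanilla AP}}
\quad\text{vs.}\quad
\underbrace{K\left(\frac{N}{K}\right)^{2} = \frac{N^{2}}{K}}_{\text{all } K \text{ subsets together}},
\qquad
K \approx \sqrt{N} \;\Rightarrow\; \frac{N^{2}}{K} \approx N^{3/2}.
```

For N = 100,000 this is about 10^10 entries for the full matrix against roughly 3.2 × 10^7 across all subsets. If the subsets are processed one at a time, only a single (N/K)² block has to be held in memory.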

LAP adopts a global approximation based on a small set of representative points, called landmarks. M ≪ N landmarks are selected by random sampling, as k‑means centroids, or with distance‑based heuristics. AP is executed only on the landmark set, yielding landmark exemplars. Each non‑landmark point is then assigned to the cluster of the most similar landmark (or to the exemplar of that landmark). This reduces the similarity computation from O(N²) to O(N·M). Experiments reveal that with M≈0.5‑1 % of N, LAP achieves speed‑ups of 70 % or more while keeping NMI degradation under 0.03. The method works especially well on high‑dimensional image data when landmarks are chosen as k‑means centroids, because the centroids capture the underlying data distribution more faithfully than random samples.
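The assignment step described above can be sketched as follows. This is a minimal illustration that assumes the landmarks have already been clustered (for example by AP) and that their cluster labels are passed in. The names `assign_to_landmarks` and `neg_sq_dist` are hypothetical helpers, not taken from the paper.

```python
def assign_to_landmarks(points, landmarks, landmark_labels, similarity):
    """Give each point the cluster label of its most similar landmark.

    Uses len(points) * len(landmarks) similarity evaluations, i.e. O(N*M).
    """
    labels = []
    for p in points:
        nearest = max(range(len(landmarks)),
                      key=lambda m: similarity(p, landmarks[m]))
        labels.append(landmark_labels[nearest])
    return labels


def neg_sq_dist(a, b):
    """Negative squared Euclidean distance, a common similarity choice for AP."""
    return -sum((x - y) ** 2 for x, y in zip(a, b))


# Toy usage: three landmarks, the first two assumed to share a cluster.
landmarks = [(0.0, 0.0), (1.0, 0.0), (10.0, 10.0)]
landmark_labels = [0, 0, 1]  # assumed output of clustering the landmarks
points = [(0.4, 0.1), (9.0, 11.0), (2.0, 1.0)]
labels = assign_to_landmarks(points, landmarks, landmark_labels, neg_sq_dist)
```

Only the M × M landmark similarities feed the message passing; every other point needs just M comparisons, which is where the O(N·M) figure comes from.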

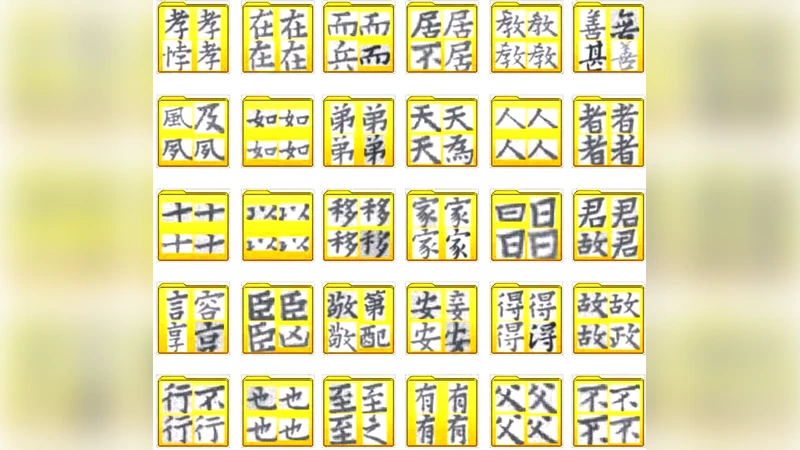

The paper evaluates both methods on four diverse datasets: (1) 100 k random 2‑D points, (2) 5 k points sampled from several low‑dimensional manifolds, (3) 2 560 Yale face images, and (4) 1 200 Chinese calligraphy images. Metrics include precision, recall, NMI, runtime, and peak memory. Results consistently show that PAP and LAP substantially cut runtime and memory at the cost of only a small loss in clustering quality (the NMI drops quoted above). The authors also discuss the trade‑offs: PAP excels when the data naturally decomposes into locally coherent regions, whereas LAP is preferable when a compact global approximation is acceptable. Parameter sensitivity analyses provide practical guidelines (e.g., K≈√N, M≈0.01·N) and highlight that overly aggressive partitioning or too few landmarks can lead to boundary artifacts or loss of fine‑grained structure.

Limitations are acknowledged: highly imbalanced cluster sizes or extremely non‑linear manifolds may still cause accuracy drops. Future work is suggested in adaptive partitioning, dynamic landmark selection, hybrid schemes that combine PAP and LAP, and GPU‑accelerated implementations to further scale AP to millions of points.

In summary, the study introduces two scalable variants of Affinity Propagation that transform the quadratic bottleneck into near‑linear or sub‑quadratic complexity, making AP viable for dense, large‑scale clustering tasks across a range of domains.
