Post-Processing of Discovered Association Rules Using Ontologies

In Data Mining, the usefulness of association rules is strongly limited by the huge number of rules delivered. In this paper we propose a new approach to prune and filter discovered rules. Using Domain Ontologies, we strengthen the integration of user knowledge in the post-processing task. Furthermore, an interactive and iterative framework is designed to assist the user throughout the analysis task. On the one hand, we represent user domain knowledge using a Domain Ontology over the database. On the other hand, a novel technique is suggested to prune and filter discovered rules. The proposed framework was applied successfully to the client database provided by Nantes Habitat.

💡 Research Summary

The paper tackles the well‑known problem of overwhelming numbers of association rules generated by conventional data‑mining algorithms. While traditional post‑processing techniques rely on statistical thresholds such as support, confidence, lift, or user‑defined constraints, they often fail to incorporate the deep, domain‑specific knowledge that experts possess. To bridge this gap, the authors propose a knowledge‑driven post‑processing framework that integrates a domain ontology with the discovered rule set, enabling both semantic pruning and targeted filtering.

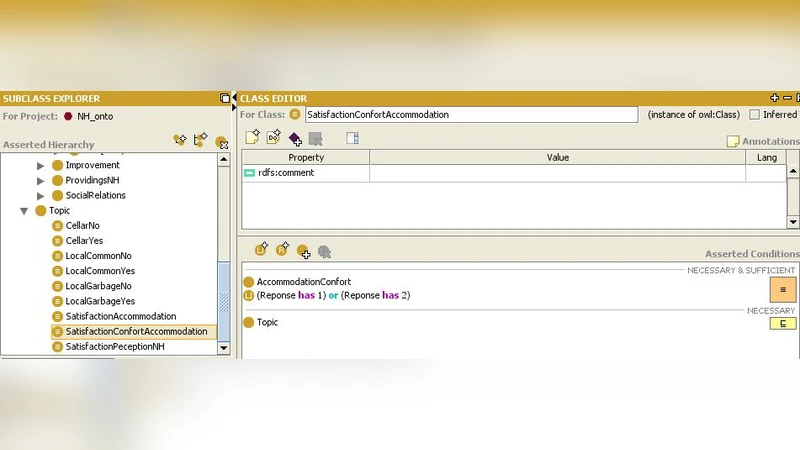

First, a domain ontology is constructed in collaboration with domain experts. Using OWL, the ontology captures key concepts (e.g., housing type, rent bracket, occupant age group), their attributes, and inter‑concept relationships such as hierarchy, equivalence, and domain‑specific prohibitions. Each ontology element is mapped one‑to‑one to the underlying relational schema of the client database, ensuring that every data item can be interpreted in terms of the ontology.
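As a rough illustration of the concept-to-schema mapping described above, the sketch below models a tiny concept hierarchy in plain Python rather than OWL. The concept names, the `attribute=value` item strings, and the helper `build_item_index` are all hypothetical and are not taken from the paper; the only property carried over is that each database item is tied to exactly one concept.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterator, List, Optional


@dataclass(frozen=True)
class Concept:
    """A node in a (hypothetical) domain ontology."""
    name: str
    parent: Optional["Concept"] = None
    # Database items (illustrative "attribute=value" strings) that this concept covers.
    items: FrozenSet[str] = frozenset()


def ancestors(concept: Concept) -> Iterator[Concept]:
    """Yield every strict ancestor of a concept, nearest first."""
    current = concept.parent
    while current is not None:
        yield current
        current = current.parent


def build_item_index(concepts: List[Concept]) -> Dict[str, Concept]:
    """Map each database item to its concept, rejecting ambiguous mappings."""
    index: Dict[str, Concept] = {}
    for concept in concepts:
        for item in concept.items:
            if item in index:
                raise ValueError(f"{item!r} is mapped to more than one concept")
            index[item] = concept
    return index


# Illustrative hierarchy; names and items are assumptions, not the paper's ontology.
housing_type = Concept("HousingType")
apartment = Concept("Apartment", housing_type, frozenset({"housing_type=apartment"}))
rent_bracket = Concept("RentBracket")
low_rent = Concept("LowRent", rent_bracket, frozenset({"rent_bracket=low"}))

item_index = build_item_index([apartment, low_rent])
```

With such an index, an item found in a mined rule can be looked up and then generalized by walking `ancestors`, which is what the semantic operations later in the summary rely on.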

Next, the discovered association rules are transformed into a “Rule Ontology” representation. Items appearing in rule antecedents and consequents are linked to their corresponding ontology concepts, allowing the system to compute semantic relations among rules—such as subsumption (one rule being a specialization of another), overlap, or conflict based on the ontology’s constraints.
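One way to picture the subsumption relation between rules is sketched below. This is a minimal interpretation under stated assumptions, not the authors' definition: rules are pairs of concept-name sets, the hierarchy is a hypothetical child-to-parent dictionary `PARENT`, and a rule counts as more general when each side covers the other rule's corresponding side through is-a links.

```python
from typing import Dict, FrozenSet, Optional, Tuple

Rule = Tuple[FrozenSet[str], FrozenSet[str]]  # (antecedent, consequent)

# Hypothetical child -> parent links; the real ontology would supply these.
PARENT: Dict[str, str] = {
    "Apartment": "HousingType",
    "House": "HousingType",
    "LowRent": "RentBracket",
    "HighRent": "RentBracket",
}


def is_a(concept: Optional[str], other: str) -> bool:
    """True if `concept` equals `other` or has `other` as an ancestor."""
    while concept is not None:
        if concept == other:
            return True
        concept = PARENT.get(concept)
    return False


def side_generalizes(general: FrozenSet[str], specific: FrozenSet[str]) -> bool:
    """Every general concept covers some specific one, and vice versa."""
    covers_all = all(any(is_a(s, g) for g in general) for s in specific)
    none_unused = all(any(is_a(s, g) for s in specific) for g in general)
    return covers_all and none_unused


def subsumes(general: Rule, specific: Rule) -> bool:
    """True if `specific` is a specialization of `general` on both sides."""
    return (side_generalizes(general[0], specific[0])
            and side_generalizes(general[1], specific[1]))


general_rule: Rule = (frozenset({"HousingType"}), frozenset({"RentBracket"}))
specific_rule: Rule = (frozenset({"Apartment"}), frozenset({"LowRent"}))
```

Here `subsumes(general_rule, specific_rule)` holds while the reverse does not, which is the asymmetry a pruning step could exploit when deciding which of two related rules to keep.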

Based on this semantic grounding, two families of operations are defined:

- Pruning Operations

  - Upper‑Concept Pruning: If a rule’s antecedent or consequent can be generalized to a higher‑level ontology concept already covered by another rule, the more specific rule is removed, reducing redundancy.

  - Auxiliary Attribute Removal: Concepts marked as “auxiliary” (e.g., technical identifiers that have no business relevance) are stripped from the rule set.

  - Domain‑Constraint Pruning: Expert‑specified forbidden combinations (e.g., high‑income and low‑income categories appearing together) cause immediate elimination of any rule violating these constraints.

- Filtering Operations

  - Relation‑Based Filtering: Only rules whose antecedent and consequent respect explicitly modeled causal or associative relationships in the ontology are retained.

  - Interest‑Weight Filtering: Experts assign importance weights to ontology concepts; rule scores are recomputed by aggregating the weights of the concepts they involve, and the top‑N weighted rules are presented.

  - Iterative Interactive Filtering: Users can adjust pruning and filtering parameters through a visual interface (tree or graph view) and immediately see the impact on the rule set, enabling a feedback loop.
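Two of the pruning operations and the interest-weight filter can be sketched in a few lines. The code below is an illustration under assumptions: the sets `AUXILIARY` and `FORBIDDEN`, the `WEIGHTS` table, and all concept names are invented for the example, "stripping" auxiliary concepts is read as dropping any rule that mentions one, and the weights are aggregated by a plain sum, which the summary does not specify.

```python
from typing import FrozenSet, List, NamedTuple


class Rule(NamedTuple):
    antecedent: FrozenSet[str]
    consequent: FrozenSet[str]
    confidence: float

    @property
    def concepts(self) -> FrozenSet[str]:
        """All ontology concepts mentioned on either side of the rule."""
        return self.antecedent | self.consequent


# Hypothetical expert input; none of these values come from the paper.
AUXILIARY = frozenset({"RecordId"})
FORBIDDEN = [frozenset({"HighIncome", "LowIncome"})]
WEIGHTS = {"Apartment": 0.9, "LowRent": 0.8, "Senior": 0.5}


def prune(rules: List[Rule]) -> List[Rule]:
    """Drop rules that mention auxiliary concepts or a forbidden combination."""
    kept = []
    for rule in rules:
        if rule.concepts & AUXILIARY:
            continue  # auxiliary attribute removal (assumed: drop the rule)
        if any(combo <= rule.concepts for combo in FORBIDDEN):
            continue  # domain-constraint pruning
        kept.append(rule)
    return kept


def interest_score(rule: Rule) -> float:
    """Aggregate concept weights by summation (an assumed aggregation)."""
    return sum(WEIGHTS.get(concept, 0.0) for concept in rule.concepts)


def top_n(rules: List[Rule], n: int) -> List[Rule]:
    """Return the n rules with the highest interest score."""
    return sorted(rules, key=interest_score, reverse=True)[:n]


rules = [
    Rule(frozenset({"Apartment"}), frozenset({"LowRent"}), 0.80),
    Rule(frozenset({"RecordId"}), frozenset({"LowRent"}), 0.90),
    Rule(frozenset({"HighIncome", "LowIncome"}), frozenset({"Apartment"}), 0.70),
    Rule(frozenset({"Senior"}), frozenset({"LowRent"}), 0.75),
]
kept = prune(rules)
best = top_n(kept, 1)
```

In this toy run the second and third rules are pruned, and the rule linking `Apartment` to `LowRent` ranks first because its concepts carry the largest combined weight. Note that the ranking ignores confidence entirely; a real system would likely combine both signals.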

The overall workflow is encapsulated in an “Iterative Knowledge‑Driven Post‑Processing Loop”: (1) load ontology and map it to the database, (2) load the raw rule set, (3) apply pruning, (4) apply filtering, (5) collect user feedback, (6) adjust parameters and repeat. All user actions are logged, providing a basis for future automatic policy learning.
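The loop itself is simple to outline. The sketch below assumes the ontology and raw rules are already loaded (steps 1 and 2) and takes the pruning, filtering, and feedback steps as plain callables; the function name `post_processing_loop`, the parameter dictionary, and the scripted feedback are all hypothetical stand-ins for the interactive interface.

```python
from typing import Callable, Dict, List, Optional, Tuple

ScoredRule = Tuple[str, float]  # (rule id, score); a stand-in for real rules
Params = Dict[str, float]


def post_processing_loop(
    rules: List[ScoredRule],
    prune: Callable[[List[ScoredRule], Params], List[ScoredRule]],
    filter_rules: Callable[[List[ScoredRule], Params], List[ScoredRule]],
    get_feedback: Callable[[List[ScoredRule], Params], Optional[Params]],
    params: Params,
    max_iterations: int = 10,
) -> Tuple[List[ScoredRule], List[dict]]:
    """Repeat prune -> filter -> feedback until the user stops adjusting."""
    log: List[dict] = []
    current = list(rules)
    for iteration in range(max_iterations):
        current = filter_rules(prune(rules, params), params)  # steps 3 and 4
        feedback = get_feedback(current, params)              # step 5
        # Every round is logged, mirroring the logging of user actions.
        log.append({"iteration": iteration, "params": dict(params),
                    "kept": len(current), "feedback": feedback})
        if feedback is None:  # user is satisfied
            break
        params = {**params, **feedback}                       # step 6
    return current, log


# Toy stand-ins for the real operations.
def prune(rules, params):
    return [r for r in rules if r[1] >= params["min_score"]]


def filter_rules(rules, params):
    return sorted(rules, key=lambda r: r[1], reverse=True)[: int(params["top_n"])]


scripted_feedback = iter([{"top_n": 2}, None])  # tighten once, then accept


def get_feedback(current, params):
    return next(scripted_feedback)


raw_rules = [("r1", 0.9), ("r2", 0.4), ("r3", 0.7), ("r4", 0.1)]
final_rules, log = post_processing_loop(
    raw_rules, prune, filter_rules, get_feedback, {"min_score": 0.3, "top_n": 3}
)
```

Each iteration restarts from the raw rule set rather than from the previous result, so that loosening a parameter can bring rules back. That is a design choice of this sketch, not something the summary states.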

The framework was evaluated on a real‑world client database from Nantes Habitat, a French housing‑management organization. The dataset comprised roughly 120,000 records with 35 attributes. Using the Apriori algorithm with a minimum support of 0.5 % and a minimum confidence of 60 %, the initial mining phase produced 48,732 rules. After ontology‑driven pruning and filtering, the final rule set shrank to 312 rules, a reduction of over 99 %. Execution time for the post‑processing stage dropped from an average of 3.2 seconds per iteration to 0.9 seconds, a reduction of roughly 72 %. Expert evaluation indicated that 87 % of the remaining rules were immediately actionable for business decisions, and a user‑satisfaction survey yielded an average score of 4.6 out of 5.
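The headline percentages follow directly from the reported counts and timings. The short calculation below only re-derives them from the figures quoted in the paragraph above; the support count is approximate because the record count is given as "roughly 120,000".

```latex
\[
\text{minimum support count} \approx 0.005 \times 120\,000 = 600 \text{ records}
\]
\[
\text{rule reduction} = 1 - \frac{312}{48\,732} \approx 1 - 0.0064 = 0.9936 \quad (\approx 99.4\,\%)
\]
\[
\text{time reduction} = 1 - \frac{0.9}{3.2} = 0.71875 \quad (\approx 72\,\%),
\qquad
\text{speed-up factor} = \frac{3.2}{0.9} \approx 3.6
\]
```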

Key insights from the study include: (i) semantic pruning based on ontology hierarchies dramatically cuts redundancy without sacrificing useful patterns; (ii) embedding domain constraints directly into the pruning stage removes misleading or non‑viable rules before they reach the analyst; (iii) the interactive loop empowers analysts to fine‑tune the knowledge‑driven filters, leading to higher quality outputs; and (iv) while ontology construction incurs upfront effort, the resulting reusable knowledge artifact can be applied across multiple mining projects, offering long‑term strategic value.

The authors acknowledge limitations such as the manual effort required to build and maintain the ontology and the complexity of mapping it to evolving database schemas. Future work will explore semi‑automatic ontology generation, schema‑change detection, and the extension of the framework to other data‑mining tasks such as sequential pattern mining and classification rule post‑processing.

In conclusion, the paper presents a novel, ontology‑centric approach to post‑process discovered association rules, demonstrating that integrating formalized domain knowledge yields a more manageable, relevant, and actionable rule set, as validated on a substantial real‑world housing dataset.
