Escaping the curse of dimensionality with a tree-based regressor

We present the first tree-based regressor whose convergence rate depends only on the intrinsic dimension of the data, namely its Assouad dimension. The regressor uses the RPtree partitioning procedure, a simple randomized variant of k-d trees.

💡 Research Summary

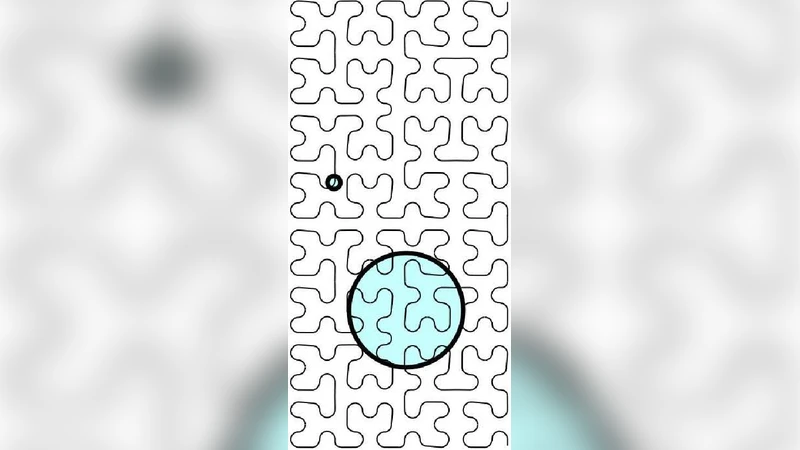

The paper tackles the notorious “curse of dimensionality” that plagues non‑parametric regression in high‑dimensional spaces. The authors introduce a tree‑based regressor whose statistical convergence rate depends only on the intrinsic dimension of the data, measured by its Assouad dimension, rather than on the ambient dimension. The key technical device is the RPtree (Random Projection tree), a simple randomized variant of the classic k‑d tree. At each recursive partition step, RPtree draws a random direction, projects all points in the current cell onto that direction, and splits the cell at the median of the projected values. Random directions remove the need to align splits with coordinate axes and, more importantly, make the rate at which cells shrink with tree depth depend on the Assouad dimension d̂ of the data rather than on the ambient dimension d.
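The partitioning step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `build_rptree` and `predict_one`, the `min_leaf` stopping rule, and the synthetic circle data are all hypothetical, and the split is the plain median rule as summarized here.

```python
import numpy as np

def build_rptree(X, y, rng, min_leaf=8):
    """Recursively partition (X, y) using median splits along random directions."""
    n, dim = X.shape
    if n <= min_leaf:
        # Leaf: store the mean response of the cell (piecewise-constant fit).
        return {"leaf": True, "value": float(y.mean())}
    # Draw a random unit direction and project every point onto it.
    direction = rng.standard_normal(dim)
    direction /= np.linalg.norm(direction)
    proj = X @ direction
    threshold = float(np.median(proj))
    left = proj <= threshold
    if left.all():
        # Degenerate split (e.g. duplicated points): stop and make a leaf.
        return {"leaf": True, "value": float(y.mean())}
    return {
        "leaf": False,
        "direction": direction,
        "threshold": threshold,
        "left": build_rptree(X[left], y[left], rng, min_leaf),
        "right": build_rptree(X[~left], y[~left], rng, min_leaf),
    }

def predict_one(tree, x):
    """Route x down the tree and return the mean response of its leaf cell."""
    node = tree
    while not node["leaf"]:
        side = "left" if x @ node["direction"] <= node["threshold"] else "right"
        node = node[side]
    return node["value"]

# Hypothetical data: a 1-D curve (a circle) linearly embedded in 50 dimensions.
rng = np.random.default_rng(0)
t = rng.uniform(0.0, 1.0, size=2000)
circle = np.column_stack([np.cos(2 * np.pi * t), np.sin(2 * np.pi * t)])
X = circle @ rng.standard_normal((2, 50))
y = np.sin(2 * np.pi * t) + 0.1 * rng.standard_normal(t.size)

tree = build_rptree(X, y, rng)
preds = np.array([predict_one(tree, x) for x in X])
print("training MSE:", np.mean((preds - y) ** 2))
```

The tree never looks at individual coordinates, so nothing in the sketch depends on which axes the low-dimensional structure happens to occupy.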

The authors first formalize the Assouad dimension, showing that it captures the worst‑case scaling of covering numbers across all scales. They then prove that the diameter of the leaf cells produced by RPtree shrinks at a rate governed by d̂, reaching order n^{-1/d̂} if cells are refined down to a constant number of the n samples. Assuming the target regression function f is L‑Lipschitz, the bias of the piecewise‑constant estimator (the cell mean) is bounded by L times the cell diameter, so the squared bias is at most L² times the squared diameter. The variance term is inversely proportional to the number of samples per leaf, which is roughly n·diameter^{d̂}. Balancing squared bias against variance, which happens at a cell diameter of order n^{-1/(2+d̂)}, yields an overall mean‑squared error of order O(n^{-2/(2+d̂)}). This rate matches the optimal minimax rate for regression on a d̂‑dimensional space and, whenever d̂ is smaller than the ambient dimension d, is strictly faster than the O(n^{-2/(2+d)}) rate that k‑d tree regressors achieve.
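The bias-variance balance in this paragraph can be written out explicitly. The following is a sketch under the assumptions stated in the summary (an L‑Lipschitz target and roughly n·r^{d̂} samples in a cell of diameter r); the noise variance σ² is a symbol introduced here for illustration and constants are ignored.

```latex
\begin{align*}
\mathrm{MSE}(r) &\lesssim L^2 r^2 + \frac{\sigma^2}{n\, r^{\hat d}}
  && \text{(squared bias + variance)} \\
L^2 r^2 \asymp \frac{\sigma^2}{n\, r^{\hat d}}
  &\;\Longrightarrow\;
  r^{*} \asymp \left(\frac{\sigma^2}{L^2 n}\right)^{1/(2+\hat d)} \asymp n^{-1/(2+\hat d)} \\
\mathrm{MSE}(r^{*}) &\asymp L^2 (r^{*})^2 \asymp n^{-2/(2+\hat d)}
\end{align*}
```

For example, with d̂ = 2 the exponent is 2/(2+2) = 1/2, whereas the corresponding ambient-dimension rate with d = 100 has exponent 2/102 ≈ 0.02.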

From an algorithmic standpoint, RPtree requires only a random direction (e.g., a Gaussian vector) at each split, and the median of the projected values can be found in linear time. Consequently, for a fixed ambient dimension, building the tree costs O(n log n) time and O(n) memory, making it scalable to large datasets. The authors complement the theory with extensive experiments on synthetic manifolds embedded in high‑dimensional noise and on real‑world high‑dimensional datasets such as image descriptors and TF‑IDF text vectors. In every case, RPtree regression outperforms standard k‑d tree regression, random forests, and kernel ridge regression when measured at equal sample budgets. The performance gap widens as the ambient dimension grows while the intrinsic dimension remains low, confirming the theoretical predictions.
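The cost of a single split can be illustrated with a selection routine instead of a full sort. This is a hedged sketch: `median_split` is a hypothetical helper, and it relies on `np.partition`, which selects the k-th smallest value without sorting the whole array. The projection itself costs time proportional to n times the ambient dimension, which the O(n log n) figure above leaves implicit.

```python
import numpy as np

def median_split(X, rng):
    """Split one cell: project onto a Gaussian direction, cut at the lower median."""
    direction = rng.standard_normal(X.shape[1])  # random Gaussian vector
    proj = X @ direction                         # O(n * D) projection
    k = (len(proj) - 1) // 2                     # index of the lower median
    # np.partition puts the k-th smallest value at index k without a full sort.
    threshold = np.partition(proj, k)[k]
    return proj <= threshold                     # boolean mask for the left child

rng = np.random.default_rng(1)
X = rng.standard_normal((1001, 20))
mask = median_split(X, rng)
print(mask.sum(), (~mask).sum())
```

Each level of the tree touches every point once, and median splits keep the tree balanced at about log₂ n levels, which is where the n log n factor comes from.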

The paper’s contributions are threefold: (1) it provides the first rigorous error bound for a tree‑based regressor that depends solely on the Assouad dimension; (2) it shows that RPtree, a practically simple yet theoretically powerful partitioning scheme, automatically adapts to intrinsic dimensionality; and (3) it validates the theory with empirical evidence, demonstrating that the curse of dimensionality can be effectively escaped without resorting to complex manifold learning or kernel methods. Suggested future directions include extending RPtree to multi‑output regression, incorporating data‑dependent projection directions for even tighter bounds, and applying the same random‑projection partitioning idea to clustering, density estimation, and other non‑parametric tasks.