Learning Isometric Separation Maps

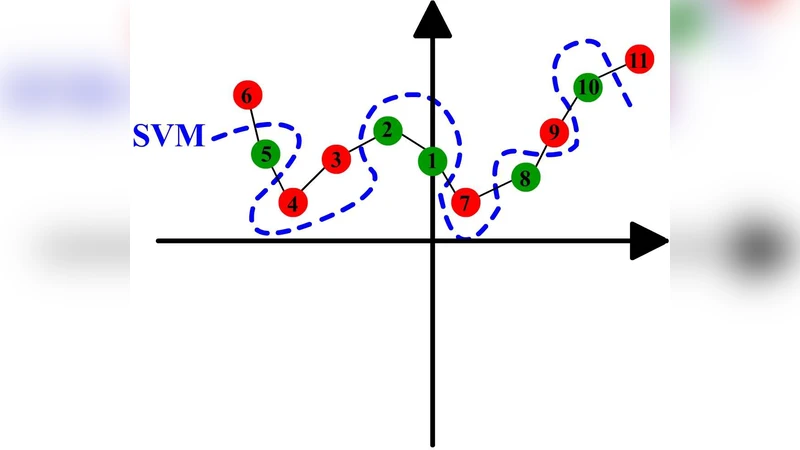

Maximum Variance Unfolding (MVU) and its variants have been very successful in embedding data manifolds in lower-dimensional spaces, often revealing the true intrinsic dimension. In this paper we show how to also incorporate supervised class information into an MVU-like method without breaking its convexity. We call this method the Isometric Separation Map, and we show that the resulting kernel matrix can be used in a binary or multiclass Support Vector Machine-like classifier in a semi-supervised (transductive) framework. We also show that the method always finds a kernel matrix that linearly separates the training data exactly, without projecting them into infinite-dimensional spaces. In traditional SVMs we choose a kernel and hope that the data become linearly separable in the kernel space. In this paper we show how the hyperplane can be chosen ad hoc and the kernel trained so that the data are always linearly separable. Comparisons with Large Margin SVMs show comparable performance.

💡 Research Summary

The paper introduces the Isometric Separation Map (ISM), a method that merges the geometric preservation of Maximum Variance Unfolding (MVU) with supervised class information while retaining convexity. MVU is a non‑linear dimensionality‑reduction technique that unfolds a data manifold by preserving pairwise distances among neighboring points (the isometric constraints) while maximizing the total variance of the embedding (equivalently, maximizing the trace of the centered kernel matrix). Its formulation is a semidefinite program (SDP) over a positive‑semidefinite kernel matrix K, which guarantees a globally optimal solution but offers no mechanism to exploit label information for classification.
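For reference, the standard MVU problem is commonly written as the SDP below. The notation is assumed here rather than taken from the paper: $x_i$ are the $n$ input points and $\mathcal{N}$ is the set of neighboring pairs. The centering constraint $\sum_{i,j} K_{ij} = 0$ is what makes the total variance of the embedding equal to $\operatorname{tr}(K)$ up to a constant factor.

```latex
\begin{aligned}
\max_{K}\quad & \operatorname{tr}(K) \\
\text{s.t.}\quad & K \succeq 0, \\
& \sum_{i,j=1}^{n} K_{ij} = 0, \\
& K_{ii} + K_{jj} - 2K_{ij} = \lVert x_i - x_j \rVert^{2}
  \quad \forall\, (i,j) \in \mathcal{N}.
\end{aligned}
```

The objective and every constraint are linear in the entries of $K$ apart from the cone constraint $K \succeq 0$, which is why the problem is convex.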

ISM addresses this gap by adding linear‑separability constraints directly to the kernel learning problem. The authors fix a hyperplane (typically defined by the label vector y) and require that, in the learned kernel space, all positively labeled samples lie on one side of the hyperplane and all negatively labeled samples on the other. The separation requirements join MVU's isometric constraints in the SDP over K, so the optimization stays convex.
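A standard fact about kernel methods helps explain why a separation requirement of this kind can coexist with convexity: a hyperplane whose normal is a fixed combination of training points, w = Σ_i α_i φ(x_i), has decision value f(x_j) = Σ_i α_i K_ij + b, which is linear in the entries of K. The sketch below only checks that condition for a given kernel matrix. It is not the paper's optimization, and the helper names `decision_values` and `separates`, the toy data, and the choice α = y are assumptions made for illustration.

```python
# Hypothetical illustration: the function names and the choice alpha = y
# are assumptions for this sketch, not the paper's notation or method.

def decision_values(K, alpha, b=0.0):
    """Return f_j = sum_i alpha_i * K[i][j] + b for every sample j."""
    n = len(K)
    return [sum(alpha[i] * K[i][j] for i in range(n)) + b for j in range(n)]


def separates(K, y, alpha, b=0.0, margin=0.0):
    """True if every sample lies on its own side: y_j * f_j > margin."""
    values = decision_values(K, alpha, b)
    return all(yj * fj > margin for yj, fj in zip(y, values))


# Toy Gram matrix of the 1-D points below under a linear kernel.
points = [-2.0, -1.0, 1.0, 2.0]
K = [[p * q for q in points] for p in points]
y = [-1, -1, 1, 1]

# Fix the hyperplane coefficients to the label vector (an assumption).
print(decision_values(K, alpha=y))
print(separates(K, y, alpha=y))
```

Because the hyperplane coefficients are held fixed, each condition `y_j * f_j > margin` constrains K linearly, so it could in principle be added to an SDP alongside the isometric constraints.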
