Grid Computing in the Collider Detector at Fermilab (CDF) scientific experiment

The computing model for the Collider Detector at Fermilab (CDF) scientific experiment has evolved since the beginning of the experiment. Initially CDF computing was comprised of dedicated resources located in computer farms around the world. With the wide spread acceptance of grid computing in High Energy Physics, CDF computing has migrated to using grid computing extensively. CDF uses computing grids around the world. Each computing grid has required different solutions. The use of portals as interfaces to the collaboration computing resources has proven to be an extremely useful technique allowing the CDF physicists transparently migrate from using dedicated computer farm to using computing located in grid farms often away from Fermilab. Grid computing at CDF continues to evolve as the grid standards and practices change.

💡 Research Summary

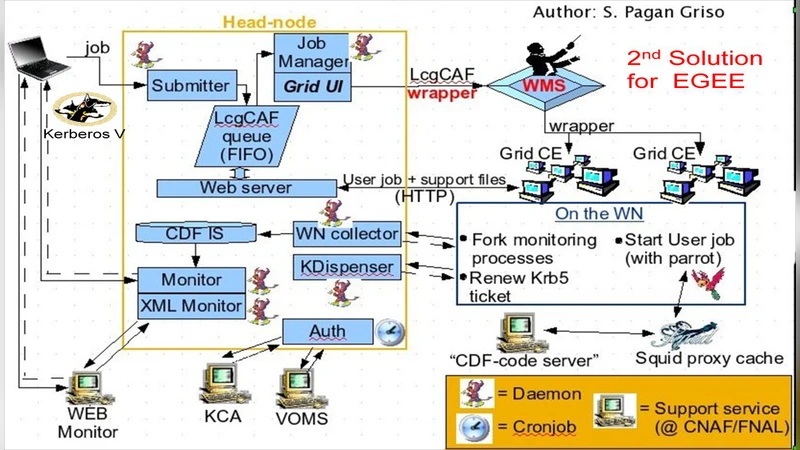

The Collider Detector at Fermilab (CDF) experiment began with a traditional computing model based on dedicated farms located at Fermilab and at partner institutions worldwide. As the volume of collision data grew, the limitations of this model became evident: resource fragmentation, high operational overhead, and difficulty scaling to meet the increasing processing demands. In response, CDF adopted the grid‑computing paradigm that had become standard across high‑energy physics. The transition was not straightforward because each participating grid (e.g., OSG, gLite, Globus‑based sites) employed different authentication mechanisms, job schedulers, and data‑transfer protocols. To hide this heterogeneity from physicists, CDF developed a portal layer that abstracts the underlying middleware. The portal provides a unified web interface and API for user authentication, job submission, data location discovery, and result retrieval. Behind the scenes it translates these calls into the native commands required by each grid, allowing scientists to run analyses with the same scripts they used on local farms.

Data management was also re‑engineered. CDF introduced a replica‑catalog and caching system that automatically replicates frequently accessed datasets to multiple grid sites and selects the nearest copy for a given job. This reduces network bandwidth consumption and dramatically shortens queue times. The portal‑driven approach has yielded measurable benefits: overall CPU utilization has increased by a factor of two to three, job throughput has risen, and the cost of maintaining dedicated hardware has dropped.

Because grid standards continue to evolve, CDF’s architecture is designed for incremental upgrades. Recent enhancements include support for token‑based OAuth authentication, containerized execution environments using Docker and Singularity, and optional integration with commercial cloud providers such as AWS and Google Cloud Platform. These additions preserve compatibility with existing grid resources while extending flexibility and scalability.

Looking forward, CDF plans to adopt a hybrid grid‑cloud model that will enable on‑demand provisioning of additional compute power, tighter coupling of real‑time data quality monitoring, and more automated workflow orchestration. The experience gained from CDF’s migration demonstrates that a portal‑centric, grid‑based infrastructure can provide transparent, efficient, and future‑proof computing for large‑scale scientific collaborations.

Comments & Academic Discussion

Loading comments...

Leave a Comment