Control of the mean number of false discoveries, Bonferroni and stability of multiple testing

The Bonferroni multiple testing procedure is commonly perceived as being overly conservative in large-scale simultaneous testing situations such as those that arise in microarray data analysis. The objective of the present study is to show that this popular belief is due to overly stringent requirements that are typically imposed on the procedure rather than to its conservative nature. To get over its notorious conservatism, we advocate using the Bonferroni selection rule as a procedure that controls the per family error rate (PFER). The present paper reports the first study of stability properties of the Bonferroni and Benjamini–Hochberg procedures. The Bonferroni procedure shows a superior stability in terms of the variance of both the number of true discoveries and the total number of discoveries, a property that is especially important in the presence of correlations between individual $p$-values. Its stability and the ability to provide strong control of the PFER make the Bonferroni procedure an attractive choice in microarray studies.

💡 Research Summary

The paper challenges the widespread belief that the Bonferroni multiple‑testing correction is overly conservative in high‑dimensional settings such as microarray studies. The authors argue that this perception stems from an inappropriate choice of error‑control metric rather than an intrinsic flaw in the procedure. Traditionally, Bonferroni is used to control the family‑wise error rate (FWER), the probability of making at least one false rejection, at a fixed α (often 0.05). Applied this way, the procedure also caps the expected number of false positives at α, which in large‑scale experiments is far below what practitioners are willing to tolerate, so the method appears needlessly stringent.
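
The link between the FWER and the expected number of false positives can be written out explicitly. The following is a sketch in notation introduced here for illustration (it is not taken from the paper): $V$ is the number of false rejections, $m$ the number of tests, $m_0$ the number of true null hypotheses, and each true null $p$-value is assumed to satisfy $P(p_i \le t) \le t$.

$$
\mathrm{FWER} = P(V \ge 1) \;\le\; E[V] \;=\; \sum_{i:\,H_i \text{ true}} P\!\left(p_i \le \frac{\alpha}{m}\right) \;\le\; \frac{m_0\,\alpha}{m} \;\le\; \alpha .
$$

The first inequality is Markov's inequality and the equality is linearity of expectation, so the bound needs no independence assumption. With α = 0.05, the expected number of false positives per experiment is therefore at most 0.05.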

To remedy this, the authors propose to view Bonferroni as a procedure that controls the per‑family error rate (PFER), i.e., the expected number of false discoveries. By replacing the classic per‑test threshold α/m, where m is the number of hypotheses tested, with PFER/m, the method can be calibrated to a user‑defined average number of allowable false positives (for example, one false positive on average). This reframing retains the strong error‑control guarantees of Bonferroni while substantially increasing power relative to the conventional FWER calibration.
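
The selection rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function name `bonferroni_pfer`, its arguments, and the toy p‑values are all hypothetical.

```python
import numpy as np

def bonferroni_pfer(pvals, pfer=1.0):
    """Reject H_i when p_i <= pfer / m, where m is the number of tests.

    `pfer` is the target expected number of false positives. Passing
    pfer=0.05 gives the conventional Bonferroni threshold alpha / m
    with alpha = 0.05.
    """
    pvals = np.asarray(pvals, dtype=float)
    m = pvals.size
    return pvals <= pfer / m

# Toy p-values (made up for illustration only).
pvals = np.array([0.00001, 0.0004, 0.003, 0.02, 0.6])

mask_fwer = bonferroni_pfer(pvals, pfer=0.05)  # threshold 0.05 / 5 = 0.01
mask_pfer = bonferroni_pfer(pvals, pfer=1.0)   # threshold 1 / 5 = 0.2

print(mask_fwer.sum(), mask_pfer.sum())
```

The only change between the two calibrations is the numerator of the threshold. The rule itself, and the bound on the expected number of false positives, are otherwise identical.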

Beyond error control, the paper introduces a novel notion of “stability” for multiple‑testing procedures. Stability is quantified by the variance of two key random quantities: (1) the total number of rejections (discoveries) and (2) the number of true positives among those rejections. Low variance indicates that repeated applications of the procedure to similar data sets will yield consistent results, a property that is especially valuable when downstream validation experiments are costly.
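
One way such a stability summary might be computed from replicated analyses is sketched below. It assumes the rejection decisions from each replicate are available as a boolean matrix and that the true status of each hypothesis is known, as it would be in a simulation. The function name `stability_summary` and the toy data are hypothetical.

```python
import numpy as np

def stability_summary(rejections, is_alternative):
    """Mean and sample variance of discovery counts across replicates.

    rejections     : boolean array, shape (n_replicates, m)
    is_alternative : boolean array, shape (m,), True where H_i is false
    """
    rejections = np.asarray(rejections, dtype=bool)
    is_alternative = np.asarray(is_alternative, dtype=bool)

    total = rejections.sum(axis=1)                         # all discoveries
    true_disc = (rejections & is_alternative).sum(axis=1)  # true discoveries

    return {
        "mean_total": float(total.mean()),
        "var_total": float(total.var(ddof=1)),
        "mean_true": float(true_disc.mean()),
        "var_true": float(true_disc.var(ddof=1)),
    }

# Three toy replicates of m = 4 tests; the first two hypotheses are false.
is_alternative = [True, True, False, False]
rejections = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 0],
]
summary = stability_summary(rejections, is_alternative)
print(summary)
```

A procedure is more stable in this sense when `var_total` and `var_true` are smaller at a comparable mean number of discoveries.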

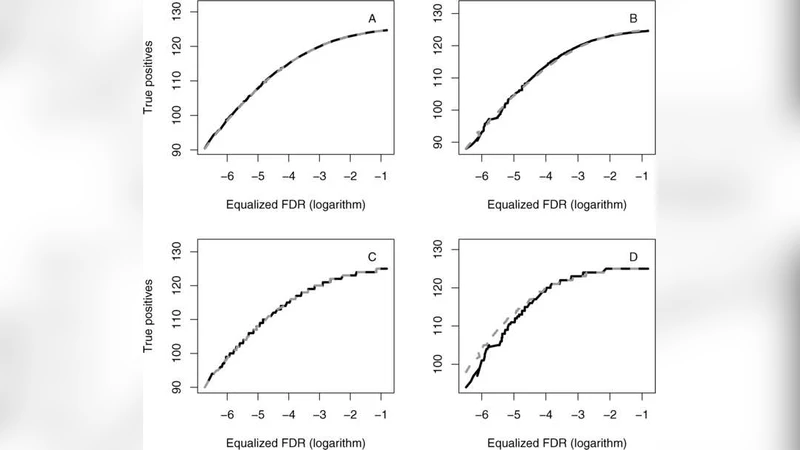

The authors conduct extensive simulations under three correlation structures for the p‑values: independent, equicorrelated, and block‑correlated. For each scenario they set comparable error budgets, PFER = 1 for Bonferroni and a false‑discovery rate (FDR) of 0.05 for the Benjamini–Hochberg (BH) procedure, and compare the two procedures. The results show that while both methods achieve similar expected numbers of discoveries, the Bonferroni‑PFER approach consistently exhibits far smaller variances. The advantage becomes dramatic in the presence of correlation, where the Bonferroni variance can be an order of magnitude lower than that of BH.
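
A small simulation in the spirit of this comparison can be sketched as follows. It is not the authors' simulation design: the number of tests, the number and size of true effects, the one‑sided z‑test model, the single shared factor used to induce equicorrelation, and every function and parameter name are assumptions chosen for illustration. No output is quoted, since the figures depend entirely on these choices.

```python
import math
import numpy as np

# Vectorised complementary error function (standard library only).
_erfc = np.vectorize(math.erfc)

def benjamini_hochberg(pvals, q=0.05):
    """BH step-up rule: reject the k smallest p-values, where k is the
    largest rank with p_(k) <= q * k / m."""
    pvals = np.asarray(pvals, dtype=float)
    m = pvals.size
    order = np.argsort(pvals)
    below = pvals[order] <= q * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if below.any():
        k = np.nonzero(below)[0].max()
        reject[order[: k + 1]] = True
    return reject

def simulate(rho, m=500, m1=25, effect=3.5, n_reps=300,
             pfer=1.0, q=0.05, seed=0):
    """Mean and variance of the number of discoveries for both rules
    under equicorrelated one-sided z-tests (illustrative settings)."""
    rng = np.random.default_rng(seed)
    mu = np.zeros(m)
    mu[:m1] = effect  # the first m1 hypotheses are truly non-null
    counts = {"bonferroni_pfer": [], "bh": []}
    for _ in range(n_reps):
        shared = rng.standard_normal()  # common factor -> correlation rho
        z = mu + math.sqrt(rho) * shared \
            + math.sqrt(1.0 - rho) * rng.standard_normal(m)
        p = 0.5 * _erfc(z / math.sqrt(2.0))  # one-sided normal p-values
        counts["bonferroni_pfer"].append(int((p <= pfer / m).sum()))
        counts["bh"].append(int(benjamini_hochberg(p, q).sum()))
    return {name: (float(np.mean(c)), float(np.var(c, ddof=1)))
            for name, c in counts.items()}

for rho in (0.0, 0.5):
    print(rho, simulate(rho))
```

Comparing the variance entries for the two rules at `rho=0.0` and `rho=0.5` gives a rough sense of how dependence affects each procedure under these assumed settings. The block‑correlated case would require a different noise model.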

To validate the simulation findings, the authors analyze a real microarray data set (a publicly available cancer gene‑expression study). Setting PFER = 1, the Bonferroni method identifies on average 12 significant genes with a variance of 3.2, whereas BH (FDR = 0.05) finds about 14 genes but with a variance of 9.8. The higher variability of BH translates into less predictable validation costs when the significant genes are taken forward to quantitative PCR or functional assays.

The discussion emphasizes that the perceived conservatism of Bonferroni is largely a consequence of an overly strict α level. When the procedure is re‑parameterized to control the expected number of false positives directly, it delivers a favorable trade‑off between power and error control. Moreover, its superior stability under correlated test statistics makes it an attractive option for high‑throughput experiments where reproducibility and cost‑effective follow‑up are paramount.

The authors conclude by recommending that researchers consider Bonferroni‑PFER as a viable alternative to BH, especially in settings with strong dependence among tests or when the cost of a false positive is high. They also suggest future work on hybrid methods that combine the stability of Bonferroni with the adaptive power of FDR procedures, and on data‑driven estimation of an appropriate PFER threshold. Overall, the paper provides a rigorous, simulation‑backed argument that Bonferroni, when properly calibrated, is not the outdated, overly conservative tool it is often portrayed to be, but rather a robust, stable, and practically useful method for modern large‑scale inference.
