An Efficient Secure Multimodal Biometric Fusion Using Palmprint and Face Image

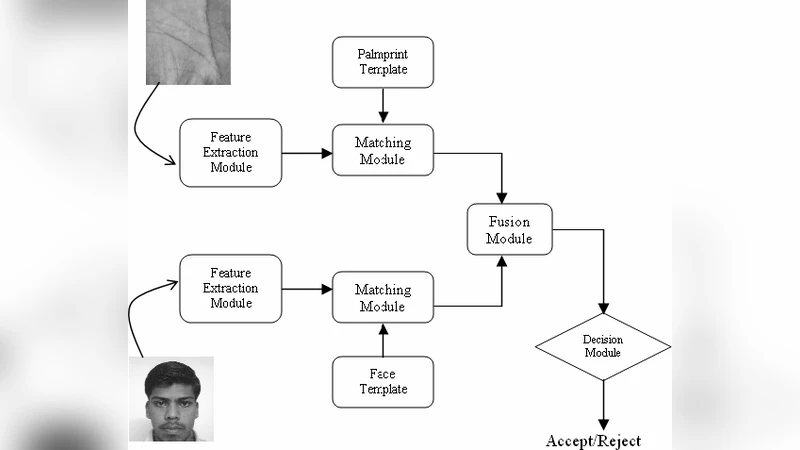

Biometrics-based personal identification is regarded as an effective method for automatically recognizing a person’s identity with high confidence. Multimodal biometric systems consolidate the evidence presented by multiple biometric sources and typically achieve better recognition performance than systems based on a single biometric modality. This paper proposes an authentication method for a multimodal biometric identification system using two traits, face and palmprint. The proposed system is designed for applications where the training data contains both a face and a palmprint. Integrating palmprint and face features increases the robustness of person authentication. The final decision is made by fusion at the matching-score level: feature vectors are created independently for the query measurements and are then compared to the enrolment templates, which are stored during database preparation. The multimodal biometric system is thus developed through the fusion of face and palmprint recognition.

💡 Research Summary

The paper presents a multimodal biometric authentication system that combines facial and palm‑print images to achieve higher security and recognition accuracy than single‑modality approaches. The authors begin by outlining the limitations of conventional unimodal systems, which are vulnerable to variations in illumination, pose, and skin conditions, and argue that integrating multiple biometric traits can mitigate these weaknesses. After reviewing related work on face and palm‑print recognition as well as various fusion strategies (feature‑level, score‑level, decision‑level), the authors focus on a score‑level fusion architecture due to its implementation simplicity and flexibility.

The proposed system consists of five main stages: (1) preprocessing, where facial landmarks are detected and a region of interest (ROI) is extracted from the palm image; (2) feature extraction, in which the face is represented using a combination of Principal Component Analysis (Eigenfaces) and Linear Discriminant Analysis (Fisherfaces) to capture both global appearance and discriminative information, while the palm‑print is processed with Gabor filters and wavelet decomposition to encode line patterns and texture; (3) matching, where each modality generates a similarity score based on cosine similarity or Euclidean distance; (4) score normalization and weighted fusion, where Min‑Max scaling maps both scores to a common
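Stages (3) and (4) can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the equal default weight `w_face=0.5`, and the weighted-sum fusion rule are assumptions made for illustration, since the summary does not state the actual weights or the exact fusion formula. The sketch assumes each modality has already produced one similarity score per enrolled template.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine similarity between two feature vectors (higher means more similar)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

def min_max_normalize(scores):
    """Map a set of scores linearly onto [0, 1] using their own min and max."""
    scores = np.asarray(scores, dtype=float)
    lo, hi = scores.min(), scores.max()
    if hi == lo:
        # Degenerate case: all scores equal, so no ranking information survives.
        return np.zeros_like(scores)
    return (scores - lo) / (hi - lo)

def fuse_scores(face_scores, palm_scores, w_face=0.5):
    """Weighted-sum fusion of normalized scores (weights are hypothetical)."""
    face_n = min_max_normalize(face_scores)
    palm_n = min_max_normalize(palm_scores)
    return w_face * face_n + (1.0 - w_face) * palm_n

# Example: one query compared against three enrolled identities.
face_scores = [0.2, 0.9, 0.5]     # e.g. cosine similarities
palm_scores = [10.0, 30.0, 20.0]  # e.g. raw similarity scores on another scale
fused = fuse_scores(face_scores, palm_scores, w_face=0.6)
best_match = int(np.argmax(fused))  # index of the highest fused score
```

Min-Max scaling is what makes the two modalities comparable here, because the raw face and palm scores live on different ranges. If a matcher returns a Euclidean distance instead of a similarity, the score would need to be inverted (for example, `1 - normalized_distance`) before fusion, so that larger values mean a better match in both modalities.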
