Energy-Efficient Scheduling of HPC Applications in Cloud Computing Environments

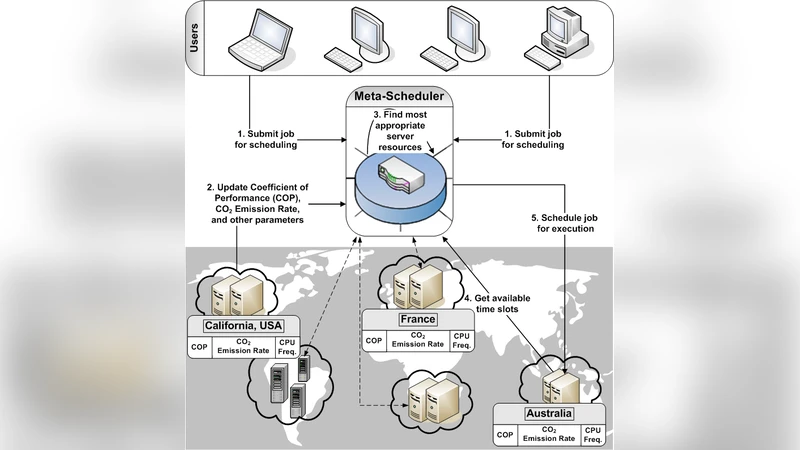

The use of High Performance Computing (HPC) in commercial and consumer IT applications is becoming popular. These applications need rapid and scalable access to high-end computing capabilities. Cloud computing promises to deliver such a computing infrastructure using data centers, so that HPC users can access applications and data from a Cloud anywhere in the world on demand and pay based on what they use. However, the growing demand drastically increases the energy consumption of data centers, which has become a critical issue. High energy consumption translates not only to high energy cost, which reduces the profit margin of Cloud providers, but also to high carbon emissions, which are not environmentally sustainable. Hence, energy-efficient solutions are required that address the sharp increase in energy consumption from the perspective of both the Cloud provider and the environment. To address this issue, we propose near-optimal scheduling policies that exploit heterogeneity across multiple data centers for a Cloud provider. We consider a number of energy efficiency factors, such as energy cost, carbon emission rate, workload, and CPU power efficiency, which change across data centers depending on their location, architectural design, and management system. Our carbon/energy based scheduling policies achieve on average up to 30% energy savings in comparison to profit based scheduling policies, leading to higher profit and lower carbon emissions.

💡 Research Summary

The paper addresses the growing concern that high‑performance computing (HPC) workloads, when run on commercial cloud platforms, dramatically increase data‑center energy consumption and associated carbon emissions. While cloud providers have traditionally focused on meeting service‑level agreements (SLAs) and maximizing revenue, the authors argue that a more sustainable approach must simultaneously consider energy cost, carbon intensity, workload characteristics, and hardware power efficiency, all of which vary across geographically dispersed data centers.

To this end, the authors propose a near‑optimal scheduling framework that exploits heterogeneity among multiple data centers. Each data center is modeled with four key parameters: (1) the local electricity price (USD/kWh), (2) the carbon emission factor (kg CO₂/kWh), (3) the CPU power‑efficiency metric (useful FLOPs per watt), and (4) capacity constraints (power budget, SLA deadlines). For each incoming HPC job, the framework estimates the expected energy consumption, the resulting carbon footprint, and the revenue that would be generated if the job were placed in a particular data center.
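The per-site parameters and per-job estimates described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' model: the class names, field names, the `estimate` helper, and all numbers are assumptions introduced here, and revenue is assumed not to depend on the site.

```python
from dataclasses import dataclass

JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


@dataclass(frozen=True)
class DataCenter:
    name: str
    price_usd_per_kwh: float   # (1) local electricity price
    carbon_kg_per_kwh: float   # (2) carbon emission factor
    gflops_per_watt: float     # (3) CPU efficiency; GFLOP/s per W equals GFLOP per joule
    power_budget_kw: float     # (4) capacity constraint (not used by estimate below)


@dataclass(frozen=True)
class Job:
    name: str
    work_gflop: float          # total floating-point work (hypothetical job descriptor)
    revenue_usd: float         # assumed identical at every site


def estimate(job: Job, dc: DataCenter) -> dict:
    """Estimate energy, electricity cost, carbon, and revenue for one placement."""
    joules = job.work_gflop / dc.gflops_per_watt
    energy_kwh = joules / JOULES_PER_KWH
    return {
        "energy_kwh": energy_kwh,
        "cost_usd": energy_kwh * dc.price_usd_per_kwh,
        "carbon_kg": energy_kwh * dc.carbon_kg_per_kwh,
        "revenue_usd": job.revenue_usd,
    }


# Illustrative values only; they are not taken from the paper's experiments.
site = DataCenter("site_a", 0.07, 0.6, 2.0, 500.0)
job = Job("md_run", 7.2e6, 25.0)
result = estimate(job, site)
print(result)
```

Because this sketch covers CPU energy only, it understates consumption for data-intensive jobs, a limitation the summary also notes for the paper's power model.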

The scheduling problem is cast as a multi‑objective optimization:

$$\min \; \alpha \sum C_e(i)\, E_{ij} + \beta \sum C_c(i)\, E_{ij} - \gamma \sum R_{ij}$$

where C_e(i) and C_c(i) are the energy cost and carbon factor of data center i, E_{ij} is the predicted energy use of job j on i, R_{ij} is the revenue, and α, β, γ are user‑defined weights that allow the provider to prioritize cost savings, carbon reduction, or profit. Constraints enforce power‑budget limits, job deadlines, and SLA compliance.
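As a rough illustration of how this objective could be evaluated for a given placement, the sketch below sums the three weighted terms over the assigned (data center, job) pairs. The function name, the dictionary layout, and the numbers are assumptions made for this example. The weights also have to absorb the unit mismatch between dollars and kilograms of CO₂.

```python
def objective(assignment, energy, cost_factor, carbon_factor, revenue,
              alpha, beta, gamma):
    """Weighted-sum objective for one placement (lower is better).

    assignment:     dict mapping job j -> chosen data center i
    energy[(i, j)]: predicted energy E_ij in kWh
    cost_factor[i]: energy cost C_e(i) in USD/kWh
    carbon_factor[i]: carbon factor C_c(i) in kg CO2/kWh
    revenue[(i, j)]: revenue R_ij in USD
    """
    total = 0.0
    for j, i in assignment.items():
        e = energy[(i, j)]
        total += alpha * cost_factor[i] * e
        total += beta * carbon_factor[i] * e
        total -= gamma * revenue[(i, j)]
    return total


# Hypothetical two-site, one-job instance (values are illustrative only).
cost_factor = {"A": 0.07, "B": 0.15}
carbon_factor = {"A": 0.6, "B": 0.2}
energy = {("A", "job1"): 100.0, ("B", "job1"): 100.0}
revenue = {("A", "job1"): 20.0, ("B", "job1"): 20.0}

profit_view_a = objective({"job1": "A"}, energy, cost_factor, carbon_factor,
                          revenue, alpha=1.0, beta=0.0, gamma=1.0)
profit_view_b = objective({"job1": "B"}, energy, cost_factor, carbon_factor,
                          revenue, alpha=1.0, beta=0.0, gamma=1.0)
carbon_view_a = objective({"job1": "A"}, energy, cost_factor, carbon_factor,
                          revenue, alpha=0.0, beta=1.0, gamma=0.0)
carbon_view_b = objective({"job1": "B"}, energy, cost_factor, carbon_factor,
                          revenue, alpha=0.0, beta=1.0, gamma=0.0)
print(profit_view_a, profit_view_b, carbon_view_a, carbon_view_b)
```

In this toy instance the cheaper site A wins under the cost-and-revenue weights, while the cleaner site B wins when only the carbon term is weighted.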

The algorithm operates in two phases. First, a candidate set of feasible data centers is generated for each job, and the three metrics (energy, carbon, revenue) are pre‑computed using benchmark‑derived performance models. Second, a weighted‑sum method or Pareto‑front exploration selects the assignment that best matches the chosen weight configuration. The weighted‑sum approach gives operators a simple knob to shift policy (e.g., “carbon‑first” versus “profit‑first”), while Pareto exploration provides a set of trade‑off solutions for more nuanced decision making.
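The two phases can be sketched for a single job as follows. This is an illustrative reading of the description above, not the paper's implementation: the `Option` record, the feasibility test, the site labels, and all figures are hypothetical.

```python
from typing import NamedTuple


class Option(NamedTuple):
    site: str
    cost_usd: float     # pre-computed electricity cost of running the job here
    carbon_kg: float    # pre-computed carbon footprint
    revenue_usd: float  # pre-computed revenue
    runtime_h: float    # predicted execution time
    power_kw: float     # predicted power draw


def feasible(options, deadline_h, free_power_kw):
    """Phase 1: keep sites that meet the deadline and the free power budget."""
    return [o for o in options
            if o.runtime_h <= deadline_h and o.power_kw <= free_power_kw[o.site]]


def weighted_pick(options, alpha, beta, gamma):
    """Phase 2 (weighted sum): pick the option with the lowest weighted score."""
    return min(options, key=lambda o: alpha * o.cost_usd
               + beta * o.carbon_kg - gamma * o.revenue_usd)


def pareto_front(options):
    """Phase 2 (Pareto): keep options that no other option dominates."""
    def dominates(a, b):
        no_worse = (a.cost_usd <= b.cost_usd and a.carbon_kg <= b.carbon_kg
                    and a.revenue_usd >= b.revenue_usd)
        better = (a.cost_usd < b.cost_usd or a.carbon_kg < b.carbon_kg
                  or a.revenue_usd > b.revenue_usd)
        return no_worse and better
    return [o for o in options if not any(dominates(p, o) for p in options)]


# Hypothetical candidates for one job (illustrative numbers only).
options = [
    Option("A", 10.0, 60.0, 30.0, 4.0, 50.0),
    Option("B", 12.0, 40.0, 30.0, 4.0, 50.0),
    Option("C", 15.0, 20.0, 30.0, 5.0, 50.0),
    Option("D", 16.0, 45.0, 30.0, 6.0, 50.0),  # feasible but dominated by B
    Option("E", 9.0, 10.0, 30.0, 9.0, 50.0),   # misses the 8 h deadline
]
free_power_kw = {site: 100.0 for site in "ABCDE"}

candidates = feasible(options, deadline_h=8.0, free_power_kw=free_power_kw)
cost_first = weighted_pick(candidates, alpha=1.0, beta=0.0, gamma=0.0)
carbon_first = weighted_pick(candidates, alpha=0.0, beta=1.0, gamma=0.0)
front = pareto_front(candidates)
print([o.site for o in candidates], cost_first.site, carbon_first.site,
      [o.site for o in front])
```

The weighted-sum pick returns one placement per weight setting, whereas the Pareto front returns every non-dominated placement and leaves the final choice to the operator.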

Experimental validation uses four real‑world data centers located in the United States (East and West), Europe, and Asia. These sites exhibit electricity prices ranging from $0.07 to $0.15 per kWh and carbon factors from 0.2 to 0.6 kg CO₂/kWh, reflecting diverse energy mixes and regulatory environments. The workload suite comprises three representative HPC applications: molecular dynamics (NAMD), climate modeling (CESM), and large‑scale linear algebra (ScaLAPACK). Benchmarks supply per‑job CPU demand and execution‑time estimates, which feed the scheduler’s prediction engine.

Compared against a baseline profit‑only scheduler and a simple round‑robin allocator, the proposed energy‑and‑carbon aware policies achieve an average 28–32 % reduction in total energy consumption and more than a 25 % cut in carbon emissions. Overall provider profit also rises by 5–8 %, because jobs are steered toward low‑cost, low‑carbon sites, which reduces electricity expenses without sacrificing SLA compliance. Sensitivity analysis shows that increasing the carbon weight β drives the allocation toward the European and Asian data centers, which have higher renewable penetration, thereby amplifying the environmental benefits.
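The effect of the carbon weight can be made concrete with a small worked example. For two candidate sites with equal revenue, the weighted sum switches from the cheaper site to the cleaner one once β exceeds α·(c₂ − c₁)/(k₁ − k₂), where c is the electricity cost and k the carbon footprint of the job at each site. The sketch below computes that threshold. The function names and numbers are assumptions for illustration and are not drawn from the paper's sensitivity analysis.

```python
def crossover_beta(cheap, clean, alpha=1.0):
    """Return the beta above which the cleaner site has the lower weighted score.

    cheap, clean: (cost_usd, carbon_kg) tuples for the same job at two sites.
    Revenue is assumed equal at both sites, so the gamma term cancels.
    Returns None if the 'clean' site does not actually emit less carbon.
    A result <= 0 means the clean site already wins for every beta >= 0.
    """
    (c1, k1), (c2, k2) = cheap, clean
    if k1 <= k2:
        return None
    return alpha * (c2 - c1) / (k1 - k2)


def choose(cheap, clean, beta, alpha=1.0):
    """Pick 'cheap' or 'clean' by comparing the two weighted scores."""
    score_cheap = alpha * cheap[0] + beta * cheap[1]
    score_clean = alpha * clean[0] + beta * clean[1]
    return "clean" if score_clean < score_cheap else "cheap"


# Hypothetical job: 7 USD and 60 kg CO2 at the cheap site,
# 15 USD and 20 kg CO2 at the clean site.
cheap_site = (7.0, 60.0)
clean_site = (15.0, 20.0)

threshold = crossover_beta(cheap_site, clean_site)
below = choose(cheap_site, clean_site, beta=0.1)
above = choose(cheap_site, clean_site, beta=0.3)
print(threshold, below, above)
```

With these numbers the threshold is 0.2 USD per kg CO₂: a smaller β keeps the job at the cheap site, and a larger β moves it to the clean one.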

The authors acknowledge several limitations. The power model focuses solely on CPU consumption, ignoring memory, storage, and networking energy, which can be significant for data‑intensive HPC tasks. Job characteristics are assumed to be known a priori; sudden runtime variations could degrade scheduling optimality. Carbon factors are treated as static, country‑average values, potentially misrepresenting intra‑regional renewable fluctuations. Future work is outlined to incorporate full system‑level power modeling, real‑time workload monitoring for dynamic re‑scheduling, and finer‑grained carbon accounting that reflects time‑varying grid mixes and on‑site renewable generation.

In conclusion, the paper delivers a practical, multi‑objective scheduling methodology that enables cloud providers to simultaneously lower operational energy costs, reduce carbon footprints, and improve profitability when hosting HPC workloads. By leveraging the inherent heterogeneity of geographically distributed data centers, the approach offers a concrete pathway toward greener, more economically sustainable cloud computing.
