Network exploration via the adaptive LASSO and SCAD penalties

Graphical models are frequently used to explore networks, such as genetic networks, among a set of variables. This is usually carried out by exploring the sparsity of the precision matrix of the variables under consideration. Penalized likelihood methods are often used in such explorations. Yet, positive-definiteness constraints of precision matrices make the optimization problem challenging. We introduce nonconcave penalties and the adaptive LASSO penalty to attenuate the bias problem in network estimation. Through the local linear approximation to the nonconcave penalty functions, the problem of precision matrix estimation is recast as a sequence of penalized likelihood problems with a weighted $L_1$ penalty and solved using the efficient algorithm of Friedman et al. [Biostatistics 9 (2008) 432–441]. Our estimation schemes are applied to two real datasets. Simulation experiments and asymptotic theory are used to justify our proposed methods.
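For reference, the estimation problem and its local linear approximation can be written as follows. This is a sketch in our own notation (Ω for the precision matrix, S for the sample covariance, p_λ for a generic penalty); constant factors such as n/2 are absorbed into λ, and restricting the penalty to off-diagonal entries is an assumption rather than a detail taken from the paper.

```latex
\hat{\Omega} = \arg\max_{\Omega \succ 0}
  \Big\{ \log\det\Omega - \operatorname{tr}(S\Omega)
         - \sum_{i \neq j} p_{\lambda}\big(|\omega_{ij}|\big) \Big\}

% LLA: linearize the penalty in |\omega_{ij}| around the current iterate \Omega^{(k)}
p_{\lambda}(|\omega_{ij}|) \approx p_{\lambda}\big(|\omega_{ij}^{(k)}|\big)
  + p_{\lambda}'\big(|\omega_{ij}^{(k)}|\big)\big(|\omega_{ij}| - |\omega_{ij}^{(k)}|\big)

% Dropping the terms that do not depend on \Omega leaves a weighted-L1 step
\Omega^{(k+1)} = \arg\max_{\Omega \succ 0}
  \Big\{ \log\det\Omega - \operatorname{tr}(S\Omega)
         - \sum_{i \neq j} w_{ij}^{(k)} |\omega_{ij}| \Big\},
\qquad w_{ij}^{(k)} = p_{\lambda}'\big(|\omega_{ij}^{(k)}|\big)
```

For the adaptive LASSO the weights are fixed in advance, w_ij = λ/|ω̃_ij|^γ for an initial estimate ω̃ and some γ > 0, so a single weighted-L1 solve suffices.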

Research Summary

The paper addresses the problem of estimating sparse precision (inverse covariance) matrices for network exploration with graphical models, a task central to many high-dimensional biological studies, such as the inference of gene-regulatory or metabolic networks. The graphical Lasso (GLasso), which imposes an ℓ₁ penalty on the entries of the precision matrix, has become the workhorse for this purpose. However, the ℓ₁ penalty shrinks every non-zero entry toward zero by the same amount, which introduces substantial bias into the estimated strength of true edges; the bias matters most precisely when the underlying signals are strong.
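The bias is easiest to see in the scalar, orthonormal-design case, where each penalty reduces to a thresholding rule. The sketch below compares the ℓ₁ (soft) thresholding rule with the SCAD thresholding rule of Fan and Li (2001), using λ = 1 and their suggested a = 3.7. It illustrates the penalties themselves, not the paper's network estimator.

```python
import numpy as np


def soft_threshold(z, lam):
    """l1 (Lasso) rule for a scalar orthonormal problem: shrink toward 0 by lam."""
    return np.sign(z) * np.maximum(np.abs(z) - lam, 0.0)


def scad_threshold(z, lam, a=3.7):
    """SCAD thresholding rule (Fan and Li, 2001) for a scalar orthonormal problem."""
    z = np.asarray(z, dtype=float)
    absz = np.abs(z)
    return np.where(
        absz <= 2 * lam,
        soft_threshold(z, lam),                                  # behaves like l1 near zero
        np.where(
            absz <= a * lam,
            ((a - 1) * z - np.sign(z) * a * lam) / (a - 2),      # linear transition zone
            z,                                                    # large values left unchanged
        ),
    )


z = np.array([0.5, 1.5, 3.0, 10.0])
print(soft_threshold(z, 1.0))  # every surviving entry is shrunk by exactly 1.0
print(scad_threshold(z, 1.0))  # entries with |z| > a * lam are returned as-is
```

Both rules set 0.5 to zero, but soft thresholding turns 10.0 into 9.0 while SCAD returns 10.0 unchanged: the constant shrinkage of the ℓ₁ rule is exactly the bias described above.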

To mitigate this bias, the authors introduce two alternatives to the plain ℓ₁ penalty: the non-concave Smoothly Clipped Absolute Deviation (SCAD) penalty and the adaptive Lasso, which is a weighted ℓ₁ penalty. SCAD, originally proposed by Fan and Li (2001), behaves like the ℓ₁ penalty near zero, so it keeps the sparsity-inducing behavior for small coefficients, but it levels off to a constant for large coefficients, so strong connections are not shrunk and are therefore preserved. The adaptive Lasso instead weights the penalty on each coefficient inversely to the magnitude of an initial estimate (often the ordinary GLasso solution), reducing the penalty on coefficients that appear important and increasing it on those that seem negligible. Both penalties are known to enjoy the oracle property under suitable regularity conditions: they recover the zero/non-zero pattern with probability tending to one, and they estimate the non-zero entries with the same asymptotic efficiency as if the true sparsity pattern were known in advance.
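The two penalties can be written down directly. This minimal NumPy sketch evaluates the SCAD penalty of Fan and Li (2001) with their commonly used a = 3.7, and the adaptive-Lasso weights λ/|θ̃|^γ. The choice γ = 0.5 and the small `eps` guarding against division by zero are illustrative assumptions, not values taken from the paper.

```python
import numpy as np


def scad_penalty(t, lam, a=3.7):
    """SCAD penalty p_lam(|t|) of Fan and Li (2001), evaluated elementwise."""
    t = np.abs(np.asarray(t, dtype=float))
    small = lam * t                                            # l1-like for |t| <= lam
    middle = (2 * a * lam * t - t**2 - lam**2) / (2 * (a - 1))  # quadratic transition
    large = np.full_like(t, (a + 1) * lam**2 / 2)               # flat for |t| > a * lam
    return np.where(t <= lam, small, np.where(t <= a * lam, middle, large))


def adaptive_lasso_weights(theta_init, lam, gamma=0.5, eps=1e-10):
    """Adaptive-Lasso weights lam / |theta_init|**gamma.

    gamma = 0.5 and eps (which avoids dividing by exact zeros in the initial
    estimate) are assumptions made for this illustration.
    """
    return lam / (np.abs(theta_init) + eps) ** gamma


print(scad_penalty([0.5, 2.0, 5.0, 50.0], lam=1.0))
print(adaptive_lasso_weights(np.array([2.0, 0.01]), lam=1.0))
```

With λ = 1, the SCAD penalty takes the same value, (a + 1)λ²/2 = 2.35, at 5.0 and at 50.0: beyond aλ the penalty is flat and adds no further shrinkage. The adaptive weights, by contrast, penalize the entry whose initial estimate is 0.01 far more heavily than the one whose initial estimate is 2.0.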

Directly optimizing a non-concave penalized likelihood is computationally demanding. The authors adopt the local linear approximation (LLA) strategy, which replaces the non-concave penalty by its first-order (linear) approximation in |θ_ij| around the current estimate, yielding a weighted ℓ₁ problem at each iteration. This transformation allows the use of the highly efficient coordinate-descent algorithm of Friedman, Hastie, and Tibshirani (2008) that underlies the GLasso software. Consequently, each LLA iteration solves a GLasso-type problem with updated weights, and the overall procedure converges to a local optimum of the original non-concave objective. (The adaptive Lasso penalty is already a weighted ℓ₁ penalty, so it requires only a single such solve.) The paper provides a convergence proof for the LLA loop and shows that the final estimator inherits the desirable asymptotic properties of SCAD and the adaptive Lasso.
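The LLA loop can be sketched in NumPy as below. `weighted_glasso` is a hypothetical, minimal re-implementation of the block coordinate-descent scheme of Friedman et al. (2008), extended to an elementwise penalty matrix; it is not the API of any glasso package. Further assumptions: only off-diagonal entries are penalized, the loop starts from the unweighted graphical-Lasso estimate, and a fixed small number of LLA steps is run. In practice an established solver that accepts a penalty matrix (the R `glasso` package, for example, accepts a matrix-valued `rho`) would be preferable.

```python
import numpy as np


def soft_threshold(z, t):
    """sign(z) * max(|z| - t, 0)."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def scad_derivative(t, lam, a=3.7):
    """p'_lam(|t|) for the SCAD penalty; these are the LLA weights."""
    t = np.abs(t)
    return lam * ((t <= lam) + np.maximum(a * lam - t, 0.0) / ((a - 1) * lam) * (t > lam))


def weighted_glasso(S, Lam, max_iter=100, tol=1e-6, inner_iter=200):
    """Hypothetical sketch: maximize log det(Theta) - tr(S Theta)
    - sum_{i != j} Lam[i, j] * |Theta[i, j]| by block coordinate descent on
    W = inv(Theta), in the style of Friedman et al. (2008)."""
    p = S.shape[0]
    W = S.copy()          # diagonal is unpenalized, so W[j, j] stays S[j, j]
    B = np.zeros((p, p))  # column j: lasso coefficients for node j (warm starts)
    for _ in range(max_iter):
        W_old = W.copy()
        for j in range(p):
            idx = np.arange(p) != j
            W11 = W[np.ix_(idx, idx)]
            s12, lam, beta = S[idx, j], Lam[idx, j], B[idx, j]
            # Weighted lasso: min 0.5 b'W11 b - s12'b + sum_k lam_k |b_k|
            for _ in range(inner_iter):
                beta_old = beta.copy()
                for k in range(p - 1):
                    r = s12[k] - W11[k] @ beta + W11[k, k] * beta[k]
                    beta[k] = soft_threshold(r, lam[k]) / W11[k, k]
                if np.max(np.abs(beta - beta_old)) < tol:
                    break
            B[idx, j] = beta
            W[idx, j] = W[j, idx] = W11 @ beta
        if np.max(np.abs(W - W_old)) < tol:
            break
    # Recover Theta column by column from W and the final coefficients
    Theta = np.zeros((p, p))
    for j in range(p):
        idx = np.arange(p) != j
        theta_jj = 1.0 / (W[j, j] - W[idx, j] @ B[idx, j])
        Theta[j, j] = theta_jj
        Theta[idx, j] = -B[idx, j] * theta_jj
    return (Theta + Theta.T) / 2  # remove tiny numerical asymmetry


def lla_scad_glasso(S, lam, a=3.7, n_lla=3):
    """LLA for SCAD: every step is a weighted graphical Lasso solve."""
    off = 1.0 - np.eye(S.shape[0])
    Theta = weighted_glasso(S, lam * off)  # step 0: ordinary (unweighted) GLasso
    for _ in range(n_lla):
        Theta = weighted_glasso(S, scad_derivative(Theta, lam, a) * off)
    return Theta
```

`scad_derivative` returns λ for entries at zero and 0 for entries larger than aλ, so each LLA step leaves strong edges unpenalized while continuing to threshold weak ones. For the adaptive Lasso, one would instead call `weighted_glasso` once with the fixed weight matrix.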

Theoretical contributions include: (i) consistency of the precision matrix estimator in the high‑dimensional regime (p≫n); (ii) selection consistency (the probability of correctly recovering the sparsity pattern tends to one); and (iii) oracle efficiency for the non‑zero entries. The authors also discuss how the positive‑definiteness constraint on the precision matrix is automatically respected by the GLasso sub‑solver, eliminating the need for additional projection steps.

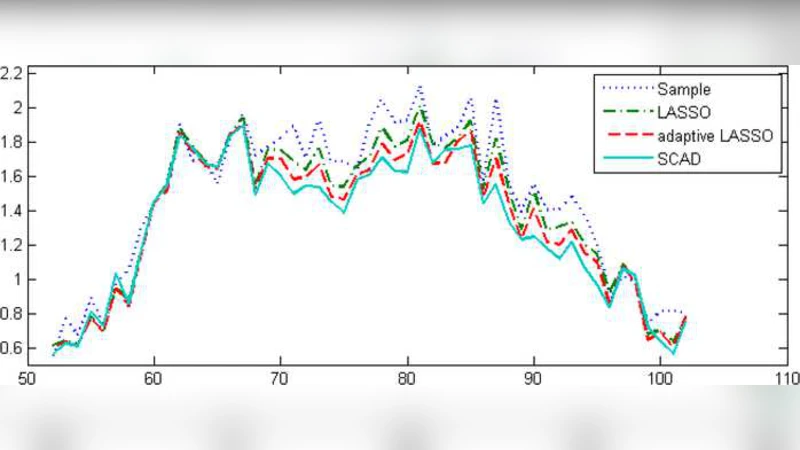

Extensive simulation studies are conducted across three graph topologies (Erdős-Rényi, scale-free, and random sparse graphs), with sample sizes n = 50, 100, 200 and dimensions p = 100, 200, 500. Performance metrics include the area under the ROC curve (AUC), the F₁-score, and the proportion of correctly recovered edges. Results consistently show that both SCAD-LLA and adaptive-Lasso-LLA outperform the standard GLasso, with SCAD often achieving the highest AUC in scale-free settings, where a few strong hubs dominate. The adaptive Lasso performs robustly across all settings, particularly when the initial GLasso estimate is reasonably accurate. Sensitivity analyses indicate that selecting the tuning parameter by cross-validation balances sparsity against fit and avoids over-penalization.
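Edge-recovery metrics of this kind can be computed by comparing the supports of the estimated and true precision matrices. The sketch below is our own illustration: the paper's exact definitions may differ, the zero threshold `tol` is an assumption, and "proportion of correctly recovered edges" is interpreted here as the true-positive rate. Repeating the computation over a grid of λ values and plotting TPR against FPR traces the ROC curve whose area is the AUC.

```python
import numpy as np


def edge_recovery_metrics(Theta_hat, Theta_true, tol=1e-8):
    """Compare edge sets (non-zero off-diagonal entries, upper triangle only)."""
    p = Theta_true.shape[0]
    iu = np.triu_indices(p, k=1)           # each undirected edge counted once
    est = np.abs(Theta_hat[iu]) > tol       # estimated edges
    true = np.abs(Theta_true[iu]) > tol     # true edges
    tp = np.sum(est & true)
    fp = np.sum(est & ~true)
    fn = np.sum(~est & true)
    tn = np.sum(~est & ~true)
    tpr = tp / max(tp + fn, 1)              # share of true edges recovered
    fpr = fp / max(fp + tn, 1)              # share of non-edges wrongly selected
    precision = tp / max(tp + fp, 1)
    f1 = 2 * precision * tpr / max(precision + tpr, 1e-12)
    return {"TPR": tpr, "FPR": fpr, "F1": f1}
```

For example, if the true graph is the chain 0-1-2-3 and the estimate contains edges 0-1, 1-2 and 0-3, two of three true edges are found and one of three non-edges is wrongly selected, giving TPR = FPR... no: TPR = 2/3, FPR = 1/3 and F₁ = 2/3.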

Two real-world applications illustrate the practical impact. In a breast-cancer microarray dataset (p ≈ 200 genes), the proposed methods uncover several gene-gene interactions missed by GLasso, many of which have been reported in the literature as relevant to tumor progression. In a metabolomics dataset (p ≈ 150 metabolites), the estimated network exhibits a clearer modular structure that aligns with known biochemical pathways and facilitates biological interpretation.

In summary, the paper presents a compelling framework that couples non‑concave penalties with the LLA scheme to reduce bias in precision‑matrix estimation while retaining the computational scalability of the graphical Lasso. The combination of rigorous asymptotic theory, thorough simulation validation, and successful real‑data analyses positions the method as a valuable addition to the toolbox of statisticians and bioinformaticians working on high‑dimensional network inference. Future directions suggested include automated selection of penalty parameters via information criteria, extensions to time‑varying or directed graphical models, and integration with Bayesian hierarchical structures.