On the Stability of Community Detection Algorithms on Longitudinal Citation Data

Citation networks differ fundamentally from other classes of graphs. Because they are directed and acyclic, methods developed primarily for undirected social network data may face obstacles, particularly when tracking how community structure develops over time. It is clear neither when existing community detection approaches are appropriate nor how to choose among them. Using simulated data, we attempt to clarify the conditions under which existing methods should be used and which of these algorithms is appropriate in a given context. We hope this paper will serve both as a useful guidepost and as an encouragement to those interested in developing more targeted approaches for longitudinal citation data.

💡 Research Summary

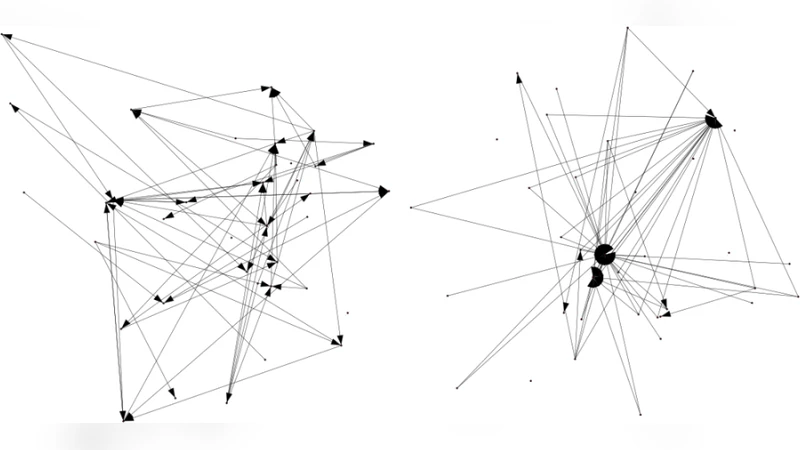

The paper addresses a fundamental gap in network science: the majority of community‑detection methods have been designed for undirected, static graphs, yet citation networks are inherently directed, acyclic, and evolve over time. Because citations always point from newer to older works, the resulting graph is a directed acyclic graph (DAG) that grows as new nodes (papers, patents, etc.) are added and edges (citations) are created. This structural peculiarity violates the assumptions of many popular algorithms such as Louvain, Leiden, and the original Infomap, which either ignore edge direction or assume the possibility of cycles. Consequently, it is unclear when these methods can be safely applied to citation data and how to choose among them for longitudinal analyses of community evolution.
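To make the structural point concrete, the following minimal sketch checks that a small citation edge list is acyclic and shows what symmetrization discards. The function names `is_acyclic` and `symmetrize` and the toy edge list are illustrative assumptions, not part of the paper; edges are written as `(citing, cited)` pairs.

```python
from graphlib import CycleError, TopologicalSorter  # standard library, Python 3.9+


def is_acyclic(edges):
    """Return True if the directed edge list contains no cycle."""
    deps = {}
    for citing, cited in edges:
        # TopologicalSorter expects node -> set of predecessors.
        deps.setdefault(citing, set()).add(cited)
    try:
        list(TopologicalSorter(deps).static_order())
        return True
    except CycleError:
        return False


def symmetrize(edges):
    """Drop edge direction, as undirected methods implicitly do."""
    return {frozenset(edge) for edge in edges}


# Hypothetical toy data: paper 3 cites papers 1 and 2, paper 2 cites paper 1.
citations = [(3, 1), (3, 2), (2, 1)]
reversed_citations = [(cited, citing) for citing, cited in citations]

print(is_acyclic(citations))
print(symmetrize(citations) == symmetrize(reversed_citations))
```

After symmetrization the original graph and its time-reversed copy are indistinguishable, which is one way to see why an undirected method cannot exploit the newer-to-older ordering.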

To answer these questions, the authors construct a flexible simulation framework that mimics the growth dynamics of real citation networks. The simulator incorporates four key parameters: (1) a Poisson process governing the yearly arrival of new documents, (2) an average out‑degree controlling how many references each new document makes, (3) a community‑mixing ratio that determines the probability that a reference stays within the same topical community versus crossing to another, and (4) the initial number and size distribution of communities. By varying these parameters, the authors generate four distinct scenarios: low‑growth/high‑density, high‑growth/low‑density, clearly separated communities, and fuzzy‑boundary communities. For each synthetic network a “ground‑truth” community labeling is embedded, providing a benchmark for algorithmic evaluation.
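The four parameters can be illustrated with a small growth simulator. This is a sketch under stated assumptions rather than the authors' implementation: the function name, the Poisson-distributed out-degree, the uniform choice of a new document's community, and the reading of `mixing` as the probability that a reference crosses to another community are all choices made here for illustration.

```python
import math
import random


def poisson(rng, lam):
    """Sample Poisson(lam) with Knuth's method (adequate for small lam)."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def simulate_citation_dag(years, arrival_rate, mean_out_degree, mixing,
                          community_sizes, seed=0):
    """Grow a synthetic citation DAG with planted communities.

    Returns (edges, labels). Each edge is (citing, cited) with citing > cited,
    and labels[i] is the ground-truth community of node i.
    """
    if any(size < 1 for size in community_sizes):
        raise ValueError("every initial community needs at least one node")
    rng = random.Random(seed)
    n_comm = len(community_sizes)
    # (4) Initial communities: seed documents with no outgoing citations.
    labels = [c for c, size in enumerate(community_sizes) for _ in range(size)]
    members = [[i for i, c in enumerate(labels) if c == k] for k in range(n_comm)]
    edges = []
    for _ in range(years):
        # (1) Poisson-distributed number of new documents per year.
        for _ in range(poisson(rng, arrival_rate)):
            node = len(labels)
            comm = rng.randrange(n_comm)  # assumption: uniform community choice
            # (2) Number of references, drawn around the average out-degree.
            n_refs = min(poisson(rng, mean_out_degree), node)
            cited = set()
            for _ in range(n_refs):
                # (3) With probability `mixing`, cite into another community.
                if n_comm > 1 and rng.random() < mixing:
                    other = rng.choice([k for k in range(n_comm) if k != comm])
                    pool = members[other]
                else:
                    pool = members[comm]
                cited.add(rng.choice(pool))  # only older nodes are in the pools
            edges.extend((node, target) for target in sorted(cited))
            labels.append(comm)
            members[comm].append(node)
    return edges, labels


edges, labels = simulate_citation_dag(
    years=10, arrival_rate=5.0, mean_out_degree=3.0, mixing=0.1,
    community_sizes=[4, 4, 4], seed=42)
print(len(labels), "documents,", len(edges), "citations")
```

Because a new node is added to its community pool only after its references are drawn, every edge points to a strictly older node, so the output is acyclic by construction. Raising `mixing` toward 1 would correspond to the fuzzy-boundary scenario, while lowering it toward 0 gives clearly separated communities.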

The study evaluates three families of community‑detection techniques. The first family consists of traditional undirected methods applied after symmetrizing the citation graph (Louvain, Leiden, and standard Infomap). The second family includes direction‑preserving extensions (Directed‑Louvain and Directed‑Infomap) that treat edge orientation explicitly but still operate on a static snapshot. The third family comprises dynamic probabilistic models, most notably a Dynamic Stochastic Block Model (DSBM) that jointly infers community assignments and temporal evolution, as well as a recent Temporal Graph Neural Network (TGNN) approach. For each algorithm the authors compute (i) static similarity metrics (Normalized Mutual Information, Adjusted Rand Index) against the ground truth, (ii) a temporal stability metric based on Jaccard similarity of successive community partitions, and (iii) computational cost (runtime and memory footprint).
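As a rough illustration of metrics (i) and (ii), the sketch below computes Normalized Mutual Information against a ground-truth labeling and a Jaccard-based stability score between two successive partitions. The summary does not give the exact formulas, so both are assumptions: NMI here uses arithmetic-mean normalization, and the stability score is a size-weighted best-match Jaccard over nodes present in both snapshots. The function names are hypothetical.

```python
import math
from collections import Counter


def nmi(labels_a, labels_b):
    """Normalized Mutual Information with arithmetic-mean normalization."""
    n = len(labels_a)
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    joint = Counter(zip(labels_a, labels_b))
    mutual_info = sum(
        (n_ab / n) * math.log(n_ab * n / (count_a[a] * count_b[b]))
        for (a, b), n_ab in joint.items())
    h_a = -sum((c / n) * math.log(c / n) for c in count_a.values())
    h_b = -sum((c / n) * math.log(c / n) for c in count_b.values())
    if h_a + h_b == 0:
        return 1.0  # both partitions are a single cluster
    return 2 * mutual_info / (h_a + h_b)


def temporal_stability(prev, curr):
    """Size-weighted best-match Jaccard between two successive partitions.

    prev and curr map node -> community label; only nodes present in both
    snapshots are compared, since new documents have no earlier assignment.
    """
    shared = prev.keys() & curr.keys()
    if not shared:
        raise ValueError("the two snapshots share no nodes")

    def groups(assignment):
        out = {}
        for node in shared:
            out.setdefault(assignment[node], set()).add(node)
        return list(out.values())

    prev_groups, curr_groups = groups(prev), groups(curr)
    total = 0.0
    for g in prev_groups:
        best = max(len(g & h) / len(g | h) for h in curr_groups)
        total += len(g) * best
    return total / len(shared)


# Hypothetical snapshots: one community of four papers splits in two at t+1,
# and a fifth paper arrives.
snapshot_t = {1: "a", 2: "a", 3: "a", 4: "a"}
snapshot_t1 = {1: "x", 2: "x", 3: "y", 4: "y", 5: "y"}
print(temporal_stability(snapshot_t, snapshot_t1))
```

Both scores are invariant to how communities are named, which matters because most detection algorithms assign arbitrary labels at each time step.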

Results reveal a nuanced landscape. Undirected methods perform surprisingly well in terms of NMI when the network is sparse and communities are well separated, but their temporal stability collapses rapidly as the DAG expands; the loss of directionality leads to frequent re‑assignments of nodes across time steps. Directed‑Infomap maintains high NMI and stability during early growth phases, yet it tends to over‑split communities once the average out‑degree surpasses a critical threshold, causing a sharp drop in ARI. The DSBM, while computationally the most demanding, delivers the most consistent community tracking across all scenarios, especially when inter‑community citation rates are low and the growth rate is moderate. In the high‑mixing, rapid‑growth scenario, even DSBM shows modest degradation, suggesting a ceiling for any model that relies on relatively stable block structures. The TGNN approach offers a middle ground: better scalability than DSBM and improved temporal coherence over static directed methods, but it still lags behind DSBM in absolute accuracy.

Based on these findings, the authors propose practical guidelines for researchers working with longitudinal citation data. For exploratory analyses on early‑stage or low‑density corpora, lightweight direction‑aware algorithms such as Directed‑Infomap are recommended for their speed and acceptable accuracy. When the goal is to monitor the evolution of well‑defined research fronts over long periods (for example, in policy evaluation, funding impact studies, or systematic literature reviews), dynamic probabilistic models such as DSBM should be the method of choice despite their higher computational overhead. Undirected methods can serve as quick sanity checks or as a source of initial community seeds, but they should not be relied upon for longitudinal inference. Crucially, the authors stress that “temporal stability” must be treated as a primary evaluation criterion alongside traditional static similarity scores.

The paper concludes by outlining future research directions. First, there is a need for scalable Bayesian inference techniques that retain the robustness of DSBM while handling millions of nodes typical of large bibliographic databases. Second, integrating textual content, citation context, and metadata (author affiliations, funding sources) could produce richer, multimodal community definitions. Third, developing truly online algorithms that update community assignments incrementally as each new citation arrives would enable real‑time monitoring of emerging scientific topics. By highlighting both the limitations of existing tools and the promise of more tailored approaches, the study aims to serve as a roadmap for scholars seeking reliable community detection in the dynamic, directed world of citation networks.
