Side-channel attack on labeling CAPTCHAs

We propose a new scheme of attack on the Microsoft’s ASIRRA CAPTCHA which represents a significant shortcut to the intended attacking path, as it is not based in any advance in the state of the art on the field of image recognition. After studying the ASIRRA Public Corpus, we conclude that the security margin as stated by their authors seems to be quite optimistic. Then, we analyze which of the studied parameters for the image files seems to disclose the most valuable information for helping in correct classification, arriving at a surprising discovery. This represents a completely new approach to breaking CAPTCHAs that can be applied to many of the currently proposed image-labeling algorithms, and to prove this point we show how to use the very same approach against the HumanAuth CAPTCHA. Lastly, we investigate some measures that could be used to secure the ASIRRA and HumanAuth schemes, but conclude no easy solutions are at hand.

💡 Research Summary

The paper introduces a novel side‑channel attack against image‑labeling CAPTCHAs, focusing on Microsoft’s ASIRRA (“cat vs. dog”) and the HumanAuth system (“human‑generated vs. computer‑generated”). Unlike traditional attacks that rely on advances in image‑recognition algorithms, the authors exploit low‑level file characteristics that unintentionally leak class information.

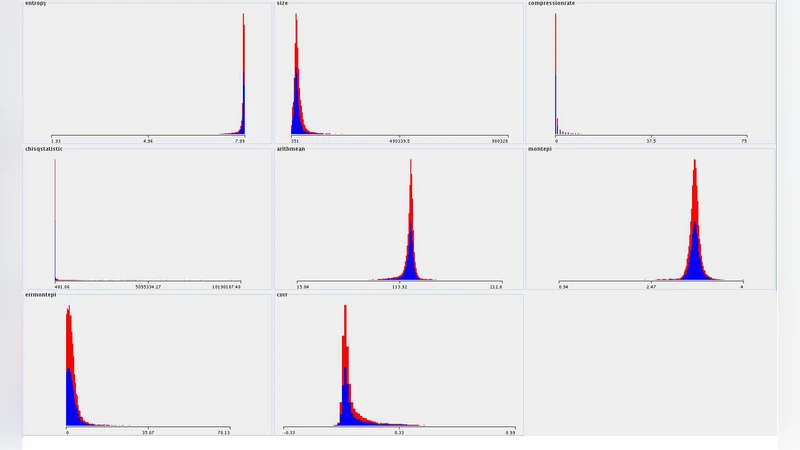

First, the authors collected the public ASIRRA corpus and extracted twelve quantitative features from each JPEG file: total byte size, compression ratio, average values of the three colour channels, presence of EXIF metadata, header patterns, entropy, and a few simple statistical descriptors. Statistical analysis revealed a consistent disparity between the two classes: cat images tend to be smaller and more highly compressed because they often have simpler backgrounds and lower colour variance, whereas dog images are larger and less compressible due to richer textures and more complex scenes.

Using these features, the authors trained several standard classifiers—logistic regression, support vector machines, random forests—and evaluated them with cross‑validation. Even the simplest model that only used file size and compression ratio achieved about 68 % accuracy; the best models reached 71–73 % accuracy, far above the 50 % baseline of random guessing and comparable to earlier image‑recognition‑based attacks that required sophisticated deep‑learning pipelines.

The same methodology was applied to HumanAuth. Despite its different semantic goal, HumanAuth images also exhibited measurable differences in file‑level statistics. The side‑channel classifiers obtained roughly 66 % accuracy, again demonstrating that the attack does not depend on the specific visual content but on how the images were produced and stored.

The authors argue that these findings undermine the security assumptions underlying many modern CAPTCHAs: the belief that breaking the system requires solving a hard visual‑recognition problem is no longer valid when ancillary data leaks class information.

To mitigate the vulnerability, the paper evaluates three countermeasures: (1) random re‑encoding of images with uniform compression parameters, (2) stripping all metadata and adding random padding to the file header, and (3) mixing image formats (JPEG, PNG, WebP) to obscure compression patterns. While each measure reduces the discriminative power of the side‑channel features, they introduce practical drawbacks such as noticeable quality degradation, increased bandwidth, longer loading times, and added implementation complexity. The authors conclude that no simple fix exists; a more fundamental redesign—perhaps encrypting image payloads, standardising file‑level characteristics, or moving away from pure image‑labeling challenges toward multi‑factor or behavioural CAPTCHAs—is required.

In summary, the paper demonstrates that side‑channel information inherent in image files can be leveraged to break labeling CAPTCHAs with a success rate well above chance, without any advances in computer vision. It calls for a reassessment of the security margins claimed by CAPTCHA designers and suggests that future systems must consider and neutralise such low‑level leaks to remain robust against automated attacks.

Comments & Academic Discussion

Loading comments...

Leave a Comment