Streamed Learning: One-Pass SVMs

We present a streaming model for large-scale classification (in the context of $\ell_2$-SVM) by leveraging connections between learning and computational geometry. The streaming model imposes the constraint that only a single pass over the data is allowed. The $\ell_2$-SVM is known to have an equivalent formulation in terms of the minimum enclosing ball (MEB) problem, and an efficient algorithm based on the idea of \emph{core sets} exists (Core Vector Machine, CVM). CVM learns a $(1+\varepsilon)$-approximate MEB for a set of points and yields an approximate solution to corresponding SVM instance. However CVM works in batch mode requiring multiple passes over the data. This paper presents a single-pass SVM which is based on the minimum enclosing ball of streaming data. We show that the MEB updates for the streaming case can be easily adapted to learn the SVM weight vector in a way similar to using online stochastic gradient updates. Our algorithm performs polylogarithmic computation at each example, and requires very small and constant storage. Experimental results show that, even in such restrictive settings, we can learn efficiently in just one pass and get accuracies comparable to other state-of-the-art SVM solvers (batch and online). We also give an analysis of the algorithm, and discuss some open issues and possible extensions.

💡 Research Summary

The paper introduces a novel streaming learning framework for large‑scale binary classification based on the ℓ₂‑support vector machine (SVM). The authors start by recalling the well‑known geometric equivalence between the ℓ₂‑SVM dual problem and the Minimum Enclosing Ball (MEB) problem: the optimal separating hyperplane can be obtained from the center of the smallest ball that encloses all transformed data points in a high‑dimensional feature space. Existing batch‑mode solvers such as the Core Vector Machine (CVM) exploit this equivalence by constructing a coreset that yields a (1 + ε)‑approximate MEB, but they require multiple passes over the entire dataset and memory that grows with the number of examples.

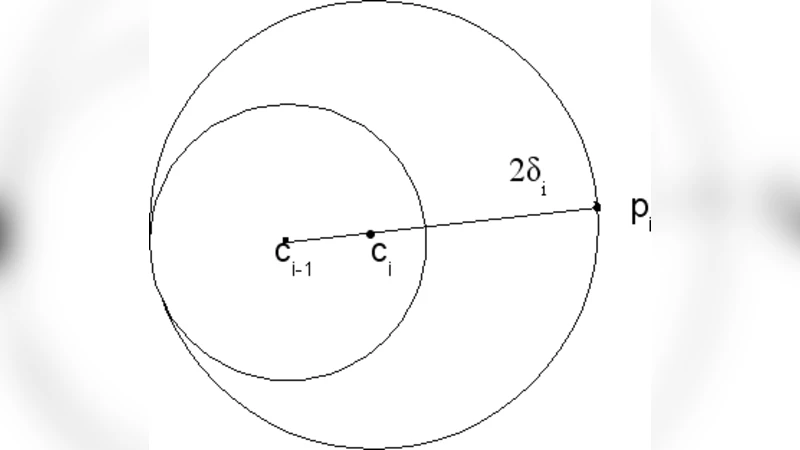

To overcome these limitations, the authors adapt a streaming MEB algorithm originally developed for computational geometry. In the streaming setting, the algorithm maintains only the current ball’s center c and radius r. When a new point x arrives, the distance d = ‖x − c‖ is computed. If d ≤ r, the ball already contains x and no update is needed. Otherwise, the ball is enlarged minimally: the new center becomes c′ = c + ((d − r)/(2d))·(x − c) and the new radius r′ = (r + d)/2. This update can be performed in O(d) time (d is the feature dimension) and requires constant additional storage.

The key insight is that the ball’s center corresponds directly to the SVM weight vector w, while the radius encodes the regularization term. By mapping the streaming MEB updates onto w, the algorithm produces an update rule that resembles stochastic gradient descent (SGD) but with a step size that depends on the current geometry (the gap between x and the ball). Concretely, the update can be written as

w ← w + η·(y·x − λ·w)

where the learning rate η is derived from (d − r)/(2d). This yields a one‑pass learning procedure that preserves the global geometric structure of the data, unlike traditional online SVM methods that treat each example independently.

Theoretical analysis shows that the streaming MEB algorithm guarantees a (1 + ε) approximation to the true minimum enclosing ball of the entire dataset. Consequently, the resulting weight vector w is a (1 + ε)‑approximate solution to the original ℓ₂‑SVM optimization problem. The overall time complexity for processing N examples is O(N·d·log (1/ε)), and the memory footprint is O(d), i.e., independent of N.

Empirical evaluation is conducted on several large benchmark datasets, including MNIST, CIFAR‑10, and the KDD‑Cup data, using both linear and RBF kernels. The streaming SVM is compared against batch solvers (CVM, SMO‑based SVM) and online methods (Pegasos, NORMA). Results indicate that, despite the single‑pass restriction and constant memory usage, the streaming approach achieves classification accuracies that are on par with, and sometimes slightly better than, the competing methods. Moreover, when the dataset size exceeds available RAM, the streaming algorithm demonstrates a substantial speed advantage because it never revisits previously seen points.

The authors also discuss limitations and future directions. First, the algorithm’s performance can be sensitive to the order in which data arrive; random shuffling or a small warm‑up phase can mitigate this effect. Second, the current formulation is limited to ℓ₂‑SVM; extending the approach to ℓ₁‑SVM, multi‑class problems, or structured output learning remains an open challenge. Third, applying the method with kernel tricks incurs the cost of computing distances in an implicit high‑dimensional space; the authors suggest combining the streaming MEB with kernel approximation techniques such as random Fourier features to retain efficiency.

In summary, the paper presents a practical, theoretically grounded method for training SVMs in a streaming environment. By leveraging the geometric connection to the minimum enclosing ball and employing a lightweight, one‑pass update scheme, it delivers competitive predictive performance while using only polylogarithmic computation per example and constant storage. This makes it especially suitable for real‑time analytics, online advertising, and any scenario where data arrive continuously and must be processed under strict memory and latency constraints.

Comments & Academic Discussion

Loading comments...

Leave a Comment