Delayed rejection schemes for efficient Markov-Chain Monte-Carlo sampling of multimodal distributions

Problems in many fields are characterised by target distributions with a multimodal structure, in which the presence of several isolated local maxima dramatically reduces the efficiency of Markov Chain Monte Carlo sampling algorithms. Several solutions, such as simulated tempering or the use of parallel chains, have been proposed to facilitate the exploration of the relevant parameter space. They provide effective strategies when the dimension of the parameter space is small and/or the computational costs are not a limiting factor. These approaches fail, however, in high-dimensional spaces where the multimodal structure is induced by degeneracies between regions of the parameter space. In this paper we present a fully Markovian way to efficiently sample this kind of distribution, based on the general Delayed Rejection scheme with an arbitrary number of steps, and provide details for an efficient numerical implementation of the algorithm.

💡 Research Summary

The paper addresses a fundamental difficulty in Markov‑Chain Monte‑Carlo (MCMC) sampling: efficiently exploring target distributions that are multimodal in high‑dimensional spaces. In such problems the modes are often separated by narrow “bridges” or “valleys” created by strong parameter degeneracies. Standard Metropolis‑Hastings (MH) proposals, which typically use a fixed‑scale Gaussian kernel, rarely cross these bridges, causing the chain to become trapped in a single mode, inflating autocorrelation and dramatically reducing the effective sample size. Existing remedies—simulated tempering, parallel tempering, ensemble‑based methods—rely on temperature ladders or multiple interacting chains. While they work well for low‑dimensional problems, they suffer from (i) the need to tune temperature schedules, (ii) exponentially decreasing inter‑temperature acceptance probabilities as dimensionality grows, and (iii) substantial computational and communication overhead.

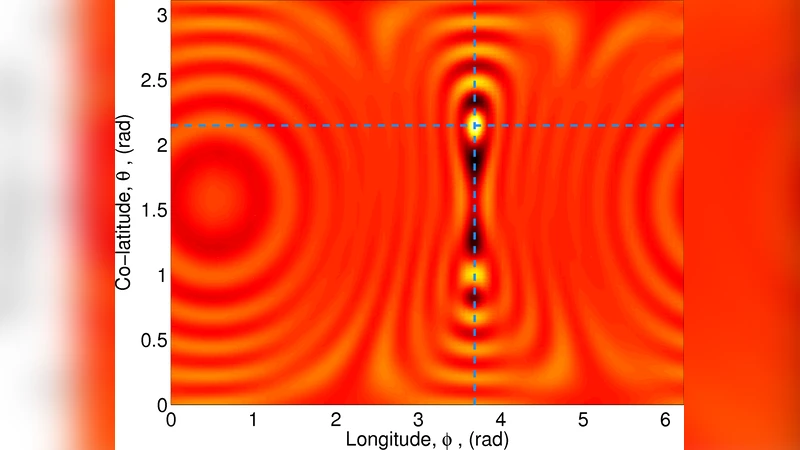

The authors propose a fully Markovian solution based on the Delayed Rejection (DR) framework, extended to an arbitrary number of stages. In classic DR, if an initial proposal is rejected, a second proposal is generated immediately, and a modified acceptance probability that accounts for the two-step transition is computed. The paper generalizes this idea recursively: after each rejection a new proposal is drawn from a stage-specific kernel $q_i(x' \mid x)$ and an acceptance probability $\alpha_i$ is evaluated that preserves detailed balance for the whole multi-step transition. The key design principle is to adapt the proposal distribution after each rejection, typically by inflating its covariance matrix (e.g., $\Sigma_{i+1} = c\,\Sigma_i$ with $c > 1$) or rotating it, so that the chain progressively explores larger regions and eventually “jumps” across the narrow bridges that thwart ordinary MH.
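To make the two-stage case concrete, here is a minimal Python sketch of one delayed-rejection step. It is not taken from the paper. It assumes isotropic Gaussian proposals centred on the current state, a second stage that is simply `c` times wider than the first, and the standard two-stage acceptance probability from the delayed rejection literature. The names `dr2_step`, `log_iso_gauss`, `sigma1`, and `c` are hypothetical.

```python
import numpy as np

def log_iso_gauss(y, mean, sigma):
    """Log density of N(mean, sigma^2 I) at y, up to an additive constant.

    The dropped constant depends only on sigma and the dimension, so it
    cancels when two such terms with the same sigma are subtracted.
    """
    d = y - mean
    return -0.5 * float(d @ d) / sigma**2

def dr2_step(x, log_target, sigma1, c, rng):
    """One two-stage delayed-rejection step.

    Returns (new_state, stage), where stage is 1 or 2 if that stage was
    accepted and 0 if both proposals were rejected.
    """
    lp_x = log_target(x)

    # Stage 1: ordinary Metropolis move with a symmetric Gaussian proposal.
    y1 = x + sigma1 * rng.standard_normal(x.shape)
    lp_y1 = log_target(y1)
    a1_x_y1 = np.exp(min(0.0, lp_y1 - lp_x))
    if rng.random() < a1_x_y1:
        return y1, 1

    # Stage 2: wider proposal (scale c * sigma1, c > 1), still centred on x.
    y2 = x + c * sigma1 * rng.standard_normal(x.shape)
    lp_y2 = log_target(y2)

    # Stage-1 acceptance probability of the reverse path y2 -> y1.
    a1_y2_y1 = np.exp(min(0.0, lp_y1 - lp_y2))
    if a1_y2_y1 >= 1.0:
        return x, 0  # numerator vanishes, so stage 2 is rejected

    # The symmetric stage-2 densities cancel; the stage-1 densities do not.
    log_num = lp_y2 + log_iso_gauss(y1, y2, sigma1) + np.log1p(-a1_y2_y1)
    log_den = lp_x + log_iso_gauss(y1, x, sigma1) + np.log1p(-a1_x_y1)
    if np.log(1.0 - rng.random()) < log_num - log_den:
        return y2, 2
    return x, 0
```

The factors `1 - a1` are what distinguish this from running two independent Metropolis moves: they account for the rejected first proposal, which is what keeps the combined transition reversible with respect to the target.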

Implementation details are carefully described. The algorithm runs a recursive DR loop with a dynamic cap on the maximum number of stages (e.g., five consecutive rejections). If the cap is reached, the proposal is either dramatically up‑scaled or replaced by a completely random draw, ensuring that the chain does not stagnate. Moreover, the authors embed an adaptive covariance update (similar to Adaptive Metropolis) within each stage, allowing the proposal kernels to learn the local geometry of the posterior on the fly without breaking the Markov property. The resulting method, termed multi‑stage DR, retains the simplicity of a single‑chain algorithm while dramatically increasing the probability of inter‑modal moves.
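The two ingredients described above, a running covariance estimate and a stage-wise inflation of the proposal, can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the running update is a Welford-style recursion, the `2.4**2 / d` scaling and the small `eps` regulariser are borrowed from the Adaptive Metropolis literature, and the names `RunningCovariance`, `stage_covariances`, `c`, and `n_stages` are hypothetical.

```python
import numpy as np

class RunningCovariance:
    """Welford-style running estimate of the sample mean and covariance."""

    def __init__(self, dim):
        self.n = 0
        self.mean = np.zeros(dim)
        self.m2 = np.zeros((dim, dim))  # sum of outer products of deviations

    def update(self, x):
        self.n += 1
        delta = x - self.mean           # deviation from the old mean
        self.mean += delta / self.n
        self.m2 += np.outer(delta, x - self.mean)  # uses the new mean

    def covariance(self):
        # Unbiased estimate; requires at least two samples.
        return self.m2 / (self.n - 1)

def stage_covariances(base_cov, c, n_stages, eps=1e-8):
    """Proposal covariance for each DR stage: Sigma_i = c**i * Sigma_0.

    Sigma_0 is the base covariance with the Adaptive Metropolis scaling
    (an assumption here) plus a small diagonal term for positive definiteness.
    """
    d = base_cov.shape[0]
    sigma0 = (2.4**2 / d) * base_cov + eps * np.eye(d)
    return [c**i * sigma0 for i in range(n_stages)]
```

With `c = 2` and `n_stages = 5`, for example, the last stage proposes from a covariance 16 times larger than the first, which is the kind of progressive widening the summary describes.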

Empirical evaluation is performed on three benchmark problems: (1) a 10‑dimensional Gaussian mixture with well‑separated modes linked by thin corridors, (2) a 50‑dimensional astrophysical model where multimodality arises from strong parameter degeneracies, and (3) a real‑world genetics Bayesian network. For each case the authors compare multi‑stage DR against standard MH, Adaptive Metropolis, and Parallel Tempering (PT). Performance metrics include Effective Sample Size (ESS), Gelman‑Rubin convergence statistic (R̂), and the frequency of successful mode transitions. Results show that multi‑stage DR increases ESS by factors ranging from 2 to 15, consistently drives R̂ below 1.01, and maintains a mode‑jump acceptance rate of 0.15–0.30, whereas PT’s inter‑temperature acceptance drops below 0.02 in the high‑dimensional setting. Computational overhead is modest: DR incurs roughly 20–30 % more CPU time than plain MH, far less than the overhead of running multiple temperature chains in PT.
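For readers unfamiliar with the convergence statistic quoted above, the following is a minimal sketch of the classic Gelman-Rubin R̂ for a single scalar parameter. It assumes several chains of equal length and uses the original (non-split, non-rank-normalised) definition. Whether the paper uses this exact variant is not stated in the summary, and the function name `gelman_rubin` is hypothetical.

```python
import numpy as np

def gelman_rubin(chains):
    """Classic Gelman-Rubin statistic for an array of shape (m_chains, n_samples)."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    chain_means = chains.mean(axis=1)
    w = chains.var(axis=1, ddof=1).mean()   # mean within-chain variance
    b = n * chain_means.var(ddof=1)         # between-chain variance
    var_hat = (n - 1) / n * w + b / n       # pooled variance estimate
    return float(np.sqrt(var_hat / w))
```

Chains that are each stuck in a different mode have a large between-chain variance relative to the within-chain variance, so R̂ stays well above 1. This is why the statistic is a natural diagnostic for the "trapped in a single mode" failure discussed here.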

The theoretical contribution lies in the rigorous derivation of the acceptance probabilities for an arbitrary number of delayed‑rejection steps, proving that detailed balance and ergodicity are preserved. Practically, the paper supplies a ready‑to‑use algorithmic template that can be plugged into existing MCMC libraries with minimal code changes. The authors also discuss extensions, such as automatic selection of the maximum number of DR stages via meta‑learning, hybridization with variational inference to obtain informed proposal covariances, and GPU‑accelerated parallel implementations for massive models.
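For reference, the general $i$-th stage acceptance probability as it is usually written in the delayed rejection literature is reproduced below. The notation is an assumption and may differ from the paper's: $\pi$ is the target density, $x$ the current state, $y_1, \dots, y_i$ the successive proposals, and $q_j$ the stage-$j$ proposal density, which is allowed to depend on all previously rejected points.

$$
\alpha_i(x, y_1, \dots, y_i) = \min\left\{ 1,\;
\frac{\pi(y_i)\, q_1(y_i, y_{i-1})\, q_2(y_i, y_{i-1}, y_{i-2}) \cdots q_i(y_i, y_{i-1}, \dots, x)\, \prod_{j=1}^{i-1} \left[ 1 - \alpha_j(y_i, y_{i-1}, \dots, y_{i-j}) \right]}
{\pi(x)\, q_1(x, y_1)\, q_2(x, y_1, y_2) \cdots q_i(x, y_1, \dots, y_i)\, \prod_{j=1}^{i-1} \left[ 1 - \alpha_j(x, y_1, \dots, y_j) \right]}
\right\}
$$

The numerator describes the reversed path, which starts at $y_i$, has the intermediate proposals rejected in reverse order, and ends at $x$. For $i = 1$ the products are empty and the expression reduces to the ordinary Metropolis-Hastings ratio.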

In summary, the paper delivers a powerful, fully Markovian sampling scheme that overcomes the “sticky‑mode” problem in high‑dimensional multimodal posteriors. By recursively adapting proposal scales after each rejection, the multi‑stage Delayed Rejection algorithm achieves substantially higher effective sample sizes and faster convergence than traditional tempering or adaptive methods, while keeping computational costs manageable. This makes it a compelling tool for Bayesian inference in complex scientific domains where multimodality is induced by parameter degeneracies rather than a small number of isolated peaks.
