The Open Cloud Testbed: A Wide Area Testbed for Cloud Computing Utilizing High Performance Network Services

Recently, a number of cloud platforms and services have been developed for data intensive computing, including Hadoop, Sector, CloudStore (formerly KFS), HBase, and Thrift. In order to benchmark the performance of these systems, to investigate their interoperability, and to experiment with new services based on flexible compute node and network provisioning capabilities, we have designed and implemented a large scale testbed called the Open Cloud Testbed (OCT). Currently the OCT has 120 nodes in four data centers: Baltimore, Chicago (two locations), and San Diego. In contrast to other cloud testbeds, which are in small geographic areas and which are based on commodity Internet services, the OCT is a wide area testbed and the four data centers are connected with a high performance 10 Gb/s network, based on a foundation of dedicated lightpaths. This testbed can address the requirements of extremely large data streams that challenge other types of distributed infrastructure. We have also developed several utilities to support the development of cloud computing systems and services, including novel node and network provisioning services, a monitoring system, and an RPC system. In this paper, we describe the OCT architecture and monitoring system. We also describe some benchmarks that we developed and some interoperability studies we performed using these benchmarks.

💡 Research Summary

The Open Cloud Testbed (OCT) is a purpose‑built, wide‑area experimental platform designed to support rigorous research on cloud computing systems that must operate over large geographic distances and high‑throughput networks. At the time of writing the testbed consists of 120 commodity x86 servers distributed across four data centers – Baltimore, two sites in Chicago, and San Diego – all interconnected by dedicated 10 Gbps lightpaths. This architecture deliberately departs from the majority of existing cloud testbeds, which are typically confined to a single campus or metropolitan area and rely on best‑effort Internet connections. By providing a private, high‑capacity backbone, OCT can faithfully reproduce the network conditions encountered in real‑world multi‑region cloud deployments, such as long‑haul data replication, cross‑datacenter shuffle phases in MapReduce, and latency‑sensitive streaming workloads.
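One reason long-haul 10 Gbps paths behave differently from campus links is the amount of data that must be in flight to keep the pipe full. The sketch below computes the bandwidth-delay product for a single lightpath. The 60 ms round-trip time is an illustrative assumption, not a figure reported for OCT, and the function name is hypothetical.

```python
def bandwidth_delay_product_bytes(link_gbps: float, rtt_ms: float) -> float:
    """Bytes that must be in flight to saturate a link of the given
    capacity (gigabits per second) at the given round-trip time (ms)."""
    bits_in_flight = link_gbps * 1e9 * (rtt_ms / 1000.0)
    return bits_in_flight / 8


if __name__ == "__main__":
    # Assumed values: 10 Gbps lightpath, hypothetical 60 ms coast-to-coast RTT.
    bdp = bandwidth_delay_product_bytes(10, 60)
    print(f"Window needed to fill the path: roughly {bdp / 1e6:.0f} MB")
```

A transport whose send window is much smaller than this value cannot fill the link regardless of the raw capacity, which is why wide-area behavior cannot be inferred from tests on a local cluster.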

The OCT stack is organized into three logical layers. The physical‑resource layer supplies uniform hardware (multi‑core CPUs, large RAM, high‑speed SSD/HDD storage) and a network fabric consisting of enterprise‑grade switches and routers that terminate the 10 Gbps lightpaths. The service‑provisioning layer hosts a variety of open‑source cloud platforms – Hadoop, Sector, CloudStore (formerly KFS), HBase, and Thrift – each deployed in containers or lightweight virtual machines. Because all platforms share the same underlying hardware and network, researchers can conduct side‑by‑side performance comparisons, evaluate scheduling policies, or test hybrid configurations where, for example, Hadoop jobs read from a Sector store.

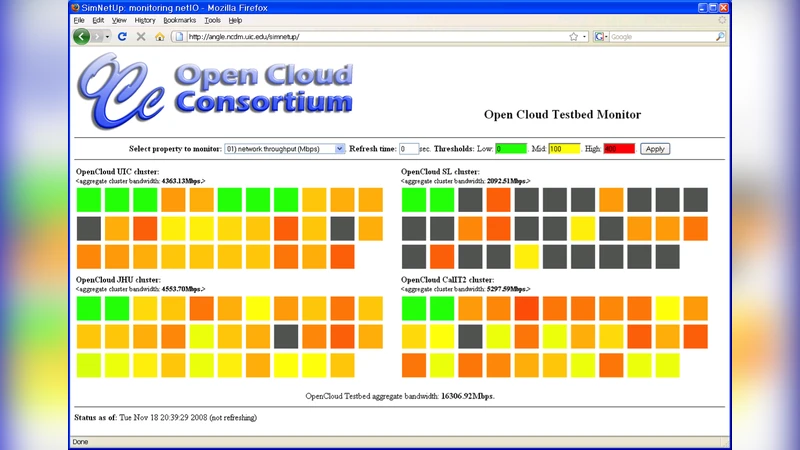

The management layer distinguishes OCT from many academic testbeds. It includes an API‑driven node and network provisioning service that can dynamically allocate CPU cores, memory, disk space, and guaranteed bandwidth to individual experiments, and automatically reclaim resources when the experiment ends. A real‑time monitoring subsystem continuously samples per‑node metrics (CPU utilization, memory pressure, disk I/O, packet loss, jitter, and throughput) at sub‑second intervals, aggregates them, and presents the data through a web‑based dashboard with alerting capabilities. In addition, OCT provides a lightweight Remote Procedure Call (RPC) framework that can be used as a baseline to measure the overhead of more heavyweight RPC mechanisms native to Hadoop or Thrift.
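The sample-aggregate-alert flow described for the monitoring subsystem can be illustrated with a minimal sketch. This is not OCT's monitoring code: the class name `MetricAggregator`, its methods, the window size, and the threshold are all hypothetical, chosen only to show one way to keep a sliding window of per-node samples and flag nodes whose windowed mean crosses a limit.

```python
from collections import defaultdict, deque
from statistics import mean


class MetricAggregator:
    """Keeps the most recent samples per (node, metric) and summarizes them.

    Hypothetical illustration; not the OCT monitoring implementation.
    """

    def __init__(self, window: int = 10):
        self.window = window
        # Each (node, metric) pair gets a bounded queue of recent samples.
        self._samples = defaultdict(lambda: deque(maxlen=window))

    def record(self, node: str, metric: str, value: float) -> None:
        """Store one sample; the oldest sample is dropped when the window is full."""
        self._samples[(node, metric)].append(float(value))

    def summary(self, node: str, metric: str):
        """Return mean, max, and count over the current window, or None if empty."""
        values = self._samples.get((node, metric))
        if not values:
            return None
        return {"mean": mean(values), "max": max(values), "count": len(values)}

    def alerts(self, metric: str, threshold: float):
        """Return the sorted names of nodes whose windowed mean exceeds threshold."""
        return sorted(
            node
            for (node, m), values in self._samples.items()
            if m == metric and values and mean(values) > threshold
        )


if __name__ == "__main__":
    agg = MetricAggregator(window=3)
    for cpu in (10, 20, 30, 40):      # only the last three samples are kept
        agg.record("node-a", "cpu", cpu)
    agg.record("node-b", "cpu", 95)
    print(agg.summary("node-a", "cpu"))
    print(agg.alerts("cpu", threshold=90))
```

A bounded window keeps memory use constant per node, which matters when samples arrive at sub-second intervals from many machines.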

To demonstrate the utility of the testbed, the authors designed two families of benchmarks. The first family stresses bandwidth and latency: large file replication, MapReduce shuffle, and distributed database queries are run while the network is saturated, allowing precise measurement of throughput, end‑to‑end latency, and the impact of congestion control algorithms on cloud workloads. The second family probes interoperability: data is transferred between heterogeneous platforms (e.g., Hadoop → Sector, HBase ↔ CloudStore) to quantify protocol translation costs, format conversion overhead, and consistency guarantees across system boundaries. Results show that, under the dedicated 10 Gbps lightpaths, OCT achieves 3–5× higher aggregate throughput compared with a comparable setup on a commodity Internet backbone, and that cross‑platform data movement incurs less than 10 % additional overhead, confirming the effectiveness of the high‑performance network.
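The quantities reported above (aggregate throughput, relative speedup, and cross-platform overhead) follow from simple arithmetic on measured transfer sizes and times. The helpers below are a sketch of that arithmetic; the function names are hypothetical and the numbers in the usage section are made-up inputs for illustration, not measurements from the paper.

```python
def throughput_gbps(bytes_moved: float, seconds: float) -> float:
    """Average throughput in gigabits per second for one transfer."""
    return bytes_moved * 8 / seconds / 1e9


def speedup(dedicated_gbps: float, baseline_gbps: float) -> float:
    """How many times faster the dedicated path is than the baseline."""
    return dedicated_gbps / baseline_gbps


def overhead_percent(native_seconds: float, cross_platform_seconds: float) -> float:
    """Extra time, as a percentage, of a cross-platform transfer
    relative to the same transfer within a single platform."""
    return (cross_platform_seconds - native_seconds) / native_seconds * 100.0


if __name__ == "__main__":
    # Illustrative inputs only (not results from the paper).
    dedicated = throughput_gbps(125e9, 125)   # 125 GB moved in 125 s
    baseline = throughput_gbps(125e9, 500)    # same data in 500 s
    print(f"dedicated: {dedicated:.1f} Gbps, baseline: {baseline:.1f} Gbps")
    print(f"speedup: {speedup(dedicated, baseline):.1f}x")
    print(f"overhead: {overhead_percent(100, 108):.1f}%")
```

Reporting overhead relative to the single-platform run, rather than as an absolute time, makes results comparable across transfers of different sizes.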

Scalability is a core design goal. The lightpath fabric can be extended by adding routers and optical switches, enabling new sites to join the testbed with minimal configuration changes. The authors outline a roadmap to increase the node count from 120 to 500, which would further enhance the ability to emulate large‑scale multi‑region clouds and to stress‑test emerging network services such as software‑defined WANs and programmable optical transport. This extensibility also allows researchers to experiment with dynamic topology changes, variable latency injections, and bandwidth throttling to emulate real‑world network anomalies.

In summary, the Open Cloud Testbed provides a unique combination of geographically dispersed compute resources, a dedicated high‑speed network, flexible provisioning APIs, comprehensive monitoring, and a lightweight RPC layer. These capabilities enable systematic performance evaluation of existing cloud platforms, facilitate the development and testing of new services that depend on fine‑grained network control, and support rigorous interoperability studies across heterogeneous cloud stacks. By bridging the gap between small‑scale campus testbeds and production‑grade multi‑datacenter clouds, OCT serves as a valuable research infrastructure for advancing the scalability, efficiency, and robustness of future cloud computing systems.
