Graphical Probabilistic Routing Model for OBS Networks with Realistic Traffic Scenario

Burst contention is a well-known challenging problem in Optical Burst Switching (OBS) networks. Contention resolution approaches are always reactive and attempt to minimize the BLR based on local information available at the core node. On the other h…

Authors: Martin Levesque, Halima Elbiaze

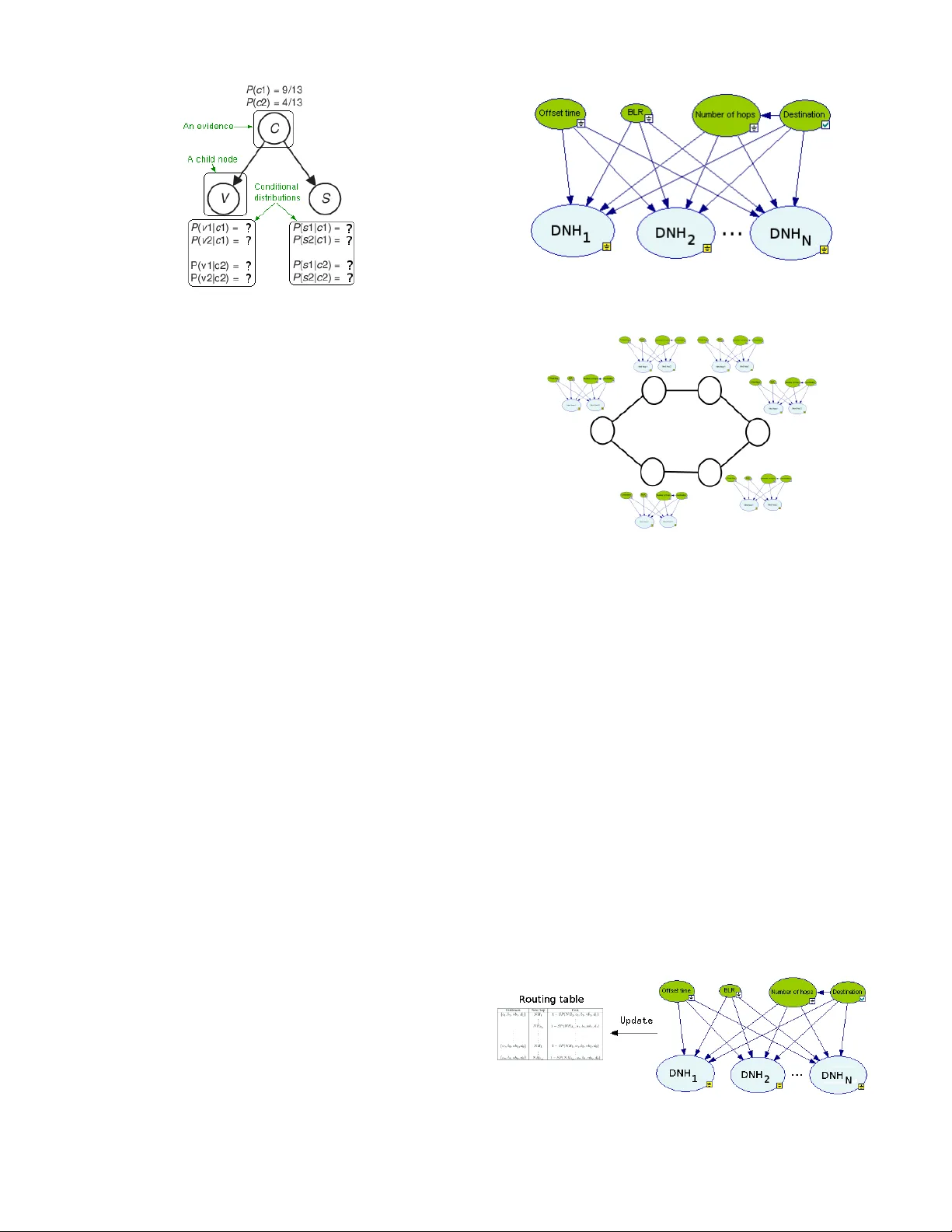

Martin Lévesque and Halima Elbiaze

Department of Computer Science, Université du Québec à Montréal, Montréal (QC), Canada

Email: levesque.martin.6@courrier.uqam.ca, elbiaze.halima@uqam.ca

Abstract: Burst contention is a well-known challenging problem in Optical Burst Switching (OBS) networks. Contention resolution approaches are always reactive and attempt to minimize the Burst Loss Ratio (BLR) based on local information available at the core node. On the other hand, a proactive approach that avoids burst losses before they occur is desirable. To reduce the probability of burst contention, a more robust routing algorithm than the shortest path is needed. This paper proposes a new routing mechanism for JET-based OBS networks, called Graphical Probabilistic Routing Model (GPRM), that selects less utilized links on a hop-by-hop basis by using a Bayesian network. We assume that neither wavelength conversion nor buffering is available at the core nodes of the OBS network. We simulate the proposed approach under dynamic load, using Network Simulator 2 (ns-2) on the NSFnet topology with a realistic traffic matrix, to demonstrate that it reduces the BLR compared to static approaches. Simulation results clearly show that the proposed approach outperforms static approaches in terms of BLR.

I. INTRODUCTION

Optical Burst Switching (OBS) [1], [2], [3] is a promising technology for handling bursty and dynamic Internet Protocol traffic in optical networks effectively. In OBS networks, user data (IP, for example) is assembled into a huge segment called a data burst, which is sent using one-way resource reservation. The burst is preceded in time by a control packet, called the Burst Header Packet (BHP), which is sent on a separate control wavelength and requests resource allocation at each switch.
When the control packet arrives at a switch, the capacity is reserved in the cross-connect for the burst. If the needed capacity can be reserved at a given time, the burst can then pass through the cross-connect without the need for buffering or processing in the electronic domain. Since data bursts and control packets are sent out without waiting for an acknowledgment, the burst could be dropped due to resource contention, or due to insufficient offset time if the burst catches up with the control packet. Thus, burst contention resolution approaches play an essential role in reducing the BLR in OBS networks [4].

Burst contention can be resolved using several approaches, such as wavelength conversion, buffering based on fiber delay lines (FDL), or deflection routing. Since deflection cannot eradicate burst loss, retransmission at the OBS layer has been suggested by Torra et al. [5] to avoid resource contention. Another approach, called burst segmentation, resolves contention by dividing the contended burst into smaller parts called segments, so that a segment is dropped rather than the entire burst. All these approaches are reactive and attempt to minimize the BLR based on local information available at the core node, whereas a proactive approach that avoids burst losses before they occur is desirable.

This paper introduces a novel algorithm called Graphical Probabilistic Routing Model (GPRM) for OBS networks in order to build more effective routing tables and hence to reduce the BLR without affecting the end-to-end delay. To the best of our knowledge, our work is the first to propose a graphical probabilistic model for the problem of optimal routing in OBS networks. Reinforcement learning algorithms for path selection and wavelength selection have been proposed [6]; however, they rely on predetermined routes. GPRM does not need any precomputed paths, since a path is constructed based on local knowledge of adjacent hops.
From the ingress node to the destination, the BHP selects the next hop at each intermediate node through a lookup in the routing table. These routing tables are periodically updated by the learning process of the Bayesian network. The GPRM algorithm exploits the exchange of Positive Acknowledgement (ACK) and Negative Acknowledgement (NACK) messages to update the Bayesian networks so that each node learns the status of the other nodes. An agent is placed at each OBS node and dynamically updates its routing table by using a Bayesian network. Our approach allows us to update the local policies while avoiding the need for centralized control or global knowledge of the network state.

We choose Bayesian networks [7] for our learning models because of their expressiveness and their more elegant graphical representation compared to black-box machine learning models. Bayesian networks, sometimes called belief networks or graphical models, can be designed and interpreted by domain experts because they explicitly communicate the relevant variables and their interrelationships.

This paper is organized as follows. Section II gives the motivation of this work, and Section III presents the proposed model. Section IV describes the simulation testbed and results, and Section V contains the conclusion and future work.

II. MOTIVATION

The traffic between two cities highly depends on the population, the number of employees and the number of hosts [8]. In reality, therefore, the traffic is not uniformly distributed, and static algorithms such as the shortest path are intuitively not effective in terms of network utilization. The main idea of this work is to propose an intelligent routing mechanism for OBS networks that adapts routing paths to the network environment (traffic variations, link or node failures, topology changes).
From a machine learning perspective, it is desirable for the routing mechanism to tune itself in a systematic, mathematically principled way. A probabilistic graphical model specifies a family of probability distributions which can be represented in terms of a graph [9]. A Bayesian network is a probabilistic graphical model whose graph is a directed acyclic graph. Nodes represent variables and links represent dependencies. For example, Fig. 1 presents a very simple Bayesian network composed of 3 nodes (the variables) and 2 directed links (the dependencies).

Fig. 1. A Bayesian network example

The main components of a variable are its states and a conditional distribution (a conditional probability table). A state is typically a possible category of values. For example, suppose we have a variable Temperature which can be either Raining ($Ra$), Cloudy ($Cl$) or Sunny ($Su$). In this case, the possible states are $\{Ra, Cl, Su\}$. In the example (Fig. 1), variable $C$ is called an evidence since it is known information. Variables $V$ and $S$ depend on the state of variable $C$, so $C$ is a parent variable of $V$ and $S$. In general, we say that a variable depends on its connected parents. The conditional distribution of a given variable gives the probabilities for all combinations of the variable's states and all of its parents' states. More formally, the joint distribution of a Bayesian network which contains a set of $N$ variables $X_1, X_2, \ldots, X_N$ is given by the product of the distribution of each node given its parents:

$$P(X_1, X_2, \ldots, X_N) = \prod_{i=1}^{N} P(X_i \mid \mathrm{parents}(X_i)) \tag{1}$$

If a variable has no parent, it is said to be unconditional. Another important concept is the notion of inference. Resolving a probabilistic inference is done by applying Bayes' theorem [9].
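To make Eq. (1) and the role of Bayes' theorem concrete, the following is a minimal Python sketch of the three-node network of Fig. 1 ($C \rightarrow V$, $C \rightarrow S$). The state names and all probability values are invented for illustration; they are assumptions and do not come from the paper.

```python
from itertools import product

# Hypothetical conditional probability tables for the network C -> V, C -> S.
# State names and numbers are placeholders chosen only for illustration.
P_C = {"c0": 0.6, "c1": 0.4}
P_V_GIVEN_C = {"c0": {"v0": 0.9, "v1": 0.1}, "c1": {"v0": 0.3, "v1": 0.7}}
P_S_GIVEN_C = {"c0": {"s0": 0.8, "s1": 0.2}, "c1": {"s0": 0.25, "s1": 0.75}}
V_STATES = ("v0", "v1")
S_STATES = ("s0", "s1")


def joint(c, v, s):
    """Eq. (1): product of each node's distribution given its parents."""
    return P_C[c] * P_V_GIVEN_C[c][v] * P_S_GIVEN_C[c][s]


def posterior_c_given_s(s):
    """Bayes' theorem: P(C | S = s), with V marginalised out of the joint."""
    unnormalised = {c: sum(joint(c, v, s) for v in V_STATES) for c in P_C}
    z = sum(unnormalised.values())
    return {c: p / z for c, p in unnormalised.items()}


# The joint distribution sums to one over all state combinations.
total = sum(joint(c, v, s) for c, v, s in product(P_C, V_STATES, S_STATES))
posterior = posterior_c_given_s("s1")
```

Here `posterior` holds the belief about `C` once `S = s1` has been observed, which is the kind of query the inference step answers.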
If a given node $X_i$ has $M$ evidences, the probability that variable $X_i$ is in the state $x_i$ is found by the following inference:

$$P(X_i = x_i \mid E_1 = e_1, E_2 = e_2, \ldots, E_M = e_M) \tag{2}$$

This application of inference is trivial; several more advanced applications are possible [9]. A Bayesian network is a model for representing knowledge as well as a conditional probability calculator. It is also a learning model that can be used to learn the parameters (conditional distributions) or the structure of the Bayesian network. The proposed model presented in Section III uses parameter learning to optimize the routing table. The use of a Bayesian network in our study is motivated by the following:

• The probabilistic formulation of a Bayesian network is exploited for performing the resource reservation. Next hop wavelength selection is then represented by a conditional probability calculated using our model.

• The best next hop must be selected based on several metrics. Those metrics are represented by evidences in our model. A Bayesian network inference is used to calculate the probability of reserving the bandwidth based on several evidences (metrics). For example, some evidences in OBS networks are: BLR, end-to-end delay, load, offset time, etc.

• The concept of linking variables by using arrows reduces the complexity of the interrelationships between relevant variables. Thus, the studied system can be easily modeled and modified graphically by the domain expert.

• The learning capability of the Bayesian network allows the network to adapt systematically to its environment (topology changes, traffic variation, node and link failures). The probabilistic graphical model can automatically recompute and reoptimize routing tables.

Fig. 2. The Graphical Probabilistic Routing Model (GPRM) - A Bayesian Network

Fig. 3. A very basic topology with GPRM

III. PROPOSED MODEL

A detailed description of the proposed model and of the routing table is given in this section. Then, the signaling scheme and the notification packets are defined.

A. GPRM description

The proposed model is a Bayesian network composed of known information (evidences) and decision nodes (Fig. 2). There is one Bayesian network for each network node within the OBS topology (Fig. 3). The main functionality of GPRM is the selection of the next hop for the forwarding process. Obviously, the forwarding process must be fast, so a typical fast-lookup routing table is computed periodically by the proposed model (Fig. 4). This routing table is used when the BHP attempts (in the electronic domain) to reserve the resource for the data burst.

Fig. 4. GPRM updating the routing table

Fig. 5. Low, medium and high traffic delimitations by using the goodput

GPRM is composed of four evidences and one decision node for each possible next hop. A lookup in the routing table is done according to the evidences in order to successively get the best next hop in terms of probability of success in reaching the destination. GPRM includes the following evidences:

• Offset time ($O$): The offset time has a significant impact on burst loss, since the burst will be dropped if the offset time is insufficient. Consequently, the offset time is a relevant metric to consider when selecting the next hop. The states of this evidence are based on the number of hops to reach the destination from the source (we used 0 to 15). Knowing the path length, the offset time can be easily calculated.

• BLR ($B$): The BLR is used to categorize statistics. In our study, we consider three possible states for this variable: Low, Medium and High (Fig. 5).

• Number of hops ($NB$): The states of this variable depend on the destination. However, it has been added as an input variable for decision nodes.

• Destination ($D$): The possible states of this variable are the OBS node identifiers.
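The four evidences above can be read as one discrete lookup key per BHP. The following Python sketch shows one possible encoding; the numeric BLR cut-offs, the names `Evidence`, `blr_state` and `make_evidence`, and the clamping of the offset state are all assumptions made for illustration, since the paper delimits the Low, Medium and High states only graphically (Fig. 5).

```python
from typing import NamedTuple

# Hypothetical cut-offs: the paper gives no numeric values for the
# Low/Medium/High delimitations, so these numbers are placeholders.
BLR_LOW_MAX = 0.01
BLR_MEDIUM_MAX = 0.10
MAX_OFFSET_STATE = 15  # the paper uses offset states 0 to 15


class Evidence(NamedTuple):
    offset: int  # O: offset-time state, expressed as a hop count
    blr: str     # B: "Low", "Medium" or "High"
    hops: int    # NB: number of hops
    dest: int    # D: OBS node identifier of the destination


def blr_state(blr: float) -> str:
    """Map a measured burst loss ratio to one of the three discrete states."""
    if blr <= BLR_LOW_MAX:
        return "Low"
    if blr <= BLR_MEDIUM_MAX:
        return "Medium"
    return "High"


def make_evidence(path_hops: int, measured_blr: float, hops: int, dest: int) -> Evidence:
    """Build the discrete evidence tuple {o, b, nb, d} used as a lookup key."""
    offset = max(0, min(path_hops, MAX_OFFSET_STATE))  # clamp to states 0..15
    return Evidence(offset, blr_state(measured_blr), hops, dest)


example = make_evidence(path_hops=20, measured_blr=0.05, hops=3, dest=7)
```

Because `Evidence` is a hashable tuple, it can be used directly as a dictionary key when indexing per-evidence statistics.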
GPRM also includes one decision node per possible next hop. Each decision node has two possible states: Success (noted $\oplus$) and Failure (noted $\ominus$). Let $k$ be an OBS node identifier and let $DNH_k$ denote the Bayesian decision node of the OBS next hop $k$. The joint probability function of the proposed model is given by:

$$P(O, B, NB, D, DNH_1, \ldots, DNH_N) = \left( \prod_{i=1}^{N} P(DNH_i \mid O, B, NB, D) \right) P(O)\, P(B)\, P(NB \mid D)\, P(D) \tag{3}$$

The maximum a posteriori of $DNH_k$ is defined by:

$$MAP_{DNH_k} = \arg\max_{\varphi} P(\varphi \mid o, b, nb, d) \tag{4}$$

where $\varphi \in \{\oplus, \ominus\}$ is a possible value of node $DNH_k$ and where $o, b, nb, d$ are possible values of the evidences in the Bayesian network ($o$ is a possible state of the Offset time variable, etc.). Applying Bayes' theorem gives:

$$MAP_{DNH_k} = \arg\max_{\varphi} \frac{P(\varphi)\, P(o, b, nb, d \mid \varphi)}{P(o, b, nb, d)} \tag{5}$$

Since the denominator does not depend on $\varphi$, it can be dropped:

$$MAP_{DNH_k} = \arg\max_{\varphi} P(\varphi)\, P(o, b, nb, d \mid \varphi) \tag{6}$$

If we assume that the evidences are independent given $\varphi$, the maximum a posteriori can be approximated as follows:

$$MAP_{DNH_k} \approx \arg\max_{\varphi} P(\varphi)\, P(o \mid \varphi)\, P(b \mid \varphi)\, P(nb \mid \varphi)\, P(d \mid \varphi) \tag{7}$$

Let $SP(DNH_k, o, b, nb, d)$ (Success Probability) denote the approximation in Eq. (7) evaluated for $DNH_k = \oplus$.

B. Routing table

To take GPRM's Bayesian network into account, the proposed model uses a different routing table from typical approaches such as the shortest path. In most routing approaches, the entries of the routing table are $\langle Destination, Next\ hop, Cost \rangle$. GPRM's routing table instead uses $\langle Evidences, Next\ hop, Cost \rangle$, where the evidences add granularity in order to route the traffic more effectively. GPRM's routing table is a fast routing table defined as follows:

TABLE I. GPRM ROUTING TABLE

| Evidences | Next hop | Cost |
|---|---|---|
| $\{o_1, b_1, nb_1, d_1\}$ | $NH^{1}_{1}$ | $1 - SP(DNH_{NH^{1}_{1}}, o_1, b_1, nb_1, d_1)$ |
| | ... | ... |
| | $NH^{1}_{\beta_1}$ | $1 - SP(DNH_{NH^{1}_{\beta_1}}, o_1, b_1, nb_1, d_1)$ |
| ... | ... | ... |
| $\{o_\gamma, b_\delta, nb_\eta, d_\theta\}$ | $NH^{EP}_{1}$ | $1 - SP(DNH_{NH^{EP}_{1}}, o_\gamma, b_\delta, nb_\eta, d_\theta)$ |
| | ... | ... |
| | $NH^{EP}_{\beta_{EP}}$ | $1 - SP(DNH_{NH^{EP}_{\beta_{EP}}}, o_\gamma, b_\delta, nb_\eta, d_\theta)$ |

where $\beta_i$ is the number of different next hops for the $i$th evidence permutation, and $\gamma, \delta, \eta, \theta$ are the numbers of possible states of the evidence variables. $NH^{j}_{i}$ represents the $i$th next hop of the $j$th evidence permutation, and $NH^{j}_{1}$ is the minimum-cost next hop of the $j$th evidence permutation. For example, if we have the evidence permutation $\{o_1, b_1, nb_1, d_1\}$ and $\beta_1 = 3$, we could have the following next hops: $\{1, 3, 7\}$. The column Evidences represents the combinations of all states of all evidences. The number of evidence permutations is defined by:

$$EP = \gamma \cdot \delta \cdot \eta \cdot \theta \tag{8}$$

Thus, the number of rows in the routing table is defined by:

$$NRows = \sum_{i=1}^{EP} \beta_i \tag{9}$$

The cost is expressed by:

$$Cost(NH, o, b, nb, d) = 1 - SP(DNH_{NH}, o, b, nb, d) \tag{10}$$

We note that, for a given evidence permutation, the next hops are sorted by increasing cost:

$$\forall_{i=1}^{N}\ \forall_{j=1}^{\beta_i - 1}\quad Cost(NH_j, o, b, nb, d) \le Cost(NH_{j+1}, o, b, nb, d) \tag{11}$$

The following algorithm defines the lookup mechanism to get the best next hop:

Algorithm 1: Look up the best next hop in the routing table according to evidences.

    Data: N, the set of all OBS node identifiers.
    At each node i ∈ N:
    for each BHP do
        Extract evidences {o, b, nb, d}.
        Find the corresponding row (k) in the routing table.
        Select the first (minimum-cost) next hop of row k.
    end

The following algorithm periodically maps the Bayesian network to a fast routing table:

Algorithm 2: Map the Bayesian network to a fast routing table periodically.

    Data: N, the set of all OBS node identifiers.
    Data: T, the update time interval.
    At each node i ∈ N, at every T:
    for each evidence permutation {o, b, nb, d} do
        Locate {o, b, nb, d} in the routing table.
        Get the next hop identifiers (ids) according to {o, b, nb, d}.
        index ← []
        for each id ∈ ids do
            Add (id, 1 − SP(DNH_id, o, b, nb, d)) to index, ordered by cost.
        end
        Associate {o, b, nb, d} with index in the routing table.
    end

C. Signaling scheme and notification packets

The proposed model uses the well-known JET signaling scheme [2]. In addition, notification packets are used to update GPRM's Bayesian network. A positive acknowledgement (ACK) is sent when a BHP reaches the destination (Fig. 6). A negative acknowledgement (NACK) is sent when a BHP cannot reserve the bandwidth for the data burst (Fig. 7). We note that these notification packets can also be used in an OBS scheme where retransmission is available, in order to free buffered bursts. Evidences are stored in the BHP and in notification packets in order to update GPRM's Bayesian network. When an OBS node receives a notification packet, GPRM's Bayesian network is updated and the routing table is refreshed at the next update period. The following algorithm (Algorithm 3) describes the reception of a notification packet and the update of the Bayesian network according to evidences.

Fig. 6. Signaling scheme without contention

Fig. 7. Signaling scheme with contention

Algorithm 3: Reception of a notification packet and update of the Bayesian network according to evidences.

    Data: N, the set of all OBS node identifiers.
    At each node i ∈ N:
    for each ACK/NACK do
        Extract evidences {o, b, nb, d}.
        Extract the last hop (LH).
        Extract the notification packet type (NPT).
        SP ← SP(DNH_LH, o, b, nb, d)
        SP' ← α·SP + (1 − α)·A,
            where α ∈ [0, 1], A = 1 if NPT = ACK and A = 0 if NPT = NACK.
        Update the Bayesian node DNH_LH such that SP' is the new value
            in the conditional distribution according to evidences.
    end

IV. SIMULATION RESULTS

Simulations are performed with the NSFnet topology (Fig. 8) by using Network Simulator 2 (ns-2) [10] with an extra module for OBS.
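As a compact recap of the mechanism of Section III, Algorithms 1 to 3 can be sketched in plain Python. This is only an illustrative sketch under stated assumptions, not the implementation used in the simulations: the class name `GprmNode`, the smoothing weight `ALPHA = 0.9`, the initial success probability of 0.5, and the flat dictionary standing in for the conditional distributions of the decision nodes are all hypothetical choices.

```python
from collections import defaultdict

ALPHA = 0.9  # hypothetical smoothing weight; Algorithm 3 only requires 0 <= alpha <= 1


class GprmNode:
    """Per-node state: success probabilities plus the derived routing table."""

    def __init__(self, initial_sp=0.5):
        # sp[(evidence, next_hop)] stands in for SP(DNH_next_hop, o, b, nb, d).
        self.sp = defaultdict(lambda: initial_sp)
        # Next hops already observed for each evidence permutation.
        self.next_hops = defaultdict(set)
        # Routing table: evidence -> list of (cost, next_hop), cheapest first.
        self.table = {}

    def on_notification(self, evidence, last_hop, is_ack):
        """Algorithm 3: blend the old SP with the ACK (1) or NACK (0) outcome."""
        outcome = 1.0 if is_ack else 0.0
        key = (evidence, last_hop)
        self.sp[key] = ALPHA * self.sp[key] + (1.0 - ALPHA) * outcome
        self.next_hops[evidence].add(last_hop)

    def rebuild_table(self):
        """Algorithm 2: map the probabilities to rows sorted by cost = 1 - SP."""
        self.table = {
            evidence: sorted((1.0 - self.sp[(evidence, hop)], hop) for hop in hops)
            for evidence, hops in self.next_hops.items()
        }

    def lookup(self, evidence):
        """Algorithm 1: return the minimum-cost next hop, or None if unknown."""
        rows = self.table.get(evidence)
        return rows[0][1] if rows else None


node = GprmNode()
ev = (3, "Low", 3, 7)  # evidence tuple (o, b, nb, d)
node.on_notification(ev, last_hop=1, is_ack=True)   # next hop 1 succeeded
node.on_notification(ev, last_hop=2, is_ack=False)  # next hop 2 failed
node.rebuild_table()
best = node.lookup(ev)
```

Keeping `rebuild_table` separate from `on_notification` mirrors the split described in Section III: notifications update the model immediately, while the lookup table used on the forwarding path is only refreshed at the next update period.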
The C++ library Structural Modeling, Inference, and Learning Engine (SMILE) [11] is used for the Bayesian network. GPRM is compared to the well-known Shortest Path algorithm, which always selects paths minimizing the number of hops. The proposed model (GPRM) defined in Section III tends to select paths that maximize link utilization and decrease the BLR.

Fig. 8. NSFnet topology

Fig. 9. GPRM learning NSFnet topology without initial routing information

The following simulation configuration is used:

• Each wavelength has 1 Gbit/s of bandwidth capacity.

• Each link has 2 control channels and 4 data channels.

• The mean burst size (noted $L$) equals 400 KB.

• Packet and burst generation follows a Poisson distribution for the input packet rate and for the burst size.

• Connections are distributed over the network proportionately to the well-known reference transport network scenario of the US network [8].

• Let $N$ be the number of nodes in the topology, $\xi_{i,j}$ the number of OBS connections between $i$ and $j$, $\lambda_{i,j,k}$ the number of bursts sent per second by the $k$th connection between $i$ and $j$, and $\mu_i$ the capacity available at $i$. The load is given by:

$$Load = \sum_{i=1}^{N} \sum_{j=1}^{N} \sum_{k=1}^{\xi_{i,j}} \frac{\lambda_{i,j,k} \cdot L}{\mu_i} \tag{12}$$

• No deflection, retransmission or other contention resolution strategies are used.

A. GPRM learning NSFnet topology without initial routing information

An implicit benefit of GPRM is the capability of an OBS node, without initial routing information, to learn about its neighbors in order to route and distribute the traffic efficiently. This can be useful when faults happen in a topology, since an automatic fault recovery mechanism is applied. The Shortest Path algorithm has initial information about the topology such as next hops, number of hops to reach destinations, etc.

Fig. 10. BLR
GPRM requires less than 1 second to learn how to distribute the traffic at least as effectively as the Shortest Path (Fig. 9).

B. Comparison of GPRM and Shortest Path

For the rest of the comparison, we assume that the initial routing information is available to both algorithms. GPRM gives significant improvements in terms of BLR, even at high loads (Fig. 10). Let $N$ be the number of simulations, where each simulation has a different load. The BLR gain is given by:

$$BLR_{Gain} = \sum_{i=1}^{N} \frac{BLR_{SP,i} - BLR_{GPRM,i}}{BLR_{SP,i}} \tag{13}$$

where $BLR_{SP,i}$ is the BLR of the $i$th simulation when using the shortest path and $BLR_{GPRM,i}$ is the BLR of the same simulation when using GPRM. The utilization gain is then defined by:

$$U_{Gain} = \sum_{i=1}^{N} \frac{U_{GPRM,i} - U_{SP,i}}{U_{SP,i}} \tag{14}$$

We can observe that when the load is less than 0.5, using GPRM reduces the number of bursts dropped by 50 % ($BLR_{Gain}$) compared to the shortest path algorithm (Fig. 11). GPRM adds at most 1 ms of end-to-end delay compared to the shortest path algorithm (Fig. 12). This can be explained by the fact that GPRM does not necessarily use the shortest path: it can select a next hop which requires more hops to reach the destination in order to use less utilized links. However, 1 ms does not have an impact on transport protocols such as TCP. GPRM gives significant improvements in terms of network utilization (about 20 % of $U_{Gain}$), as shown in Fig. 13. We can observe that a gain of 20 % in network utilization can decrease the BLR by more than 50 %, so the routing mechanism is very important in paradigms such as OBS where contention is an important issue.

Fig. 11. BLR and utilization gains

Fig. 12. End-to-end delay

Fig. 13. Network utilization

V. CONCLUSION AND FUTURE WORK

This paper presents a novel routing scheme called Graphical Probabilistic Routing Model (GPRM) that selects less utilized links by using a Bayesian network. Decisions are based on local knowledge from a Bayesian network that is updated each time an OBS node receives a notification packet. The Bayesian model contains one decision node for each possible next hop from the current node. Conditional distributions are constructed from evidences, that is, from known metrics. A routing table is periodically updated by using the Bayesian network so as not to penalize the forwarding process. Permutations of all states of all evidences are included in the routing table in order to make decisions more effective. GPRM is capable of learning an unknown topology as well as recovering from network faults. Simulation results show that the proposed model performs efficiently in NSFnet, since it significantly decreases the BLR and increases the network utilization without considerably affecting the end-to-end delay. A possible future step of this research is to combine several contention resolution strategies in a dynamic way, because we believe that the feasibility of OBS requires effective and adaptive algorithms to overcome the burst loss issue.

REFERENCES

[1] C. Qiao and M. Yoo, "Optical Burst Switching - A New Paradigm for an Optical Internet", Journal of High Speed Networks, vol. 8, no. 1, pp. 69–84, 1999.

[2] J. Jue and V. Vokkarane, Optical Burst Switched Networks, Springer, 2004.

[3] C. Gauger, Novel Network Architecture for Optical Burst Transport, PhD thesis, Universität Stuttgart, Stuttgart, Germany, 2006.

[4] C. Gauger, M. Köhn, and J. Scharf, "Performance of Contention Resolution Strategies in OBS Network Scenarios", Proceedings of the 9th Optoelectronics and Communications Conference/3rd International Conference on the Optical Internet (OECC/COIN2004), 2004.

[5] A. Agusti-Torra and C. Cervello-Pastor, "A New Proposal to Reduce Burst Contention in Optical Burst Switching Networks", 2nd International Conference on Broadband Networks, vol. 2, 2005.

[6] Y. V. Kiran, T. Venkatesh, and C. Siva Ram Murthy, "Reinforcement Learning Based Path Selection and Wavelength Selection in Optical Burst Switched Networks", BROADNETS 2006, vol. 3, pp. 1–8, 2006.

[7] J. Pearl, Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference, Morgan Kaufmann Publishers, 1997.

[8] R. Hülsermann, S. Bodamer, M. Barry, A. Betker, C. Gauger, M. Jäger, M. Köhn, and J. Späth, "Reference Transport Network Scenarios", MultiTeraNet Report, 2003.

[9] R. Neapolitan, Learning Bayesian Networks, Prentice Hall, 2003.

[10] "Network Simulator 2 (ns-2), an event-driven simulator, http://www.isi.edu/nsnam/ns/".

[11] "Structural Modeling, Inference, and Learning Engine (SMILE), a C++ library for graphical probabilistic and decision-theoretic models, http://genie.sis.pitt.edu/".
