Managing Distributed MARF with SNMP

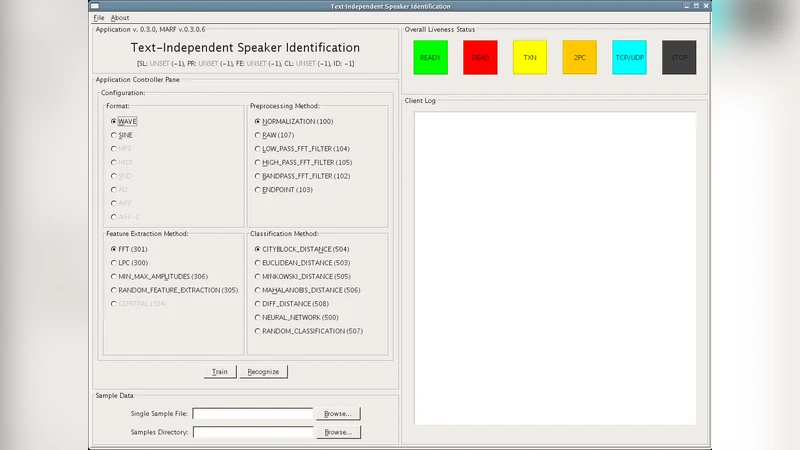

This project focuses on researching and prototyping an extension of Distributed MARF that allows its services to be managed through the most popular and familiar management protocol, SNMP. The rationale for choosing SNMP over MARF’s proprietary management protocols is that SNMP can be integrated with common network service and device management, so administrators can manage MARF nodes via an already familiar protocol, as well as monitor their performance, gather statistics, and set the desired configuration, perhaps using the same management tools they already use for other network devices and application servers.

💡 Research Summary

The paper presents a comprehensive design and prototype implementation that integrates the Distributed Modular Audio Recognition Framework (MARF) with the Simple Network Management Protocol (SNMP), thereby enabling administrators to manage MARF services using the same, widely‑adopted network‑management infrastructure they already use for routers, switches, and other servers. The motivation stems from the limitations of MARF’s original proprietary management interfaces, which require custom scripts or dedicated tools and become cumbersome in large‑scale deployments. By exposing MARF’s internal state, performance metrics, and configurable parameters through a standard Management Information Base (MIB), the authors demonstrate that any SNMP‑compatible manager (e.g., Net‑SNMP, OpenNMS, Zabbix) can monitor, configure, and receive alerts from MARF nodes without additional software.

The authors first describe the distributed architecture of MARF, where the core processing stages—pre‑processing, feature extraction, classification, and training—are each deployed as independent service nodes communicating via RMI, CORBA, or HTTP‑based RPC. For each node they identify three categories of management data: (1) system health (CPU, memory, disk I/O), (2) service performance (queue length, processing rate, success/failure counts), and (3) runtime configuration (sampling rate, window size, algorithm selection). These data points are then mapped to a custom MARF MIB that extends the standard SNMP System and Interface groups. Key objects include marfNodeStatus, marfQueueLength, marfProcessingRate, marfErrorCount, and marfConfigSampleRate, each assigned a unique OID and annotated with appropriate access rights (READ, WRITE, NOTIFY). The NOTIFY objects are used to generate SNMP traps for abnormal events such as processing errors or resource exhaustion.
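The mapping from management data to MIB objects can be pictured with a small in-memory table. The following is a minimal sketch in plain Java, not the authors' MIB or the SNMP4J API: the object names come from the summary above, while the class `MarfMibSketch`, the subtree `1.3.6.1.4.1.99999.1` (99999 is a placeholder enterprise number), the sub-identifiers, the access assignments, and the initial values are all assumptions made for illustration.

```java
import java.util.Comparator;
import java.util.TreeMap;

/** Illustrative in-memory MIB table; not MARF's or SNMP4J's actual API. */
class MarfMibSketch {
    /** Access levels, using the SMIv2 MAX-ACCESS names for READ, WRITE, NOTIFY. */
    enum Access { READ_ONLY, READ_WRITE, ACCESSIBLE_FOR_NOTIFY }

    /** One scalar managed object. */
    static final class MibObject {
        final String name;
        final Access access;
        final long value;

        MibObject(String name, Access access, long value) {
            this.name = name;
            this.access = access;
            this.value = value;
        }
    }

    /** Hypothetical subtree; 99999 is a placeholder enterprise number. */
    static final String BASE = "1.3.6.1.4.1.99999.1";

    /** Orders dotted OIDs arc by arc, numerically, so 1.3.10 sorts after 1.3.9. */
    static final Comparator<String> OID_ORDER = (a, b) -> {
        String[] x = a.split("\\.");
        String[] y = b.split("\\.");
        int shared = Math.min(x.length, y.length);
        for (int i = 0; i < shared; i++) {
            int c = Long.compare(Long.parseLong(x[i]), Long.parseLong(y[i]));
            if (c != 0) {
                return c;
            }
        }
        // A prefix sorts before any longer OID that extends it.
        return Integer.compare(x.length, y.length);
    };

    private final TreeMap<String, MibObject> objects = new TreeMap<>(OID_ORDER);

    MarfMibSketch() {
        // Names are from the summary; sub-identifiers, access levels, values are assumed.
        register(1, "marfNodeStatus", Access.READ_ONLY, 1);
        register(2, "marfQueueLength", Access.READ_ONLY, 0);
        register(3, "marfProcessingRate", Access.READ_ONLY, 0);
        register(4, "marfErrorCount", Access.READ_ONLY, 0);
        register(5, "marfConfigSampleRate", Access.READ_WRITE, 8000);
    }

    private void register(int subId, String name, Access access, long value) {
        // Scalar instances carry a trailing ".0" instance identifier.
        objects.put(BASE + "." + subId + ".0", new MibObject(name, access, value));
    }

    /** GET: exact-match lookup, or null when no such instance exists. */
    MibObject get(String oid) {
        return objects.get(oid);
    }

    /** GETNEXT: the first OID strictly after the given one, or null at the end. */
    String getNext(String oid) {
        return objects.higherKey(oid);
    }

    public static void main(String[] args) {
        MarfMibSketch mib = new MarfMibSketch();
        // Walk the subtree the way a manager would with repeated GETNEXT requests.
        for (String oid = mib.getNext(BASE); oid != null; oid = mib.getNext(oid)) {
            MibObject o = mib.get(oid);
            System.out.println(oid + " " + o.name + " " + o.access + " = " + o.value);
        }
    }
}
```

Keeping the table sorted in numeric OID order is what makes a GETNEXT walk possible: the manager needs no prior knowledge of the object names, only the subtree root.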

Implementation leverages the SNMP4J library embedded in each Java‑based MARF node. Handlers are written to synchronize the internal state of each processing module with the corresponding MIB objects. The agent supports both SNMPv2c (for simplicity) and SNMPv3 (for authentication and encryption), allowing administrators to choose the security level that matches their environment. Through standard GET/GETNEXT/GETBULK operations, managers can poll real‑time statistics; via SET operations, they can remotely adjust configuration parameters such as sampling rate or algorithm choice. Traps are emitted automatically when thresholds are crossed, enabling immediate detection of failures.
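The agent-side behaviour described here, writable configuration via SET and traps on threshold crossings, can be sketched without any SNMP library. The plain-Java example below is an illustration under stated assumptions, not the authors' handler code or the SNMP4J API: the class `MarfAgentSketch`, the default sample rate of 8000, the queue-length threshold, and the `Consumer<String>` that stands in for sending a trap are all hypothetical. The returned strings `noError`, `wrongValue`, and `notWritable` are error-status names defined for SNMPv2 responses.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Illustrative agent-side logic; names, defaults and thresholds are assumptions. */
class MarfAgentSketch {
    private int sampleRateHz = 8000;          // writable configuration value (assumed default)
    private int queueLength = 0;              // read-only performance metric
    private final int queueThreshold;         // hypothetical trap threshold
    private boolean overThreshold = false;    // remembers whether a trap was already raised
    private final Consumer<String> trapSink;  // stands in for emitting an SNMP trap

    MarfAgentSketch(int queueThreshold, Consumer<String> trapSink) {
        this.queueThreshold = queueThreshold;
        this.trapSink = trapSink;
    }

    /** Handles a SET request and returns an SNMPv2-style error-status name. */
    String set(String objectName, int value) {
        if (!"marfConfigSampleRate".equals(objectName)) {
            // Everything except the configuration object is treated as not writable.
            return "notWritable";
        }
        if (value <= 0) {
            return "wrongValue";
        }
        sampleRateHz = value;
        return "noError";
    }

    /** Called by the processing module whenever its queue length changes. */
    void updateQueueLength(int newLength) {
        queueLength = newLength;
        if (newLength > queueThreshold && !overThreshold) {
            // Edge-triggered: one trap per crossing, not one per update.
            overThreshold = true;
            trapSink.accept("marfQueueLength=" + newLength + " exceeds " + queueThreshold);
        } else if (newLength <= queueThreshold) {
            // Re-arm once the metric falls back to or below the threshold.
            overThreshold = false;
        }
    }

    int getSampleRateHz() {
        return sampleRateHz;
    }

    int getQueueLength() {
        return queueLength;
    }

    public static void main(String[] args) {
        List<String> traps = new ArrayList<>();
        MarfAgentSketch agent = new MarfAgentSketch(100, traps::add);
        System.out.println(agent.set("marfConfigSampleRate", 16000));
        System.out.println(agent.set("marfQueueLength", 5));
        agent.updateQueueLength(150);
        agent.updateQueueLength(200);
        System.out.println(traps);
    }
}
```

Raising the trap only on the crossing itself keeps a sustained overload from flooding the manager with duplicate notifications; whether the prototype behaves this way is not stated in the summary.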

Performance evaluation is conducted on a ten‑node MARF cluster processing a workload of 1,000 audio files in parallel. The additional network traffic generated by SNMP polling and traps averages less than 1 KB/s, and CPU overhead attributable to the SNMP agents remains under 3 % of total processing capacity. Crucially, the trap‑based alert mechanism reduces fault detection latency from an average of 1.2 seconds (when using only periodic polling) to 0.3 seconds, dramatically improving response times. Remote configuration changes via SET commands propagate within roughly 150 ms, confirming the feasibility of dynamic reconfiguration without service interruption.
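As a rough reading of the latency figures, the improvement can be worked out directly. The second line below rests on an assumption that the summary does not state: that faults occur at a uniformly random point within a fixed polling interval $T$, and that network and processing delays are negligible.

```latex
\[
\frac{1.2\ \text{s}}{0.3\ \text{s}} = 4,
\qquad
\frac{1.2\ \text{s} - 0.3\ \text{s}}{1.2\ \text{s}} = 0.75
\]
\[
\mathbb{E}[\text{polling detection delay}] = \frac{T}{2}
\quad\Longrightarrow\quad
T \approx 2 \times 1.2\ \text{s} = 2.4\ \text{s}
\]
```

In other words, traps detect faults four times faster (a 75 % reduction), and under the stated assumption the polling baseline would correspond to an interval of roughly 2.4 seconds.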

In conclusion, the study validates that integrating a domain‑specific distributed application like MARF with a universal management protocol yields tangible benefits: reuse of existing management tools, consistent monitoring across heterogeneous devices, and the ability to automate operational tasks through standard SNMP mechanisms. The authors outline future work that includes full exploitation of SNMPv3 security features, finer‑grained MIB definitions to support Service‑Level Agreement (SLA) monitoring, and comparative studies with newer management frameworks such as NETCONF and RESTCONF to determine the optimal approach for large‑scale, policy‑driven service orchestration.
