Untangling the Braid: Finding Outliers in a Set of Streams

Monitoring the performance of large shared computing systems such as the cloud computing infrastructure raises many challenging algorithmic problems. One common problem is to track users with the largest deviation from the norm (outliers), for some m…

Authors: Refer to original PDF

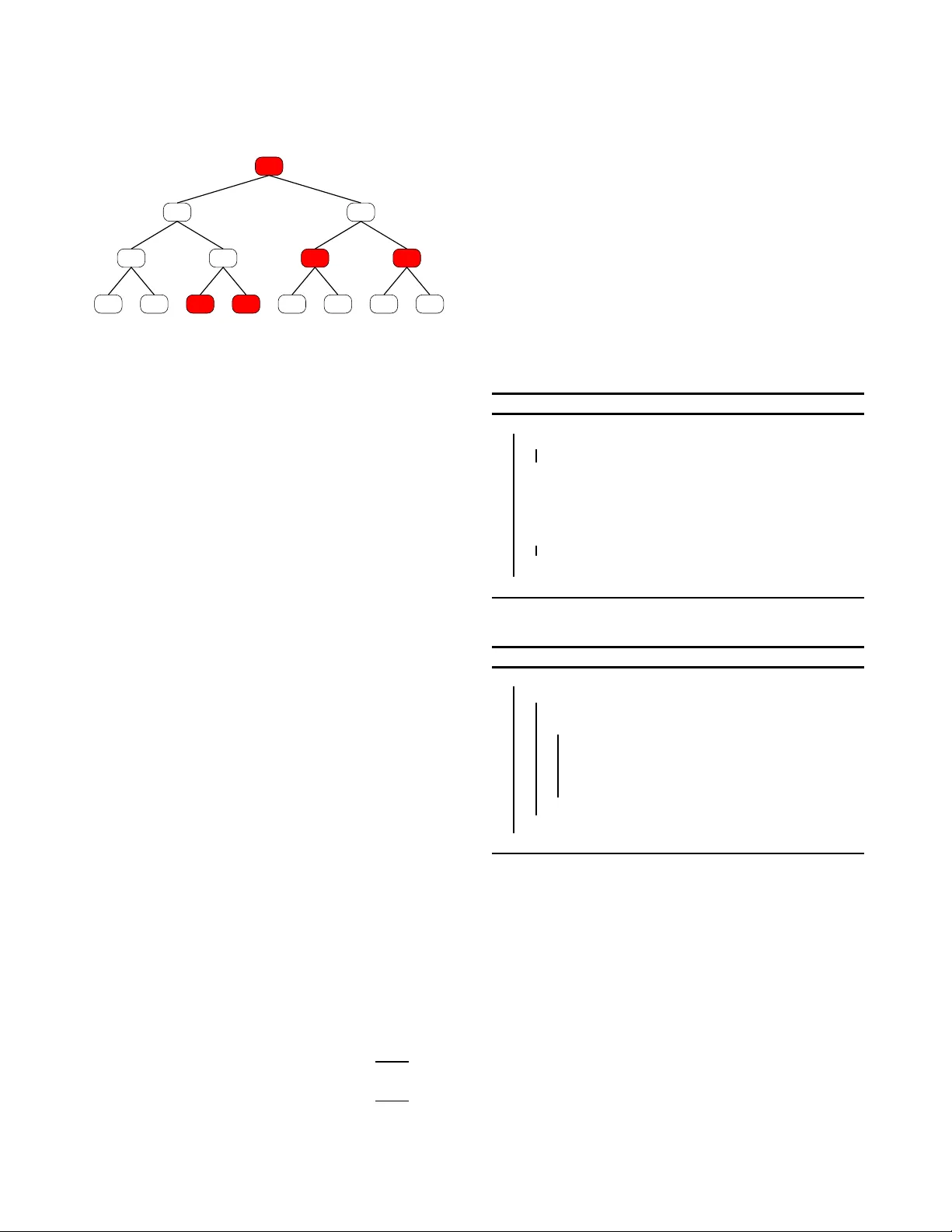

Untangling the Braid: Finding Outliers in a Set of Streams Chiran jeeb Buragohai n Luca F oschini Subhash Suri Amazon .com Dept. of Compute r Science Dept. of Compute r Science Seattle , USA Univ ersity of Calif ornia Univ ersity of Calif ornia chiran@ama zon.com Sa nta Barbar a, USA Santa Barbar a, USA foschini@c s.ucsb.edu suri@cs.uc sb.edu ABSTRA CT Monitoring the performance of large shared computing sy s- tems such as the cloud computing infrastructure raises man y chal lenging algorithmic problems. One common problem is to track users with the largest deviation from the norm (out- liers), for some measure of performance. T aking a stream- computing p erspective, we can think of eac h user’s p erfor- mance p rofile as a stream of numbers (such as response times), and the aggregate p erformance p rofi le of the shared infrastructure as a “braid” of these intermixed streams. The monitoring system’s goal t h en is to untangle this braid suf- ficiently to trac k th e top k outliers. This p ap er inv esti- gates the space complexity of one-pass al gorithms for ap- proximati ng outliers of this kind, prov es lo w er b ounds using multi-part y communication complexity , and prop oses small- memory heu ristic algorithms. O n one hand, stream outliers are easily track ed for simple measures, such as m ax or min, but our th eoretical results rule out ev en g oo d approxima- tions for most of the natural measures such as av erage, me- dian, or the quantiles. On the other hand, we show through sim ulation th at o ur prop osed heuristics p erform quite well for a v arie ty of sy n thetic d ata. 1. INTR ODUCTION Imagine a general pu rpose stream monitoring system faced with the task of d etecting misb ehaving str e ams among a large number of d istinct data strea ms. F or in stance, a net- w ork diagnostic program at an IP router ma y wish to high- ligh t flows whose pac k ets exp erience un usually large a v er- age netw ork latency . Or, a cloud comput in g service such as Y aho o Mail or Amazon’s Simple Storage Service (S3), cater- ing to a large number of distinct users, ma y wish to track the qualit y of service exp erienced by its users. The perfor- mance monitoring of large, shared infrastructures, suc h as cloud compu ting, provides a comp elling backdrop for our researc h, so let u s dwell on it b riefly . An imp ortant charac- teristics of c loud computin g applications is the sheer scale and la rge n um b er of users: Y aho o M ail and Hotmail sup- Permission to m ak e digit al or hard copies of all or part of this work for personal or classroom use is grante d without fee provide d that copies are not made or distribute d for profit or commercial adv antage and that copies bear this notic e and the full citatio n on the first page. T o cop y otherwise, to republi sh, to post on serv ers o r to re distrib ute to li sts, require s prior specific permission and/or a fee. Copyri ght 200X A CM X-XXXXX-XX-X/XX/XX ... $ 5.00. p ort more than 250 mil lion users , with each user having sever al GBs of storage. With this scale, an y down time or p erformance d egradation affects many users: e ven a guaran- tee of 99 . 9% av ailability (th e published num bers for Google Apps, includ ing Gmail) leav es op en the p ossibilit y of a large num ber of users suffering dow ntime or p erformance degra- dation. In other words, even a 0 . 1% user do wn time affects 250,000 users, and translates to significan t loss of prod uc- tivity among users. Managing and monitoring systems of this scale presents many alg orithmic chal lenges, including the one we focus on: in the multitude of users, tr ack those r e c eivi ng the worst servic e . T aking a s tream-computing p erspective, w e can th ink o f eac h user’s p erformance profile as a stream of num bers (such as response times), and the aggregate performance profile of the w hole infrastructure as a br aid of these in termixed streams. The monitoring system’s goal then is to un tangle this braid sufficiently to track the top k outliers. In this pap er, we study qu estions motiv ated by this general setting, such as “which stream has t he highest a verage latency?”, or “what is the median latency of the k worst streams?,” “how many streams ha ve th eir 95th p ercentile latency less th an a giv en v alue?” a nd so on. These problems seem t o require p eering into individual streams more deeply than typically studied in most of t he ex- tant literature. In particula r, while problems suc h as he avy hitters and quantiles also aim to understand the statistical prop erties of I P traffic or latency distributions of w ebserv ers, they do so at an a ggr e gate lev el: hea vy hitters attempt to isolate flo ws that hav e large total mass, or users whose to- tal resp onse time is cumulativ ely large. In our context, th is ma y b e uninteresting b ecause a user can accum ulate large total resp onse time b ecause h e sends a lot of requests, even though eac h request is satisfied q uic kly . On the other hand , streams that consistently show high latency are a ca use for alarm. More generally , we wish to isolate flows or users whose s ervice response is b ad at a fi ner level, p erhaps tak- ing in to account the entire d istribution. 1.1 Pr oblem Formulation W e h a ve a set B , which w e call a br aid , of m streams { S 1 , S 2 , . . . , S m } , where the i th stream h as size n i , namely , n i = | S i | . W e assume that th e num b er of streams is l arge and each stream contains p otentially an unbounded number of items; that is, m ≫ 1 and n i ≫ 1, for all i . By v ij , we will mean the v alue of the j th item in the stream S i ; we mak e no assumptions ab out v ij b eyo nd that they are real-va lued. In th e examples mentioned ab ov e, v ij represents the latency 1 of the j th request by the i th u ser. W e formalize the misb e- havior quality of a stream by an abstract weight fun ction l , whic h is function of t he set of val ues in the stream. F or in- stance, l ( S ) may denote the a vera ge or a particular qu an tile of the stream S . Our goal is to design streaming algorithms that can es timate certain fundamenta l statistics of the set { l ( S 1 ) , l ( S 2 ) , . . . , l ( S m ) } . When n eeded, we use a self-descriptiv e sup erscript to dis- cuss specific weigh t functions, such as l a vg for av erage, l med for median, l max for maxim um, l min for minim um etc. F or instance, if we c hoose the w eigh t to be the aver age , then l a vg ( S ) denotes the a verag e v alue in stream S , and max { l a vg ( S 1 ) , l a vg ( S 2 ) , . . . , l a vg ( S m ) } computes the w orst-str e am by aver age latency . Throughout w e will fo cus on the one-pass mo del of d ata streams. As is commonly t he case with data stream algorithms, w e must content ourselves with appr oximate weig ht statis - tics b ecause even in the single stream setting neither quan- tiles nor frequent items can b e computed ex actly . Wi th this in mind, let us now precisely define what we mean by a guaran teed-quality approximation of high w eigh t streams. There are tw o natural and commonly used wa y s to q uanti fy an approxima tion: by r ank or by value . (R ecall th at the r ank of an elemen t x in a set i s the numb er of items with v alue equal to or less th an x .) • Rank A ppro ximation: Let l b e an arbitrary w eigh t function (e.g. median), and let l i = l ( S i ) b e t he v alue of this funct ion for stream S i . W e sa y that a v al ue l ′ i is a r ank appr oximation of l i with err or E if the rank of l ′ i in the stream S i is within E of th e rank of l i . Namely , | rank( l ′ i , S i ) − rank( l i , S i ) | ≤ E , where rank( x, S ) denotes th e rank of element x in stream S and E is a non- negativ e integer. Th us, if we are estimating th e median latency of a stream, then l ′ i is its rank app ro ximation with error E if | rank( l ′ i , S i ) − ⌊| S i | / 2 ⌋| ≤ E . • V alue A pproximation: Let l b e an arbitrary weigh t function (e.g. median), and let l i = l ( S i ) b e t he v alue of this function for stream S i . W e say that l ′ i is a value appr oximation of l i with r elative err or c if | l ′ i − l i | ≤ cl i . While rank appro ximation often seems more app ropriate for q u an tile-based wei ghts, and v alue approximatio n for a v- erage, t hey b oth yield useful in sigh ts into th e underlying distribution. F or instance, given any p ositive α , at most | S | /α items in S can hav e v alue more than αl a vg ( S ). Th us, a rank app ro ximation of l a vg ( S ) also localizes the relativ e p osition of the approximatio n. Conv ersely , a v alue app ro x- imation of the median or qu an tile can b e esp ecially u seful when the distribution is highly clustered, making the rank approximatio n rather volatile—t w o items may d iffer greatly in rank , bu t still have va lues very close to eac h o ther. Our o veral l goal is to estimate streams with large wei ghts with guaran teed qualit y of appro ximation: in other words, if w e assert that the worst stream in th e set B has median weigh t l ∗ then w e w ish to guara ntee that l ∗ is an a pproximation of max { l med ( S 1 ) , l med ( S 2 ) , . . . , l med ( S m ) } , eith er by rank or by v alue. W e prov e p ossibilit y and imp ossibilit y results on what error b ound s are achiev able with small memory . 1.2 Our Contrib utions W e begin with a simple observ ation that finding the top k streams under the l max or l min w eigh t functions is eas y: this can b e done using O ( k ) space and O ( log k ) p er-item processing time. In the con text of webservices monitoring applications, this allow s u s to track the k streams with worst latency v alues. As is wel l kno wn, ho w ever, statistics based on max or min v alues are highly volatile due to outlier ef- fects, and filtering b ased o n mo re robust w eight f unctions such as quantiles or even avera ge is preferred. W e prop ose a generic scheme th at can estimate the weigh t of an y stream using O ( ε − 2 log U log δ − 1 ) space (b eing U t h e size of the ra nge of the v alues in the s treams), with rank error ε P m i =1 n i with probability at leas t 1 − δ . With this, w e can rep ort the weigh ts of t h e top k streams for any of the natural functions suc h as a v erage, median, or other q uantil es so th at the rank error in the rep orted v alues is at most ε P m i =1 n i . One may ob ject to the P m i =1 n i term in t h e rank ap- proximati on, and ask for a more desirable εn i error term so that th e error dep ends only on the size of an ind ividual stream, rather th an the whole set of streams. On p ragmatic terms also, this is justified b ecause even for modest v alues (a few thousand streams, ea ch with a million or so items), the P m i =1 n i error can make the approximation guaran tee w orthless. In essense, our error approximation is li ne arly worsening with the num ber of streams, which is not a very scalable use of space . Unfortunately , we p ro ve an impossibility result sho wi ng that achieving εn i error in rank approximation requ ires sp ac e at le ast line ar i n the numb er of str e ams (the br aid size) . W orse yet, our low er b ound even rules out ε ˜ n rank approxi- mation, where ˜ n is t h e aver age stream size in the set. Th us, the space complexity is not an artifact o f r arity , as is the case with the fr e quent item p roblem. In particular, w e sh ow that even if a ll streams in the braid hav e size Θ( ˜ n ), ac hiev- ing rank appro ximation ε ˜ n requires space Ω( m ( 1 − 2 ε 1+2 ε ) 2+ γ ), for any γ > 0, where m is t he num ber of streams in the braid. Similarly , for the v alue approximation we show that esti- mating the av erage latency of the w orst stream in B w ithin a factor t requires Ω( m/t 2+ γ ) space. Our low er b ounds also rule out optimistic b ounds e ven for highly s tructured and sp ecial-case streams. F o r instance, co nsider a r ound r obin setting where v alues arrive in a roun d -robin order o ver the streams, so at any instan t the size of any t w o streams dif- fers by at most one. One may ha ve hoped th at for such highly structured streams, i mprov ed error es timates should b e p ossible. Unfortunately , a v arian t of our main constru c- tion rules ou t that p ossibilit y as well. In the face of t h ese lo w ers b ound s, w e designed and imple- mented t w o algorithms, ExponentialBucket and V ari- ableBucket , and ev aluated them for a v arie ty of synthetic data d istributions. W e use three quality metrics to eval uate the effectiveness of our schemes: pr e ci sion and r e c al l , whic h measures ho w many of the top k captu red by our scheme are t ru e top k , distortion , whic h measures th e av erage rank error of the captured streams relativ e to the t rue top k , and aver age value err or , which measures absolute v alue differ- 2 ences. W e tested our sc heme on a va riet y of synthetic data distributions. These data use a normal distribution of v al- ues within th e streams, and either a uniform or a norma l distribution across streams. In all these cases, our precision approac hes 100% for all th ree metrics (a ve rage, median, 95th p ercen tile), th e distortion is b etw een 1 and 2, and t he a ver- age error is less th an 0 . 02. The memory usage p lot also con- firms the t heory that the size of the data structure remains unaffected by the num ber and t h e sizes of the streams. 1.3 Related W ork Estimation of stream statistics in th e one-pass model has receiv ed a great deal of in terest within the database, net- w orking, theory , and algorithms communities. Wh ile the one-pass ma jority fi nding algorithm dates back to Misra and Gries [14], and tradeoffs betw een memory and the n umber of passes required go es b ac k to the work of Munro and Pa- terson [15], a systematic study of th e stream mo del seems to hav e b egun with the infl uentia l paper of Alon, Matias and Szegedy [1], who show ed sev eral striking re sults, including space lo w er b ounds for estimating frequency moments, as w ell as for determining the f requency of the most frequen t item in a single stream. While some statistics such as the a verag e, min, and the max can b e computed exactly and space-efficien tly , oth er more holistic statistics such as quan- tiles cann ot. F ortunately , ho w ever, several methods hav e b een prop osed ov er the last decade to appr oximate these v alues with b ounded error guaran tees. F or instance, quan- tiles can b e estimated with additive error εn using space O ( ε − 1 log n ) [9] or space O ( ε − 1 log U ) [18] where n is th e stream size and U is the largest integer value for the stream items. There is also a rich b od y of literature on finding fre- quent items, top k items, and h ea vy hitters [5, 4, 10, 12, 13, 17]. Schemes such as Counting Bloom filters [8] or Count-Min sketc hes [6] can b e v iew ed as metho ds for estimating statis- tics over multiple streams. In particular, these method s are motiv ated by the need to estimate t h e sizes of lar ge flows at a router: in our t erminology , these meth ods estimate the aggr e gate sizes of the top k streams in th e braid. By con- trast, we are interested in more refined statistics (e.g. top k by t he av erage v alue) that require p eering in to the streams, rather than simply aggrega ting them. Bonomi et al. [3] hav e extended Blo om filters to maintain not just th e presence or absence of a stream, but also some state information ab out the stream. But this state informatio n does not reflect any aggregate statistical prop erties of t he stream itself. The time-series data mining comm unity has fo cused on finding similar [7] or d issimil ar sequences [11] in a set of large time-series sequences. But this work is n ot geared to w ards a one-pass stream setting and h ence assumes that w e hav e O ( m ) memory av ail able where m is the number of streams. 1.4 Organization Our pap er is organized in five sections. In Section 2, w e p resen t our main theoretical results, namely , the low er b ounds on th e space complexity of single-pass algorithms for detecting outlier streams in a braid. In Section 3, we pro- p ose tw o g eneric space-efficient sc hemes for estimating the top k streams in a braid, and analyze their error guarantees. In Section 4 , we discuss our exp erimental results. Finally , w e conclude with a d iscussion in Section 5. 2. SP A CE COMPLEXITY LO WER BOUNDS In this sec tion, w e present our main theoretical results, namely , space complexity low er b ounds that rule out space- efficien t approximation of outlier streams in a fairly broad setting. W e men tioned earl ier that for simple weigh t func- tions suc h as the max or min, one can easily track the top k streams, using just O ( k ) space and O (log k ) p er-item pro- cessing. (This is easily done by maintaining a heap of k distinct streams with the largest item va lues.) Surprisingly , this go od n ews ends rather abruptly : w e show that even trac king top k streams using the se c ond lar gest item is al- ready hard, and requ ires memory prop ortional to the size of the braid, |B | . Similarly , we argue that while trac king streams with the maxim um or th e minim um items is easy , trac king streams with the largest spr e ad , namely , difference of t he maximum and the minimum items, req u ires linear space. Our main result rules out ev en go od approximation of most of th e ma jor statis tical measures, such as av erage, median, quantiles , etc. W e b egin by recalling our formal defin ition of app ro xi- mating the out lier streams. Supp ose w e wish to ra nk the streams in the braid B using a weigh t function l . Without loss of generalit y , assume that the top k streams are ind exed 1 , 2 , . . . , k ; that is, l 1 ≥ l 2 ≥ · · · ≥ l k . W e sa y that a stream S i is appr oxim ately a top k str e am if its l -v alue is at least as large as l k within the appro ximation error range. F or in- stance, supp ose w e are using the median latency l med , then stream S i is a top k s tream with rank approximation E if the v alue of item with rank | S i | / 2 + E (true median plus the rank error) in S i is at least as large as l k . Similarly , one can d efine th e approximation fo r v alue approximatio n. In the follo wing, w e discuss our lo w er b oun ds, which are all based on the multi-party communication complexity [2, 19, 16]. All our low er b ounds employ v ariatio ns on a single con- struction, so w e begin by d escribing this general argument b elo w. 2.1 The Lowe r Bound Framework Our low er b ounds are based on reductions from the mul ti - p arty set -disjointness problem, which is a w ell-know n p rob- lem in communication complexity [16]. An instance DIS J m,t of the multi-party set disjoin tness problem consists of t play- ers and a set of items A = { 1 , 2 , . . . , m } . The pla y er i , for i = 1 , 2 , . . . , t , holds a subset A i ⊆ A . Eac h instance come s with a promise: either all th e sub sets A 1 , A 2 , . . . A t are pair- wise disjoint, or th ey al l share a single common elemen t bu t are otherwise d isjoin t. The former is called the Y ES instance (disjoin t sets), and the latter is called the NO instance (n on- disjoin t sets). The goal of a comm unication proto col is to decide whether a given instance is a YES instance or a NO instance. The proto col only counts the total number of bits that are ex c hanged among the play ers in order to decide this; the comp u tation is free. W e will use the follo wing re- sult from communication complexity [2]: any one-w a y pro- tocol (where play er i sends a m essage to pla yer i + 1, for i = 1 , 2 , . . . , t , that decides b etw een all Y ES instances a nd NO instance with success probability greater than 1 − δ , for any 0 < δ < 1, requires at least Ω( m/t 1+ γ ) bits of communi- cation, where recall that t is the num b er of play ers and m is the size of the set and γ > 0 is an arbitrarily small constant. The idea behind our low er b ound argumen t is to sim u - late a one-wa y multi-party set-disjointness proto col using a streaming algorithm for the top k streams. If the strea m- 3 Stream ID Synopsis Player 1 2 3 4 {2} {2,4} {1,2,5} {2,6} Figure 1: Illustration of a NO i ns tance of D ISJ 6 , 4 , with four players. The subsets of the pl ayers are shown ab o ve their ids, a nd the v alues inserted by them int o the streams (foll o wing the protocol of Theorem 1) are sho wn in t he T able 1 . Stream ID 1 2 3 4 5 6 Pla ye r 1 0 1 0 0 0 0 Pla ye r 2 0 1 0 1 0 0 Pla ye r 3 1 1 0 0 1 0 Pla ye r 4 0 1 0 0 0 1 T able 1: The v alues i nserted by the play ers of Fig- ure 1 in the low er b ound construction of Theorem 1 are shown in this table. ing algorithm uses a synopsis data structure of size M , th en w e show th at th ere is a one-w a y p rotocol using O ( M t ) bits that can solve the t - party d isjoi ntness problem. Because th e latter is known to hav e a lo w er b ound of Ω( m/t 1+ γ ), it im- plies that the memory fo otprint of the streaming algorithm b ecomes Ω( m/t 2+ γ ). The basic construction asso ciates a stream wi th eac h element of the set A ; namel y , the stream S i is identified with the item i ∈ A . See Fig. 1 for an exam- ple. W e initialize ea ch strea m with some v alues, and then insert th e remaining v alues based on the sets A i held by the t play ers; these val ues d ep end on sp ecific constructions. The key idea behind all our constructions is that t he braid of streams is such that approximating the t op k streams within the appro ximation range requires distinguishing betw een the YES and NO instances of the underlying set-disjoi nt prob- lem. W e present the details b elo w as we d iscuss sp ecific constructions. W e b egin with our main res ult on the space complexity of tracking the top k streams under the most common statistical measures, such as median, quantile, or a verag e. 2.2 Space Complexity of Ranking by Median or A ver age Theorem 1. L et B b e a br aid of m str e ams, wh er e e ach str e am has Θ( n ) elements. Then, determining the top str e am in B by me dian value, within r ank err or εn ( 0 < ε < 1 / 2 ) r e quir es sp ac e at le ast Ω m „ 1 − 2 ε 1 + 2 ε « 2+ γ ! . for arbitr arily smal l γ > 0 . That i s, finding the str e am wi th the maximum me dian l atency, wi thin addi tive r ank appr oxi- mation err or ε n , r e qui r es essential ly sp ac e li ne ar in the num- b er of str e ams. Pr oof. Sup pose there ex ists a stream synopsis of size M that ca n estimate the latency of the maxim um median pt pt p p YES NO Median: p(t+1)/2 0,0,... ,0,1,1,... ...,1 0,0,... ,0,1,1,... ...,1 ................................. ................................. Figure 2: Di s tribution of v alues in streams for YES and NO instances of DISJ m,t . F or the YES instance, the m edian v al ue is 0 up to a rank error of pt − p ( t + 1) / 2 , while for the NO i nstance, the median v alue is 1 up to a rank e rror of p ( t + 1) / 2 − p . latency stream within rank erro r εn . W e no w sho w a re - duction that can use this sy n opsis to solve the multi-part y set d isjoin tness p roblem using O ( M ) bits of communication. Let p b e an integer, to b e fix ed later. W e initialize t he syn- opsis by in serting p items in each stream with v alue 0. The multi-part y proto col then modifi es the stream as follo ws, one pla yer at a time, from p laye r 1 through t . On his turn, if pla y er j has item i in its set, then it inserts p i tems of v alue 1 in stream i , for each i ∈ A j . If the play er j do es not have item i in its set, then i t inserts p items of v alue 0 in the strea m i . (Recall that items of the ground set A cor- respond to streams in our construction.) Th us eac h pla y er inserts precisel y pm v alues to t he streams , and in the end, eac h stream has exactly p + pt items in it. A n ex amp le of the running of this proto col is sho wn in Fig. 1 and the correspondin g v alues in serted by eac h play er is t abulated in T able 1 . After all the t play ers are done, we outpu t YES if the maximum med ian latency among all strea ms is 0, and oth - erwise w e retu rn NO. W e now reason why this helps decide the set-d isjoi ntness p roblem. Su pp ose the instance on which w e ran the proto col is a YES instance. Then any stream has either all 0 v alues (this happ ens when index corres p onding to this stream is absent from all sets A j ), or it has p v alues equal to 1 and p t v alues equal to 0 (b ecause of the disjoin t- ness promise, the index corresponding to this stream o ccurs in p recisely one set A j and that j in serts p copies of 1 to this stream, w hile others insert 0s). See Fig. 2. Therefore up to a ra nk error p ( t − 1) / 2 the median latency of all the streams is 0. On the other hand, if this is a NO instance, then there exists a stream that h as p 0 v alues and p t v alues equal to 1. This stream, therefore, has a median latency of 1 u p to a rank error p ( t − 1) / 2. Therefore our algorithm can distinguish b et w een a YES and a NO instance. W e may c h oose p = n/ (1 + t ), so that e ac h strea m has size n and our rank error is n 1 2 t − 1 t +1 . S ince the algorithm uses O ( M ) space and there are t play ers, the total com- municatio n complexity is Θ( M t ), whic h by th e communica- tion complexity th eorem is Ω( m/t 1+ γ ). Finally , solving the equality ε = 1 2 t − 1 t +1 for t , w e get the d esired lo w er b ound that M = Ω „ m “ 1 − 2 ε 1+2 ε ” 2+ γ « . This completes the p roof. 4 W e p oint out that our stream construct ion is highly stru c- tured, meaning that this low er b ound rules out go od ap- proximati on even for very regular an d b alanced streams. In particular, the d ifficult y of estimating the maximum median latency is not a result of rarit y of the target stream: indeed, all streams hav e equal size. Moreov er, th e construction ca n b e implemented in a wa y so that items are inserted into the streams in a round - robin w a y (see T able 1 ). Therefore, the construction i s also not d epend en t on a pathologica l spikes in stream p opulation. Thus, even under very strict ordering of v alues in the s treams, the problem of determining high w eigh t streams remains hard. It is easy to see th at the construction is easily mo dified to p ro ve similar lo w er b ounds for other quantiles . The same construction also show s a space low er b ound for determining the maximum aver age l atency stream. Simply observe th at the a ve rage la tency for t he NO instance is 1 / (1 + t ), while the av erage latency for th e YES instance is t/ (1 + t ). There- fore an y t -approximation algorithm for the av erage measure requires space at least Ω( m/t 2+ γ ), for any t ≥ 2 and γ > 0. Theorem 2. Determining the top str e am by aver age value within r elative err or at most t r e quir es at le ast Ω( m/t 2+ γ ) sp ac e, wher e m is the numb er of str e ams i n the br aid, t ≥ 2 and γ > 0 . 2.3 Lowe r Bound for Se cond Largest Surprisingly , similar constructions also show t hat even mi- nor v aria tions of the easy case (fi nding th e stream with the largest or the smallest ext remal v al ue) make the problem prov ably hard. In p articular, supp ose we wan t to track the stream with the maximum se c ond lar gest va lue. Let us d e- note this weig ht function as l 2 max . Ou r p roof belo w prov es a space low er b ound for even approximating this. In partic- ular, w e sa y that an streaming algorithm finds the second largest-v alued stream with appr oximation factor c if it re- turns a stream whose rank by the second largest va lue is at most 2 c , for an y integer c ≥ 1. Note that this definition of approximatio n is one sided, because the ap p ro ximate v alue returned alw a ys has rank 2 c ≥ 2. (Of course, allo wing c < 1 trivializes th e problem because t h en w e can alw ays use th e max v alue instead of the second largest.) Then, we hav e th e follo wing. Theorem 3. Determining the top str e am by se c ond-lar gest value within appr oximation f actor t r e quir es at le ast Ω( m/t 2+ γ ) sp ac e, f or any γ > 0 , w her e m is the numb er of str e ams i n the br aid and t ≥ 2 is an inte ger. Pr oof. In this case, su pp ose th ere exists a streaming al- gorithm for the top stream by second largest v alue problem using space M , and consider th e follo wi ng reduction from an instance of the t -p arty set-disjointness problem. Each play er j , for j = 1 , 2 , . . . , t , in turn adds items to stream using the follo wing rule: pla ye r j inserts v alues j + 1 and 1 / ( j + 1) in the stream S i for eac h i in its set A j . F or all other streams that it does not hold, it inserts tw o 0 va lues in t h at stream. Therefore eve ry play er inserts 2 m v al ues into the braid and at the end of th e proto col, each stream has exactly 2 t v alues. At the end, we return YES to th e set-disjoin tness prob- lem if our streaming algorithm computes the v alue of the t op stream as less than 1, and NO otherwise. W e now reason its correctness. If the t -p art y instance is a YES instance, then any stream i either contains the v alues 0, or it contai ns v al- ues 0 , p + 1 and 1 / ( p + 1), for some 1 ≤ p ≤ t . Thus, the top stream by the second largest v alue has v alue less than 1 up to an approximation factor of t/ 2. On the other hand , if t his is a N O instance, then there exists a stream t hat includes all the v alues 1 / ( t + 1) , 1 /t, . . . , t, t + 1, whose second largest v alue is t . Therefo re if our algorithm has an approximation ratio b etter than t/ 2, it can distinguish b etw een YES and NO instances. Because our proto col requires sending M -size synopsis to t play ers, the total comm unication complexity is Θ( M t ), whic h by th e l ow er bound on set-disjoin tness is at least Ω( m/t 1+ γ ). Therefore, determining the top stream by second largest m ust require at lea st Ω( m/t 2+ γ ) space, and this completes th e p roof. 2.4 Lowe r Bound for Sp re ad W e next argue that while trac king the top stream with the largest or the smallest v alue is p ossible, tracking the top stream with the largest spr e ad , namely , max( S ) − min ( S ) is not p ossible without linear space. Theorem 4. Determining the top str e am by the spr e ad r e quir es at le ast Ω( m ) sp ac e, wher e m i s the numb er of str e ams in the br aid. Pr oof. Let us consider an instance of DISJ m,t and a streaming algorithm with a sy nopsis of size M which can determine the stream with maximum spread. In this case, w e can u se a 2- part y se t-disjointness low er b ound. Let us call the t w o play ers, OD D and EVEN. W e b egin by inserting a single v alue 0 in eac h of the m streams. First, the ODD pla yer inserts the val ue − 1 into each stream S i for whic h i is in its s et A ODD . Next, th e EVEN pla yer inserts the v alue +1 into each stream S i for which i is in its set A EVEN . Cl early , the top stream by the maximum spread has spread 1, then the sets of ODD and EVEN are disjoin t, and so this is a YES instance. Ot herwise, the top stream has spread 2, and this is a NO instance. The synopsis size of the streaming algorithm, therefore, is at least Ω( m ). T his completes the proof. This fi n ishes the discussion of our low er b ounds. The main conclusion is that approximating the top k streams either by av erage v alue, within any fixed relative error, or by any qu an tile, within a rank ap p ro ximation error of εn i , is not possible, where n i is the size of the top stream. In fact, the low er b ound even rules out the rank app roximation within error of ε ˜ n , where ˜ n is the aver age size of the streams in the b raid. In the follo wi ng section, we complement these lo w er b ounds by describing a sc heme with a w orst-case rank appro xima- tion error P m i =1 εn i , using rough ly O ( ε − 2 log U ) space. 3. ALGORITHMS FOR BRAID OUTLIERS W e b egin with a generic sc heme for estimating top k streams, and then refin e it to get the desired erro r b ound s. The b asic idea is simple. Without loss of generalit y , supp ose the items (val ues) in the streams come fr om a range [1 , U ]. W e subd ivide this range into subranges, called buckets , t hat are pairwise d isjoin t and cov er the entire range [1 , U ]. All stream entries with a val ue v are mapp ed t o the buck et that conta ins v . Within the buck et, we use a sketc h, such as the Count-Min sketc h, to keep track of the num b er of items b elonging to different streams. With this data structure, giv en any v alue v and a stream index i , we can estimate how many items of stream S i hav e val ues in the range [1 , v ]. 5 This is sufficien t to estimate v arious streams statistics such as quantiles and the av erage. W e p oint that this estimation incurs tw o k inds of error: one, a sketch h as an inherent error in estimating how many of th e items in a bu cket belong to a certain stream S i , and tw o, ho w many of those are less than a v alue v when v is some arbitrary v alue in the range cov- ered by the buck et. F or the former, we simply rely on a go od sketc h for frequency estimation, such as the Count-Min, bu t for the latter, we explore tw o options, whic h control how the buck et b oundaries are c hosen. The fi rst algorithm, cal led the ExponentialBucket al- gorithm, splits the range into pre-det ermined buckets, with b oundary [ ℓ b , r b ] suc h t h at the ratio ℓ b /r b is constant. This ensures t h at the relativ e value error o f our appro ximation is b ounded by ( r b − ℓ b ) /r b . How ev er, the p re- determined buck ets is unable to pro vide a non-trivial rank appro xima- tion error b ound. Ou r second scheme, therefore, takes a more so phisticated a pproach to buc k eting, and adapts the buck et bou n daries to d ata, so as to ensure that roughly an equal num ber of items fall in each bu c ket. Before describing these algorithms in detail, let us first quickly review th e key prop erties of our frequency -estimation data structure, Count-Min sketc h, b ecause we rely on its er- ror analysis . The Coun t-Min (CM) sk etch [6] is a random- ized synopsis structure that supp orts approximate count queries ove r data streams. Giv en a stream of n items, a CM sketc h estimates the frequency of any item up to an additive error of εn , with (confidence) probabilit y at least 1 − δ . The syn opsis requires space O ( 1 ε log 1 δ ). The p er-item processing time in the stream is O ( 1 δ ). W e shall use the Count-Min data structu re as a building b lock of our algo- rithms, b ut any simila r sketc h with the frequency estimation b ound will do for our purp ose. 3.1 The Exponen tial Bucket Algorithm The Exponenti alBucket scheme divides t h e v alue range [1 , U ] into roughly log 1+ ρ U buck ets. The fi rst b uck et has th e range [1 , 1 + ρ ) , the second one has range [1 + ρ, (1 + ρ ) 2 ), and so on. There are a total of log 1+ ρ U buck ets, with the last one b eing [ U / (1 + ρ ) , U ]. Note that th e ranges are semi-closed, including the left endp oint bu t not the righ t. Only the last buck et is an exception, and includes b oth the endpoints. W e will say th at the i th b uc ket has range [(1 + ρ ) i , (1 + ρ ) i +1 ], with the first bucke t b eing lab eled th e 0th buck et. Algorithm 1 : ExponentialBucket Algorithm foreac h item v ij in the br aid do buck etId ← log v ij log(1+ ρ ) ; insert( i ) into CMSketch(buc ketId); end A stream en try v , is associated with the unique buc ket conta ining the va lue v . F or every buck et, we maintain a CM sk etc h to count items belonging to a s tream id i . In particular, given an item v ij ( j -the v alue in stream i ) in the braid, we first d etermine th e b uc ket ⌊ log 1+ ρ v ij ⌋ con taining this item, and th en in the CM sk etc h for that buc k et, w e insert th e stream id i . Because there are log 1+ ρ U buck ets, and each buck et’s Count-Min sketc h requ ires O ( ε − 1 log δ − 1 ) memory , the total sp ace needed is O ( ε − 1 log 1+ ρ U log δ − 1 ). Let us now consider how to estimate the num ber of v al ues b elonging to stream i in a particular buck et b . The Count- Min ske tch can estimate the occurrences of stream i in th is buck et with additive error at most εn ( b ), where n ( b ) is the num ber of v alues from a ll streams that fall into b uc ket b . Now supp ose we wan t to approximate th e median v alue for a stream i . W e first estimate n i = P b n i ( b ), th e total num- b er of items in stream i o ver all the buck ets. The error in estimating n i is given by the sum of individual errors in each buck et error( n i ) = X b εn ( b ) = εn. (1) Then w e find th e b u c ket B such that B − 1 X b n i ( b ) ≤ n i / 2 ≤ B X b n i ( b ) . (2) Then we rep ort th e left b oundary of the bu c ket B as our estimate for the median v alue f or stream i . W e hav e the follo wing theorem. Theorem 5. The ExponentialBucket is a data struc- tur e of size O ( ε − 1 log 1+ ρ U log δ − 1 ) t hat, with pr ob abili ty at le ast 1 − δ , c an find the top k str e ams in a s et of m str e ams by aver age, me dian, or any quantile v alue. The Exponenti alBucket scheme is simple, space-efficient, and easy to implement, but unfortunately one cannot guar- antee any significan t rank or va lue approximatio n error with this scheme. F or instance, in the wo rst-case, all items could fall in a single buc k et, giving us only th e trivial rank error of n . Similarly , it could also happ en t hat all elements tend to fall into the tw o extreme buck ets, and the εn error in size estimation ma y cause us to be incorrect in our v alue approximatio n by Θ( | U | ). Thus, we will use Exponential- Bucket only as a heuristic whose main virtue is simplicit y , and whose practical p erformance ma y b e muc h b etter than its w orst-case. I n the follo wing, we presen t a mor e sophis- ticated scheme that adapts its buck et b oundaries in a data- dep endent wa y to yield a rank appro ximation error bound of εn . 3.2 The V ariable Buck et Algorithm The basic b uilding block of V a riableBucket is the q - digest data structure [18], which is a deterministic sy n opsis for estimating t h e quantile of a data stream. At a high leve l, giv en a stream of n val ues in the range [1 , U ], the q- digest partitions this range in to O ( ρ − 1 log U ) buck ets suc h that each buck et con tains O ( ρn ) v alues. This synopsis al- lo ws u s to estimate the φ -th q uantil e of the v alue distribu- tion in t he stream u p to an additive error of ρn u sing space O ( ρ − 1 log U ). W e briefly d escribe the q-digest data struc- ture below wi th its important properties, and then discuss how to constru ct our V ari ableBucket stru cture on top of it. Throughout we shall assume that U is a p ow er of 2 for simplicit y . 3.2.1 Ap pr oximate Quantiles Thr ough q-digest The q-digest divides the range [1 , U ] into 2 U − 1 tree- structured b uck ets. Eac h of the lo w est level (zeroth level) buck et spans just a single v alue, n amely , [1 , 1] , [2 , 2] , . . . , [ U, U ]. The next lev el buck et ranges are [1 , 2] , [3 , 4] , . . . , [ U − 1 , U ], 6 3 4 5 6 7 8 2 2 2 1 1 4 6 Figure 3: A q-digest formed ov er the v alue range [1,8]. The complete tree of buc kets is shown and the buck ets that actually exist on the q-digest are highlighted in red. The numbers next to the filled buc kets show how many v alues were count ed wi thi n that buc ket. F or this q-digest, n = 15 , U = 8 and the threshold ⌊ nρ/ log U ⌋ = 3 (see eqns. 3, 4). the one after that [1 , 4] , [5 , 8] , . . . , [ U − 3 , U ] a nd so on un - til the h ighest level buck et span the entire range [ 1 , U ]. I n general the b uc kets at lev el ℓ are of the form [2 ℓ (2 i − 1) + 1 , 2 ℓ 2 i )] , where ℓ = 0 , 1 , 2 , . . . and i = 0 , 1 , 2 . . . . These buck ets can b e naturally organized in a binary tree of depth log U as shown in Fig. 3 . F or example, the buc k et [1 , 4] has tw o children: [1 , 2] and [3 , 4], while [1 , 4] itself is the left child of [1 , 8]. Every buc ket contains in integ er counter whic h counts the num ber of val ues counted within that buck et. N ote that the buck ets in a q-d igest are not disj oin t: a single buc ket o verla ps in ra nge with all its c hildren and descendants. A q-digest with e rror parameter ρ consists o f a s mall subset (size O ( ρ − 1 log U )) of all p ossible 2 U − 1 buckets. Intuitiv ely a q- digest has many simila rities to a equ i- depth histogram: the buck ets corresp ond to the histogram bu c kets and we striv e to maintain the q -digest such th at all bu c kets hav e roughly equal counts. The memory footprint of th e q-digest is prop ortional to the n um b er of buc k ets. There- fore to reduce memory consumption, we can t ak e tw o sibling buck ets and mer ge them with the parent buck et. The merge is d one by deleting b oth the c hildren and then adding their counts to the parent buck et. The merge step loses infor- mation, since t h e coun ts of both t h e c hildren are los t, but reduces memory consumption. F ormally sp eaking, a q-digest with error parameter ρ , is a su b set of all p ossible bu c kets su c h that it satisfies t h e follo wing q- digest in v arian t. Supp ose that th e total num b er of v alues counted within th e q- digest is n . Then any bucket b in th e q -digest satisfies the follo wing tw o prop erties: count (b) ≤ — nρ log U (3) count (b) + count(b p ) + count(b s ) > — nρ log U (4) where b p is the parent buck et of b and b s is the sibling b uc ket of b . The first property (3) ensures that n one of the buc k- ets are too hea vy and hence attempts to preserve accuracy b eing lost by merg ing t o o many buck ets. The o nly excep- tion t o this prop erty is the leaf bu c ket, whic h due to integer v alue as sumption cann ot be divid ed an y further. The sec- ond prop erty (4) en sures th at the total v alues counted in a buck et, its sibling and parent are not to o few; therefore it encourages merging of b uck ets to reduce memory . The only exception to this prop erty is th e root buck et since it does not hav e a parent. The q-digest supp orts tw o basic operations: Inser t and Compress . Belo w, we sho w how w e extend this q-d igest structure to implement our V ari ableBucket algorithm and how the b asic operations work. 3.2.2 V ariable Buckets Using q-digest Algorithm 2 : V ariableBu cket Inser t Algorithm foreac h item v ij in the br aid do if bucket b = [ v ij , v ij ] do es not exist then create b uc ket b ; end insert i into CMSke tch( b ); increment count( b ) ; if b and its p ar ent and sibling violates q-digest pr op erty then Compress end end Algorithm 3 : V ariableBucket Compress Algorithm for ℓ = 0 to (log U − 1) do foreac h bucke t b in level ℓ do if c ount ( b ) + c ount ( b p ) + c ount ( b s ) < ⌊ nρ/ log U ⌋ then count( b p ) ← count( b ) + count ( b s ) ; CMSketc h( b p ) ← CMSketch( b p ) ∪ CMSketc h( b ) ∪ CMSketc h( b s ) ; delete b and b s ; end end end The V ariableBucket al gorithm can b e understo o d as a d eriv ative of the q -digest data stru cture. I n the basic q-digest, we divide the input v alues in to ρ − 1 log U buckets and in each buck et we coun t the v alues in that buck et u s- ing a simple counter. In th e V ariableBucket synopsis, w e augment this simple counter b y an CM sketc h of size O ( ε − 1 log δ − 1 ). Initially the q-digest starts out empty with no buc kets. When pro cessing the next v alue v ij (the j - t h v alue in stream i ) in the stream, w e first chec k t he q-digest to see if t he leaf buck et [ v ij , v ij ] exists. If it exists, then w e insert the stream id i into the CM sketc h f or that buc ket and incremen t the counter for t h at b uck et by 1. If that bucket do es not exist, then we create a new [ v ij , v ij ] bu c ket a nd insert the pair into th at buck et. After adding the pair, we carry out a Compress op eration on the q-digest. A s more and more data is inserted into the V ariableBucket structure, the buck ets are automatically merged and reorganized by the 7 Compress op eration. This adaptive bu c ket structure is th e reason for th e n ame V ariableBucket . Give n this data structure, let us now see how to approxi- mate the median val ue of a stream i . Firs t we estimate n i by taking the union of all the CM sketc hes in each bucket and then q uerying it for th e v alue of n i . W e then do a p ost-order tra versal of the q-digest tree and merge the CM sketc hes of all the bu c kets visited. As w e merge the CM sk etc hes, w e chec k t h e v alue of the count for stream i . Supp ose when w e merge buc ket b to the unified C M sk etch, the n um b er of v alues in the u nified CM sk etc h exceeds n i / 2. Then w e rep ort the right edge of the bucket b as our estimate for the median. This estimate has three sources of error. The first error is in t h e estimate of n i itself, which is at most εn using the CM sketc h error b ound. The second source of error is from estimating the v alue of n i / 2 while taking union of m ultiple CM sk etc hes and this error is again εn . The third error is from the erro r i n co unt of i i n bu c ket b . This error arises from the fact that any va lue which is co unted in buc ke t b can b e counted on its ancestors as we ll, b ecause the bucket b o verla ps with all its ancestors. Since there are at most log U ancestors and ev ery ancestor has count at most nρ/ log U (eqn. 3 ), the total error i s nρ from this source. Therefore the total error is 2 εn + ρn . By rescaling ε by a factor of tw o, and setting ρ = ε , w e arriv e at t he follo wing theorem. Theorem 6. The V ariableBucket is a dat a structur e of size O ( ε − 2 log U log δ − 1 ) that, with pr ob ability at l e ast 1 − δ , c an fin d the top k str e ams i n a s et of m str e ams by aver age, m e dian, or any quantile value, with (additive) r ank appr oximation err or ε n , wher e n is the total s ize of al l the str e ams in the br aid. Similarly , w e can fi nd top k streams using oth er measures such as 95th p ercen tile, ave rage, etc. 4. EXPERIMENT AL RESUL T S In this section, we discuss our empirical results. W e im- plemented b oth of our schemes ExponentialBucket and V ariableBucket and ev aluated them on a v ariety of datasets. In all of our exp erimen ts w e found that V ariableBucket consisten tly outp erformed Exponentia lBucket and the main adv an tage of Exponenti alBucket is its somewhat smaller memory usage. (How ev er, the memory adv an tage is at most a factor of 2.) Therefo re, w e rep ort all p erformance num bers for the V ariableBu cket on ly and later sh ow one exp erimen t comparing th e relativ e p erformance of the tw o sc hemes. W e fo cused on three most common statistical measures of streams: the median, the 95th p ercentil e, and the a v erage v alue. Our goal in these experiments was to ev aluate t he effectiveness of these sc hemes in extracting the top k streams using these measures. W e used the fol lo wing p erformance metrics for t h is eva luation. 1. Precision and Re call : Precision is the mos t basic measure f or quantifying the success rate of an infor- mation retriev al system. If S ( k ) is th e t rue set of top k streams u nder a w eigh t function and S ′ ( k ) is the set of streams returned by our algorithms, then the precision at k P ( k ) of our scheme is defined as P ( k ) = |S ( k ) ∩ S ′ ( k ) | |S ( k ) | = |S ( k ) ∩ S ′ ( k ) | k . (5) Thus, precision provides the relative measure of ho w many of the top k are found by our scheme. The preci- sion v alues alwa y s lie b etw een 0 and 1, and closer th e precision to 1 . 0, the b etter the algorithm. W e note that in this particular case, precision is the same as recall, d efined as R ( k ) = |S ( k ) ∩ S ′ ( k ) | |S ′ ( k ) | = |S ( k ) ∩ S ′ ( k ) | k . (6) since |S ( k ) | = |S ′ ( k ) | . 2. Distortion : The precision is a g oo d measure of the fraction of top k streams found by our algorithm, but it fails to capture the ranking of those streams. F or example, supp ose w e have tw o algorithms, and both correctly return t he top 10 streams but one returns them in the correct rank order while t he other returns them in the reverse order. Both algorithms enjo y a precision of 1.0 but clearly the second one p erforms p oorly in its ra nking of the s treams. Our dist ortion measure is meant to captu re this ranking q ualit y . Sup- p ose for a stream S i the true rank is r ( S i ), while our heuristic ranks it as r ′ ( S i ). Then, we define the (rank ) distortion for stream S i to b e d i = r ( S i ) /r ′ ( S i ) , if r ( S i ) ≥ r ′ ( S i ) r ′ ( S i ) /r ( S i ) , otherwise . The o vera ll distortion is taken to be the av erage dis- tortion for the k s treams identified b y our sc heme as top k . T he ideal distortion is 1, while the wo rst distor- tion can b e Θ( m/k ), where m is the size of th e braid: this h appen s when the algorithm ranks t h e b ottom k streams as top k , for k ≪ m . Thus, smaller the d is- tortion, t h e b etter the algorithm. 3. V alue Error : Both p recision and distortion are purely rank-based measures, and ignore th e a ctual v alues of the w eight funct ion λ ( S ). In th e cases when data is clustered, man y streams can ha ve roughly the same λ v alue, yet b e far apart in their absolute ranks. Since in many monitori ng a pplication, w e care ab out streams with larg e wei ghts, a user may be p erfectly satisfied with an y stream whose w eigh t is close to the w eigh ts of true top k streams. With this motiv ation, we define a va lue-based error metric, as follo ws. Supp ose the true and app roximate strea ms at rank k are S k and S ′ k . Then the r elative v alue e rr or e ( k ) is defined as e ( k ) = ˛ ˛ ˛ ˛ λ ( S k ) − λ ( S ′ k ) λ ( S k ) ˛ ˛ ˛ ˛ (7) The av erage v alue error e for the top k is th en d efined as th e av erage of e ( k ) ov er all k streams. 4. Memory Consumption : The space b ounds th at our theorems give are unduly p essimistic. Therefore we also empirically ev aluated the memory usage of our sc heme. 8 W e generate several synthetic data sets using natural dis- tributions to ev aluate t he performance and quality o f our algorithms. In all cases, w e use 1000 streams, with ab out 5000 items each, for the total size of all the streams 5M. In all cases, the v alues within each stream are distributed using a Normal d istribution with v aria nce U / 20. The mean v alues for eac h distribution are pic ked by an inter-stream distribution, for which w e t ry 3 different distributions: uni- form, outlier, and n ormal. • In t he Uniform di stribution , we p ic k v alues uni- formly at random from the range U = [1 , 2 16 ], and eac h such v alue acts as the mean µ i for stream S i . • In th e Outlier distributions , w e choose 900 of the streams w ith v alues in the range [ 0 , 0 . 6 U ] and the re- maining 100 streams in the ran ge [ aU, ( a + 0 . 2) U ], with a < 1, for different v alues of a . • In the Normal distribution , the v alues are chose n from a normal distribution with mean 2 15 , and stan- dard d eviation 2 14 . 4.1 Pr ecision Our first exp eriment ev al uates th e precision quality: how many of the true top k streams are correctly identified b y our alg orithms. Figure 4 shows the results o f this exp eri- ment, where for eac h d ata set (of 1000 streams), w e ask ed for the t op k , for k = 10 , 20 , 50 , 100. In Figure 4 the out lier distribution has parameter a = 0 . 8. W e ev aluated the preci- sion for each of the three choices of λ : a vera ge, median, and 95th p ercentile. As the figure sh ows, the precision q ualit y of V ariableBucket b egins to approach 100% for k ≥ 50. The pattern is similar for a vera ge, median, or the 95th p ercen tile. The precision ac hieved by V ariableBucket on the outlier distribution degrades as th e parameter a decreases. as it can b e seen in Fi gure 5. This behavior is easily explained by the fact that the parameter α sets the separation b e- tw een outlier and n on-outlier streams. The smaller α is, th e fuzzier b ecomes the separation, th erefore V ari ableBucket success rate in identif ying out liers d ecreases. 4.2 Distortion P erf ormance Our second ex periment measures distortion in ranking the top k streams, un d er the three weigh t functions av erage, median, and 9 5th p ercentile. The results ar e shown in Fig- ure 6 . F or all three data sets, distortion is uniformly small (b et w een 1 and 4), even for k as small as 10, and it actually drops to the range 1–2 for k ≥ 50. 4.3 A v erage V alue Err or The p revious tw o exp eriments ha ve attempted to measure the qu alit y of our scheme using a rank -based metric. In t his section, w e consider the performance using the value error, as defined earlier. The results are shown in Figure 7. F or all distributions, the relative erro r in th e va lue of the top k streams i s quite small: of t he order of 1–2%. Thus, even when the algorithm fin ds streams outside the true top k , it is identifying streams that are close in v alue to th e true top k . This is especially encouraging b ecause i n data without clear outliers, the meaning of top k is alw a ys a bit fuzzy . 4.4 Exponen tialBucket vs. V ariableBucket In our exp eriments, we tried b oth our schemes, Exponen- tialBucket and V ariableBucket , on all the data sets, uniform outlier normal 0.0 0.2 0.4 0.6 0.8 1.0 k=10 k=20 k=50 k=100 (a) Precision for λ =av erage uniform outlier normal 0.0 0.2 0.4 0.6 0.8 1.0 k=10 k=20 k=50 k=100 (b) Precision for λ =median uniform outlier normal 0.0 0.2 0.4 0.6 0.8 1.0 k=10 k=20 k=50 k=100 (c) Precision for λ =95th p ercentile Figure 4: The precisi on qual it y of V ariableBuck et as a function of k , for th e three choices of λ : average, median, and 95th percentile. 9 0.50 0.55 0.60 0.65 0.70 0.75 0.80 0.0 0.2 0.4 0.6 0.8 1.0 Figure 5: Precision achiev ed by V ariabl eBuc ket for different a in the outlier distribution, top-100, λ =median but d ue to the space limitation, w e rep orted al l the results using V ariableBucket only . In this sectio n, we show one comparison of the tw o schemes to highlight their relative p erformance. Figure 8 sho ws the results for the precision using the median weigh t, for all three data sets. The b ottom figure is the same one as in Figure 4 (middle), while th e top one sho ws the p erformance of Exponenti alBucket for this exp erimen t. One can see that in general V ariableBu cket delivers b etter precision than ExponentialBucket . This w as our observ ation in nearly all the exp eriments , leading u s to conclu d e th at V ari ableBucket has b etter p recision and error g uarantees than ExponentialBucket . This is also consisten t with out theory , where we found that V a riable- Bucket can b e sh o wn t o have boun ded rank error guaran- tee while Exponen tialBucket could not. On the other hand, Exp onentialBucket does ha ve a memory adv an- tage: its data stru ct ure consistently w as more space-efficient that that of V ariableBu cket , so when space is a ma jor constrain t, ExponentialBucket may b e p referable. Ho w- ever, t h e space u sage of V ariableBucket itself is not pro- hibitive, as w e sho w in t h e follow ing ex periment. 4.5 Memory Usage In this exp eriment, we eva luated ho w the memory usage of V ari ableBucket scales with t h e size of the braid. In theory , the size of V ariableBucket does not grow with m , the num b er of streams, or the size of individu al streams. How ever, theoretical b ounds on the space size are highly p es- simistic, so u sed this exp eriment to ev aluate the space u sage in practice. In our implementation of V ariableBucket we used a Count-Min sketc h with depth 64 and width 6 4. W e then built V ariableBucket fo r num b er of streams v ary- ing fro m m = 1000 to m = 10 , 000, and Figure 9 plots the memory usage vs. the num b er of streams. As p redicted, the data structu re size remains virtually constant, and is about 2 MB. 5. CONCLUSION W e inv estigated the problem of trac king outlier streams in a large set (braid) of streams in the one-pass streaming mod el of computation, using a v ariety of natural measures such as av erage, median, or qu an tiles. These problems are motiv ated by monitoring of p erformance in large, shared systems. W e show that b eyond the simplest of the mea- uniform outlier normal 0.0 0.5 1.0 1.5 2.0 2.5 k=10 k=20 k=50 k=100 (a) Distortion for λ =a v erage uniform outlier normal 0.0 0.5 1.0 1.5 2.0 k=10 k=20 k=50 k=100 (b) Distortion for λ = median uniform outlier normal 0.0 0.5 1.0 1.5 2.0 k=10 k=20 k=50 k=100 (c) D istortion for λ =95th p ercentil e Figure 6: The distortion performance of V ariable- Buck et as a function of k , for the three choices of λ : av erage, me dian, and 95th p ercentile. 10 uniform outlier normal 0.00 0.02 0.04 0.06 0.08 0.10 k=10 k=20 k=50 k=100 (a) Average val ue error for λ =ave rage uniform outlier normal 0.00 0.02 0.04 0.06 0.08 0.10 k=10 k=20 k=50 k=100 (b) Averag e v alue error for λ =median uniform outlier normal 0.00 0.02 0.04 0.06 0.08 0.10 k=10 k=20 k=50 k=100 (c) Precision for median λ =95th p ercentile Figure 7: The av erage v al ue error of V ariableB uc k et as a function of k , for the three choices of λ : a verage, median, and 95th percentile. uniform outlier normal 0.0 0.2 0.4 0.6 0.8 1.0 k=10 k=20 k=50 k=100 (a) Precision f or median, Exp onentialBucket uniform outlier normal 0.0 0.2 0.4 0.6 0.8 1.0 k=10 k=20 k=50 k=100 (b) Precision for median, V ariableBu cket Figure 8: A comparison of Exp onentialBuc k et with V ariableBuck et. The Exp onentialBuc ket is more space-efficient but consistently do es worse than V ariableBuck et. This exp eriment s ho ws, side-by- side, the resul ts of the precisi on qual it y exp erime n t for the median w eigh t, using the tw o sc hemes. 2000 4000 6000 8000 10000 0 500 1000 1500 2000 n streams size (KB) Figure 9: Data structure size as a function of the braid size. 11 sures ( max or min) , these problems immed iately b ecome prov ably hard and require space linear in the braid si ze to even approximate. It seems surprising that the p roblem re- mains hard ev en f or suc h minor e xtensions of the max as the “second max im um” or the spread ( max − min), or that even highly structured streams with the round robin o rder remain inapproximable. W e also prop ose t w o heu ristics, Ex- ponentialBucket an d V ariableBucket , analyzed their p erformance guarantees and ev aluated their empirical p er- formance. There are several d irections for fut ure work. F or instance, w e observ ed that the different Count-Min sketc hes are used quite unev enly . Some sketc hes are populated to the point of s aturation, making their error estimates q uite b ad while others are hardly used. This suggest that one could impro ve the p erformance of our d ata structures b y an adaptive al- location of memory to the different sketc hes so that heavily traffic ked sketc hes receive more memory than others. 6. REFERENCES [1] N. Alon, Y. Matias, and M. S zegedy . The space complexity of approximating the frequency momen ts. In Pr o c. of STOC , 1996. [2] Z. Bar-Y ossef, T. S. Jayram, R . Kumar, and D. Siv akumar. An information statistics approac h to data stream and co mmunication complexity . J. Comput. Syst. Sci. , 68(4):702–732, 2004. [3] F. Bonomi, M. Mitzenmac her, R. P anigrahi, S. Singh, and G. V arghese. Beyond blo om filters: from approximate membership chec ks to approximate state mac hines. Pr o c. Sigc omm , 36(4):315– 326, 2006 . [4] M. Charik ar a nd K. Chen M. F arach-Colto n. Finding frequent items in data streams. The or etic al Computer Scienc e , 312(1):3–15, 2004. [5] G. Cormode and M. M. Hadjieleftheriou. Finding frequent items in data streams. Pr o c e e dings of VLDB , 1(2):1530– 1541, 2008. [6] G. Cormode and S. Muthukrishnan. An impro ved data stream summary : the count-min sketc h and its applications. Journal of Algorithms , 55(1):58–75, 2005. [7] Christos F aloutsos, M. Ranganathan, and Y annis Manolopoulos. F ast subsequence matching in time-series databases. In SIGMOD Confer enc e , 1994. [8] L. F an, P . Cao, J. Almeida, and A. Z. Bro der. Summary cac he: A scalable wide-area w eb cac he sharing proto col. I n IEEE/ACM T r ansactions on Networking , pages 254–26 5, 19 98. [9] M. Green w ald and S. Khanna. Space-efficient online computation of quantile summaries. In Pr o c. of A CM SIGMOD , pages 58–66, 2001. [10] R. M. Karp, S. S henker, and C. H . Papadimitrio u. A simple algorithm for fi nding frequent elements in streams and b ags. ACM TODS , 28(1):51–55, 2003. [11] Eamonn J. Keogh, Jessica Lin, an d Ada W ai-Chee F u. Hot sax: Efficien tly finding the most unusual time series subsequence. I n IC D M , 2005. [12] G. S. Manku and R . Motw ani. Approximate frequency counts ov er data streams. In Pr o c e e di ngs of VLDB , pages 346–357, 2002. [13] A. Metw all y , D. Agraw al, and A. El A bbadi. Efficient computation of frequent and top-k elemen ts in data streams. In Pr o c e e di ngs of ICDT , p ages 398–412 , 2005. [14] J. Misra and D. Gries. Find ing rep eated elements. Sci. Comput. Pr o gr amming , pages 143–152, 1982. [15] I. Munro and M. S. P aterson. S election and sorting with limited storage. The or etic al Computer Scienc e , pages 315–323, 1 980. [16] Noam Nisan and Eyal Kushilevitz. Cam bridge Universit y Press, 1997. [17] R. Sch w eller, Z. Li, Y. Chen, Y . Gao, A . Gupta, Y. Zh an g, P . Dinda, M. Kao, and G. Memik. Reversible sketc hes: enabling monitoring and analysis o ver high-sp eed data streams. IEEE/ACM T r ansactions on Networking , 15:1059–1072 , 2007. [18] N. Shriv asta v a, C. Buragohain, D. Agra w al, and S. Suri. Medians an d b eyond: new aggregation techniques for sensor net w orks. In Pr o c. of ACM SenSys , p ages 239–249, 2004. [19] And rew Chi-Chih Y ao. Some complexit y questions related to d istributive compu ting(preliminary report). In A CM STOC , 1979. 12

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment